Understanding P-values | Definition and Examples

Published on July 16, 2020 by Rebecca Bevans. Revised on June 22, 2023.

The p value is a number, calculated from a statistical test, that describes how likely you are to have found a particular set of observations if the null hypothesis were true.

P values are used in hypothesis testing to help decide whether to reject the null hypothesis. The smaller the p value, the more likely you are to reject the null hypothesis.

All statistical tests have a null hypothesis. For most tests, the null hypothesis is that there is no relationship between your variables of interest or that there is no difference among groups.

For example, in a two-tailed t test, the null hypothesis is that the difference between two groups is zero.

- Null hypothesis (H0): there is no difference in longevity between the two groups.

- Alternative hypothesis (HA or H1): there is a difference in longevity between the two groups.

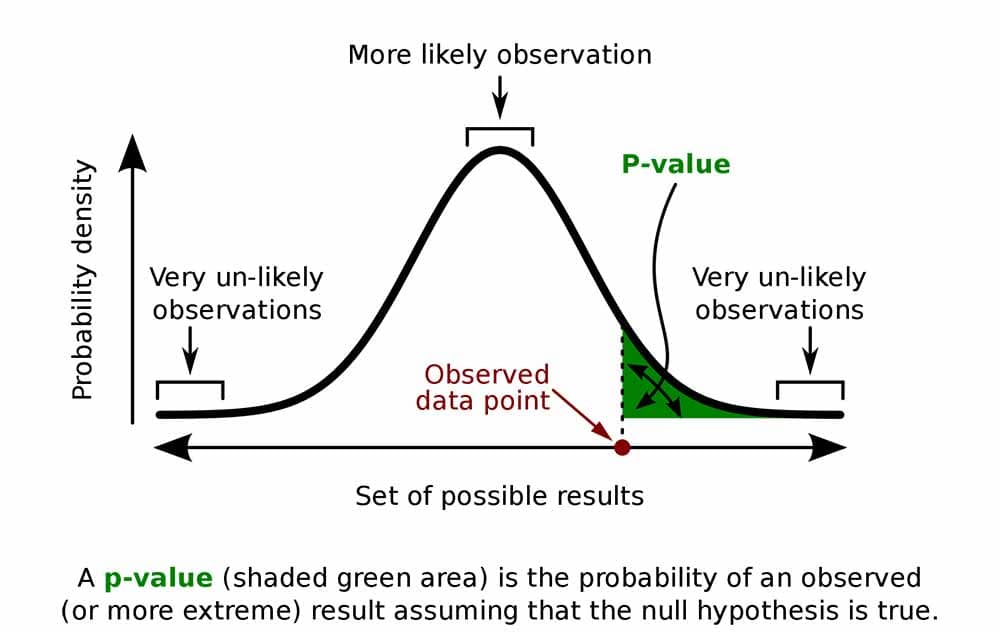

The p value, or probability value, tells you how likely it is that your data could have occurred under the null hypothesis. It does this by calculating the likelihood of your test statistic, which is the number calculated by a statistical test using your data.

The p value tells you how often you would expect to see a test statistic as extreme or more extreme than the one calculated by your statistical test if the null hypothesis of that test was true. The p value gets smaller as the test statistic calculated from your data gets further away from the range of test statistics predicted by the null hypothesis.

The p value is a proportion: if your p value is 0.05, that means that 5% of the time you would see a test statistic at least as extreme as the one you found if the null hypothesis was true.
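To make the "proportion of the time" reading concrete, here is a minimal simulation sketch. The coin-flip scenario (60 heads in 100 flips, with a fair coin as the null hypothesis) is an illustrative assumption, not an example from this article, and `simulated_p_value` is a hypothetical helper name.

```python
import random

def simulated_p_value(observed_heads, flips=100, simulations=20_000, seed=0):
    """Estimate a two-sided p value for a fair-coin null hypothesis by simulation."""
    rng = random.Random(seed)
    expected = flips / 2
    observed_distance = abs(observed_heads - expected)
    at_least_as_extreme = 0
    for _ in range(simulations):
        # Each random bit is one fair coin flip; count the 1s as heads.
        heads = bin(rng.getrandbits(flips)).count("1")
        if abs(heads - expected) >= observed_distance:
            at_least_as_extreme += 1
    # The p value is the proportion of null-hypothesis experiments
    # that are at least as extreme as the observed one.
    return at_least_as_extreme / simulations

estimated_p = simulated_p_value(observed_heads=60)
print(f"Estimated p value: {estimated_p:.3f}")
```

The estimate varies slightly with the seed and the number of simulations; a statistical program would normally compute the exact value from the null distribution instead.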

P values are usually automatically calculated by your statistical program (R, SPSS, etc.).

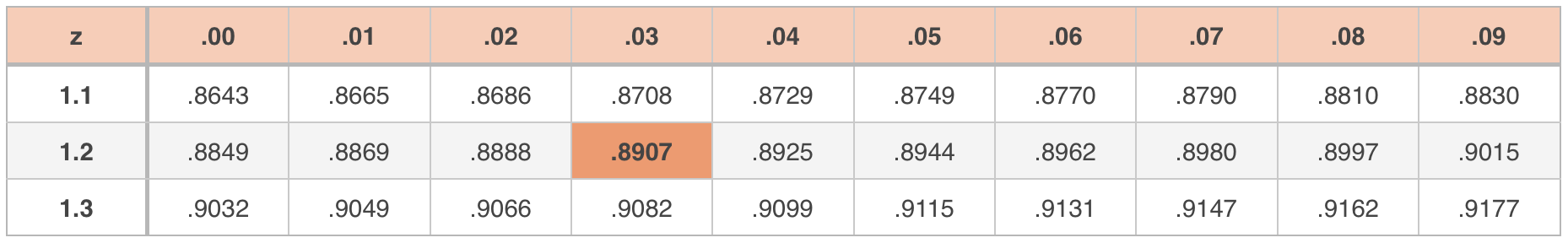

You can also find tables for estimating the p value of your test statistic online. These tables show, based on the test statistic and degrees of freedom (number of observations minus number of independent variables) of your test, how frequently you would expect to see a test statistic at least that extreme under the null hypothesis.

The calculation of the p value depends on the statistical test you are using to test your hypothesis:

- Different statistical tests have different assumptions and generate different test statistics. You should choose the statistical test that best fits your data and matches the effect or relationship you want to test.

- The number of independent variables you include in your test changes how large or small the test statistic needs to be to generate the same p value.

No matter what test you use, the p value always describes the same thing: how often you can expect to see a test statistic as extreme or more extreme than the one calculated from your test if the null hypothesis were true.
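As a rough illustration of how the same test statistic can map to different p values, the sketch below computes a two-sided p value from Student's t distribution for several degrees of freedom. It assumes a t test and uses only the Python standard library, integrating the t density numerically; the function names are hypothetical, and statistical software would normally do this for you.

```python
import math

def t_pdf(x, df):
    """Density of Student's t distribution with df degrees of freedom."""
    log_norm = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
                - 0.5 * math.log(df * math.pi))
    return math.exp(log_norm - (df + 1) / 2 * math.log1p(x * x / df))

def two_sided_t_p_value(t_stat, df, steps=2000):
    """Area in both tails beyond |t_stat| under the t distribution.

    The area between 0 and |t_stat| is found with Simpson's rule
    (steps must be even), doubled, and subtracted from 1.
    """
    upper = abs(t_stat)
    if upper == 0:
        return 1.0
    h = upper / steps
    total = t_pdf(0.0, df) + t_pdf(upper, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    central_half = total * h / 3
    return max(0.0, 1.0 - 2.0 * central_half)

# The same test statistic (t = 2.0) with different degrees of freedom.
for df in (5, 30, 1000):
    print(f"df = {df:>4}: p = {two_sided_t_p_value(2.0, df):.4f}")
```

With few degrees of freedom the tails of the t distribution are heavier, so the same statistic yields a larger p value than it does with many degrees of freedom.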

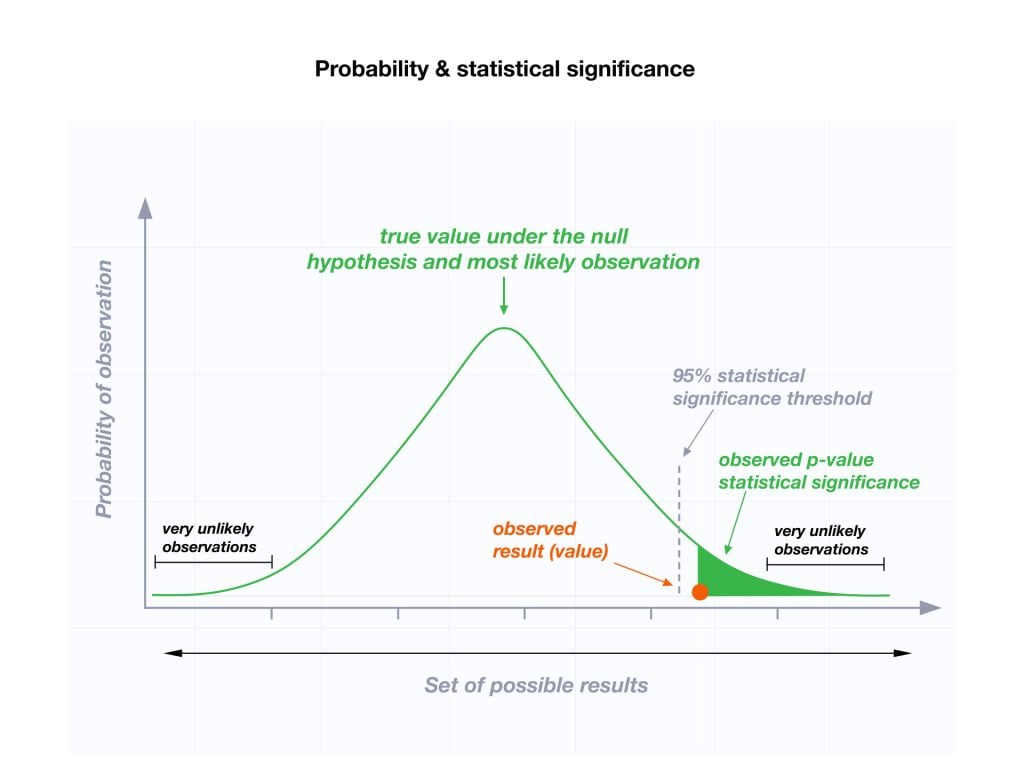

P values are most often used by researchers to say whether a certain pattern they have measured is statistically significant.

Statistical significance is another way of saying that the p value of a statistical test is small enough to reject the null hypothesis of the test.

How small is small enough? The most common threshold is p < 0.05; that is, when you would expect to find a test statistic as extreme as the one calculated by your test less than 5% of the time if the null hypothesis were true. But the threshold depends on your field of study: some fields prefer thresholds of 0.01, or even 0.001.

The threshold value for determining statistical significance is also known as the alpha value.
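One way to see what the alpha value controls is to simulate many studies in which the null hypothesis is true and count how often p falls below the threshold. The sketch below assumes a one-sample z test on normally distributed data with a known standard deviation; the setup and function names are illustrative assumptions.

```python
import random
from statistics import NormalDist, fmean

def z_test_p_value(sample, mu0, sigma):
    """Two-sided p value for a one-sample z test with known sigma."""
    z = (fmean(sample) - mu0) / (sigma / len(sample) ** 0.5)
    return 2 * (1 - NormalDist().cdf(abs(z)))

def false_positive_rate(alpha=0.05, studies=5000, n=20, seed=1):
    """Fraction of simulated studies that reject a null hypothesis that is true."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(studies):
        # The null hypothesis is true here: the data really have mean 0.
        sample = [rng.gauss(0.0, 1.0) for _ in range(n)]
        if z_test_p_value(sample, mu0=0.0, sigma=1.0) < alpha:
            rejections += 1
    return rejections / studies

rate = false_positive_rate()
print(f"Share of true-null studies with p < 0.05: {rate:.3f}")
```

When the test's assumptions hold, this share should land close to alpha, which is why a stricter threshold such as 0.01 produces fewer false alarms.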

P values of statistical tests are usually reported in the results section of a research paper, along with the key information needed for readers to put the p values in context: for example, the correlation coefficient in a linear regression, or the average difference between treatment groups in a t test.

P values are often interpreted as your risk of rejecting the null hypothesis of your test when the null hypothesis is actually true.

In reality, the risk of wrongly rejecting a true null hypothesis is often higher than the p value suggests, especially when looking at a single study or when using small sample sizes. This is because the smaller your frame of reference, the greater the chance that you stumble across a statistically significant pattern completely by accident.

P values are also often interpreted as supporting or refuting the alternative hypothesis. This is not the case. The p value can only tell you how compatible your data are with the null hypothesis. It cannot tell you whether your alternative hypothesis is true, or why.

A p-value, or probability value, is a number describing how likely it is that your data would have occurred under the null hypothesis of your statistical test.

P-values are usually automatically calculated by the program you use to perform your statistical test. They can also be estimated using p-value tables for the relevant test statistic.

P-values are calculated from the null distribution of the test statistic. They tell you how often a test statistic at least as extreme as yours is expected to occur under the null hypothesis of the statistical test, based on where it falls in the null distribution.

If the test statistic is far from the mean of the null distribution, then the p-value will be small, showing that the test statistic is not likely to have occurred under the null hypothesis.

Statistical significance is a term used by researchers to state that it is unlikely their observations could have occurred under the null hypothesis of a statistical test. Significance is usually denoted by a p-value, or probability value.

Statistical significance is arbitrary: it depends on the threshold, or alpha value, chosen by the researcher. The most common threshold is p < 0.05, which means that data at least this extreme would be expected to occur less than 5% of the time under the null hypothesis.

When the p-value falls below the chosen alpha value, then we say the result of the test is statistically significant.

The p-value does not tell you whether your alternative hypothesis is true. It only tells you how likely the data you have observed is to have occurred under the null hypothesis.

If the p-value is below your threshold of significance (typically p < 0.05), then you can reject the null hypothesis, but this does not necessarily mean that your alternative hypothesis is true.

Bevans, R. (2023, June 22). Understanding P-values | Definition and Examples. Scribbr. Retrieved September 5, 2024, from https://www.scribbr.com/statistics/p-value/

Hypothesis Testing Calculator

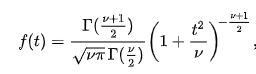

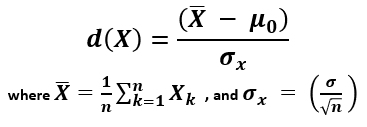

The first step in hypothesis testing is to calculate the test statistic. The formula for the test statistic depends on whether the population standard deviation (σ) is known or unknown. If σ is known, our hypothesis test is known as a z test and we use the z distribution. If σ is unknown, our hypothesis test is known as a t test and we use the t distribution. Use of the t distribution relies on the degrees of freedom, which are equal to the sample size minus one. Furthermore, if the population standard deviation σ is unknown, the sample standard deviation s is used instead.

| | $\sigma$ Known | $\sigma$ Unknown |
| --- | --- | --- |
| Test Statistic | $z = \dfrac{\bar{x}-\mu_0}{\sigma/\sqrt{n}}$ | $t = \dfrac{\bar{x}-\mu_0}{s/\sqrt{n}}$ |
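The two test statistic formulas can be sketched in a few lines of Python. The sample values, the hypothesized mean of 10, and the known σ of 0.5 are made up for illustration, and the function names are hypothetical.

```python
import math
from statistics import fmean, stdev

def z_statistic(sample, mu0, sigma):
    """z = (x_bar - mu0) / (sigma / sqrt(n)), for a known population sigma."""
    return (fmean(sample) - mu0) / (sigma / math.sqrt(len(sample)))

def t_statistic(sample, mu0):
    """t = (x_bar - mu0) / (s / sqrt(n)), returned with its degrees of freedom."""
    n = len(sample)
    s = stdev(sample)  # sample standard deviation (n - 1 in the denominator)
    return (fmean(sample) - mu0) / (s / math.sqrt(n)), n - 1

# Made-up measurements, used only to exercise the formulas.
sample = [9.8, 10.4, 10.1, 9.6, 10.9, 10.3, 9.9, 10.6]

z = z_statistic(sample, mu0=10.0, sigma=0.5)
t, df = t_statistic(sample, mu0=10.0)
print(f"z = {z:.3f}")
print(f"t = {t:.3f} with {df} degrees of freedom")
```

The only difference between the two functions is which standard deviation goes into the denominator, which mirrors the σ known and σ unknown cases.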

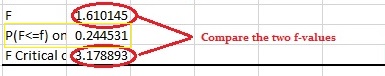

Next, the test statistic is used to conduct the test using either the p-value approach or critical value approach. The particular steps taken in each approach largely depend on the form of the hypothesis test: lower tail, upper tail or two-tailed. The form can easily be identified by looking at the alternative hypothesis ($H_a$). If there is a less than sign in the alternative hypothesis then it is a lower tail test, a greater than sign indicates an upper tail test, and a not-equal sign indicates a two-tailed test.

| Lower Tail Test | Upper Tail Test | Two-Tailed Test |
| --- | --- | --- |
| $H_0 \colon \mu \geq \mu_0$ | $H_0 \colon \mu \leq \mu_0$ | $H_0 \colon \mu = \mu_0$ |
| $H_a \colon \mu < \mu_0$ | $H_a \colon \mu > \mu_0$ | $H_a \colon \mu \neq \mu_0$ |

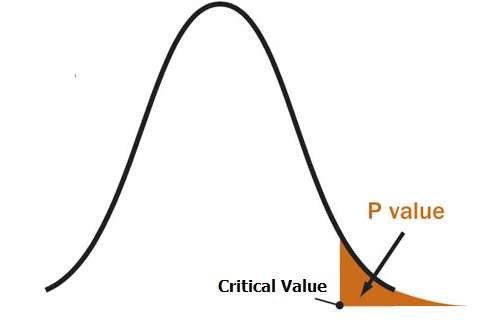

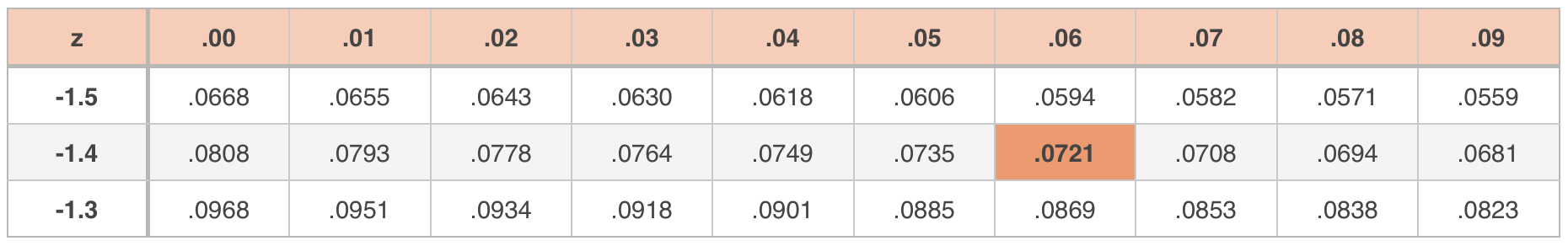

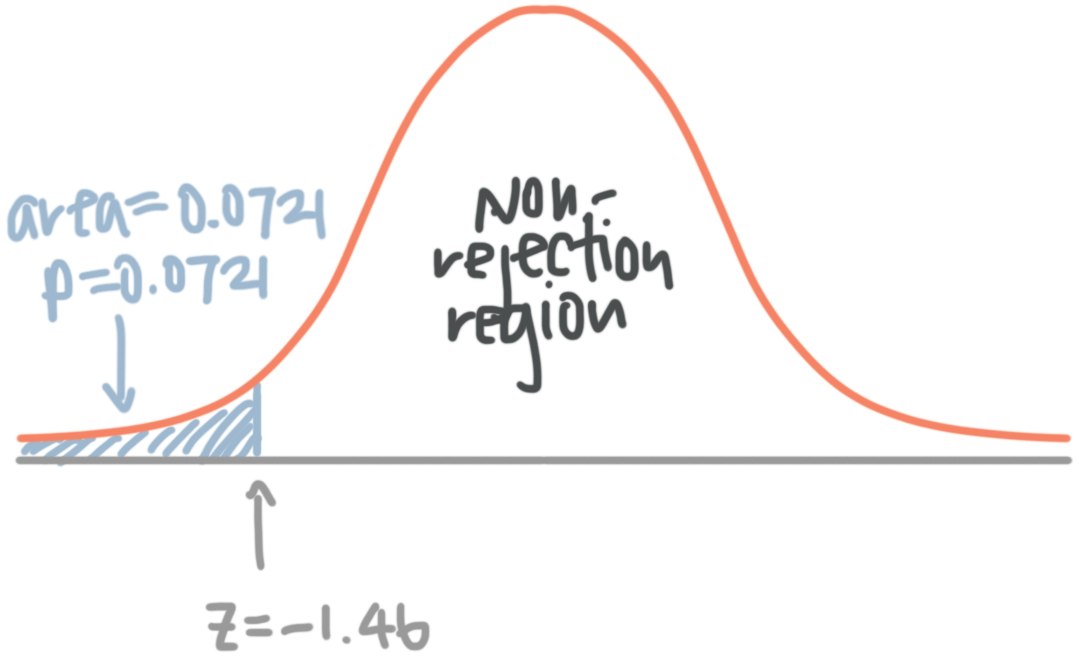

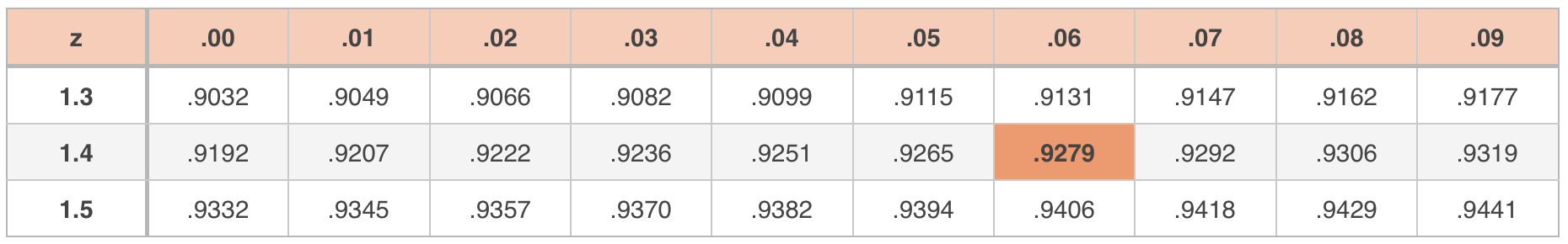

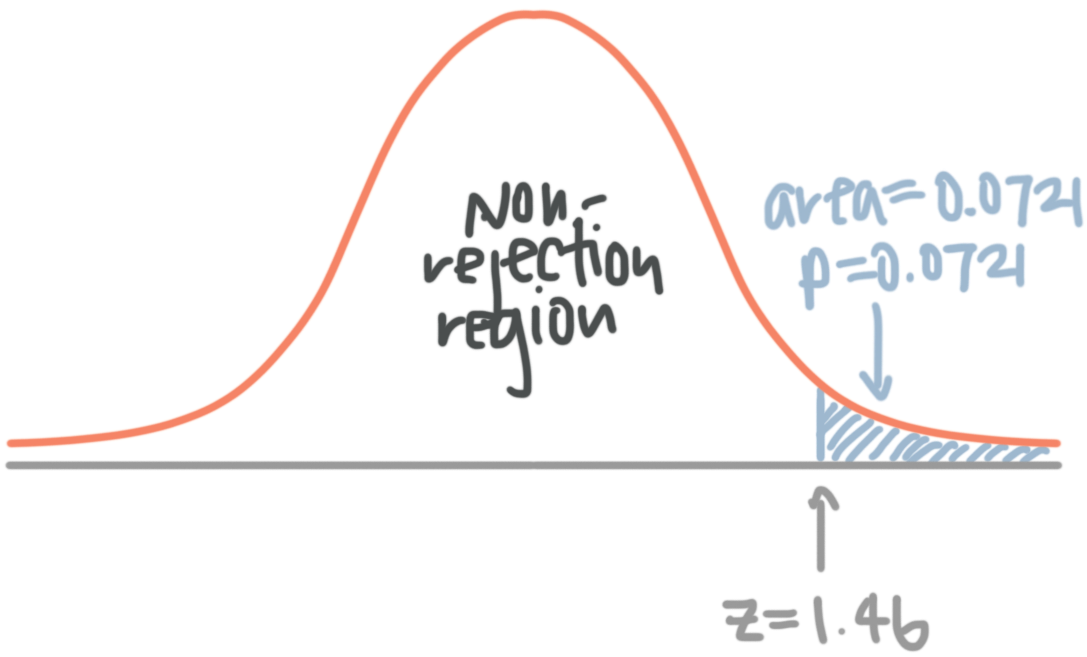

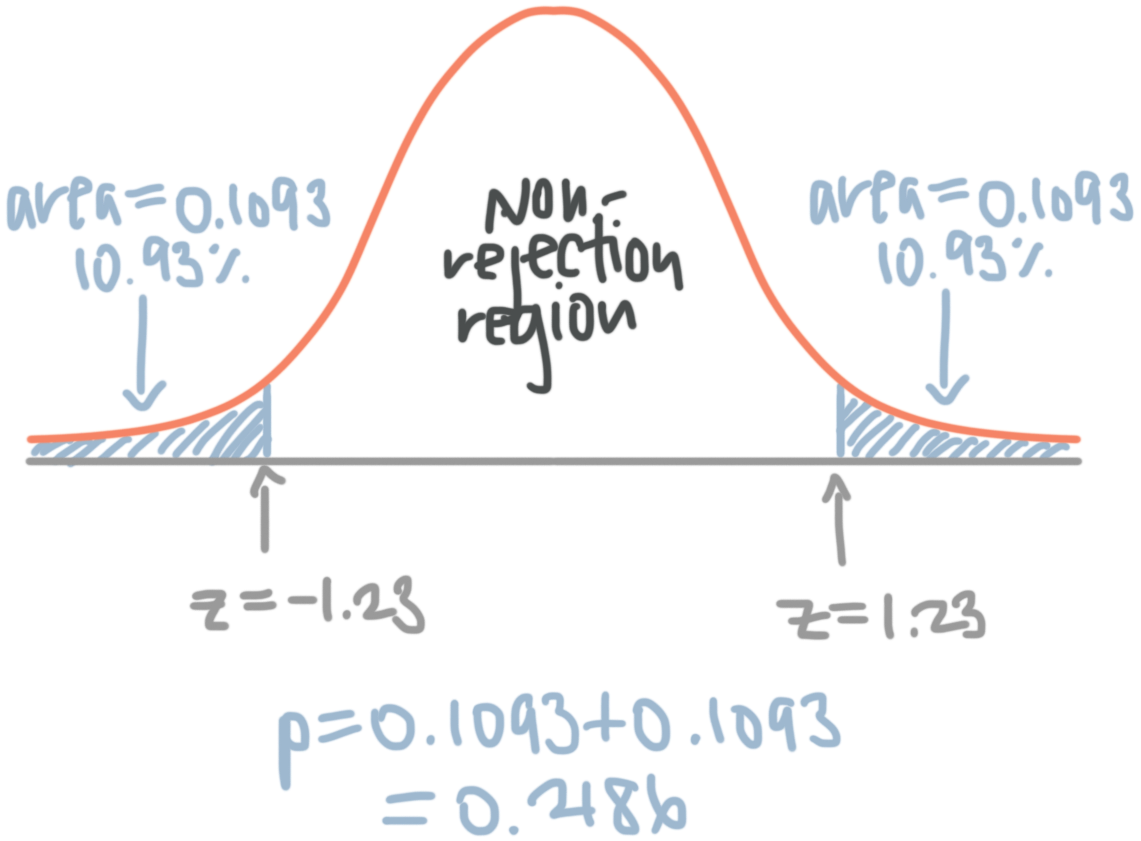

In the p-value approach, the test statistic is used to calculate a p-value. If the test is a lower tail test, the p-value is the probability of getting a value for the test statistic at least as small as the value from the sample. If the test is an upper tail test, the p-value is the probability of getting a value for the test statistic at least as large as the value from the sample. In a two-tailed test, the p-value is the probability of getting a value for the test statistic at least as unlikely as the value from the sample.

To test the hypothesis in the p-value approach, compare the p-value to the level of significance. If the p-value is less than or equal to the level of significance, reject the null hypothesis. If the p-value is greater than the level of significance, do not reject the null hypothesis. This method remains unchanged regardless of whether it's a lower tail, upper tail or two-tailed test.
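The p-value approach for a z test can be sketched as follows, using `statistics.NormalDist` from the Python standard library for the standard normal distribution. The function names and the `tail` labels are illustrative choices, not part of the calculator.

```python
from statistics import NormalDist

def z_p_value(z, tail):
    """p-value of a z statistic for a 'lower', 'upper', or 'two' tailed test."""
    std_normal = NormalDist()
    if tail == "lower":
        # Probability of a statistic at least as small as z.
        return std_normal.cdf(z)
    if tail == "upper":
        # Probability of a statistic at least as large as z.
        return 1 - std_normal.cdf(z)
    if tail == "two":
        # Probability of a statistic at least as far from 0 as z, in either tail.
        return 2 * (1 - std_normal.cdf(abs(z)))
    raise ValueError("tail must be 'lower', 'upper', or 'two'")

def reject_null(p_value, alpha=0.05):
    """The decision rule is the same for every form of the test."""
    return p_value <= alpha

for tail in ("lower", "upper", "two"):
    p = z_p_value(-2.1, tail)
    print(f"{tail:>5} tail: p = {p:.4f}, reject H0: {reject_null(p)}")
```

Only the p-value calculation depends on the form of the test; the comparison against the level of significance does not.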

In the critical value approach, the level of significance ($\alpha$) is used to calculate the critical value. In a lower tail test, the critical value is the value of the test statistic providing an area of $\alpha$ in the lower tail of the sampling distribution of the test statistic. In an upper tail test, the critical value is the value of the test statistic providing an area of $\alpha$ in the upper tail of the sampling distribution of the test statistic. In a two-tailed test, the critical values are the values of the test statistic providing areas of $\alpha / 2$ in the lower and upper tail of the sampling distribution of the test statistic.

To test the hypothesis in the critical value approach, compare the critical value to the test statistic. Unlike the p-value approach, the method we use to decide whether to reject the null hypothesis depends on the form of the hypothesis test. In a lower tail test, if the test statistic is less than or equal to the critical value, reject the null hypothesis. In an upper tail test, if the test statistic is greater than or equal to the critical value, reject the null hypothesis. In a two-tailed test, if the test statistic is less than or equal to the lower critical value or greater than or equal to the upper critical value, reject the null hypothesis.

| | Lower Tail Test | Upper Tail Test | Two-Tailed Test |
| --- | --- | --- | --- |
| z test | If $z \leq -z_\alpha$, reject $H_0$. | If $z \geq z_\alpha$, reject $H_0$. | If $z \leq -z_{\alpha/2}$ or $z \geq z_{\alpha/2}$, reject $H_0$. |
| t test | If $t \leq -t_\alpha$, reject $H_0$. | If $t \geq t_\alpha$, reject $H_0$. | If $t \leq -t_{\alpha/2}$ or $t \geq t_{\alpha/2}$, reject $H_0$. |
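The z test rejection rules in the table can be sketched with the inverse CDF of the standard normal distribution. This is a minimal illustration using the Python standard library; the function names are hypothetical, and the t test rows would need critical values from the t distribution instead.

```python
from statistics import NormalDist

def critical_values(alpha, tail):
    """Return (lower, upper) critical z values; None marks a tail that is not used."""
    std_normal = NormalDist()
    if tail == "lower":
        return std_normal.inv_cdf(alpha), None          # -z_alpha
    if tail == "upper":
        return None, std_normal.inv_cdf(1 - alpha)      # z_alpha
    if tail == "two":
        upper = std_normal.inv_cdf(1 - alpha / 2)       # z_{alpha/2}
        return -upper, upper
    raise ValueError("tail must be 'lower', 'upper', or 'two'")

def reject_null(z, alpha, tail):
    """Apply the rejection rule that matches the form of the test."""
    lower, upper = critical_values(alpha, tail)
    below = lower is not None and z <= lower
    above = upper is not None and z >= upper
    return below or above

print(critical_values(0.05, "two"))
print(reject_null(-1.7, alpha=0.05, tail="lower"))
print(reject_null(-1.7, alpha=0.05, tail="two"))
```

A statistic of -1.7 illustrates why the form matters: it lies beyond the single lower-tail critical value at α = 0.05 but not beyond the two-tailed critical values, which each leave only α/2 in a tail.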

When conducting a hypothesis test, there is always a chance that you come to the wrong conclusion. There are two types of errors you can make: Type I Error and Type II Error. A Type I Error is committed if you reject the null hypothesis when the null hypothesis is true. Ideally, we'd like to retain the null hypothesis when it is true. A Type II Error is committed if you fail to reject (loosely, "accept") the null hypothesis when the alternative hypothesis is true. Ideally, we'd like to reject the null hypothesis when the alternative hypothesis is true.

| Conclusion \ Condition | $H_0$ True | $H_a$ True |
| --- | --- | --- |
| Accept $H_0$ | Correct | Type II Error |
| Reject $H_0$ | Type I Error | Correct |
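As a sketch of how the probability of a Type II Error can be worked out, the function below handles a two-tailed z test with known σ, given an assumed true mean. The numbers (μ₀ = 100, true mean 105, σ = 15, n = 36) are hypothetical, and this is not necessarily how the calculator on this page computes it.

```python
import math
from statistics import NormalDist

def type_ii_error(mu0, mu_true, sigma, n, alpha=0.05):
    """Probability of failing to reject H0: mu = mu0 when the mean is really mu_true.

    Under the true mean, the z statistic is normal with mean
    (mu_true - mu0) / (sigma / sqrt(n)) and standard deviation 1.
    H0 is retained whenever z falls between the two critical values.
    """
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    shift = (mu_true - mu0) / (sigma / math.sqrt(n))
    return std_normal.cdf(z_crit - shift) - std_normal.cdf(-z_crit - shift)

beta = type_ii_error(mu0=100, mu_true=105, sigma=15, n=36)
print(f"Type II error probability: {beta:.3f}")
print(f"Power (1 - beta): {1 - beta:.3f}")
```

With these made-up inputs the Type II error probability comes out at roughly 0.48, so the test would miss a true 5-point difference almost half the time; a larger sample shrinks that probability.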

Hypothesis testing is closely related to the statistical area of confidence intervals. In a two-tailed test, if the hypothesized value of the population mean is outside of the confidence interval with confidence level 1 − α, we can reject the null hypothesis at significance level α. Confidence intervals can be found using the Confidence Interval Calculator. The calculator on this page does hypothesis tests for one population mean. Sometimes we're interested in hypothesis tests about two population means. These can be solved using the Two Population Calculator.
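The link between confidence intervals and two-tailed tests can be checked numerically. The sketch below assumes a z test with known σ and made-up summary values (sample mean 103, σ = 15, n = 100); the function names are hypothetical.

```python
import math
from statistics import NormalDist

def z_confidence_interval(x_bar, sigma, n, confidence=0.95):
    """Confidence interval for the population mean when sigma is known."""
    z_crit = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    margin = z_crit * sigma / math.sqrt(n)
    return x_bar - margin, x_bar + margin

def two_tailed_z_rejects(x_bar, mu0, sigma, n, alpha=0.05):
    """True if a two-tailed z test rejects H0: mu = mu0 at level alpha."""
    z = (x_bar - mu0) / (sigma / math.sqrt(n))
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return p_value <= alpha

low, high = z_confidence_interval(x_bar=103, sigma=15, n=100)
print(f"95% confidence interval: ({low:.2f}, {high:.2f})")
for mu0 in (100, 101, 107):
    outside = not (low <= mu0 <= high)
    rejected = two_tailed_z_rejects(103, mu0, 15, 100)
    print(f"mu0 = {mu0}: outside interval = {outside}, rejected = {rejected}")
```

For each hypothesized mean, the test rejects exactly when that value lies outside the interval, provided the confidence level and the level of significance add up to 1.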

Step-by-step guide to hypothesis testing in statistics

Hypothesis testing in statistics helps us use data to make informed decisions. It starts with an assumption or guess about a group or population—something we believe might be true. We then collect sample data to check if there is enough evidence to support or reject that guess. This method is useful in many fields, like science, business, and healthcare, where decisions need to be based on facts.

Learning how to do hypothesis testing in statistics step-by-step can help you better understand data and make smarter choices, even when things are uncertain. This guide will take you through each step, from creating your hypothesis to making sense of the results, so you can see how it works in practical situations.

What is Hypothesis Testing?

Hypothesis testing is a method for determining whether data supports a certain idea or assumption about a larger group. It starts by making a guess, like an average or a proportion, and then uses a small sample of data to see if that guess seems true or not.

For example, if a company wants to know if its new product is more popular than its old one, it can use hypothesis testing. They start with a statement like “The new product is not more popular than the old one” (this is the null hypothesis) and compare it with “The new product is more popular” (this is the alternative hypothesis). Then, they look at customer feedback to see if there’s enough evidence to reject the first statement and support the second one.

Simply put, hypothesis testing is a way to use data to help make decisions and understand what the data is really telling us, even when we don’t have all the answers.

Importance Of Hypothesis Testing In Decision-Making And Data Analysis

Hypothesis testing is important because it helps us make smart choices and understand data better. Here’s why it’s useful:

- Reduces Guesswork : It helps us see if our guesses or ideas are likely correct, even when we don’t have all the details.

- Uses Real Data : Instead of just guessing, it checks if our ideas match up with real data, which makes our decisions more reliable.

- Avoids Errors : It helps us avoid mistakes by carefully checking if our ideas are right so we don’t make costly errors.

- Shows What to Do Next : It tells us if our ideas work or not, helping us decide whether to keep, change, or drop something. For example, a company might test a new ad and decide what to do based on the results.

- Confirms Research Findings : It makes sure that research results are accurate and not just random chance so that we can trust the findings.

Here’s a simple guide to understanding hypothesis testing, with an example:

1. Set Up Your Hypotheses

Explanation: Start by defining two statements:

- Null Hypothesis (H0): This is the idea that there is no change or effect. It’s what you assume is true.

- Alternative Hypothesis (H1): This is what you want to test. It suggests there is a change or effect.

Example: Suppose a company says their new batteries last an average of 500 hours. To check this:

- Null Hypothesis (H0): The average battery life is 500 hours.

- Alternative Hypothesis (H1): The average battery life is not 500 hours.

2. Choose the Test

Explanation: Pick a statistical test that fits your data and your hypotheses. Different tests are used for various kinds of data.

Example: Since you’re comparing the average battery life to a claimed value, you use a one-sample t-test.

3. Set the Significance Level

Explanation: Decide how much risk you’re willing to take if you make a wrong decision. This is called the significance level, often set at 0.05 or 5%.

Example: You choose a significance level of 0.05, meaning you accept a 5% chance of rejecting the null hypothesis when it is actually true.

4. Gather and Analyze Data

Explanation: Collect your data and perform the test. Calculate the test statistic to see how far your sample result is from what you assumed.

Example: You test 30 batteries and find they last an average of 485 hours. You then calculate how this average compares to the claimed 500 hours using the t-test.

5. Find the p-Value

Explanation: The p-value tells you the probability of getting a result as extreme as yours if the null hypothesis is true.

Example: You find a p-value of 0.0001. This means there’s a very small chance (0.01%) of getting a sample average at least 15 hours away from 500 hours, in either direction, if the true average is 500 hours.

6. Make Your Decision

Explanation: Compare the p-value to your significance level. If the p-value is smaller, you reject the null hypothesis. If it’s larger, you do not reject it.

Example: Since 0.0001 is much less than 0.05, you reject the null hypothesis. This means the data suggests the average battery life is different from 500 hours.

7. Report Your Findings

Explanation: Summarize what the results mean. State whether you rejected the null hypothesis and what that implies.

Example: You conclude that the average battery life is likely different from 500 hours. This suggests the company’s claim might not be accurate.
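The seven steps above can be sketched end to end in Python. The example gives the sample size (30), the sample mean (485 hours), and the claimed mean (500 hours), but not the sample standard deviation, so the value of 20 hours below is a hypothetical assumption and the resulting p-value will not necessarily match the 0.0001 quoted in step 5. The t distribution is integrated numerically to stay within the standard library.

```python
import math

def t_pdf(x, df):
    """Density of Student's t distribution with df degrees of freedom."""
    log_norm = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
                - 0.5 * math.log(df * math.pi))
    return math.exp(log_norm - (df + 1) / 2 * math.log1p(x * x / df))

def two_sided_t_p_value(t_stat, df, steps=2000):
    """Two-sided p-value via Simpson's rule on the central area (steps even)."""
    upper = abs(t_stat)
    if upper == 0:
        return 1.0
    h = upper / steps
    total = t_pdf(0.0, df) + t_pdf(upper, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    return max(0.0, 1.0 - 2.0 * total * h / 3)

# Steps 1-3: hypotheses (H0: mean = 500), one-sample t-test, alpha = 0.05.
claimed_mean = 500.0
alpha = 0.05

# Step 4: sample summary. The standard deviation is an assumed value.
n = 30
sample_mean = 485.0
sample_sd = 20.0  # hypothetical; not given in the example

standard_error = sample_sd / math.sqrt(n)
t_stat = (sample_mean - claimed_mean) / standard_error

# Step 5: two-sided p-value with n - 1 degrees of freedom.
p_value = two_sided_t_p_value(t_stat, df=n - 1)

# Steps 6-7: decide and report.
decision = "reject H0" if p_value < alpha else "do not reject H0"
print(f"t = {t_stat:.3f}, df = {n - 1}, p = {p_value:.5f}: {decision}")
```

Under this assumed standard deviation the p-value is well below 0.05, so the decision matches the one in step 6; a much larger standard deviation could change that.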

Hypothesis testing is a way to use data to check if your guesses or assumptions are likely true. By following these steps—setting up your hypotheses, choosing the right test, deciding on a significance level, analyzing your data, finding the p-value, making a decision, and reporting results—you can determine if your data supports or challenges your initial idea.

Understanding Hypothesis Testing: A Simple Explanation

Hypothesis testing is a way to use data to make decisions. Here’s a straightforward guide:

1. What are the Null and Alternative Hypotheses?

- Null Hypothesis (H0): This is your starting assumption. It says that nothing has changed or that there is no effect. It’s what you assume to be true until your data shows otherwise. Example: If a company says their batteries last 500 hours, the null hypothesis is: “The average battery life is 500 hours.” This means you think the claim is correct unless you find evidence to prove otherwise.

- Alternative Hypothesis (H1): This is what you want to find out. It suggests that there is an effect or a difference. It’s what you are testing to see if it might be true. Example: To test the company’s claim, you might say: “The average battery life is not 500 hours.” This means you think the average battery life might be different from what the company says.

2. One-Tailed vs. Two-Tailed Tests

- One-Tailed Test: This test checks for an effect in only one direction. You use it when you’re only interested in finding out if something is either more or less than a specific value. Example: If you think the battery lasts longer than 500 hours, you would use a one-tailed test to see if the battery life is significantly more than 500 hours.

- Two-Tailed Test: This test checks for an effect in both directions. Use this when you want to see if something is different from a specific value, whether it’s more or less. Example: If you want to see if the battery life is different from 500 hours, whether it’s more or less, you would use a two-tailed test. This checks for any significant difference, regardless of the direction.

3. Common Misunderstandings

- Clarification: Hypothesis testing doesn’t prove that the null hypothesis is true. It just helps you decide if you should reject it. If there isn’t enough evidence against it, you don’t reject it, but that doesn’t mean it’s definitely true.

- Clarification: A small p-value shows that your data is unlikely if the null hypothesis is true. It suggests that the alternative hypothesis might be right, but it doesn’t prove the null hypothesis is false.

- Clarification: The significance level (alpha) is a set threshold, like 0.05, that helps you decide how much risk you’re willing to take for making a wrong decision. It should be chosen carefully, not randomly.

- Clarification: Hypothesis testing helps you make decisions based on data, but it doesn’t guarantee your results are correct. The quality of your data and the right choice of test affect how reliable your results are.

Benefits and Limitations of Hypothesis Testing

- Clear Decisions: Hypothesis testing helps you make clear decisions based on data. It shows whether the evidence supports or goes against your initial idea.

- Objective Analysis: It relies on data rather than personal opinions, so your decisions are based on facts rather than feelings.

- Concrete Numbers: You get specific numbers, like p-values, to understand how strong the evidence is against your idea.

- Control Risk: You can set a risk level (alpha level) to manage the chance of making an error, which helps avoid incorrect conclusions.

- Widely Used: It can be used in many areas, from science and business to social studies and engineering, making it a versatile tool.

Limitations

- Sample Size Matters: The results can be affected by the size of the sample. Small samples might give unreliable results, while large samples might find differences that aren’t meaningful in real life.

- Risk of Misinterpretation: A small p-value means the results are unlikely if the null hypothesis is true, but it doesn’t show how important the effect is.

- Needs Assumptions: Hypothesis testing requires certain conditions, like data being normally distributed. If these aren’t met, the results might not be accurate.

- Simple Decisions: It often results in a basic yes or no decision without giving detailed information about the size or impact of the effect.

- Can Be Misused: Sometimes, people misuse hypothesis testing, tweaking data to get a desired result or focusing only on whether the result is statistically significant.

- No Absolute Proof: Hypothesis testing doesn’t prove that your hypothesis is true. It only helps you decide if there’s enough evidence to reject the null hypothesis, so the conclusions are based on likelihood, not certainty.
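The "Sample Size Matters" limitation can be illustrated with a small calculation. The sketch below assumes a z test with a known standard deviation of 20 hours and a fixed, practically negligible difference of 0.5 hours from the hypothesized mean; both numbers are hypothetical.

```python
import math
from statistics import NormalDist

def two_sided_z_p_value(difference, sigma, n):
    """Two-sided p-value for an observed mean difference with known sigma."""
    z = difference / (sigma / math.sqrt(n))
    return 2 * (1 - NormalDist().cdf(abs(z)))

# The same tiny difference, observed in samples of increasing size.
p_by_n = {n: two_sided_z_p_value(difference=0.5, sigma=20.0, n=n)
          for n in (100, 10_000, 1_000_000)}

for n, p in p_by_n.items():
    print(f"n = {n:>9,}: p = {p:.4g}")
```

The difference stays at half an hour throughout, yet the p-value moves from clearly non-significant to far below 0.05 as the sample grows, which is why a small p-value says nothing about whether an effect matters in practice.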

Final Thoughts

Hypothesis testing helps you make decisions based on data. It involves setting up your initial idea, picking a significance level, doing the test, and looking at the results. By following these steps, you can make sure your conclusions are based on solid information, not just guesses.

This approach lets you see if the evidence supports or contradicts your initial idea, helping you make better decisions. But remember that hypothesis testing isn’t perfect. Things like sample size and assumptions can affect the results, so it’s important to be aware of these limitations.

In simple terms, using a step-by-step guide for hypothesis testing is a great way to better understand your data. Follow the steps carefully and keep in mind the method’s limits.

What is the difference between one-tailed and two-tailed tests?

A one-tailed test assesses the probability of the observed data in one direction (either greater than or less than a certain value). In contrast, a two-tailed test looks at both directions (greater than and less than) to detect any significant deviation from the null hypothesis.

How do you choose the appropriate test for hypothesis testing?

The choice of test depends on the type of data you have and the hypotheses you are testing. Common tests include t-tests, chi-square tests, and ANOVA. It’s important to match the test to the data characteristics and the research question.

What is the role of sample size in hypothesis testing?

Sample size affects the reliability of hypothesis testing. Larger samples provide more reliable estimates and can detect smaller effects, while smaller samples may lead to less accurate results and reduced power.

Can hypothesis testing prove that a hypothesis is true?

Hypothesis testing cannot prove that a hypothesis is true. It can only provide evidence to support or reject the null hypothesis. A result can indicate whether the data is consistent with the null hypothesis or not, but it does not prove the alternative hypothesis with certainty.

NCBI Bookshelf. A service of the National Library of Medicine, National Institutes of Health.

StatPearls [Internet]. Treasure Island (FL): StatPearls Publishing; 2024 Jan-.

Hypothesis testing, p values, confidence intervals, and significance.

Jacob Shreffler; Martin R. Huecker.

Last Update: March 13, 2023.

- Definition/Introduction

Medical providers often rely on evidence-based medicine to guide decision-making in practice. Often a research hypothesis is tested with results provided, typically with p values, confidence intervals, or both. Additionally, statistical or research significance is estimated or determined by the investigators. Unfortunately, healthcare providers may have different comfort levels in interpreting these findings, which may affect the adequate application of the data.

- Issues of Concern

Without a foundational understanding of hypothesis testing, p values, confidence intervals, and the difference between statistical and clinical significance, healthcare providers may be unable to make clinical decisions without relying purely on the level of significance deemed appropriate by the research investigators. Therefore, an overview of these concepts is provided to allow medical professionals to use their expertise to determine if results are reported sufficiently and if the study outcomes are clinically appropriate to be applied in healthcare practice.

Hypothesis Testing

Investigators conducting studies need research questions and hypotheses to guide analyses. Starting with broad research questions (RQs), investigators then identify a gap in current clinical practice or research. Any research problem or statement is grounded in a better understanding of relationships between two or more variables. For this article, we will use the following research question example:

Research Question: Is Drug 23 an effective treatment for Disease A?

Research questions do not directly imply specific guesses or predictions; we must formulate research hypotheses. A hypothesis is a predetermined declaration regarding the research question in which the investigator(s) makes a precise, educated guess about a study outcome. This is sometimes called the alternative hypothesis and ultimately allows the researcher to take a stance based on experience or insight from medical literature. An example of a hypothesis is below.

Research Hypothesis: Drug 23 will significantly reduce symptoms associated with Disease A compared to Drug 22.

The null hypothesis states that there is no statistical difference between groups based on the stated research hypothesis. In this example, the null hypothesis is that there are no significant differences in the reduction of symptoms for Disease A between Drug 23 and Drug 22.

Researchers should be aware of journal recommendations when considering how to report p values, and manuscripts should remain internally consistent.

Regarding p values, as the number of individuals enrolled in a study (the sample size) increases, the likelihood of finding a statistically significant effect increases. With very large sample sizes, the p value can be very low even when the difference between groups is small. The null hypothesis is deemed true until a study presents significant data to support rejecting the null hypothesis. Based on the results, the investigators will either reject the null hypothesis (if they found significant differences or associations) or fail to reject the null hypothesis (they could not provide sufficient evidence that there were significant differences or associations).

To test a hypothesis, researchers obtain data on a representative sample to determine whether to reject or fail to reject a null hypothesis. In most research studies, it is not feasible to obtain data for an entire population. Using a sampling procedure allows for statistical inference, though this involves a certain possibility of error. [1] When determining whether to reject or fail to reject the null hypothesis, mistakes can be made: Type I and Type II errors. Though it is impossible to ensure that these errors have not occurred, researchers should limit the possibilities of these faults. [2]

Significance

Significance is a term to describe the substantive importance of medical research. Statistical significance concerns how likely results at least as extreme as those observed would be if they were due to chance alone. [3] Healthcare providers should always distinguish statistical significance from clinical significance; conflating the two is a common error when reviewing biomedical research. [4] When conceptualizing findings reported as either significant or not significant, healthcare providers should not simply accept researchers' results or conclusions without considering the clinical significance. Healthcare professionals should consider the clinical importance of findings and understand both p values and confidence intervals so they do not have to rely on the researchers to determine the level of significance. [5] One criterion often used to determine statistical significance is the utilization of p values.

P values are used in research to determine whether the sample estimate is significantly different from a hypothesized value. The p-value is the probability that an effect at least as large as the one observed in the study would have occurred by chance if, in reality, there was no true effect. Conventionally, data yielding a p<0.05 or p<0.01 is considered statistically significant. While some have argued that the 0.05 level should be lowered, it is still widely used. [6] Hypothesis testing allows us to determine whether an effect is statistically distinguishable from no effect; it does not, on its own, establish the size of the effect.

Examples of findings reported with p values are below:

Statement: Drug 23 reduced patients' symptoms compared to Drug 22. Patients who received Drug 23 (n=100) were 2.1 times less likely than patients who received Drug 22 (n = 100) to experience symptoms of Disease A, p<0.05.

Statement: Individuals who were prescribed Drug 23 experienced fewer symptoms (M = 1.3, SD = 0.7) compared to individuals who were prescribed Drug 22 (M = 5.3, SD = 1.9). This finding was statistically significant, p = 0.02.

For either statement, if the threshold had been set at 0.05, the null hypothesis (that there was no relationship) should be rejected, and we should conclude that there are significant differences. Notably, as can be seen in the two statements above, some researchers will report findings with < or > and others will provide an exact p-value (0.000001) but never zero. [6] When examining research, readers should understand how p values are reported. The best practice is to report all p values for all variables within a study design, rather than only providing p values for variables with significant findings. [7] The inclusion of all p values provides evidence for study validity and limits suspicion for selective reporting/data mining.

While researchers have historically used p values, experts who find p values problematic encourage the use of confidence intervals. [8] P-values alone do not allow us to understand the size or the extent of the differences or associations. [3] In March 2016, the American Statistical Association (ASA) released a statement on p values, noting that scientific decision-making and conclusions should not be based on a fixed p-value threshold (e.g., 0.05). They recommend focusing on the significance of results in the context of study design, quality of measurements, and validity of data. Ultimately, the ASA statement noted that in isolation, a p-value does not provide strong evidence. [9]

When conceptualizing clinical work, healthcare professionals should consider p values with a concurrent appraisal of study design validity. For example, a p-value from a double-blinded randomized clinical trial (designed to minimize bias) should be weighted higher than one from a retrospective observational study. [7] The p-value debate has smoldered since the 1950s, [10] and replacement with confidence intervals has been suggested since the 1980s. [11]

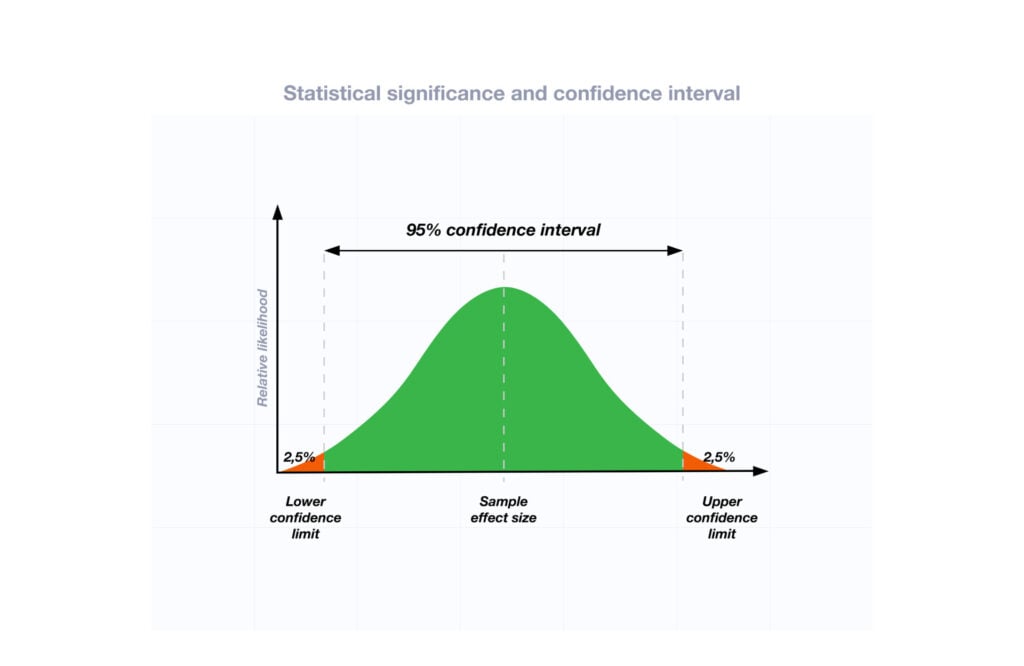

Confidence Intervals

A confidence interval provides a range of values, at a given confidence level (e.g., 95%), that is expected to include the true value of the statistical parameter within a targeted population. [12] Most research uses a 95% CI, but investigators can set any level (e.g., 90% CI, 99% CI). [13] A CI provides a range with the lower bound and upper bound limits of a difference or association that would be plausible for a population. [14] Therefore, a CI of 95% indicates that if a study were to be carried out 100 times, the range would contain the true value in about 95 of them. [15] Confidence intervals provide more evidence regarding the precision of an estimate compared to p-values. [6]
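The "95 out of 100 studies" reading of a 95% CI can be checked with a small simulation. The sketch below is only an illustration under simplifying assumptions that are not from the source: the data are normally distributed, the population standard deviation is known (so a z-based interval applies), and the values for `true_mean`, `sigma`, `n`, and `studies` are made up.

```python
import math
import random
from statistics import NormalDist, mean


def z_interval(sample, sigma, confidence=0.95):
    """Confidence interval for a mean when the population SD (sigma) is known."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # about 1.96 for 95%
    half_width = z * sigma / math.sqrt(len(sample))
    center = mean(sample)
    return center - half_width, center + half_width


def coverage(true_mean=10.0, sigma=2.0, n=25, studies=2000, seed=1):
    """Share of simulated studies whose 95% CI contains the true mean."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    hits = 0
    for _ in range(studies):
        sample = [rng.gauss(true_mean, sigma) for _ in range(n)]
        low, high = z_interval(sample, sigma)
        hits += low <= true_mean <= high
    return hits / studies


print(f"share of intervals containing the true mean: {coverage():.3f}")
```

The printed share should land close to 0.95; it will not be exactly 0.95 because the number of simulated studies is finite.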

In consideration of the similar research example provided above, one could make the following statement with 95% CI:

Statement: Individuals who were prescribed Drug 23 had no symptoms after three days, which was significantly faster than those prescribed Drug 22; the mean difference in days to recovery between the two groups was 4.2 days (95% CI: 1.9 – 7.8).

It is important to note that the width of the CI is affected by the standard error and the sample size; reducing a study sample number will result in less precision of the CI (increase the width). [14] A larger width indicates a smaller sample size or a larger variability. [16] A researcher would want to increase the precision of the CI. For example, a 95% CI of 1.43 – 1.47 is much more precise than the one provided in the example above. In research and clinical practice, CIs provide valuable information on whether the interval includes or excludes any clinically significant values. [14]
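To make the link between sample size and CI width concrete, here is a minimal sketch that uses the normal approximation for the CI of a mean (width = 2 · z · SD / √n). The standard deviation of 2.0 and the sample sizes are illustrative assumptions, not values from the studies discussed above.

```python
import math
from statistics import NormalDist


def ci_width(sd, n, confidence=0.95):
    """Approximate width of a CI for a mean (normal approximation)."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return 2 * z * sd / math.sqrt(n)


# Quadrupling the sample size halves the width of the interval.
for n in (25, 100, 400):
    print(n, round(ci_width(sd=2.0, n=n), 3))
```

Because the width shrinks with the square root of n, halving the width requires roughly four times as many participants.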

Null values are sometimes used for differences with CI (zero for differential comparisons and 1 for ratios). However, CIs provide more information than that. [15] Consider this example: A hospital implements a new protocol that reduced wait time for patients in the emergency department by an average of 25 minutes (95% CI: -2.5 – 41 minutes). Because the range crosses zero, implementing this protocol in different populations could result in longer wait times; however, the range is much higher on the positive side. Thus, while the p-value used to detect statistical significance for this may result in "not significant" findings, individuals should examine this range, consider the study design, and weigh whether or not it is still worth piloting in their workplace.

Similarly to p-values, 95% CIs cannot control for researchers' errors (e.g., study bias or improper data analysis). [14] In consideration of whether to report p-values or CIs, researchers should examine journal preferences. When in doubt, reporting both may be beneficial. [13] An example is below:

Reporting both: Individuals who were prescribed Drug 23 had no symptoms after three days, which was significantly faster than those prescribed Drug 22, p = 0.009. The mean difference in days to recovery between the two groups was 4.2 days (95% CI: 1.9 – 7.8).

Clinical Significance

Recall that clinical significance and statistical significance are two different concepts. Healthcare providers should remember that a study with statistically significant differences and large sample size may be of no interest to clinicians, whereas a study with smaller sample size and statistically non-significant results could impact clinical practice. [14] Additionally, as previously mentioned, a non-significant finding may reflect the study design itself rather than relationships between variables.

Healthcare providers using evidence-based medicine to inform practice should use clinical judgment to determine the practical importance of studies through careful evaluation of the design, sample size, power, likelihood of type I and type II errors, data analysis, and reporting of statistical findings (p values, 95% CI or both). [4] Interestingly, some experts have called for "statistically significant" or "not significant" to be excluded from work as statistical significance never has and will never be equivalent to clinical significance. [17]

The decision on what is clinically significant can be challenging, depending on the providers' experience and especially the severity of the disease. Providers should use their knowledge and experiences to determine the meaningfulness of study results and make inferences based not only on significant or insignificant results by researchers but through their understanding of study limitations and practical implications.

Nursing, Allied Health, and Interprofessional Team Interventions

All physicians, nurses, pharmacists, and other healthcare professionals should strive to understand the concepts in this chapter. These individuals should maintain the ability to review and incorporate new literature for evidence-based and safe care.


Disclosure: Jacob Shreffler declares no relevant financial relationships with ineligible companies.

Disclosure: Martin Huecker declares no relevant financial relationships with ineligible companies.

This book is distributed under the terms of the Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International (CC BY-NC-ND 4.0) ( http://creativecommons.org/licenses/by-nc-nd/4.0/ ), which permits others to distribute the work, provided that the article is not altered or used commercially. You are not required to obtain permission to distribute this article, provided that you credit the author and journal.

- Cite this Page Shreffler J, Huecker MR. Hypothesis Testing, P Values, Confidence Intervals, and Significance. [Updated 2023 Mar 13]. In: StatPearls [Internet]. Treasure Island (FL): StatPearls Publishing; 2024 Jan-.


P-Value And Statistical Significance: What It Is & Why It Matters

Saul McLeod, PhD

Editor-in-Chief for Simply Psychology

BSc (Hons) Psychology, MRes, PhD, University of Manchester

Saul McLeod, PhD., is a qualified psychology teacher with over 18 years of experience in further and higher education. He has been published in peer-reviewed journals, including the Journal of Clinical Psychology.

Olivia Guy-Evans, MSc

Associate Editor for Simply Psychology

BSc (Hons) Psychology, MSc Psychology of Education

Olivia Guy-Evans is a writer and associate editor for Simply Psychology. She has previously worked in healthcare and educational sectors.

The p-value in statistics quantifies the evidence against a null hypothesis. A low p-value suggests data is inconsistent with the null, potentially favoring an alternative hypothesis. Common significance thresholds are 0.05 or 0.01.

Hypothesis testing

When you perform a statistical test, a p-value helps you determine the significance of your results in relation to the null hypothesis.

The null hypothesis (H0) states no relationship exists between the two variables being studied (one variable does not affect the other). It states the results are due to chance and are not significant in supporting the idea being investigated. Thus, the null hypothesis assumes that whatever you try to prove did not happen.

The alternative hypothesis (Ha or H1) is the one you would believe if the null hypothesis is concluded to be untrue.

The alternative hypothesis states that the independent variable affected the dependent variable, and the results are significant in supporting the theory being investigated (i.e., the results are not due to random chance).

What a p-value tells you

A p-value, or probability value, is a number describing how likely it is that your data would have occurred by random chance alone (i.e., if the null hypothesis were true).

The level of statistical significance is often expressed as a p-value between 0 and 1.

The smaller the p -value, the less likely the results occurred by random chance, and the stronger the evidence that you should reject the null hypothesis.

Remember, a p-value doesn’t tell you if the null hypothesis is true or false. It just tells you how likely you’d see the data you observed (or more extreme data) if the null hypothesis was true. It’s a piece of evidence, not a definitive proof.

Example: Test Statistic and p-Value

Suppose you’re conducting a study to determine whether a new drug has an effect on pain relief compared to a placebo. If the new drug has no impact, your test statistic will tend to be close to the one predicted by the null hypothesis (no difference between the drug and placebo groups), and the resulting p-value will usually be large rather than near zero. Its exact value varies from sample to sample because real-world variations exist. Conversely, if the new drug indeed reduces pain significantly, your test statistic will diverge further from what’s expected under the null hypothesis, and the p-value will decrease. The p-value will never reach zero because there’s always a slim possibility, though highly improbable, that the observed results occurred by random chance.

P-value interpretation

The significance level (alpha) is a set probability threshold (often 0.05), while the p-value is the probability you calculate based on your study or analysis.

A p-value less than or equal to a predetermined significance level (often 0.05 or 0.01) is statistically significant, meaning the observed data provide strong evidence against the null hypothesis.

This suggests the effect under study likely represents a real relationship rather than just random chance.

For instance, if you set α = 0.05, you would reject the null hypothesis if your p -value ≤ 0.05.

It indicates strong evidence against the null hypothesis, as there is less than a 5% probability of obtaining results at least this extreme if the null hypothesis were true.

Therefore, we reject the null hypothesis and accept the alternative hypothesis.

Example: Statistical Significance

Upon analyzing the pain relief effects of the new drug compared to the placebo, the computed p-value is less than 0.01, which falls well below the predetermined alpha value of 0.05. Consequently, you conclude that there is a statistically significant difference in pain relief between the new drug and the placebo.

What does a p-value of 0.001 mean?

A p-value of 0.001 is highly statistically significant beyond the commonly used 0.05 threshold. It indicates strong evidence of a real effect or difference, rather than just random variation.

Specifically, a p-value of 0.001 means there is only a 0.1% chance of obtaining a result at least as extreme as the one observed, assuming the null hypothesis is correct.

Such a small p-value provides strong evidence against the null hypothesis, leading to rejecting the null in favor of the alternative hypothesis.

A p-value more than the significance level (typically p > 0.05) is not statistically significant; it means the data do not provide sufficient evidence against the null hypothesis.

This means we retain (fail to reject) the null hypothesis. You should note that you cannot accept the null hypothesis; we can only reject it or fail to reject it.

Note: when the p-value is below your threshold of significance, it does not mean that there is a 95% probability that the alternative hypothesis is true.

One-Tailed Test

Two-Tailed Test

How do you calculate the p-value?

Most statistical software packages like R, SPSS, and others automatically calculate your p-value. This is the easiest and most common way.

Online resources and tables are available to estimate the p-value based on your test statistic and degrees of freedom.

These tables help you understand how often you would expect to see your test statistic under the null hypothesis.
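As a rough illustration of what software or a table does behind the scenes, the sketch below converts a z test statistic into a p-value using the standard normal distribution. The function name `p_value_from_z` is made up for this example. It only covers z statistics; t, chi-squared, and F statistics need their own distributions (and degrees of freedom), which statistical packages provide.

```python
from statistics import NormalDist


def p_value_from_z(z, tail="two"):
    """P-value for a z statistic under the standard normal null distribution."""
    std_normal = NormalDist()
    if tail == "two":
        # Area in both tails beyond |z|
        return 2 * (1 - std_normal.cdf(abs(z)))
    if tail == "right":
        return 1 - std_normal.cdf(z)
    if tail == "left":
        return std_normal.cdf(z)
    raise ValueError("tail must be 'two', 'right', or 'left'")


print(round(p_value_from_z(1.96), 3))           # two-tailed
print(round(p_value_from_z(1.96, "right"), 3))  # one-tailed
```

For the same test statistic, the two-tailed p-value is twice the one-tailed value, which is why the choice of tails should be made before looking at the data.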

Understanding the Statistical Test:

Different statistical tests are designed to answer specific research questions or hypotheses. Each test has its own underlying assumptions and characteristics.

For example, you might use a t-test to compare means, a chi-squared test for categorical data, or a correlation test to measure the strength of a relationship between variables.

Be aware that the number of independent variables you include in your analysis can influence the magnitude of the test statistic needed to produce the same p-value.

This factor is particularly important to consider when comparing results across different analyses.

Example: Choosing a Statistical Test

If you’re comparing the effectiveness of just two different drugs in pain relief, a two-sample t-test is a suitable choice for comparing these two groups. However, when you’re examining the impact of three or more drugs, it’s more appropriate to employ an Analysis of Variance (ANOVA). Utilizing multiple pairwise comparisons in such cases can lead to artificially low p-values and an overestimation of the significance of differences between the drug groups.
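The risk from multiple pairwise comparisons can be quantified with a back-of-the-envelope calculation. The sketch below assumes, as a simplification that is not stated in the text, that the pairwise tests are independent; under that assumption the chance of at least one false positive across m tests is 1 − (1 − α)^m.

```python
from math import comb


def familywise_error_rate(groups, alpha=0.05):
    """Number of pairwise tests and chance of at least one false positive,
    assuming independent tests (a simplifying assumption)."""
    comparisons = comb(groups, 2)
    return comparisons, 1 - (1 - alpha) ** comparisons


for groups in (2, 3, 5):
    m, rate = familywise_error_rate(groups)
    print(f"{groups} groups: {m} comparisons, error rate about {rate:.3f}")
```

With three drugs there are already three pairwise tests, and the chance of at least one spurious "significant" result rises well above the nominal 5%.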

How to report

A statistically significant result cannot prove that a research hypothesis is correct (which implies 100% certainty).

Instead, we may state our results “provide support for” or “give evidence for” our research hypothesis (as there is still a slight probability that the results occurred by chance and the null hypothesis was correct – e.g., less than 5%).

Example: Reporting the results

In our comparison of the pain relief effects of the new drug and the placebo, we observed that participants in the drug group experienced a significant reduction in pain ( M = 3.5; SD = 0.8) compared to those in the placebo group ( M = 5.2; SD = 0.7), resulting in an average difference of 1.7 points on the pain scale (t(98) = -9.36; p < 0.001).

The 6th edition of the APA style manual (American Psychological Association, 2010) states the following on the topic of reporting p-values:

“When reporting p values, report exact p values (e.g., p = .031) to two or three decimal places. However, report p values less than .001 as p < .001.

The tradition of reporting p values in the form p < .10, p < .05, p < .01, and so forth, was appropriate in a time when only limited tables of critical values were available.” (p. 114)

- Do not use 0 before the decimal point for the statistical value p, as it cannot exceed 1. In other words, write p = .001 instead of p = 0.001.

- Please pay attention to issues of italics ( p is always italicized) and spacing (either side of the = sign).

- p = .000 (as outputted by some statistical packages such as SPSS) is impossible and should be written as p < .001.

- The opposite of significant is “nonsignificant,” not “insignificant.”

Why is the p -value not enough?

A lower p-value is sometimes interpreted as meaning there is a stronger relationship between two variables.

However, statistical significance only means that data this extreme would be unlikely (less than a 5% probability) if the null hypothesis were true; it does not measure how strong the relationship is.

To understand the strength of the difference between the two groups (control vs. experimental) a researcher needs to calculate the effect size.
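One common effect size for a difference between two means is Cohen's d, the mean difference divided by a pooled standard deviation. The sketch below applies it to the means and standard deviations from the reporting example above (drug: M = 3.5, SD = 0.8; placebo: M = 5.2, SD = 0.7). It assumes equal group sizes, which the text does not state, so treat the result as illustrative only.

```python
import math


def cohens_d(mean_1, sd_1, mean_2, sd_2):
    """Cohen's d with a pooled SD, assuming equal group sizes."""
    pooled_sd = math.sqrt((sd_1 ** 2 + sd_2 ** 2) / 2)
    return (mean_1 - mean_2) / pooled_sd


d = cohens_d(3.5, 0.8, 5.2, 0.7)
print(f"Cohen's d = {d:.2f}")
```

Unlike the p-value, d does not shrink or grow simply because the sample size changes, which is why it is reported alongside the significance test.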

When do you reject the null hypothesis?

In statistical hypothesis testing, you reject the null hypothesis when the p-value is less than or equal to the significance level (α) you set before conducting your test. The significance level is the probability of rejecting the null hypothesis when it is true. Commonly used significance levels are 0.01, 0.05, and 0.10.

Remember, rejecting the null hypothesis doesn’t prove the alternative hypothesis; it just suggests that the alternative hypothesis may be plausible given the observed data.

The p -value is conditional upon the null hypothesis being true but is unrelated to the truth or falsity of the alternative hypothesis.

What does p-value of 0.05 mean?

If your p-value is less than or equal to 0.05 (the significance level), you would conclude that your result is statistically significant. This means the evidence is strong enough to reject the null hypothesis in favor of the alternative hypothesis.

Are all p-values below 0.05 considered statistically significant?

No, not all p-values below 0.05 are considered statistically significant. The threshold of 0.05 is commonly used, but it’s just a convention; if a stricter significance level such as 0.01 was set in advance, a p-value of 0.03 is not significant. The p-value itself depends on factors like the study design, sample size, and the magnitude of the observed effect.

A p-value below 0.05 means there is evidence against the null hypothesis, suggesting a real effect. However, it’s essential to consider the context and other factors when interpreting results.

Researchers also look at effect size and confidence intervals to determine the practical significance and reliability of findings.

How does sample size affect the interpretation of p-values?

Sample size can impact the interpretation of p-values. A larger sample size provides more reliable and precise estimates of the population, leading to narrower confidence intervals.

With a larger sample, even small differences between groups or effects can become statistically significant, yielding lower p-values. In contrast, smaller sample sizes may not have enough statistical power to detect smaller effects, resulting in higher p-values.

Therefore, a larger sample size increases the chances of finding statistically significant results when there is a genuine effect, making the findings more trustworthy and robust.
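The effect of sample size on the p-value can be seen by holding a small effect fixed and varying only the number of participants. The sketch below uses a two-sample z-test with a known common standard deviation; the mean difference of 0.1 and SD of 1.0 are made-up values chosen for illustration.

```python
import math
from statistics import NormalDist


def two_sample_z_p_value(mean_diff, sd, n_per_group):
    """Two-tailed p-value for a difference in means with known common SD."""
    standard_error = sd * math.sqrt(2 / n_per_group)
    z = mean_diff / standard_error
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Same small effect (0.1 SD), increasingly large samples.
for n in (20, 200, 2000, 20000):
    print(n, two_sample_z_p_value(mean_diff=0.1, sd=1.0, n_per_group=n))
```

The effect never changes, yet the p-value falls steadily as n grows. This is why a very small p-value from a very large study still needs an effect size to judge practical importance.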

Can a non-significant p-value indicate that there is no effect or difference in the data?

No, a non-significant p-value does not necessarily indicate that there is no effect or difference in the data. It means that the observed data do not provide strong enough evidence to reject the null hypothesis.

There could still be a real effect or difference, but it might be smaller or more variable than the study was able to detect.

Other factors like sample size, study design, and measurement precision can influence the p-value. It’s important to consider the entire body of evidence and not rely solely on p-values when interpreting research findings.

Can P values be exactly zero?

While a p-value can be extremely small, it cannot technically be absolute zero. When a p-value is reported as p = 0.000, the actual p-value is too small for the software to display. This is often interpreted as strong evidence against the null hypothesis. For p values less than 0.001, report as p < .001.

Further Information

- P Value Calculator From T Score

- P-Value Calculator For Chi-Square

- P-values and significance tests (Khan Academy)

- Hypothesis testing and p-values (Khan Academy)

- Wasserstein, R. L., Schirm, A. L., & Lazar, N. A. (2019). Moving to a world beyond “p < 0.05”.

- Criticism of using the “p < 0.05”.

- Publication manual of the American Psychological Association

- Statistics for Psychology Book Download



Chapter 5: Hypothesis Testing and P-Values


Here you can find a series of pre-recorded lecture videos that cover this content: https://youtube.com/playlist?list=PL...I4vAR1y-lP1Ees

Hypothesis Testing for a Single Mean for a Small Sample Size

The reason we are using these statistical tools is to make certain decisions regarding a measurand. One of the most common methods for doing this is hypothesis testing.

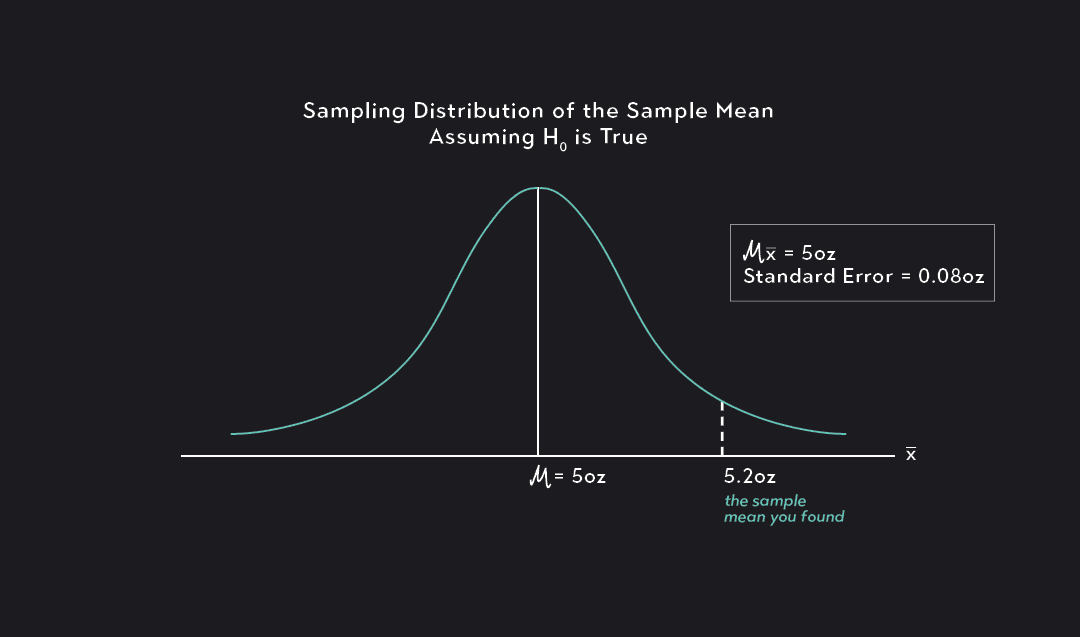

Typically we deal with two hypotheses:

• Null Hypothesis

– First step in hypothesis testing

– \(H_{0}: \mu = \mu_{0}\) where \(\mu_{0}\) is some constant specific value

• Alternative Hypothesis

– Second step

– Choice should reflect on what we are attempting to show

∗ Two-tailed test: concerned with whether a population mean, \(\mu\), is different from a specific value \(\mu_{0}\), i.e. \(H_{a}: \mu \neq \mu_{0}\)

∗ Left-tailed test: concerned with whether a population mean is less than a specific value, \(H_{a}: \mu < \mu_{0}\)

∗ Right-tailed test: concerned with whether a population mean is greater than a specific value, \(H_{a}: \mu > \mu_{0}\)

Procedure for Hypothesis Testing

(1) Define null hypothesis, \(H_{0}\)

(2) Define alternative hypothesis, \(H_{a}\)

(3) Define c% interval

(4) Calculate the value of \(t_{exp}\) from the data

(5) Determine proper value of \(t_{\alpha, \nu}\) or \(t_{\frac{\alpha}{2}, \nu}\) using the degrees of freedom \(\nu\)

(6) If \(t_{exp}\) falls in the reject \(H_{0}\) region, we reject \(H_{0}\) and accept the alternative hypothesis \(H_{a}\)

(7) If \(t_{exp}\) falls in the do not reject \(H_{0}\) region, we conclude that we do not have sufficient data to reject \(H_{0}\) at the level of confidence specified

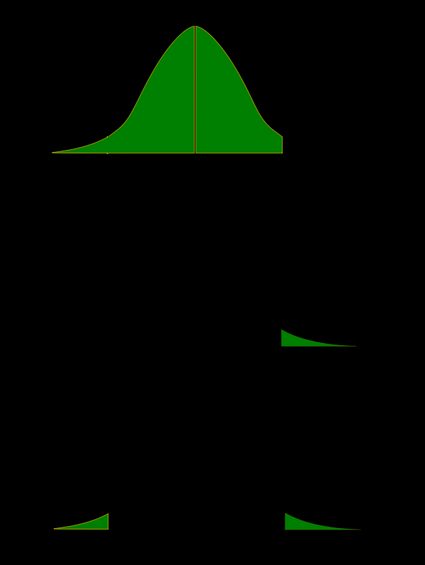

Figure \(\PageIndex{1}\): Rejecting null hypothesis for two, right, and left tailed test.
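The procedure above can be sketched in a few lines of Python. This is a minimal illustration, not the course's R code: the function names `t_statistic` and `decide` are made up here, and the critical value is assumed to be looked up in a t-table by the reader (Python's standard library has no t-distribution).

```python
import math
import statistics


def t_statistic(sample, mu0):
    """Step 4: t_exp = (sample mean - mu0) / (S_x / sqrt(n))."""
    n = len(sample)
    mean = statistics.mean(sample)
    s_x = statistics.stdev(sample)  # sample standard deviation (n - 1)
    return (mean - mu0) / (s_x / math.sqrt(n))


def decide(t_exp, t_crit, tail="two"):
    """Steps 6-7: compare t_exp with a tabulated critical value.

    t_crit is the positive table value: t_{alpha/2, nu} for a two-tailed
    test, t_{alpha, nu} for a one-tailed test.
    """
    t_crit = abs(t_crit)
    if tail == "two":
        reject = abs(t_exp) > t_crit
    elif tail == "right":
        reject = t_exp > t_crit
    elif tail == "left":
        reject = t_exp < -t_crit
    else:
        raise ValueError("tail must be 'two', 'right', or 'left'")
    return "reject H0" if reject else "do not reject H0"


# Illustrative data (not the PCM data set from the lecture).
sample = [1.0, 2.0, 3.0, 4.0, 5.0]
print(t_statistic(sample, mu0=2.0))

# Values quoted in the worked example: t_exp = 0.99011, t_{0.025,17} = 2.11
print(decide(0.99011, 2.11, tail="two"))
```

Keeping the critical value as an explicit input mirrors the by-hand workflow: compute \(t_{exp}\), look up the table value for the chosen tail and degrees of freedom, then compare.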

Let’s start with a two-tailed test and ask ourselves the following question: does the PCM data from the example last lecture come from a population with a true mean of 2mg, assuming a confidence level of 95%?

\begin{eqnarray} H_{0}: \mu = 2.00 mg \\ H_{a}: \mu \neq 2.00 mg \\ t_{exp} = \frac{\overline{x} - \mu_{0}}{\frac{S_{x}}{\sqrt{n}}} = 0.99011 \\ t_{\alpha, \nu} = t_{0.025,17} = 2.11 \end{eqnarray}

We can look at our figure and see that our t-value falls within the do not reject \(H_{0}\) region. Does this match the result from R?

I don’t see any result only some mysterious thing called the P-value, but no worries we actually already intuitively know what this mysterious value is.

Often if you have read scientific articles or if you have taken other statistics courses you may have heard of the term P-Value. This term is ubiquitous and is used much more often than z or t-values. Simply put, the P-value is the probability of getting a result at least as extreme as the value that is actually observed, assuming the null hypothesis is true. Let’s see how it is used in the context of our previous hypothesis tests, starting with a two-tailed test.

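Our P-value effectively gives us the probability of measuring a value at least as extreme as the one observed, i.e. the area in the tail ends. The way that we interpret the P-value is as follows: if we are running a two-tailed test at 95% confidence (\(\alpha = 0.05\)), the critical values leave 2.5% or 0.025 in each tail. If the two-sided P-value reported by the software is greater than 0.05 (equivalently, the area in each tail beyond \(\pm t_{exp}\) is greater than 0.025), then we fall in the Do Not Reject \(H_{0}\) regime, and if it is less than that value then we fall in the Reject \(H_{0}\) regime.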

So we have previously found that we fall in the Do Not Reject region and looking at our calculated P-value of 0.336 that falls in the Do Not Reject region which matches our result!!!

Another PCM Application

Using the data from the previous example does the sample come from a population whose true mean weight is greater than 1.99mg, assuming a confidence level of 99%?

\begin{eqnarray} H_{0}: \mu = 1.99 mg \\ H_{a}: \mu > 1.99 mg \\ t_{exp} = \frac{\overline{x} - \mu_{0}}{\frac{S_{x}}{\sqrt{n}}} = 2.025 \\ t_{\alpha, \nu} = t_{0.01,17} = 2.567 \end{eqnarray}

Since \(t_{exp} = 2.025\) is smaller than the critical value of 2.567 for this right-tailed test, it falls in the do not reject \(H_{0}\) region. So we conclude that, at the 99% confidence level, the population mean was not significantly greater than 1.99mg. Note the subtle distinction in the phrasing: we say that the mean was not significantly greater than 1.99mg, or that we do not have sufficient data to reject \(H_{0}\) with 99% confidence. We are not saying that the population mean is 1.99mg.

If we examine this from a P-value perspective we find that our P-value is 0.02943, which is greater than \(\alpha = 0.01\), so that also falls in the Do Not Reject region.

Yet Another PCM Application

Using the data from the previous example does the sample come from a population whose true mean weight is less than 2.01mg, assuming a confidence level of 90%?

\begin{eqnarray} H_{0}: \mu = 2.01 mg \\ H_{a}: \mu < 2.01 mg \\ t_{exp} = \frac{\overline{x} - \mu_{0}}{\frac{S_{x}}{\sqrt{n}}} = -0.0448035 \\ t_{\alpha, \nu} = t_{0.10,17} = -1.333 \end{eqnarray}

Since \(t_{exp} = -0.0448\) lies well above the negative critical value for this left-tailed test, it falls in the do not reject \(H_{0}\) region. So we conclude that, at the 90% confidence level, the population mean was not significantly less than 2.01mg.

If we examine this from a P-value perspective we find that our P-value is 0.4824, which is greater than \(\alpha = 0.10\), so that also falls in the Do Not Reject region.

Hypothesis Testing Single Mean for Large Sample Size

For larger sample sizes we follow the exact same procedure but we replace \(t_{\alpha, \nu}\) by \(z_{\alpha}\) and \(t_{exp}\) by:

\begin{equation} z_{exp} = \frac{\overline{x} - \mu_{0}}{\frac{S_{x}}{\sqrt{n}}} \end{equation}

However, we can just use the t-table and use the value for degrees of freedom that corresponds to the n > 30 scenario i.e. ν ≈∞.

Looking Back at Rolling Velocity

Does the sample of our rolling magnetic beads come from a population with a velocity less than 3.1 \(\frac{\mu m}{s}\) at a confidence level of 99%.

\begin{eqnarray} H_{0}: \mu = 3.1 \frac{\mu m}{s} \\ H_{a}: \mu < 3.1 \frac{\mu m}{s} \\ t_{exp} = \frac{\overline{x} - \mu_{0}}{\frac{S_{x}}{\sqrt{n}}} = -1.3383\\ t_{0.01,|Inf} = -2.362 \end{eqnarray}

The test statistic falls in the Do Not Reject \(H_{0}\) region. The P-value is 0.09151, which is greater than \(\alpha = 0.01\), so it confirms that we do not reject \(H_{0}\).
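As a quick cross-check of the large-sample shortcut, here is a minimal sketch that uses the standard normal distribution in place of the t-table. It assumes \(\nu \approx \infty\), so the critical value and P-value it prints are normal approximations and will differ slightly from the t-based figures quoted above. The function name `left_tailed_z_test` is made up for this illustration.

```python
from statistics import NormalDist


def left_tailed_z_test(z_exp, alpha):
    """Return (z_critical, p_value, reject) for a left-tailed z test."""
    std_normal = NormalDist()            # mean 0, standard deviation 1
    z_crit = std_normal.inv_cdf(alpha)   # negative for a left-tailed test
    p_value = std_normal.cdf(z_exp)      # area to the left of z_exp
    return z_crit, p_value, z_exp < z_crit


# Test statistic from the rolling velocity example, 99% confidence (alpha = 0.01)
z_crit, p_value, reject = left_tailed_z_test(z_exp=-1.3383, alpha=0.01)
print(f"z_crit = {z_crit:.3f}, p = {p_value:.4f}, reject H0: {reject}")
```

The statistic is not below the critical value, and the approximate P-value is well above 0.01, so this sketch lands in the same Do Not Reject region.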

t-Test Comparison of Sample Means

To compare two samples solely based on their means:

\begin{equation} t = \frac{\overline{x}_{1} - \overline{x}_{2}}{\sqrt{\bigg(\frac{S_{1}^{2}}{n_{1}}\bigg) + \bigg(\frac{S_{2}^{2}}{n_{2}}\bigg)}} \end{equation}

where \(\overline{x}_{1}\), \(S_{1}\), and \(n_{1}\) and \(\overline{x}_{2}\), \(S_{2}\), and \(n_{2}\) are the means, standard deviations, and sizes of the two samples. The degrees of freedom can be approximated by:

\begin{equation} \nu = \frac{[(\frac{S_{1}^{2}}{n_{1}}) + (\frac{S_{2}^{2}}{n_{2}}) ] ^{2}}{\frac{(\frac{S_{1}^{2}}{n_{1}})^{2}}{n_{1} - 1} + \frac{(\frac{S_{2}^{2}}{n_{2}})^{2}}{n_{2}-1}} \end{equation}

and ν is rounded to the nearest integer. If the value t falls into the interval \(\pm t_{\frac{\alpha}{2}, \nu}\), then the two means are not significantly different at the chosen confidence level. One of the great things about this technique is that the equation applies to any combination of large and small sample sizes.

Are these Materials Significantly Stiffer

Consider Material A with a Young's Modulus average of 302.6 GPa, measured 12 times, and a sample standard deviation of 1.27 GPa, and Material B with a Young's Modulus average of 302.3 GPa, measured 15 times, and a sample standard deviation of 1.7 GPa.

\begin{eqnarray} H_{0}: \overline{x}_{A} = \overline{x}_{B}\\ H_{a}: \overline{x}_{A} \neq \overline{x}_{B}\\ \nu \approx 25\\ t_{exp} = 0.547\\ t_{0.025,25} = 2.060 \end{eqnarray}

The value falls in the do not reject region so there is not a significant difference in the stiffness of Material A and B.
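The two formulas above are easy to check numerically. The following is a minimal sketch, not the original R workflow, that plugs the quoted summary statistics into the t and ν equations. The helper name `welch_t` is made up for this illustration, and the critical value 2.060 is simply copied from the example. Because the inputs are the rounded values quoted in the text, the computed t may differ a little from the figure reported above.

```python
import math


def welch_t(mean1, s1, n1, mean2, s2, n2):
    """Return (t, nu) for a two-sample comparison with unequal variances."""
    v1 = s1 ** 2 / n1   # S_1^2 / n_1
    v2 = s2 ** 2 / n2   # S_2^2 / n_2
    t = (mean1 - mean2) / math.sqrt(v1 + v2)
    nu = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, nu


# Summary statistics quoted for Material A and Material B (GPa)
t_exp, nu = welch_t(302.6, 1.27, 12, 302.3, 1.7, 15)
t_crit = 2.060  # two-tailed critical value taken from the example above

print(f"t_exp = {t_exp:.3f}, nu = {nu:.2f} (rounded: {round(nu)})")
print("reject H0" if abs(t_exp) > t_crit else "do not reject H0")
```

With these inputs ν rounds to 25 and t is far inside the ±2.060 interval, so the decision matches the one in the example.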

We can also apply a P-value analysis to a t-test comparison of two samples; as you will see, the R code can be simply adapted to examine different samples. Let’s simulate the example here. We find a P-value of 0.02194, so for this simulated example the finding would be to reject \(H_{0}\). Again, the difference is due to the simulated values: as we can confirm, the means and standard deviations of the simulated samples are slightly different from the ones quoted above. In fact, if we re-run the analysis by hand using our method, we achieve the same result. Let’s try that really quickly.

Statistics By Jim

Making statistics intuitive

How Hypothesis Tests Work: Significance Levels (Alpha) and P values

By Jim Frost

Hypothesis testing is a vital process in inferential statistics where the goal is to use sample data to draw conclusions about an entire population. In the testing process, you use significance levels and p-values to determine whether the test results are statistically significant.

You hear about results being statistically significant all of the time. But, what do significance levels, P values, and statistical significance actually represent? Why do we even need to use hypothesis tests in statistics?

In this post, I answer all of these questions. I use graphs and concepts to explain how hypothesis tests function in order to provide a more intuitive explanation. This helps you move on to understanding your statistical results.

Hypothesis Test Example Scenario

To start, I’ll demonstrate why we need to use hypothesis tests using an example.

A researcher is studying fuel expenditures for families and wants to determine if the monthly cost has changed since last year when the average was $260 per month. The researcher draws a random sample of 25 families and enters their monthly costs for this year into statistical software. You can download the CSV data file: FuelsCosts . Below are the descriptive statistics for this year.

We’ll build on this example to answer the research question and show how hypothesis tests work.

Descriptive Statistics Alone Won’t Answer the Question

The researcher collected a random sample and found that this year’s sample mean (330.6) is greater than last year’s mean (260). Why perform a hypothesis test at all? We can see that this year’s mean is higher by $70! Isn’t that different?

Regrettably, the situation isn’t as clear as you might think because we’re analyzing a sample instead of the full population. There are huge benefits when working with samples because it is usually impossible to collect data from an entire population. However, the tradeoff for working with a manageable sample is that we need to account for sampling error.

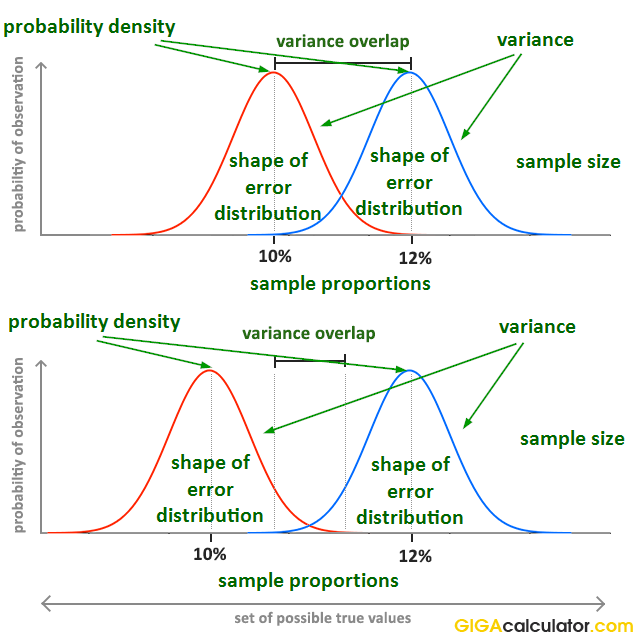

The sampling error is the gap between the sample statistic and the population parameter. For our example, the sample statistic is the sample mean, which is 330.6. The population parameter is μ, or mu, which is the average of the entire population. Unfortunately, the value of the population parameter is not only unknown but usually unknowable. Learn more about Sampling Error .

We obtained a sample mean of 330.6. However, it’s conceivable that, due to sampling error, the mean of the population might be only 260. If the researcher drew another random sample, the next sample mean might be closer to 260. It’s impossible to assess this possibility by looking at only the sample mean. Hypothesis testing is a form of inferential statistics that allows us to draw conclusions about an entire population based on a representative sample. We need to use a hypothesis test to determine the likelihood of obtaining our sample mean if the population mean is 260.

Background information : The Difference between Descriptive and Inferential Statistics and Populations, Parameters, and Samples in Inferential Statistics

A Sampling Distribution Determines Whether Our Sample Mean is Unlikely

It is very unlikely for any sample mean to equal the population mean because of sampling error. In our case, the sample mean of 330.6 is almost definitely not equal to the population mean for fuel expenditures.

If we could obtain a substantial number of random samples and calculate the sample mean for each sample, we’d observe a broad spectrum of sample means. We’d even be able to graph the distribution of sample means from this process.

This type of distribution is called a sampling distribution. You obtain a sampling distribution by drawing many random samples of the same size from the same population. Why the heck would we do this?

Because sampling distributions allow you to determine the likelihood of obtaining your sample statistic and they’re crucial for performing hypothesis tests.

Luckily, we don’t need to go to the trouble of collecting numerous random samples! We can estimate the sampling distribution using the t-distribution, our sample size, and the variability in our sample.
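To see what "drawing many random samples" looks like in practice, here is a small simulation sketch. It assumes a normally distributed population with the null mean of 260 and a hypothetical standard deviation of 150 (the real population value is unknown), and it draws 10,000 samples of 25 families each.

```python
import math
import random
import statistics

random.seed(0)  # fixed seed so the run is repeatable

POP_MEAN = 260.0   # null-hypothesis mean from the example
POP_SD = 150.0     # hypothetical population standard deviation (assumption)
N = 25             # sample size from the example

# Draw many samples of size N and keep only each sample's mean.
sample_means = []
for _ in range(10_000):
    sample = [random.gauss(POP_MEAN, POP_SD) for _ in range(N)]
    sample_means.append(statistics.fmean(sample))

center = statistics.fmean(sample_means)
spread = statistics.stdev(sample_means)
print(f"mean of sample means: {center:.1f}")
print(f"spread of sample means: {spread:.1f}")
print(f"theoretical standard error: {POP_SD / math.sqrt(N):.1f}")
```

The sample means pile up around 260, and their spread is close to the standard error, the population standard deviation divided by √n. That relationship is what lets us estimate the sampling distribution from a single sample instead of collecting thousands.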

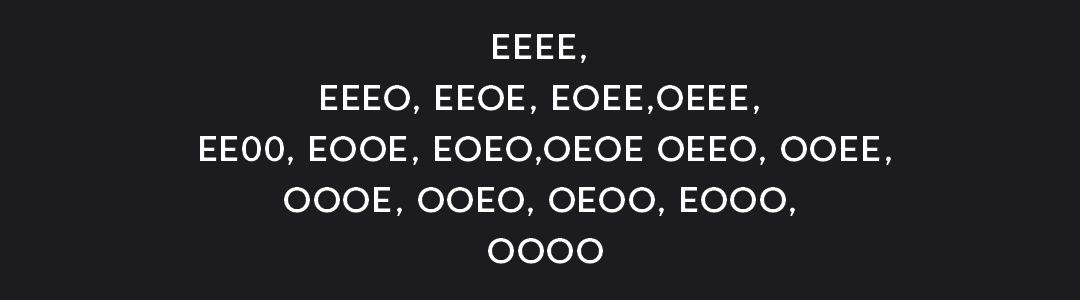

We want to find out if the average fuel expenditure this year (330.6) is different from last year (260). To answer this question, we’ll graph the sampling distribution based on the assumption that the mean fuel cost for the entire population has not changed and is still 260. In statistics, we call this lack of effect, or no change, the null hypothesis . We use the null hypothesis value as the basis of comparison for our observed sample value.

Sampling distributions and t-distributions are types of probability distributions.

Related posts : Sampling Distributions and Understanding Probability Distributions

Graphing our Sample Mean in the Context of the Sampling Distribution

The graph below shows which sample means are more likely and less likely if the population mean is 260. We can place our sample mean in this distribution. This larger context helps us see how unlikely our sample mean is if the null hypothesis is true (μ = 260).

The graph displays the estimated distribution of sample means. The most likely values are near 260 because the plot assumes that this is the true population mean. However, given random sampling error, it would not be surprising to observe sample means ranging from 167 to 352. If the population mean is still 260, our observed sample mean (330.6) isn’t the most likely value, but it’s not completely implausible either.

The Role of Hypothesis Tests

The sampling distribution shows us that we are relatively unlikely to obtain a sample mean of 330.6 if the population mean is 260. Is our sample mean so unlikely that we can reject the notion that the population mean is 260?

In statistics, we call this rejecting the null hypothesis. If we reject the null for our example, the difference between the sample mean (330.6) and 260 is statistically significant. In other words, the sample data favor the hypothesis that the population average does not equal 260.

However, look at the sampling distribution chart again. Notice that there is no special location on the curve where you can definitively draw this conclusion. There is only a consistent decrease in the likelihood of observing sample means that are farther from the null hypothesis value. Where do we decide a sample mean is far away enough?

To answer this question, we’ll need more tools—hypothesis tests! The hypothesis testing procedure quantifies the unusualness of our sample with a probability and then compares it to an evidentiary standard. This process allows you to make an objective decision about the strength of the evidence.

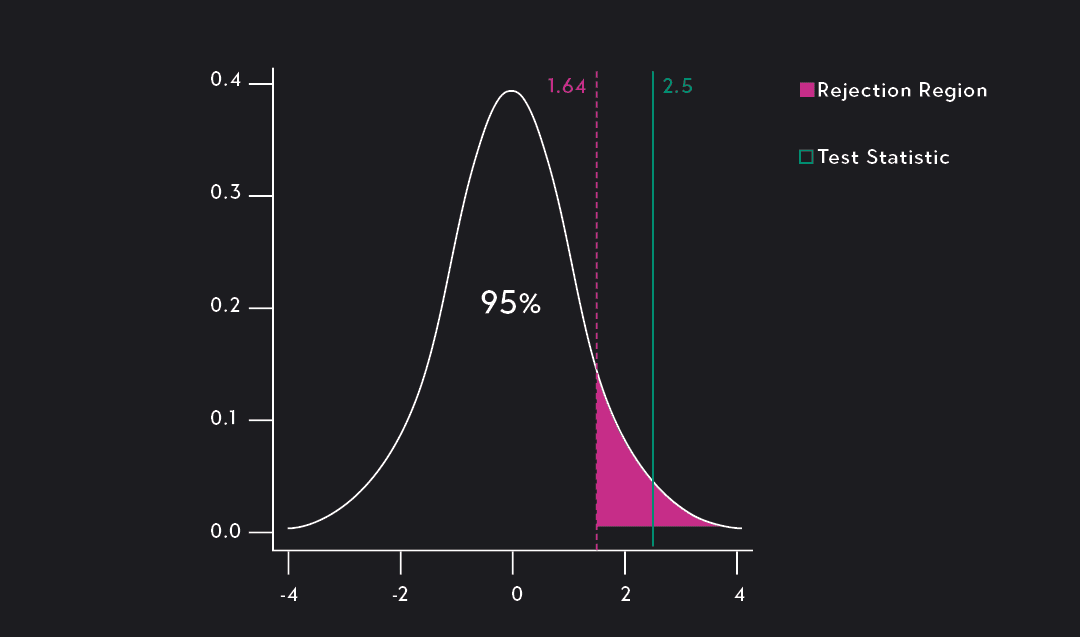

We’re going to add the tools we need to make this decision to the graph—significance levels and p-values!

These tools allow us to test these two hypotheses:

- Null hypothesis: The population mean equals the null hypothesis mean (260).

- Alternative hypothesis: The population mean does not equal the null hypothesis mean (260).

Related post : Hypothesis Testing Overview

What are Significance Levels (Alpha)?

A significance level, also known as alpha or α, is an evidentiary standard that a researcher sets before the study. It defines how strongly the sample evidence must contradict the null hypothesis before you can reject the null hypothesis for the entire population. The strength of the evidence is defined by the probability of rejecting a null hypothesis that is true. In other words, it is the probability that you say there is an effect when there is no effect.

For instance, a significance level of 0.05 signifies a 5% risk of deciding that an effect exists when it does not exist.

Lower significance levels require stronger sample evidence to be able to reject the null hypothesis. For example, to be statistically significant at the 0.01 significance level requires more substantial evidence than the 0.05 significance level. However, there is a tradeoff in hypothesis tests. Lower significance levels also reduce the power of a hypothesis test to detect a difference that does exist.

The technical nature of these types of questions can make your head spin. A picture can bring these ideas to life!

To learn a more conceptual approach to significance levels, see my post about Understanding Significance Levels .

Graphing Significance Levels as Critical Regions

On the probability distribution plot, the significance level defines how far the sample value must be from the null value before we can reject the null. The percentage of the area under the curve that is shaded equals the probability that the sample value will fall in those regions if the null hypothesis is correct.

To represent a significance level of 0.05, I’ll shade 5% of the distribution furthest from the null value.

The two shaded regions in the graph are equidistant from the central value of the null hypothesis. Each region has a probability of 0.025, which sums to our desired total of 0.05. These shaded areas are called the critical region for a two-tailed hypothesis test.

The critical region defines sample values that are improbable enough to warrant rejecting the null hypothesis. If the null hypothesis is correct and the population mean is 260, random samples (n=25) from this population have means that fall in the critical region 5% of the time.
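The "5% of the time" claim can be checked with a short simulation. This sketch assumes a normal population in which the null hypothesis really is true (mean 260) and uses a hypothetical standard deviation of 150. It draws samples of 25, computes the 1-sample t statistic for each, and counts how often it lands in the two-tailed critical region. The critical value 2.064 is the standard t-table entry for 24 degrees of freedom at a significance level of 0.05.

```python
import math
import random

random.seed(1)  # fixed seed so the run is repeatable

NULL_MEAN = 260.0   # null-hypothesis mean from the example
POP_SD = 150.0      # hypothetical population standard deviation (assumption)
N = 25              # sample size from the example
T_CRIT = 2.064      # t-table value: two-tailed, alpha = 0.05, 24 df


def t_statistic(sample, mu0):
    """1-sample t statistic: (mean - mu0) / (s / sqrt(n))."""
    n = len(sample)
    mean = sum(sample) / n
    variance = sum((x - mean) ** 2 for x in sample) / (n - 1)
    return (mean - mu0) / math.sqrt(variance / n)


trials = 10_000
rejections = 0
for _ in range(trials):
    sample = [random.gauss(NULL_MEAN, POP_SD) for _ in range(N)]
    if abs(t_statistic(sample, NULL_MEAN)) > T_CRIT:
        rejections += 1

rejection_rate = rejections / trials
print(f"share of samples in the critical region: {rejection_rate:.3f}")
```

The share should come out close to 0.05. Every one of those rejections is a false positive, because the simulated population mean never moved from 260.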

Our sample mean is statistically significant at the 0.05 level because it falls in the critical region.

Related posts : One-Tailed and Two-Tailed Tests Explained , What Are Critical Values? , and T-distribution Table of Critical Values

Comparing Significance Levels

Let’s redo this hypothesis test using the other common significance level of 0.01 to see how it compares.

This time the sum of the two shaded regions equals our new significance level of 0.01. The mean of our sample does not fall within the critical region. Consequently, we fail to reject the null hypothesis. We have the same exact sample data, the same difference between the sample mean and the null hypothesis value, but a different test result.

What happened? By specifying a lower significance level, we set a higher bar for the sample evidence. As the graph shows, lower significance levels move the critical regions further away from the null value. Consequently, lower significance levels require more extreme sample means to be statistically significant.
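Here is a tiny sketch of how the same test statistic can pass one bar and miss the other. The critical values are standard t-table entries for a two-tailed test with 24 degrees of freedom (n = 25). The t statistic of 2.3 is a hypothetical value chosen for illustration, not the one from the fuel cost data.

```python
# Two-tailed critical t-values for 24 degrees of freedom, from a t-table.
CRITICAL_T = {0.05: 2.064, 0.01: 2.797}


def is_significant(t_stat, alpha):
    """True when the statistic falls in the two-tailed critical region."""
    return abs(t_stat) > CRITICAL_T[alpha]


t_stat = 2.3  # hypothetical test statistic (assumption for illustration)
for alpha in (0.05, 0.01):
    verdict = "reject" if is_significant(t_stat, alpha) else "fail to reject"
    print(f"alpha = {alpha}: critical t = {CRITICAL_T[alpha]}, {verdict} the null")
```

Nothing about the data changes between the two lines of output. Only the bar moves.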

You must set the significance level before conducting a study. You don’t want the temptation of choosing a level after the study that yields significant results. The only reason I compared the two significance levels was to illustrate the effects and explain the differing results.

The graphical version of the 1-sample t-test we created allows us to determine statistical significance without assessing the P value. Typically, you need to compare the P value to the significance level to make this determination.