Research Methodology – Types, Examples and Writing Guide

Research Methodology

Definition:

Research methodology refers to the systematic and scientific approach used to conduct research, investigate problems, and gather data and information for a specific purpose. It involves the techniques and procedures used to identify, collect, analyze, and interpret data to answer research questions or solve research problems. It also encompasses the philosophical and theoretical frameworks that guide the research process.

Structure of Research Methodology

Research methodology formats can vary depending on the specific requirements of the research project, but the following is a basic example of a structure for a research methodology section:

I. Introduction

- Provide an overview of the research problem and the need for a research methodology section

- Outline the main research questions and objectives

II. Research Design

- Explain the research design chosen and why it is appropriate for the research question(s) and objectives

- Discuss any alternative research designs considered and why they were not chosen

- Describe the research setting and participants (if applicable)

III. Data Collection Methods

- Describe the methods used to collect data (e.g., surveys, interviews, observations)

- Explain how the data collection methods were chosen and why they are appropriate for the research question(s) and objectives

- Detail any procedures or instruments used for data collection

IV. Data Analysis Methods

- Describe the methods used to analyze the data (e.g., statistical analysis, content analysis)

- Explain how the data analysis methods were chosen and why they are appropriate for the research question(s) and objectives

- Detail any procedures or software used for data analysis

V. Ethical Considerations

- Discuss any ethical issues that may arise from the research and how they were addressed

- Explain how informed consent was obtained (if applicable)

- Detail any measures taken to ensure confidentiality and anonymity

VI. Limitations

- Identify any potential limitations of the research methodology and how they may impact the results and conclusions

VII. Conclusion

- Summarize the key aspects of the research methodology section

- Explain how the research methodology addresses the research question(s) and objectives

Research Methodology Types

Types of Research Methodology are as follows:

Quantitative Research Methodology

This is a research methodology that involves the collection and analysis of numerical data using statistical methods. This type of research is often used to study cause-and-effect relationships and to make predictions.

Qualitative Research Methodology

This is a research methodology that involves the collection and analysis of non-numerical data such as words, images, and observations. This type of research is often used to explore complex phenomena, to gain an in-depth understanding of a particular topic, and to generate hypotheses.

Mixed-Methods Research Methodology

This is a research methodology that combines elements of both quantitative and qualitative research. This approach can be particularly useful for studies that aim to explore complex phenomena and to provide a more comprehensive understanding of a particular topic.

Case Study Research Methodology

This is a research methodology that involves in-depth examination of a single case or a small number of cases. Case studies are often used in psychology, sociology, and anthropology to gain a detailed understanding of a particular individual or group.

Action Research Methodology

This is a research methodology that involves a collaborative process between researchers and practitioners to identify and solve real-world problems. Action research is often used in education, healthcare, and social work.

Experimental Research Methodology

This is a research methodology that involves the manipulation of one or more independent variables to observe their effects on a dependent variable. Experimental research is often used to study cause-and-effect relationships and to make predictions.

Survey Research Methodology

This is a research methodology that involves the collection of data from a sample of individuals using questionnaires or interviews. Survey research is often used to study attitudes, opinions, and behaviors.

Grounded Theory Research Methodology

This is a research methodology that involves the development of theories based on the data collected during the research process. Grounded theory is often used in sociology and anthropology to generate theories about social phenomena.

Research Methodology Example

An Example of Research Methodology could be the following:

Research Methodology for Investigating the Effectiveness of Cognitive Behavioral Therapy in Reducing Symptoms of Depression in Adults

Introduction:

The aim of this research is to investigate the effectiveness of cognitive-behavioral therapy (CBT) in reducing symptoms of depression in adults. To achieve this objective, a randomized controlled trial (RCT) will be conducted using a mixed-methods approach.

Research Design:

The study will follow a pre-test and post-test design with two groups: an experimental group receiving CBT and a control group receiving no intervention. The study will also include a qualitative component, in which semi-structured interviews will be conducted with a subset of participants to explore their experiences of receiving CBT.

Participants:

Participants will be recruited from community mental health clinics in the local area. The sample will consist of 100 adults aged 18 to 65 who meet the diagnostic criteria for major depressive disorder. Participants will be randomly assigned to either the experimental group or the control group.
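The random assignment described above could be carried out in many ways. The following is a minimal sketch of simple randomization into two equal arms, not the procedure of any actual trial. The participant codes, the seed value, and the function name `assign_groups` are assumptions made for illustration.

```python
import random

def assign_groups(participant_ids, seed=2024):
    """Shuffle participants and split them into two equal-sized arms."""
    rng = random.Random(seed)         # fixed seed so the allocation can be reproduced and audited
    shuffled = list(participant_ids)  # copy, so the caller's list is left untouched
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {
        "experimental": sorted(shuffled[:half]),
        "control": sorted(shuffled[half:]),
    }

# Hypothetical participant codes P001..P100
participants = [f"P{i:03d}" for i in range(1, 101)]
allocation = assign_groups(participants)
print(len(allocation["experimental"]), len(allocation["control"]))  # 50 50
```

Recording the seed alongside the allocation list is one way to let a reviewer verify that assignment did not depend on participant characteristics.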

Intervention:

The experimental group will receive 12 weekly sessions of CBT, each lasting 60 minutes. The intervention will be delivered by licensed mental health professionals who have been trained in CBT. The control group will receive no intervention during the study period.

Data Collection:

Quantitative data will be collected through the use of standardized measures such as the Beck Depression Inventory-II (BDI-II) and the Generalized Anxiety Disorder-7 (GAD-7). Data will be collected at baseline, immediately after the intervention, and at a 3-month follow-up. Qualitative data will be collected through semi-structured interviews with a subset of participants from the experimental group. The interviews will be conducted at the end of the intervention period, and will explore participants’ experiences of receiving CBT.

Data Analysis:

Quantitative data will be analyzed using descriptive statistics, t-tests, and mixed-model analyses of variance (ANOVA) to assess the effectiveness of the intervention. Qualitative data will be analyzed using thematic analysis to identify common themes and patterns in participants’ experiences of receiving CBT.
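As a rough illustration of the t-test step only, the sketch below compares BDI-II change scores between the two groups using Welch's t statistic, computed with the Python standard library. The scores are made up, the function name `welch_t` is an assumption, and a real analysis would normally rely on a statistics package and include the mixed-model ANOVA named above.

```python
from math import sqrt
from statistics import mean, variance

def welch_t(sample_a, sample_b):
    """Return Welch's t statistic and its approximate degrees of freedom."""
    n_a, n_b = len(sample_a), len(sample_b)
    # Squared standard errors, based on sample variances (n - 1 denominator)
    se_sq_a = variance(sample_a) / n_a
    se_sq_b = variance(sample_b) / n_b
    t = (mean(sample_a) - mean(sample_b)) / sqrt(se_sq_a + se_sq_b)
    # Welch-Satterthwaite approximation for the degrees of freedom
    df = (se_sq_a + se_sq_b) ** 2 / (
        se_sq_a ** 2 / (n_a - 1) + se_sq_b ** 2 / (n_b - 1)
    )
    return t, df

# Made-up BDI-II change scores (post-test minus baseline); negative means improvement
cbt_change = [-12, -9, -15, -7, -11, -10, -13, -8]
control_change = [-2, 0, -4, 1, -3, -1, -2, 0]

t_stat, dof = welch_t(cbt_change, control_change)
print(f"t = {t_stat:.2f}, df = {dof:.1f}")
```

A negative t statistic here would indicate a larger average reduction in the CBT group than in the control group. The p-value is left out because it requires the t distribution, which the standard library does not provide.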

Ethical Considerations:

This study will comply with ethical guidelines for research involving human subjects. Participants will provide informed consent before participating in the study, and their privacy and confidentiality will be protected throughout the study. Any adverse events or reactions will be reported and managed appropriately.

Data Management:

All data collected will be kept confidential and stored securely using password-protected databases. Identifying information will be removed from qualitative data transcripts to ensure participants’ anonymity.
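One small part of the de-identification step can be sketched as replacing known participant names in a transcript with study codes. The names, codes, and the function `pseudonymize` below are hypothetical. Real transcripts usually need more than name replacement, since places, dates, and employers can also identify a person.

```python
import re

def pseudonymize(transcript, name_to_code):
    """Replace each known participant name with its study code."""
    # Longest names first, so a full name is replaced before a bare first name
    for name in sorted(name_to_code, key=len, reverse=True):
        code = name_to_code[name]
        # \b keeps 'Ann' from matching inside 'Annual'
        pattern = re.compile(rf"\b{re.escape(name)}\b", flags=re.IGNORECASE)
        transcript = pattern.sub(lambda _match, code=code: code, transcript)
    return transcript

# Hypothetical key; in practice it would be stored separately from the transcripts
key = {"Maria": "P017", "Maria Lopez": "P017"}
raw = "Interviewer: Maria, how did the sessions go? Maria Lopez: Better than expected."
print(pseudonymize(raw, key))
# Interviewer: P017, how did the sessions go? P017: Better than expected.
```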

Limitations:

One potential limitation of this study is that it only focuses on one type of psychotherapy, CBT, and may not generalize to other types of therapy or interventions. Another limitation is that the study will only include participants from community mental health clinics, which may not be representative of the general population.

Conclusion:

This research aims to investigate the effectiveness of CBT in reducing symptoms of depression in adults. By using a randomized controlled trial and a mixed-methods approach, the study will provide evidence on whether CBT reduces depressive symptoms, together with participants’ own accounts of receiving the therapy. The results of this study will have important implications for the development of effective treatments for depression in clinical settings.

How to Write Research Methodology

Writing a research methodology involves explaining the methods and techniques you used to conduct research, collect data, and analyze results. It’s an essential section of any research paper or thesis, as it helps readers understand the validity and reliability of your findings. Here are the steps to write a research methodology:

- Start by explaining your research question: Begin the methodology section by restating your research question and explaining why it’s important. This helps readers understand the purpose of your research and the rationale behind your methods.

- Describe your research design: Explain the overall approach you used to conduct research. This could be a qualitative or quantitative research design, experimental or non-experimental, case study or survey, etc. Discuss the advantages and limitations of the chosen design.

- Discuss your sample: Describe the participants or subjects you included in your study. Include details such as their demographics, sampling method, sample size, and any exclusion criteria used.

- Describe your data collection methods: Explain how you collected data from your participants. This could include surveys, interviews, observations, questionnaires, or experiments. Include details on how you obtained informed consent, how you administered the tools, and how you minimized the risk of bias.

- Explain your data analysis techniques: Describe the methods you used to analyze the data you collected. This could include statistical analysis, content analysis, thematic analysis, or discourse analysis. Explain how you dealt with missing data, outliers, and any other issues that arose during the analysis.

- Discuss the validity and reliability of your research: Explain how you ensured the validity and reliability of your study. This could include measures such as triangulation, member checking, peer review, or inter-coder reliability.

- Acknowledge any limitations of your research: Discuss any limitations of your study, including any potential threats to validity or generalizability. This helps readers understand the scope of your findings and how they might apply to other contexts.

- Provide a summary: End the methodology section by summarizing the methods and techniques you used to conduct your research. This provides a clear overview of your research methodology and helps readers understand the process you followed to arrive at your findings.
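Inter-coder reliability, mentioned in the steps above, is often reported as Cohen's kappa, which corrects the raw agreement between two coders for the agreement expected by chance. The sketch below assumes two coders who each assigned one label to the same excerpts. The theme labels and data are invented for illustration.

```python
from collections import Counter

def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who labelled the same items."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must label the same non-empty set of items")
    n = len(coder_a)
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    # Agreement expected by chance, from each coder's label frequencies
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1:
        return 1.0  # both coders used one identical label throughout
    return (observed - expected) / (1 - expected)

# Hypothetical theme codes assigned to ten interview excerpts
coder_1 = ["coping", "stigma", "coping", "support", "stigma",
           "coping", "support", "support", "coping", "stigma"]
coder_2 = ["coping", "stigma", "support", "support", "stigma",
           "coping", "support", "coping", "coping", "stigma"]
print(round(cohens_kappa(coder_1, coder_2), 2))  # 0.7
```

Here the coders agree on 8 of 10 excerpts (0.80), chance agreement is 0.34, and kappa is therefore (0.80 - 0.34) / (1 - 0.34), or about 0.70.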

When to Write Research Methodology

Research methodology is typically written after the research proposal has been approved and before the actual research is conducted. It should be written prior to data collection and analysis, as it provides a clear roadmap for the research project.

The research methodology is an important section of any research paper or thesis, as it describes the methods and procedures that will be used to conduct the research. It should include details about the research design, data collection methods, data analysis techniques, and any ethical considerations.

The methodology should be written in a clear and concise manner, and it should be based on established research practices and standards. It is important to provide enough detail so that the reader can understand how the research was conducted and evaluate the validity of the results.

Applications of Research Methodology

Here are some of the applications of research methodology:

- To identify the research problem: Research methodology is used to identify the research problem, which is the first step in conducting any research.

- To design the research: Research methodology helps in designing the research by selecting the appropriate research method, research design, and sampling technique.

- To collect data: Research methodology provides a systematic approach to collect data from primary and secondary sources.

- To analyze data: Research methodology helps in analyzing the collected data using various statistical and non-statistical techniques.

- To test hypotheses: Research methodology provides a framework for testing hypotheses and drawing conclusions based on the analysis of data.

- To generalize findings: Research methodology helps in generalizing the findings of the research to the target population.

- To develop theories: Research methodology is used to develop new theories and modify existing theories based on the findings of the research.

- To evaluate programs and policies: Research methodology is used to evaluate the effectiveness of programs and policies by collecting data and analyzing it.

- To improve decision-making: Research methodology helps in making informed decisions by providing reliable and valid data.

Purpose of Research Methodology

Research methodology serves several important purposes, including:

- To guide the research process: Research methodology provides a systematic framework for conducting research. It helps researchers to plan their research, define their research questions, and select appropriate methods and techniques for collecting and analyzing data.

- To ensure research quality: Research methodology helps researchers to ensure that their research is rigorous, reliable, and valid. It provides guidelines for minimizing bias and error in data collection and analysis, and for ensuring that research findings are accurate and trustworthy.

- To replicate research: Research methodology provides a clear and detailed account of the research process, making it possible for other researchers to replicate the study and verify its findings.

- To advance knowledge: Research methodology enables researchers to generate new knowledge and to contribute to the body of knowledge in their field. It provides a means for testing hypotheses, exploring new ideas, and discovering new insights.

- To inform decision-making: Research methodology provides evidence-based information that can inform policy and decision-making in a variety of fields, including medicine, public health, education, and business.

Advantages of Research Methodology

Research methodology has several advantages that make it a valuable tool for conducting research in various fields. Here are some of the key advantages of research methodology:

- Systematic and structured approach: Research methodology provides a systematic and structured approach to conducting research, which ensures that the research is conducted in a rigorous and comprehensive manner.

- Objectivity: Research methodology aims to ensure objectivity in the research process, which means that the research findings are based on evidence and not influenced by personal bias or subjective opinions.

- Replicability: Research methodology ensures that research can be replicated by other researchers, which is essential for validating research findings and ensuring their accuracy.

- Reliability: Research methodology aims to ensure that the research findings are reliable, which means that they are consistent and can be depended upon.

- Validity: Research methodology ensures that the research findings are valid, which means that they accurately reflect the research question or hypothesis being tested.

- Efficiency: Research methodology provides a structured and efficient way of conducting research, which helps to save time and resources.

- Flexibility: Research methodology allows researchers to choose the most appropriate research methods and techniques based on the research question, data availability, and other relevant factors.

- Scope for innovation: Research methodology provides scope for innovation and creativity in designing research studies and developing new research techniques.

Research Methodology Vs Research Methods

| Research Methodology | Research Methods |
|---|---|
| Research methodology refers to the philosophical and theoretical frameworks that guide the research process. | Research methods refer to the techniques and procedures used to collect and analyze data. |
| It is concerned with the underlying principles and assumptions of research. | They are concerned with the practical aspects of research. |
| It provides a rationale for why certain research methods are used. | They determine the specific steps that will be taken to conduct research. |
| It is broader in scope and involves understanding the overall approach to research. | They are narrower in scope and focus on specific techniques and tools used in research. |
| It is concerned with identifying research questions, defining the research problem, and formulating hypotheses. | They are concerned with collecting data, analyzing data, and interpreting results. |
| It is concerned with the validity and reliability of research. | They are concerned with the accuracy and precision of data. |
| It is concerned with the ethical considerations of research. | They are concerned with the practical considerations of research. |

About the author

Muhammad Hassan

Researcher, Academic Writer, Web developer

- USC Libraries

- Research Guides

Organizing Your Social Sciences Research Paper

- 6. The Methodology

The methods section describes actions taken to investigate a research problem and the rationale for the application of specific procedures or techniques used to identify, select, process, and analyze information applied to understanding the problem, thereby allowing the reader to critically evaluate a study’s overall validity and reliability. The methodology section of a research paper answers two main questions: How was the data collected or generated? And how was it analyzed? The writing should be direct and precise and always written in the past tense.

Kallet, Richard H. "How to Write the Methods Section of a Research Paper." Respiratory Care 49 (October 2004): 1229-1232.

Importance of a Good Methodology Section

You must explain how you obtained and analyzed your results for the following reasons:

- Readers need to know how the data was obtained because the method you chose affects the results and, by extension, how you interpreted their significance in the discussion section of your paper.

- Methodology is crucial for any branch of scholarship because an unreliable method produces unreliable results and, as a consequence, undermines the value of your analysis of the findings.

- In most cases, there are a variety of different methods you can choose to investigate a research problem. The methodology section of your paper should clearly articulate the reasons why you have chosen a particular procedure or technique.

- The reader wants to know that the data was collected or generated in a way that is consistent with accepted practice in the field of study. For example, if you are using a multiple choice questionnaire, readers need to know that it offered your respondents a reasonable range of answers to choose from.

- The method must be appropriate to fulfilling the overall aims of the study. For example, you need to ensure that you have a large enough sample size to be able to generalize and make recommendations based upon the findings.

- The methodology should discuss the problems that were anticipated and the steps you took to prevent them from occurring. For any problems that do arise, you must describe the ways in which they were minimized or why these problems do not impact in any meaningful way your interpretation of the findings.

- In the social and behavioral sciences, it is important to always provide sufficient information to allow other researchers to adopt or replicate your methodology. This information is particularly important when a new method has been developed or an innovative use of an existing method is utilized.
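The point above about having a large enough sample can be made concrete with a common textbook formula for estimating a proportion from a simple random sample of a large population: n = z² · p(1 − p) / e². The sketch below is one illustration under those assumptions, not a general rule for every design. The function name and default values are chosen for the example.

```python
from math import ceil
from statistics import NormalDist

def sample_size_for_proportion(margin_of_error, confidence=0.95, expected_proportion=0.5):
    """Minimum simple-random-sample size for estimating a proportion (large population)."""
    # Two-sided critical value, about 1.96 for 95% confidence
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    p = expected_proportion  # 0.5 is the most conservative choice
    return ceil(z ** 2 * p * (1 - p) / margin_of_error ** 2)

print(sample_size_for_proportion(0.05))  # 385
```

Tightening the margin of error from 5 to 3 percentage points raises the required sample from 385 to 1,068, which shows why the target precision should be settled before data collection begins.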

Bem, Daryl J. Writing the Empirical Journal Article. Psychology Writing Center. University of Washington; Denscombe, Martyn. The Good Research Guide: For Small-Scale Social Research Projects. 5th edition. Buckingham, UK: Open University Press, 2014; Lunenburg, Frederick C. Writing a Successful Thesis or Dissertation: Tips and Strategies for Students in the Social and Behavioral Sciences. Thousand Oaks, CA: Corwin Press, 2008.

Structure and Writing Style

I. Groups of Research Methods

There are two main groups of research methods in the social sciences:

- The empirical-analytical group approaches the study of social sciences in a manner similar to how researchers study the natural sciences. This type of research focuses on objective knowledge, research questions that can be answered yes or no, and operational definitions of variables to be measured. The empirical-analytical group employs deductive reasoning that uses existing theory as a foundation for formulating hypotheses that need to be tested. This approach is focused on explanation.

- The interpretative group of methods is focused on understanding phenomena in a comprehensive, holistic way. Interpretive methods focus on analytically disclosing the meaning-making practices of human subjects [the why, how, or by what means people do what they do], while showing how those practices are arranged so that they can be used to generate observable outcomes. Interpretive methods allow you to recognize your connection to the phenomena under investigation. However, the interpretative group requires careful examination of variables because it focuses more on subjective knowledge.

II. Content

The introduction to your methodology section should begin by restating the research problem and the assumptions underpinning your study. This is followed by situating the methods you used to gather, analyze, and process information within the overall “tradition” of your field of study and within the particular research design you have chosen to study the problem. If the method you choose lies outside of the tradition of your field [i.e., your review of the literature demonstrates that the method is not commonly used], provide a justification for how your choice of methods specifically addresses the research problem in ways that have not been utilized in prior studies.

The remainder of your methodology section should describe the following:

- Decisions made in selecting the data you have analyzed or, in the case of qualitative research, the subjects and research setting you have examined,

- Tools and methods used to identify and collect information, and how you identified relevant variables,

- The ways in which you processed the data and the procedures you used to analyze that data, and

- The specific research tools or strategies that you utilized to study the underlying hypothesis and research questions.

In addition, an effectively written methodology section should:

- Introduce the overall methodological approach for investigating your research problem. Is your study qualitative or quantitative or a combination of both (mixed method)? Are you going to take a special approach, such as action research, or a more neutral stance?

- Indicate how the approach fits the overall research design. Your methods for gathering data should have a clear connection to your research problem. In other words, make sure that your methods will actually address the problem. One of the most common deficiencies found in research papers is that the proposed methodology is not suited to achieving the stated objective of the paper.

- Describe the specific methods of data collection you are going to use, such as surveys, interviews, questionnaires, observation, or archival research. If you are analyzing existing data, such as a data set or archival documents, describe how it was originally created or gathered and by whom. Also be sure to explain how older data is still relevant to investigating the current research problem.

- Explain how you intend to analyze your results. Will you use statistical analysis? Will you use specific theoretical perspectives to help you analyze a text or explain observed behaviors? Describe how you plan to obtain an accurate assessment of relationships, patterns, trends, distributions, and possible contradictions found in the data.

- Provide background and a rationale for methodologies that are unfamiliar to your readers. Very often in the social sciences, research problems and the methods for investigating them require more explanation/rationale than widely accepted rules governing the natural and physical sciences. Be clear and concise in your explanation.

- Provide a justification for subject selection and sampling procedure. For instance, if you propose to conduct interviews, how do you intend to select the sample population? If you are analyzing texts, which texts have you chosen, and why? If you are using statistics, why is this set of data being used? If other data sources exist, explain why the data you chose is most appropriate to addressing the research problem.

- Provide a justification for case study selection. A common method of analyzing research problems in the social sciences is to analyze specific cases. These can be a person, place, event, phenomenon, or other type of subject of analysis that is either examined as a singular topic of in-depth investigation or as one of multiple topics of investigation studied for the purpose of comparing or contrasting findings. In either method, you should explain why a case or cases were chosen and how they specifically relate to the research problem.

- Describe potential limitations. Are there any practical limitations that could affect your data collection? How will you attempt to control for potential confounding variables and errors? If your methodology may lead to problems you can anticipate, state this openly and show why pursuing this methodology outweighs the risk of these problems cropping up.
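When justifying a sampling procedure, as the list above asks, it can help to show exactly how units were drawn. The sketch below illustrates proportional stratified random sampling with the Python standard library. The sampling frame, the strata, the sampling fraction, and the function name `stratified_sample` are all assumptions made for the example.

```python
import random
from collections import defaultdict

def stratified_sample(population, stratum_of, fraction, seed=7):
    """Draw the same fraction at random from every stratum."""
    rng = random.Random(seed)  # fixed seed so the draw can be reproduced
    strata = defaultdict(list)
    for unit in population:
        strata[stratum_of(unit)].append(unit)
    sample = []
    for members in strata.values():
        k = max(1, round(len(members) * fraction))  # at least one unit per stratum
        sample.extend(rng.sample(members, k))
    return sample

# Hypothetical sampling frame of (student_id, level): 80 undergraduates, 20 graduates
frame = [(i, "undergraduate" if i <= 80 else "graduate") for i in range(1, 101)]
chosen = stratified_sample(frame, stratum_of=lambda unit: unit[1], fraction=0.1)
print(len(chosen))  # 10 (8 undergraduates and 2 graduates)
```

Sampling each stratum separately keeps small groups represented in the sample, which a single simple random draw does not guarantee.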

NOTE: Once you have written all of the elements of the methods section, subsequent revisions should focus on how to present those elements as clearly and as logically as possible. The description of how you prepared to study the research problem, how you gathered the data, and the protocol for analyzing the data should be organized chronologically. For clarity, when a large amount of detail must be presented, information should be presented in sub-sections according to topic. If necessary, consider using appendices for raw data.

ANOTHER NOTE: If you are conducting a qualitative analysis of a research problem, the methodology section generally requires a more elaborate description of the methods used, as well as an explanation of the processes applied to gathering and analyzing data, than is generally required for studies using quantitative methods. Because you are the primary instrument for generating the data [e.g., through interviews or observations], the process for collecting that data has a significantly greater impact on producing the findings. Therefore, qualitative research requires a more detailed description of the methods used.

YET ANOTHER NOTE: If your study involves interviews, observations, or other qualitative techniques involving human subjects, you may be required to obtain approval from the university's Office for the Protection of Research Subjects before beginning your research. This is not a common procedure for most undergraduate level student research assignments. However, if your professor states you need approval, you must include a statement in your methods section that you received official endorsement and adequate informed consent from the office and that there was a clear assessment and minimization of risks to participants and to the university. This statement informs the reader that your study was conducted in an ethical and responsible manner. In some cases, the approval notice is included as an appendix to your paper.

III. Problems to Avoid

Irrelevant Detail: The methodology section of your paper should be thorough but concise. Do not provide any background information that does not directly help the reader understand why a particular method was chosen, how the data was gathered or obtained, and how the data was analyzed in relation to the research problem [note: analyzed, not interpreted! Save how you interpreted the findings for the discussion section]. With this in mind, the page length of your methods section will generally be less than any other section of your paper except the conclusion.

Unnecessary Explanation of Basic Procedures: Remember that you are not writing a how-to guide about a particular method. You should make the assumption that readers possess a basic understanding of how to investigate the research problem on their own and, therefore, you do not have to go into great detail about specific methodological procedures. The focus should be on how you applied a method, not on the mechanics of doing a method. An exception to this rule is if you select an unconventional methodological approach; if this is the case, be sure to explain why this approach was chosen and how it enhances the overall process of discovery.

Problem Blindness: It is almost a given that you will encounter problems when collecting or generating your data, or that gaps will exist in existing data or archival materials. Do not ignore these problems or pretend they did not occur. Often, documenting how you overcame obstacles can form an interesting part of the methodology. It demonstrates to the reader that you can provide a cogent rationale for the decisions you made to minimize the impact of any problems that arose.

Literature Review: Just as the literature review section of your paper provides an overview of sources you have examined while researching a particular topic, the methodology section should cite any sources that informed your choice and application of a particular method [i.e., the choice of a survey should include any citations to the works you used to help construct the survey].

It’s More than Sources of Information! A description of a research study's method should not be confused with a description of the sources of information. Such a list of sources is useful in and of itself, especially if it is accompanied by an explanation about the selection and use of the sources. The description of the project's methodology complements a list of sources in that it sets forth the organization and interpretation of information emanating from those sources.

Azevedo, L.F. et al. "How to Write a Scientific Paper: Writing the Methods Section." Revista Portuguesa de Pneumologia 17 (2011): 232-238; Blair, Lorrie. “Choosing a Methodology.” In Writing a Graduate Thesis or Dissertation, Teaching Writing Series. (Rotterdam: Sense Publishers, 2016), pp. 49-72; Butin, Dan W. The Education Dissertation: A Guide for Practitioner Scholars. Thousand Oaks, CA: Corwin, 2010; Carter, Susan. Structuring Your Research Thesis. New York: Palgrave Macmillan, 2012; Kallet, Richard H. “How to Write the Methods Section of a Research Paper.” Respiratory Care 49 (October 2004): 1229-1232; Lunenburg, Frederick C. Writing a Successful Thesis or Dissertation: Tips and Strategies for Students in the Social and Behavioral Sciences. Thousand Oaks, CA: Corwin Press, 2008; Methods Section. The Writer’s Handbook. Writing Center. University of Wisconsin, Madison; Rudestam, Kjell Erik and Rae R. Newton. “The Method Chapter: Describing Your Research Plan.” In Surviving Your Dissertation: A Comprehensive Guide to Content and Process. (Thousand Oaks, CA: Sage Publications, 2015), pp. 87-115; What is Interpretive Research. Institute of Public and International Affairs, University of Utah; Writing the Experimental Report: Methods, Results, and Discussion. The Writing Lab and The OWL. Purdue University; Methods and Materials. The Structure, Format, Content, and Style of a Journal-Style Scientific Paper. Department of Biology. Bates College.

Writing Tip

Statistical Designs and Tests? Do Not Fear Them!

Don't avoid using a quantitative approach to analyzing your research problem just because you fear the idea of applying statistical designs and tests. A qualitative approach, such as conducting interviews or content analysis of archival texts, can yield exciting new insights about a research problem, but it should not be undertaken simply because you have a disdain for running a simple regression. A well-designed quantitative research study can often be accomplished in very clear and direct ways, whereas a similar study of a qualitative nature usually requires considerable time to analyze large volumes of data and carries the tremendous burden of creating new paths for analysis where no path associated with your research problem previously existed.

Another Writing Tip

Knowing the Relationship Between Theories and Methods

There can be multiple meanings associated with the term "theories" and the term "methods" in social sciences research. A helpful way to delineate between them is to understand "theories" as representing different ways of characterizing the social world when you research it and "methods" as representing different ways of generating and analyzing data about that social world. Framed in this way, all empirical social sciences research involves theories and methods, whether they are stated explicitly or not. However, while theories and methods are often related, it is important that, as a researcher, you deliberately separate them in order to avoid your theories playing a disproportionate role in shaping what outcomes your chosen methods produce.

Introspectively engage in an ongoing dialectic between the application of theories and methods to help enable you to use the outcomes from your methods to interrogate and develop new theories, or ways of framing conceptually the research problem. This is how scholarship grows and branches out into new intellectual territory.

Reynolds, R. Larry. Ways of Knowing. Alternative Microeconomics . Part 1, Chapter 3. Boise State University; The Theory-Method Relationship. S-Cool Revision. United Kingdom.

Yet Another Writing Tip

Methods and the Methodology

Do not confuse the terms "methods" and "methodology." As Schneider notes, a method refers to the technical steps taken to do research. Descriptions of methods usually include defining and stating why you have chosen specific techniques to investigate a research problem, followed by an outline of the procedures you used to systematically select, gather, and process the data [remember to always save the interpretation of data for the discussion section of your paper].

The methodology refers to a discussion of the underlying reasoning why particular methods were used. This discussion includes describing the theoretical concepts that inform the choice of methods to be applied, placing the choice of methods within the more general nature of academic work, and reviewing its relevance to examining the research problem. The methodology section also includes a thorough review of the methods other scholars have used to study the topic.

Bryman, Alan. "Of Methods and Methodology." Qualitative Research in Organizations and Management: An International Journal 3 (2008): 159-168; Schneider, Florian. “What's in a Methodology: The Difference between Method, Methodology, and Theory…and How to Get the Balance Right?” PoliticsEastAsia.com. Chinese Department, University of Leiden, Netherlands.

- Last Updated: Sep 4, 2024 9:40 AM

- URL: https://libguides.usc.edu/writingguide

Research Methodology Guide: Writing Tips, Types, & Examples

No dissertation or research paper is complete without the research methodology section. Since this is the chapter where you explain how you carried out your research, this is where all the meat is! Here’s where you clearly lay out the steps you have taken to test your hypothesis or research problem.

Through this blog, we’ll unravel the complexities and meaning of research methodology in academic writing, from its fundamental principles and ethics to the diverse types of research methodology in use today. Alongside offering research methodology examples, we aim to guide you on how to write a research methodology section, ensuring your research endeavors are both impactful and impeccably grounded!

Let’s first take a closer look at a simple research methodology definition:

Defining research methodology

Research methodology is the set of procedures and techniques used to collect, analyze, and interpret data to understand and solve a research problem. Methodology in research not only includes the design and methods but also the basic principles that guide the choice of specific methods.

Grasping the concept of methodology in research is essential for students and scholars, as it demonstrates the thorough and structured method used to explore a hypothesis or research question. Understanding the definition of methodology in research aids in identifying the methods used to collect data. Whatever type of research method you use, adhering to the proper research paper format is crucial.

Now let’s explore some research methodology types:

Types of research methodology

1. Qualitative research methodology

Qualitative research methodology is aimed at understanding concepts, thoughts, or experiences. This approach is descriptive and is often utilized to gather in-depth insights into people’s attitudes, behaviors, or cultures. Qualitative research methodology involves methods like interviews, focus groups, and observation. The strength of this methodology lies in its ability to provide contextual richness.

2. Quantitative research methodology

Quantitative research methodology, on the other hand, is focused on quantifying the problem by generating numerical data or data that can be transformed into usable statistics. It uses measurable data to formulate facts and uncover patterns in research. Quantitative research methodology typically involves surveys, experiments, or statistical analysis. This methodology is appreciated for its ability to produce objective results that are generalizable to a larger population.

3. Mixed-Methods research methodology

Mixed-methods research combines both qualitative and quantitative research methodologies to provide a more comprehensive understanding of the research problem. This approach leverages the strengths of both methodologies to provide a deeper insight into the research question of a research paper.

Research methodology vs. research methods

The research methodology or design is the overall strategy and rationale that you used to carry out the research, whereas research methods are the specific tools and processes you use to gather and understand the data you need to test your hypothesis.

Research methodology examples and application

To further understand research methodology, let’s explore some examples of research methodology:

a. Qualitative research methodology example: A study exploring the impact of author branding on author popularity might utilize in-depth interviews to gather personal experiences and perspectives.

b. Quantitative research methodology example: A research project investigating the effects of a book promotion technique on book sales could employ a statistical analysis of profit margins and sales before and after the implementation of the method.

c. Mixed-Methods research methodology example: A study examining the relationship between social media use and academic performance might combine both qualitative and quantitative approaches. It could include surveys to quantitatively assess the frequency of social media usage and its correlation with grades, alongside focus groups or interviews to qualitatively explore students’ perceptions and experiences regarding how social media affects their study habits and academic engagement.

These examples highlight the meaning of methodology in research and how it guides the research process, from data collection to analysis, ensuring the study’s objectives are met efficiently.
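As a rough illustration of the quantitative example above (comparing sales before and after a promotion), the comparison could be sketched as a paired t statistic. This is a minimal sketch under stated assumptions, not a prescribed analysis: the function name `paired_t_statistic`, the variable names, and the sales figures are all hypothetical, and a real study would also report a p-value and check the test's assumptions.

```python
import math
import statistics


def paired_t_statistic(before, after):
    """Return (t statistic, degrees of freedom) for matched before/after samples."""
    if len(before) != len(after):
        raise ValueError("before and after must have the same length")
    if len(before) < 2:
        raise ValueError("need at least two pairs")
    # Work on the per-item differences (after minus before).
    diffs = [a - b for b, a in zip(before, after)]
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation
    if sd_diff == 0:
        raise ValueError("differences have zero variance; t is undefined")
    t_stat = mean_diff / (sd_diff / math.sqrt(len(diffs)))
    return t_stat, len(diffs) - 1


# Hypothetical weekly unit sales for four titles (illustrative numbers only).
sales_before = [10, 12, 9, 11]
sales_after = [12, 15, 10, 14]

t_stat, dof = paired_t_statistic(sales_before, sales_after)
print(f"t = {t_stat:.2f} with {dof} degrees of freedom")
```

A paired design is assumed here because the same titles are measured twice; if different titles were sampled before and after, an independent-samples test would be the more appropriate choice.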

Importance of methodology in research papers

When it comes to writing your study, the methodology in research papers or a dissertation plays a pivotal role. A well-crafted methodology section of a research paper or thesis not only enhances the credibility of your research but also provides a roadmap for others to replicate or build upon your work.

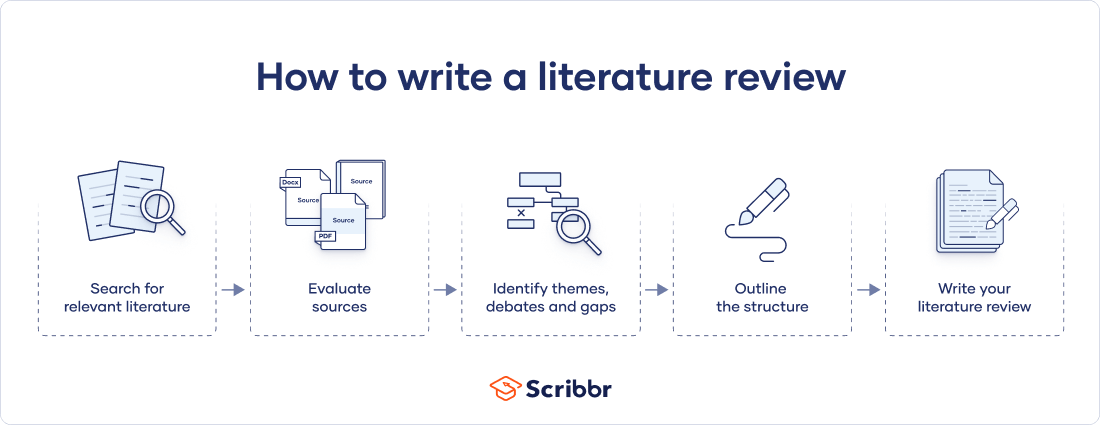

How to structure the research methods chapter

Wondering how to write the research methodology section? Follow these steps to create a strong methods chapter:

Step 1: Explain your research methodology

At the start of a research paper, you would have provided the background of your research and stated your hypothesis or research problem. In this section, you will elaborate on your research strategy.

Begin by restating your research question and proceed to explain what type of research you opted for to test it. Depending on your research, here are some questions you can consider:

a. Did you use qualitative or quantitative data to test the hypothesis?

b. Did you perform an experiment where you collected data or are you writing a dissertation that is descriptive/theoretical without data collection?

c. Did you use primary data that you collected or analyze secondary research data or existing data as part of your study?

These questions will help you establish the rationale for your study on a broader level, which you will follow by elaborating on the specific methods you used to collect and understand your data.

Step 2: Explain the methods you used to test your hypothesis

Now that you have told your reader what type of research you’ve undertaken for the dissertation, it’s time to dig into specifics. State what specific methods you used and explain the conditions and variables involved. Explain what the theoretical framework behind the method was, what samples you used for testing it, and what tools and materials you used to collect the data.

Step 3: Explain how you analyzed the results

Once you have explained the data collection process, explain how you analyzed and studied the data. Here, your focus is simply to explain the methods of analysis rather than the results of the study.

Here are some questions you can answer at this stage:

a. What tools or software did you use to analyze your results?

b. What parameters or variables did you consider while understanding and studying the data you’ve collected?

c. Was your analysis based on a theoretical framework?

Your mode of analysis will change depending on whether you used a quantitative or qualitative research methodology in your study. If you’re working within the hard sciences or physical sciences, you are likely to use a quantitative research methodology (relying on numbers and hard data). If you’re doing a qualitative study, in the social sciences or humanities, your analysis may rely on understanding language and socio-political contexts around your topic. This is why it’s important to establish what kind of study you’re undertaking at the outset.

Step 4: Defend your choice of methodology

Now that you have gone through your research process in detail, you’ll also have to make a case for it. Justify your choice of methodology and methods, explaining why it is the best choice for your research question. This is especially important if you have chosen an unconventional approach or you’ve simply chosen to study an existing research problem from a different perspective. Compare it with other methodologies, especially ones attempted by previous researchers, and discuss what contributions using your methodology makes.

Step 5: Discuss the obstacles you encountered and how you overcame them

No matter how thorough a methodology is, it doesn’t come without its hurdles. This is a natural part of scientific research that is important to document so that your peers and future researchers are aware of it. Writing in a research paper about this aspect of your research process also tells your evaluator that you have actively worked to overcome the pitfalls that came your way and you have refined the research process.

Tips to write an effective methodology chapter

1. Remember who you are writing for. Keeping sight of the reader/evaluator will help you know what to elaborate on and what information they are already likely to have. You’re condensing months’ worth of research into just a few pages, so you should omit basic definitions and information about general phenomena people already know.

2. Do not give an overly elaborate explanation of every single condition in your study.

3. Skip details and findings irrelevant to the results.

4. Cite references that back your claim and choice of methodology.

5. Consistently emphasize the relationship between your research question and the methodology you adopted to study it.

To sum it up, what is methodology in research? It’s the blueprint of your research, essential for ensuring that your study is systematic, rigorous, and credible. Whether your focus is on qualitative research methodology, quantitative research methodology, or a combination of both, understanding and clearly defining your methodology is key to the success of your research.

How to Write the Methods Section of a Research Paper

Writing a research paper is both an art and a skill, and knowing how to write the methods section of a research paper is the first crucial step in mastering scientific writing. If, like the majority of early-career researchers, you believe that the methods section is the simplest to write and needs little in the way of careful consideration or thought, this article will help you understand that it is not [1].

We have all probably asked our supervisors, coworkers, or search engines “how to write a methods section of a research paper” at some point in our scientific careers, so you are not alone if that’s how you ended up here. Even for seasoned researchers, selecting what to include in the methods section from a wealth of experimental information can occasionally be a source of distress and perplexity.

Additionally, journal specifications may, in some cases, make it a requirement rather than a choice to provide a selective yet descriptive account of the experimental procedure. Hence, knowing these nuances of how to write the methods section of a research paper is critical to its success. The methods section of the research paper is not supposed to be the detail-heavy, dull section that some researchers tend to write; rather, it should be the central component of the study that justifies the validity and reliability of the research.

Are you still unsure of how the methods section of a research paper forms the basis of every investigation? Consider the last article you read, but ignore the methods section and concentrate on the other parts of the paper. Now ask yourself whether you could repeat the study and be sure of the credibility of the findings, despite knowing the literature review and even having the data in front of you. You have the answer!

Having established the importance of the methods section, the next question is how to write the methods section of a research paper that unifies the overall study. The purpose of the methods section, which was earlier called Materials and Methods, is to describe how the authors went about answering the “research question” at hand. Here, the objective is to tell a coherent story that gives a detailed account of how the study was conducted, the rationale behind specific experimental procedures, the experimental setup, objects (variables) involved, the research protocol employed, tools utilized to measure, calculations and measurements, and the analysis of the collected data [2].

In this article, we will take a deep dive into this topic and provide a detailed overview of how to write the methods section of a research paper. For the sake of clarity, we have separated the subject into various sections with corresponding subheadings.

What is the methods section of a research paper?

The methods section is a fundamental section of any paper since it typically discusses the ‘what’, ‘how’, ‘which’, and ‘why’ of the study, which is necessary to arrive at the final conclusions. In a research article, the introduction, which serves to set the foundation for comprehending the background and results, is usually followed by the methods section, which precedes the results and discussion sections. The methods section must explicitly state what was done, how it was done, which equipment, tools, and techniques were utilized, how the measurements/calculations were taken, and why specific research protocols, software, and analytical methods were employed.

Why is the methods section important?

The primary goal of the methods section is to provide pertinent details about the experimental approach so that the reader may put the results in perspective and, if necessary, replicate the findings [3]. This section offers readers the chance to evaluate the reliability and validity of any study. In short, it also serves as the study’s blueprint, assisting researchers who might be unsure about any other portion in establishing the study’s context and validity. The methods section plays a crucial role in determining the fate of the article; an incomplete and unreliable methods section can frequently result in early rejections and may lead to numerous rounds of modifications during the publication process. This means that reviewers also often use the methods section to assess the reliability and validity of the research protocol and the data analysis employed to address the research topic. In other words, the purpose of the methods section is to demonstrate the research acumen and subject-matter expertise of the author(s) in their field.

Structure of methods section of a research paper

Similar to the research paper, the methods section also follows a defined structure; this may be dictated by the guidelines of a specific journal or can be presented in a chronological or thematic manner based on the study type. When writing the methods section, authors should keep in mind that they are telling a story about how the research was conducted. They should only report relevant information to avoid confusing the reader and include details that would aid in connecting various aspects of the entire research activity together. It is generally advisable to present experiments in the order in which they were conducted. This facilitates the logical flow of the research and allows readers to follow the progression of the study design.

It is also essential to clearly state the rationale behind each experiment and how the findings of earlier experiments informed the design or interpretation of later experiments. This allows the readers to understand the overall purpose of the study design and the significance of each experiment within that context. However, depending on the particular research question and method, it may make sense to present information in a different order; therefore, authors must select the best structure and strategy for their individual studies.

In cases where there is a lot of information, divide the sections into subheadings to cover the pertinent details. If the journal guidelines pose restrictions on the word limit, additional important information can be supplied in the supplementary files. A simple rule of thumb for sectioning the method section is to begin by explaining the methodological approach (what was done), describing the data collection methods (how it was done), providing the analysis method (how the data was analyzed), and explaining the rationale for choosing the methodological strategy. This is described in detail in the upcoming sections.

How to write the methods section of a research paper

Contrary to widespread assumption, the methods section of a research paper should be prepared once the study is complete to prevent missing any key parameter. Hence, please make sure that all relevant experiments are done before you start writing a methods section. The next step for authors is to look up any applicable academic style manuals or journal-specific standards to ensure that the methods section is formatted correctly. The methods section of a research paper typically comprises materials and methods; while writing this section, authors usually arrange the information under each category.

The materials category describes the samples, materials, treatments, and instruments, while experimental design, sample preparation, data collection, and data analysis are a part of the method category. According to the nature of the study, authors should include additional subsections within the methods section, such as ethical considerations like the Declaration of Helsinki (for studies involving human subjects), demographic information of the participants, and any other crucial information that can affect the output of the study. Simply put, the methods section has two major components: content and format. Here is an easy checklist for you to consider if you are struggling with how to write the methods section of a research paper.

- Explain the research design, subjects, and sample details

- Include information on inclusion and exclusion criteria

- Mention ethical or any other permission required for the study

- Include information about materials, experimental setup, tools, and software

- Add details of data collection and analysis methods

- Incorporate how research biases were avoided or confounding variables were controlled

- Evaluate and justify the experimental procedure selected to address the research question

- Provide precise and clear details of each experiment

- Flowcharts, infographics, or tables can be used to present complex information

- Use past tense to show that the experiments have been done

- Follow academic style guides (such as APA or MLA) to structure the content

- Citations should be included as per standard protocols in the field

Now that you know how to write the methods section of a research paper, let’s address another challenge researchers face while writing the methods section: what to include in it. When it comes to including data in the methods section of a paper, it is not always obvious how much information is too much. In the next section, we examine this issue and explore potential solutions.

What to include in the methods section of a research paper

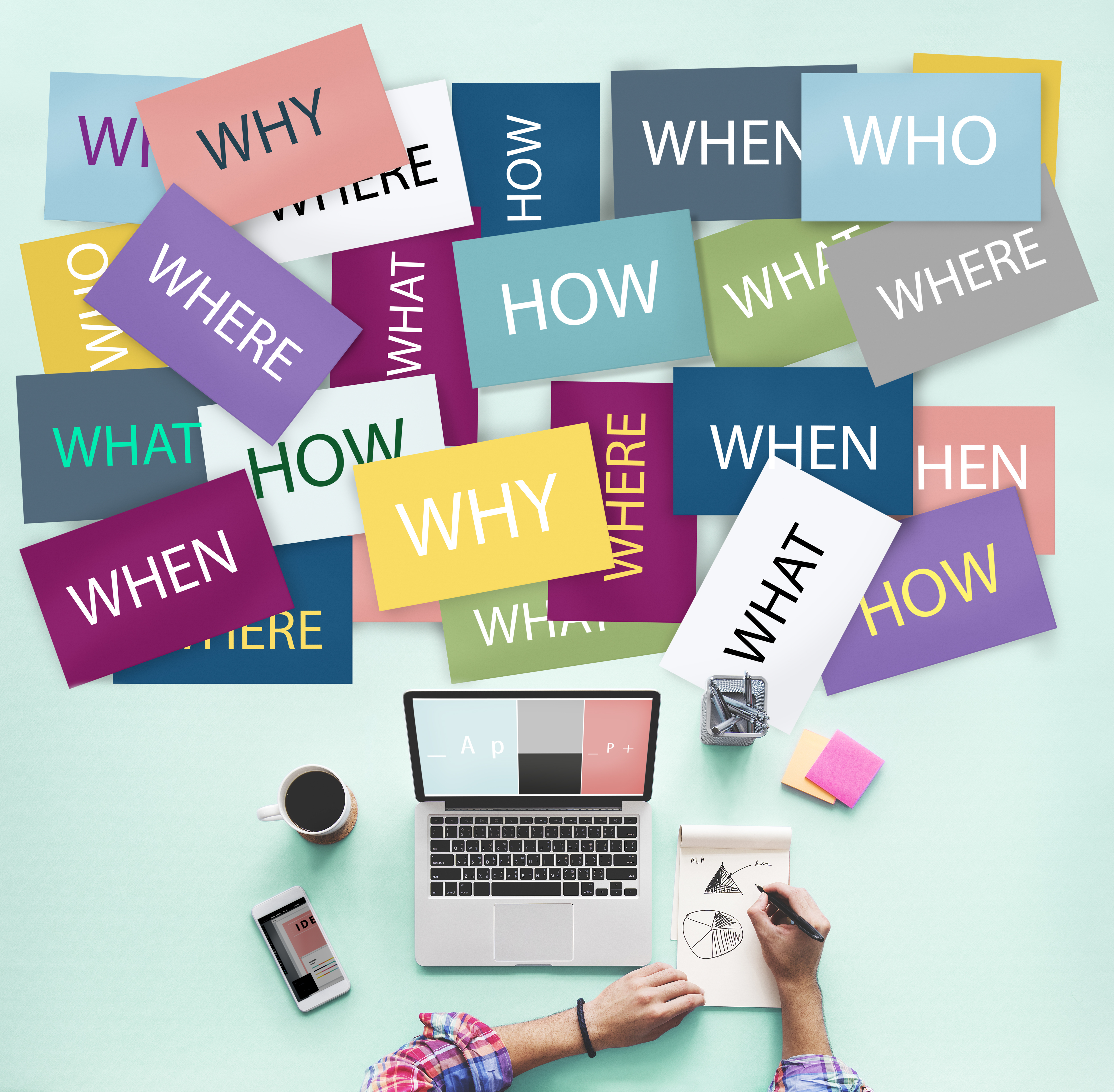

The technical nature of the methods section occasionally makes it harder to present the information clearly and concisely while staying within the study context. Many young researchers tend to veer off subject significantly, and they frequently commit the sin of becoming bogged down in itty-bitty details, making the text harder to read and impairing its overall flow. However, the best way to write the methods section is to start with the crucial components of the experiments. If you have trouble deciding which elements are essential, ask whether leaving an element out would make it more challenging to comprehend the context or replicate the results; if it would, the element is essential. This top-down approach helps to ensure all relevant information is incorporated and vital information is not lost in technicalities. Next, remember to add details that are significant to assess the validity and reliability of the study. Here is a simple checklist for you to follow (bonus tip: you can also make a checklist for your own study to avoid missing any critical information while writing the methods section).

- Structuring the methods section: Authors should diligently follow journal guidelines and adhere to the specific author instructions provided when writing the methods section. Journals typically have specific guidelines for formatting the methods section; for example, Frontiers in Plant Science advises arranging the materials and methods section by subheading and citing relevant literature. There are several standardized checklists available for different study types in the biomedical field, including CONSORT (Consolidated Standards of Reporting Trials) for randomized clinical trials, PRISMA (Preferred Reporting Items for Systematic reviews and Meta-Analyses) for systematic reviews and meta-analyses, and STROBE (STrengthening the Reporting of OBservational studies in Epidemiology) for cohort, case-control, and cross-sectional studies. Before starting the methods section, look for a checklist in your field that can function as a guide.

- Organizing different sections to tell a story: Once you are sure of the format required for structuring the methods section, the next step is to present the sections in a logical manner; as mentioned earlier, the sections can be organized according to chronology or themes. In the chronological arrangement, you should discuss the methods in the order in which the experiments were carried out. For example, the methods section of an animal study should ideally begin with information about the species, weight, sex, strain, and age. Next, the number of animals, their initial conditions, and their living and housing conditions should be mentioned. Then describe how the groups were assigned and the intervention (drug treatment, stress, or other) given to each group, and finally, give the details of the tools and techniques used to measure, collect, and analyze the data. Experiments involving animal or human subjects should additionally include an ethics approval statement. It is best to arrange the section using the thematic approach when discussing distinct experiments that do not follow a sequential order.

- Define and explain the objects and procedure: The experimental procedure should be clearly stated in the methods section. Samples, necessary preparations (samples, treatment, and drug), and methods for manipulation need to be included. All variables (control, dependent, independent, and confounding) must be clearly defined, particularly if the confounding variables can affect the outcome of the study.

- Match the order of the methods section with the order of results: Though not mandatory, organizing the manuscript in a logical and coherent manner can improve the readability and clarity of the paper. This can be done by following a consistent structure throughout the manuscript; readers can easily navigate through the different sections and understand the methods and results in relation to each other. Using experiment names as headings for both the methods and results sections can also make it simpler for readers to locate specific information and corroborate it if needed.

- Relevant information must always be included: The methods section should have information on all experiments conducted and their details clearly mentioned. If your target journal has strict word limitations, ask the journal whether there is a way to offer more information in the supplemental files or external repositories. For example, Nature Communications encourages authors to deposit their step-by-step protocols in an open repository, Protocol Exchange, which allows the protocols to be linked with the manuscript upon publication. Providing access to detailed protocols also helps to increase the transparency and reproducibility of the research.

- It’s all in the details: The methods section should meticulously list all the materials, tools, instruments, and software used for different experiments. Specify the testing equipment on which data was obtained, together with the manufacturer’s name and location (city and state), as well as any stimuli used to manipulate the variables. Provide specifics on the research process you employed; if it was a standard protocol, cite previous studies that also used the protocol. Include any protocol modifications that were made, as well as any other factors that were taken into account when planning the study or gathering data. Any new or modified techniques should be explained by the authors. Typically, readers evaluate the reliability and validity of the procedures using the cited literature, and a widely accepted checklist helps to support the credibility of the methodology. Note: Authors should include a statement on sample size estimation (if applicable), which is often missed. It lets the reader see how many subjects are required to detect the expected change in the outcome variables at a given confidence level.

- Write for the audience: While explaining the details in the methods section, authors should be mindful of their target audience, as the rationale or assumptions behind specific procedures might not always be obvious, particularly to general readers. Therefore, when in doubt, specify the objective of a procedure, either in relation to the research question or to the entire protocol.

- Data interpretation and analysis: Include information on data processing, statistical testing, levels of significance, and analysis tools and software. Mention whether the recommendations and expertise of an experienced statistician were followed. Also, evaluate and justify the statistical method chosen for the study and its significance.
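The sample size statement mentioned in the list above can be made concrete with a short calculation. The sketch below uses the standard normal-approximation formula for comparing two group means, n = 2(z_{1-α/2} + z_{1-β})² σ² / δ² per group. The function name `sample_size_per_group` and the example values (an expected difference of 5 points, a standard deviation of 10, a two-sided 5% significance level, 80% power) are illustrative assumptions, not values from any particular study.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_group(delta, sd, alpha=0.05, power=0.80):
    """Approximate subjects needed per group to detect a mean difference.

    delta: smallest difference in means worth detecting (hypothetical input)
    sd:    assumed common standard deviation of the outcome
    alpha: two-sided significance level
    power: desired probability of detecting the difference if it exists
    """
    if delta <= 0 or sd <= 0:
        raise ValueError("delta and sd must be positive")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for alpha
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    n = 2 * (z_alpha + z_beta) ** 2 * (sd / delta) ** 2
    return ceil(n)  # round up: a fraction of a subject cannot be recruited


if __name__ == "__main__":
    # Hypothetical study: detect a 5-point change when scores have SD 10.
    print(sample_size_per_group(delta=5, sd=10))  # 63
```

Because this is a normal approximation, a dedicated power-analysis tool based on the t distribution may return a slightly larger number. Whichever tool is used, the methods section should report the inputs (effect size, variability, significance level, power) alongside the result.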

What NOT to include in the methods section of a research paper

When learning how to write the methods section of a research paper, authors should pay careful attention not only to what to include but also to what to leave out. Here is a list of don’ts when writing the methods section:

- Do not elaborate on specifics of standard methods/procedures: You should refrain from adding unnecessary details of experiments and practices that are well established and cited previously. Instead, simply cite relevant literature or mention if the manufacturer’s protocol was followed.

- Do not add unnecessary details: Do not include minute details of the experimental procedure and materials/instruments used that are not significant for the outcome of the experiment. For example, there is no need to mention the brand name of the water bath used for incubation.

- Do not discuss the results: The methods section is not the place to discuss the results or refer to the tables and figures; save that for the results and discussion sections. Also, focus on the methods selected to conduct the study and avoid digressing into other methods or commenting on their pros and cons.

- Do not make the section bulky: For extensive methods and protocols, provide the essential details and share the rest of the information in the supplemental files. The writing should be clear yet concise to maintain the flow of the section.

We hope that by this point you understand how crucial it is to write a thoughtful and precise methods section, and that you know the ins and outs of writing one. To restate, the entire purpose of the methods section is to enable others to reproduce the results or verify the research. We sincerely hope that this post has cleared up any confusion and given you a fresh perspective on the methods section.




15 Research Methodology Examples

Tio Gabunia (B.Arch, M.Arch)

Tio Gabunia is an academic writer and architect based in Tbilisi. He has studied architecture, design, and urban planning at the Georgian Technical University and the University of Lisbon. He has worked in these fields in Georgia, Portugal, and France. Most of Tio’s writings concern philosophy. Other writings include architecture, sociology, urban planning, and economics.


Chris Drew (PhD)

Dr. Chris Drew is the founder of the Helpful Professor. He holds a PhD in education and has published over 20 articles in scholarly journals. He is the former editor of the Journal of Learning Development in Higher Education.

Research methodologies can roughly be categorized into three groups: quantitative, qualitative, and mixed-methods.

- Qualitative Research: This methodology is based on obtaining deep, contextualized, non-numerical data. It can occur, for example, through open-ended questioning of research participants in order to understand human behavior. It’s all about describing and analyzing subjective phenomena such as emotions or experiences.

- Quantitative Research: This methodology is rationally based and relies heavily on numerical analysis of empirical data. With quantitative research, you aim for objectivity by creating hypotheses and testing them through experiments or surveys, which allow for statistical analyses.

- Mixed-Methods Research: Mixed-methods research combines both previous types in one project. It gives us more flexibility when designing a research study, since we can use multiple approaches depending on our needs at each stage. Using mixed methods can help us validate our results and offer greater predictability than either type of methodology could provide alone.

Below are research methodologies that fit into each category.

Qualitative Research Methodologies

1. Case Study

A case study conducts an in-depth examination of a specific case, individual, or event to understand a phenomenon.

Instead of examining a whole population for numerical trend data, case study researchers seek in-depth explanations of one event.

The benefit of case study research is its ability to elucidate overlooked details of interesting cases of a phenomenon (Busetto, Wick & Gumbinger, 2020). It offers deep insights for empathetic, reflective, and thoughtful understandings of that phenomenon.

However, case study findings aren’t transferable to new contexts and can’t support population-wide predictions. Instead, they inform practitioners’ understanding, supporting nuanced, in-depth approaches to future instances (Liamputtong, 2020).

2. Grounded Theory

Grounded theory involves generating hypotheses and theories through the collection and interpretation of data (Faggiolani, n.d.). Its distinguishing feature is that it doesn’t test a hypothesis generated prior to analysis, but rather generates a hypothesis or ‘theory’ that emerges from the data.

It also involves the application of inductive reasoning and is often contrasted with the hypothetico-deductive model of scientific research. This research methodology was developed by Barney Glaser and Anselm Strauss in the 1960s (Glaser & Strauss, 2009).

The basic difference between traditional scientific approaches to research and grounded theory is that the latter begins with a question, then collects data, and the theoretical framework is said to emerge later from this data.

By contrast, scientists usually begin with an existing theoretical framework, develop hypotheses, and only then start collecting data to verify or falsify the hypotheses.

3. Ethnography

In ethnographic research, the researcher immerses themselves within the group they are studying, often for long periods of time.

This type of research aims to understand the shared beliefs, practices, and values of a particular community by immersing the researcher within the cultural group.

Although ethnographic research cannot predict or identify trends in an entire population, it can create detailed explanations of cultural practices and comparisons between social and cultural groups.

When a person conducts an ethnographic study of themselves or their own culture, it can be considered autoethnography.

The strength of ethnography lies in producing comprehensive accounts of groups of people and their interactions.

Common methods researchers use during an ethnographic study include participant observation, thick description, unstructured interviews, and field note vignettes. These methods can provide detailed and contextualized descriptions of their subjects.

Example Study

In Liquidated: An Ethnography of Wall Street, anthropologist Karen Ho embeds herself with Wall Street firms to study the culture of Wall Street bankers and how this culture affects the broader economy and world.

4. Phenomenology

Phenomenology seeks to understand and describe individuals’ lived experiences concerning a specific phenomenon.

As a research methodology typically used in the social sciences, phenomenology involves the study of social reality as a product of intersubjectivity (the intersection of people’s cognitive perspectives) (Zahavi & Overgaard, n.d.).

This philosophical approach was first developed by Edmund Husserl.

5. Narrative Research

Narrative research explores personal stories and experiences to understand their meanings and interpretations.

It is also known as narrative inquiry and narrative analysis (Riessman, 1993).

This approach to research uses qualitative material like journals, field notes, letters, interviews, texts, photos, etc., as its data.

It is aimed at understanding the way people create meaning through narratives (Clandinin & Connelly, 2004).

6. Discourse Analysis

A discourse analysis examines the structure, patterns, and functions of language in context to understand how the text produces social constructs.

This methodology is common in critical theory, poststructuralism, and postmodernism. Its aim is to understand how language constructs discourses (roughly interpreted as “ways of thinking and constructing knowledge”).

As a qualitative methodology, its focus is on developing themes through close textual analysis rather than using numerical methods. Common methods for extracting data include semiotics and linguistic analysis.

7. Action Research

Action research involves researchers working collaboratively with stakeholders to address problems, develop interventions, and evaluate effectiveness.

Action research is a methodology and philosophy of research that is common in the social sciences.

The term was first coined in 1944 by Kurt Lewin, a German-American psychologist who also introduced applied research and group communication (Altrichter & Gstettner, 1993).

Lewin originally defined action research as involving two primary processes: taking action and doing research (Lewin, 1946).

Action research involves planning, action, and information-seeking about the result of the action.

Since Lewin’s original formulation, many different theoretical approaches to action research have been developed. These include action science, participatory action research, cooperative inquiry, and living educational theory among others.

Using Digital Sandbox Gaming to Improve Creativity Within Boys’ Writing (Ellison & Drew, 2019) is a study conducted by a school teacher who used video games to help teach his students English. It involved action research, where he interviewed his students to see if the use of games as stimuli for storytelling helped draw them into the learning experience, and iterated on his teaching style based on their feedback (disclaimer: I am the second author of this study).

See More: Examples of Qualitative Research

Quantitative Research Methodologies

8. Experimental Design

As the name suggests, this type of research is based on testing hypotheses in experimental settings by manipulating variables and observing their effects on other variables.

The main benefit lies in its ability to manipulate specific variables to determine their effect on outcomes, which makes it a strong method for those looking for causal links in their research.

This is common, for example, in high-school science labs, where students are asked to introduce a variable into a setting in order to examine its effect.
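To make the logic of an experiment concrete, here is a minimal sketch of how the effect of a manipulated variable might be checked with a permutation test. The function name `permutation_test` and the plant-height measurements are hypothetical and chosen only for illustration; a real study would pick its analysis method in advance and justify it.

```python
import random
from statistics import mean


def permutation_test(treatment, control, n_permutations=2000, seed=0):
    """Estimate how often chance alone produces a difference this large.

    Returns the observed difference in means and an approximate
    two-sided p-value obtained by repeatedly reshuffling group labels.
    """
    rng = random.Random(seed)  # fixed seed keeps the sketch reproducible
    observed = mean(treatment) - mean(control)
    pooled = list(treatment) + list(control)
    n_treat = len(treatment)
    extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)  # pretend group labels were assigned at random
        diff = mean(pooled[:n_treat]) - mean(pooled[n_treat:])
        if abs(diff) >= abs(observed):
            extreme += 1
    # Add one to numerator and denominator so the estimate is never zero.
    p_value = (extreme + 1) / (n_permutations + 1)
    return observed, p_value


if __name__ == "__main__":
    # Hypothetical plant heights (cm) with and without added fertilizer.
    with_fertilizer = [12.1, 13.4, 11.8, 14.0, 13.1, 12.7]
    without_fertilizer = [10.2, 9.8, 11.0, 10.5, 9.9, 10.7]
    diff, p = permutation_test(with_fertilizer, without_fertilizer)
    print(f"difference in means: {diff:.2f} cm, p-value: {p:.4f}")
```

A small p-value here says only that the difference is unlikely under random relabeling. The causal reading depends on the design itself, that is, on the researcher having assigned the treatment rather than merely observing it.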

9. Non-Experimental Design

Non-experimental design observes and measures associations between variables without manipulating them.

It can take, for example, the form of a ‘fly on the wall’ observation of a phenomenon, allowing researchers to examine authentic settings and changes that occur naturally in the environment.
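As a rough illustration of measuring an association without manipulating anything, the sketch below computes a Pearson correlation coefficient from two observed variables. The function name `pearson_r` and the study-hours and exam-score figures are hypothetical and used only as an example.

```python
from math import sqrt
from statistics import mean


def pearson_r(xs, ys):
    """Pearson correlation between two equally long lists of observations."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two lists of equal length with at least 2 values")
    mean_x, mean_y = mean(xs), mean(ys)
    # Sum of products of deviations from each mean (unnormalized covariance).
    covariation = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined when a variable is constant")
    return covariation / (spread_x * spread_y)


if __name__ == "__main__":
    # Hypothetical observations: hours studied and exam score per student.
    hours_studied = [2, 4, 5, 7, 8, 10]
    exam_scores = [55, 60, 68, 71, 80, 86]
    print(f"r = {pearson_r(hours_studied, exam_scores):.2f}")
```

A coefficient near 1 or -1 indicates a strong linear association, but because nothing was manipulated, it does not by itself show that one variable causes the other.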