Ethical Considerations in Research | Types & Examples

Published on October 18, 2021 by Pritha Bhandari. Revised on May 9, 2024.

Ethical considerations in research are a set of principles that guide your research designs and practices. Scientists and researchers must always adhere to a certain code of conduct when collecting data from people.

The goals of human research often include understanding real-life phenomena, studying effective treatments, investigating behaviors, and improving lives in other ways. What you decide to research and how you conduct that research involve key ethical considerations.

These considerations work to

- protect the rights of research participants

- enhance research validity

- maintain scientific or academic integrity

Research ethics matter for scientific integrity, human rights and dignity, and collaboration between science and society. These principles make sure that participation in studies is voluntary, informed, and safe for research subjects.

You’ll balance pursuing important research objectives with using ethical research methods and procedures. It’s always necessary to prevent permanent or excessive harm to participants, whether inadvertent or not.

Defying research ethics will also lower the credibility of your research because it’s hard for others to trust your data if your methods are morally questionable.

Even if a research idea is valuable to society, it doesn’t justify violating the human rights or dignity of your study participants.

Before you start any study involving data collection with people, you’ll submit your research proposal to an institutional review board (IRB).

An IRB is a committee that checks whether your research aims and research design are ethically acceptable and follow your institution’s code of conduct. They check that your research materials and procedures are up to code.

If successful, you’ll receive IRB approval, and you can begin collecting data according to the approved procedures. If you want to make any changes to your procedures or materials, you’ll need to submit a modification application to the IRB for approval.

If unsuccessful, you may be asked to re-submit with modifications or your research proposal may receive a rejection. To get IRB approval, it’s important to explicitly note how you’ll tackle each of the ethical issues that may arise in your study.

There are several ethical issues you should always pay attention to in your research design, and these issues can overlap with each other.

You’ll usually outline ways you’ll deal with each issue in your research proposal if you plan to collect data from participants.

| Ethical issue | Definition |
|---|---|
| Voluntary participation | Your participants are free to opt in or out of the study at any point in time. |
| Informed consent | Participants know the purpose, benefits, risks, and funding behind the study before they agree or decline to join. |
| Anonymity | You don’t know the identities of the participants. Personally identifiable data is not collected. |
| Confidentiality | You know who the participants are but you keep that information hidden from everyone else. You anonymize personally identifiable data so that it can’t be linked to other data by anyone else. |
| Potential for harm | Physical, social, psychological and all other types of harm are kept to an absolute minimum. |
| Results communication | You ensure your work is free of plagiarism or research misconduct, and you accurately represent your results. |

Voluntary participation means that all research subjects are free to choose to participate without any pressure or coercion.

All participants are able to withdraw from, or leave, the study at any point without feeling an obligation to continue. Your participants don’t need to provide a reason for leaving the study.

It’s important to make it clear to participants that there are no negative consequences or repercussions to their refusal to participate. After all, they’re taking the time to help you in the research process, so you should respect their decisions without trying to change their minds.

Voluntary participation is an ethical principle protected by international law and many scientific codes of conduct.

Take special care to ensure there’s no pressure on participants when you’re working with vulnerable groups of people who may find it hard to stop the study even when they want to.

Informed consent refers to a situation in which all potential participants receive and understand all the information they need to decide whether they want to participate. This includes information about the study’s benefits, risks, funding, and institutional approval.

You make sure to provide all potential participants with all the relevant information about

- what the study is about

- the risks and benefits of taking part

- how long the study will take

- your supervisor’s contact information and the institution’s approval number

Usually, you’ll provide participants with a text for them to read and ask them if they have any questions. If they agree to participate, they can sign or initial the consent form. Note that this may not be sufficient for informed consent when you work with particularly vulnerable groups of people.

If you’re collecting data from people with low literacy, make sure to verbally explain the consent form to them before they agree to participate.

For participants with very limited English proficiency, you should always translate the study materials or work with an interpreter so they have all the information in their first language.

In research with children, you’ll often need informed permission for their participation from their parents or guardians. Although children cannot give informed consent, it’s best to also ask for their assent (agreement) to participate, depending on their age and maturity level.

Anonymity means that you don’t know who the participants are and you can’t link any individual participant to their data.

You can only guarantee anonymity by not collecting any personally identifying information—for example, names, phone numbers, email addresses, IP addresses, physical characteristics, photos, and videos.

In many cases, it may be impossible to truly anonymize data collection. For example, data collected in person or by phone cannot be considered fully anonymous because some personal identifiers (demographic information or phone numbers) are impossible to hide.

You’ll also need to collect some identifying information if you give your participants the option to withdraw their data at a later stage.

Data pseudonymization is an alternative method where you replace identifying information about participants with pseudonymous, or fake, identifiers. The data can still be linked to participants but it’s harder to do so because you separate personal information from the study data.
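As a rough illustration of pseudonymization, the Python sketch below replaces an identifying field with a random code and keeps the code-to-identity key in a separate table. The function name `pseudonymize`, the `email` field, and the `P-` code format are assumptions made for this example, not part of any standard or of your institution's requirements.

```python
import secrets


def pseudonymize(records, id_field="email"):
    """Replace an identifying field with a random pseudonym.

    Returns the study data (no identifiers) and a separate key table
    that maps each pseudonym back to the real identifier.
    """
    key_table = {}   # pseudonym -> real identifier; store apart from the study data
    seen = {}        # real identifier -> pseudonym, so one person keeps one code
    study_data = []
    for record in records:
        identifier = record[id_field]
        if identifier not in seen:
            pseudonym = "P-" + secrets.token_hex(4)
            while pseudonym in key_table:  # regenerate on the rare collision
                pseudonym = "P-" + secrets.token_hex(4)
            seen[identifier] = pseudonym
            key_table[pseudonym] = identifier
        # Copy every field except the identifier, then attach the pseudonym.
        cleaned = {k: v for k, v in record.items() if k != id_field}
        cleaned["participant_id"] = seen[identifier]
        study_data.append(cleaned)
    return study_data, key_table


# Hypothetical example data: one participant answered twice.
records = [
    {"email": "a@example.org", "score": 12},
    {"email": "b@example.org", "score": 17},
    {"email": "a@example.org", "score": 14},
]
study_data, key_table = pseudonymize(records)
```

Because the key table still links codes to people, it should be stored separately from the study data and with restricted access. It is also what lets you find and delete one participant's data if they withdraw later.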

Confidentiality means that you know who the participants are, but you remove all identifying information from your report.

All participants have a right to privacy, so you should protect their personal data for as long as you store or use it. Even when you can’t collect data anonymously, you should secure confidentiality whenever you can.

Some research designs aren’t conducive to confidentiality, but it’s important to make all attempts and inform participants of the risks involved.

As a researcher, you have to consider all possible sources of harm to participants. Harm can come in many different forms.

- Psychological harm: Sensitive questions or tasks may trigger negative emotions such as shame or anxiety.

- Social harm: Participation can involve social risks, public embarrassment, or stigma.

- Physical harm: Pain or injury can result from the study procedures.

- Legal harm: Reporting sensitive data could lead to legal risks or a breach of privacy.

It’s best to consider every possible source of harm in your study as well as concrete ways to mitigate them. Involve your supervisor to discuss steps for harm reduction.

Make sure to disclose all possible risks of harm to participants before the study to get informed consent. If there is a risk of harm, prepare to provide participants with resources or counseling or medical services if needed.

For example, if some of your survey questions may bring up negative emotions, inform participants about the sensitive nature of the survey and assure them that their responses will be confidential.

The way you communicate your research results can sometimes involve ethical issues. Good science communication is honest, reliable, and credible. It’s best to make your results as transparent as possible.

Take steps to actively avoid plagiarism and research misconduct wherever possible.

Plagiarism means submitting others’ works as your own. Although it can be unintentional, copying someone else’s work without proper credit amounts to stealing. It’s an ethical problem in research communication because you may benefit by harming other researchers.

Self-plagiarism is when you republish or re-submit parts of your own papers or reports without properly citing your original work.

This is problematic because you may benefit from presenting your ideas as new and original even though they’ve already been published elsewhere in the past. You may also be infringing on your previous publisher’s copyright, violating an ethical code, or wasting time and resources by doing so.

In extreme cases of self-plagiarism, entire datasets or papers are sometimes duplicated. These are major ethical violations because they can skew research findings if taken as original data.

For example, suppose you notice that two published studies have similar characteristics even though they are from different years. Their sample sizes, locations, treatments, and results are highly similar, and the studies share an author. Overlap like this is a sign that data may have been duplicated.

Research misconduct

Research misconduct means making up or falsifying data, manipulating data analyses, or misrepresenting results in research reports. It’s a form of academic fraud.

These actions are committed intentionally and can have serious consequences; research misconduct is not a simple mistake or a point of disagreement about data analyses.

Research misconduct is a serious ethical issue because it can undermine academic integrity and institutional credibility. It leads to a waste of funding and resources that could have been used for alternative research.

In 1998, Andrew Wakefield and his colleagues published a paper claiming that the MMR vaccine was linked to autism. Later investigations revealed that they fabricated and manipulated their data to show a nonexistent link between vaccines and autism. Wakefield also neglected to disclose important conflicts of interest, and his medical license was taken away.

This fraudulent work sparked vaccine hesitancy among parents and caregivers. The rate of MMR vaccinations in children fell sharply, and measles outbreaks became more common due to a lack of herd immunity.

Research scandals with ethical failures are littered throughout history, but some took place not that long ago.

Some scientists in positions of power have historically mistreated or even abused research participants to investigate research problems at any cost. These participants were prisoners, under their care, or otherwise trusted them to treat them with dignity.

To demonstrate the importance of research ethics, we’ll briefly review two research studies that violated human rights in modern history.

Nazi medical experiments on prisoners during World War II were inhumane and resulted in trauma, permanent disabilities, or death in many cases.

After some Nazi doctors were put on trial for their crimes, the Nuremberg Code of research ethics for human experimentation was developed in 1947 to establish a new standard for human experimentation in medical research.

In the Tuskegee syphilis study, the actual goal was to study the effects of the disease when left untreated, and the researchers never informed participants about their diagnoses or the research aims.

Although participants experienced severe health problems, including blindness and other complications, the researchers only pretended to provide medical care.

When treatment became possible in 1943, 11 years after the study began, none of the participants were offered it, despite their health conditions and high risk of death.

Ethical failures like these resulted in severe harm to participants, wasted resources, and lower trust in science and scientists. This is why all research institutions have strict ethical guidelines for performing research.

Anonymity means you don’t know who the participants are, while confidentiality means you know who they are but remove identifying information from your research report. Both are important ethical considerations.

You can keep data confidential by using aggregate information in your research report, so that you only refer to groups of participants rather than individuals.
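One way to picture aggregate reporting is the short Python sketch below, which summarizes responses per group and leaves out groups too small to report safely. The function name, the field names, and the `min_group_size` threshold of 5 are assumptions for illustration; the right threshold depends on your data and your institution's guidance.

```python
from collections import defaultdict
from statistics import mean


def aggregate_by_group(rows, group_field, value_field, min_group_size=5):
    """Report group size and mean per group, skipping very small groups."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[group_field]].append(row[value_field])

    report = {}
    for group, values in groups.items():
        # Small groups are left out because a mean over one or two
        # people can reveal an individual's answer.
        if len(values) >= min_group_size:
            report[group] = {"n": len(values), "mean": round(mean(values), 2)}
    return report


# Hypothetical survey rows: five first-year and two final-year students.
rows = [
    {"year": "first-year", "stress": 3},
    {"year": "first-year", "stress": 4},
    {"year": "first-year", "stress": 5},
    {"year": "first-year", "stress": 4},
    {"year": "first-year", "stress": 4},
    {"year": "final-year", "stress": 2},
    {"year": "final-year", "stress": 5},
]
report = aggregate_by_group(rows, "year", "stress")
```

Here only the first-year group appears in the report. The two final-year responses are withheld rather than published as a two-person average.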

Bhandari, P. (2024, May 09). Ethical Considerations in Research | Types & Examples. Scribbr. Retrieved September 13, 2024, from https://www.scribbr.com/methodology/research-ethics/

Ethical Considerations – Types, Examples and Writing Guide

Ethical Considerations

Ethical considerations in research refer to the principles and guidelines that researchers must follow to ensure that their studies are conducted in an ethical and responsible manner. These considerations are designed to protect the rights, safety, and well-being of research participants, as well as the integrity and credibility of the research itself.

Some of the key ethical considerations in research include:

- Informed consent: Researchers must obtain informed consent from study participants, which means they must inform participants about the study’s purpose, procedures, risks, benefits, and their right to withdraw at any time.

- Privacy and confidentiality: Researchers must ensure that participants’ privacy and confidentiality are protected. This means that personal information should be kept confidential and not shared without the participant’s consent.

- Harm reduction: Researchers must ensure that the study does not harm the participants physically or psychologically. They must take steps to minimize the risks associated with the study.

- Fairness and equity: Researchers must ensure that the study does not discriminate against any particular group or individual. They should treat all participants equally and fairly.

- Use of deception: Researchers must use deception only if it is necessary to achieve the study’s objectives. They must inform participants of the deception as soon as possible.

- Use of vulnerable populations: Researchers must be especially cautious when working with vulnerable populations, such as children, pregnant women, prisoners, and individuals with cognitive or intellectual disabilities.

- Conflict of interest: Researchers must disclose any potential conflicts of interest that may affect the study’s integrity. This includes financial or personal relationships that could influence the study’s results.

- Data manipulation: Researchers must not manipulate data to support a particular hypothesis or agenda. They should report the results of the study objectively, even if the findings are not consistent with their expectations.

- Intellectual property: Researchers must respect intellectual property rights and give credit to previous studies and research.

- Cultural sensitivity: Researchers must be sensitive to the cultural norms and beliefs of the participants. They should avoid imposing their values and beliefs on the participants and should be respectful of their cultural practices.

Types of Ethical Considerations

The main types of ethical considerations are as follows:

Research Ethics

This includes ethical principles and guidelines that govern research involving human or animal subjects, ensuring that the research is conducted in an ethical and responsible manner.

Business Ethics

This refers to ethical principles and standards that guide business practices and decision-making, such as transparency, honesty, fairness, and social responsibility.

Medical Ethics

This refers to ethical principles and standards that govern the practice of medicine, including the duty to protect patient autonomy, informed consent, confidentiality, and non-maleficence.

Environmental Ethics

This involves ethical principles and values that guide our interactions with the natural world, including the obligation to protect the environment, minimize harm, and promote sustainability.

Legal Ethics

This involves ethical principles and standards that guide the conduct of legal professionals, including issues such as confidentiality, conflicts of interest, and professional competence.

Social Ethics

This involves ethical principles and values that guide our interactions with other individuals and society as a whole, including issues such as justice, fairness, and human rights.

Information Ethics

This involves ethical principles and values that govern the use and dissemination of information, including issues such as privacy, accuracy, and intellectual property.

Cultural Ethics

This involves ethical principles and values that govern the relationship between different cultures and communities, including issues such as respect for diversity, cultural sensitivity, and inclusivity.

Technological Ethics

This refers to ethical principles and guidelines that govern the development, use, and impact of technology, including issues such as privacy, security, and social responsibility.

Journalism Ethics

This involves ethical principles and standards that guide the practice of journalism, including issues such as accuracy, fairness, and the public interest.

Educational Ethics

This refers to ethical principles and standards that guide the practice of education, including issues such as academic integrity, fairness, and respect for diversity.

Political Ethics

This involves ethical principles and values that guide political decision-making and behavior, including issues such as accountability, transparency, and the protection of civil liberties.

Professional Ethics

This refers to ethical principles and standards that guide the conduct of professionals in various fields, including issues such as honesty, integrity, and competence.

Personal Ethics

This involves ethical principles and values that guide individual behavior and decision-making, including issues such as personal responsibility, honesty, and respect for others.

Global Ethics

This involves ethical principles and values that guide our interactions with other nations and the global community, including issues such as human rights, environmental protection, and social justice.

Applications of Ethical Considerations

Ethical considerations are important in many areas of society, including medicine, business, law, and technology. Here are some specific applications of ethical considerations:

- Medical research: Ethical considerations are crucial in medical research, particularly when human subjects are involved. Researchers must ensure that their studies are conducted in a way that does not harm participants and that participants give informed consent before participating.

- Business practices: Ethical considerations are also important in business, where companies must make decisions that are socially responsible and avoid activities that are harmful to society. For example, companies must ensure that their products are safe for consumers and that they do not engage in exploitative labor practices.

- Environmental protection: Ethical considerations play a crucial role in environmental protection, as companies and governments must weigh the benefits of economic development against the potential harm to the environment. Decisions about land use, resource allocation, and pollution must be made in an ethical manner that takes into account the long-term consequences for the planet and future generations.

- Technology development: As technology continues to advance rapidly, ethical considerations become increasingly important in areas such as artificial intelligence, robotics, and genetic engineering. Developers must ensure that their creations do not harm humans or the environment and that they are developed in a way that is fair and equitable.

- Legal system: The legal system relies on ethical considerations to ensure that justice is served and that individuals are treated fairly. Lawyers and judges must abide by ethical standards to maintain the integrity of the legal system and to protect the rights of all individuals involved.

Examples of Ethical Considerations

Here are a few examples of ethical considerations in different contexts:

- In healthcare: A doctor must ensure that they provide the best possible care to their patients and avoid causing them harm. They must respect the autonomy of their patients, and obtain informed consent before administering any treatment or procedure. They must also ensure that they maintain patient confidentiality and avoid any conflicts of interest.

- In the workplace: An employer must ensure that they treat their employees fairly and with respect, provide them with a safe working environment, and pay them a fair wage. They must also avoid any discrimination based on race, gender, religion, or any other characteristic protected by law.

- In the media: Journalists must ensure that they report the news accurately and without bias. They must respect the privacy of individuals and avoid causing harm or distress. They must also be transparent about their sources and avoid any conflicts of interest.

- In research: Researchers must ensure that they conduct their studies ethically and with integrity. They must obtain informed consent from participants, protect their privacy, and avoid any harm or discomfort. They must also ensure that their findings are reported accurately and without bias.

- In personal relationships: People must ensure that they treat others with respect and kindness, and avoid causing harm or distress. They must respect the autonomy of others and avoid any actions that would be considered unethical, such as lying or cheating. They must also respect the confidentiality of others and maintain their privacy.

How to Write Ethical Considerations

When writing about research involving human subjects or animals, it is essential to include ethical considerations to ensure that the study is conducted in a manner that is morally responsible and in accordance with professional standards. Here are some steps to help you write ethical considerations:

- Describe the ethical principles: Start by explaining the ethical principles that will guide the research. These could include principles such as respect for persons, beneficence, and justice.

- Discuss informed consent: Informed consent is a critical ethical consideration when conducting research. Explain how you will obtain informed consent from participants, including how you will explain the purpose of the study, potential risks and benefits, and how you will protect their privacy.

- Address confidentiality: Describe how you will protect the confidentiality of the participants’ personal information and data, including any measures you will take to ensure that the data is kept secure and confidential.

- Consider potential risks and benefits: Describe any potential risks or harms to participants that could result from the study and how you will minimize those risks. Also, discuss the potential benefits of the study, both to the participants and to society.

- Discuss the use of animals: If the research involves the use of animals, address the ethical considerations related to animal welfare. Explain how you will minimize any potential harm to the animals and ensure that they are treated ethically.

- Mention the ethical approval: Finally, it’s essential to acknowledge that the research has received ethical approval from the relevant institutional review board or ethics committee. State the name of the committee, the date of approval, and any specific conditions or requirements that were imposed.

When to Write Ethical Considerations

Ethical considerations should be written whenever research involves human subjects or has the potential to impact human beings, animals, or the environment in some way. Ethical considerations are also important when research involves sensitive topics, such as mental health, sexuality, or religion.

In general, ethical considerations should be an integral part of any research project, regardless of the field or subject matter. This means that they should be considered at every stage of the research process, from the initial planning and design phase to data collection, analysis, and dissemination.

Ethical considerations should also be written in accordance with the guidelines and standards set by the relevant regulatory bodies and professional associations. These guidelines may vary depending on the discipline, so it is important to be familiar with the specific requirements of your field.

Purpose of Ethical Considerations

Ethical considerations are an essential aspect of many areas of life, including business, healthcare, research, and social interactions. The primary purposes of ethical considerations are:

- Protection of human rights: Ethical considerations help ensure that people’s rights are respected and protected. This includes respecting their autonomy, ensuring their privacy is respected, and ensuring that they are not subjected to harm or exploitation.

- Promoting fairness and justice: Ethical considerations help ensure that people are treated fairly and justly, without discrimination or bias. This includes ensuring that everyone has equal access to resources and opportunities, and that decisions are made based on merit rather than personal biases or prejudices.

- Promoting honesty and transparency: Ethical considerations help ensure that people are truthful and transparent in their actions and decisions. This includes being open and honest about conflicts of interest, disclosing potential risks, and communicating clearly with others.

- Maintaining public trust: Ethical considerations help maintain public trust in institutions and individuals. This is important for building and maintaining relationships with customers, patients, colleagues, and other stakeholders.

- Ensuring responsible conduct: Ethical considerations help ensure that people act responsibly and are accountable for their actions. This includes adhering to professional standards and codes of conduct, following laws and regulations, and avoiding behaviors that could harm others or damage the environment.

Advantages of Ethical Considerations

Here are some of the advantages of ethical considerations:

- Builds Trust: When individuals or organizations follow ethical considerations, it creates a sense of trust among stakeholders, including customers, clients, and employees. This trust can lead to stronger relationships and long-term loyalty.

- Reputation and Brand Image: Ethical considerations are often linked to a company’s brand image and reputation. By following ethical practices, a company can establish a positive image and reputation that can enhance its brand value.

- Avoids Legal Issues: Ethical considerations can help individuals and organizations avoid legal issues and penalties. By adhering to ethical principles, companies can reduce the risk of facing lawsuits, regulatory investigations, and fines.

- Increases Employee Retention and Motivation: Employees tend to be more satisfied and motivated when they work for an organization that values ethics. Companies that prioritize ethical considerations tend to have higher employee retention rates, leading to lower recruitment costs.

- Enhances Decision-making: Ethical considerations help individuals and organizations make better decisions. By considering the ethical implications of their actions, decision-makers can evaluate the potential consequences and choose the best course of action.

- Positive Impact on Society: Ethical considerations have a positive impact on society as a whole. By following ethical practices, companies can contribute to social and environmental causes, leading to a more sustainable and equitable society.

About the author

Muhammad Hassan

Researcher, Academic Writer, Web developer

Ethical considerations in research: Best practices and examples

To conduct responsible research, you’ve got to think about ethics. They protect participants’ rights and their well-being, and they ensure your findings are valid and reliable. This isn’t just a box for you to tick. It’s a crucial consideration that can make all the difference to the outcome of your research.

In this article, we'll explore the meaning and importance of research ethics in today's research landscape. You'll learn best practices to conduct ethical and impactful research.

Examples of ethical considerations in research

As a researcher, you're responsible for ethical research alongside your organization. Fulfilling ethical guidelines is critical. Organizations must ensure employees follow best practices to protect participants' rights and well-being.

Keep these things in mind when it comes to ethical considerations in research:

Voluntary participation

Voluntary participation is key. Nobody should feel like they're being forced to participate or pressured into doing anything they don't want to. That means giving people a choice and the ability to opt out at any time, even if they've already agreed to take part in the study.

Informed consent

Informed consent isn't just an ethical consideration. It's a legal requirement as well. Participants must fully understand what they're agreeing to, including potential risks and benefits.

The best way to go about this is by using a consent form. Make sure you include:

- A brief description of the study and research methods.

- The potential benefits and risks of participating.

- The length of the study.

- Contact information for the researcher and/or sponsor.

- Reiteration of the participant’s right to withdraw from the research project at any time without penalty.
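If you keep consent forms as structured data, a quick check can confirm that none of the items above is missing. The following is a minimal sketch, not a legal or institutional standard: the field names are hypothetical and simply mirror the list above.

```python
# Hypothetical field names, one per item in the consent form checklist.
REQUIRED_SECTIONS = (
    "study_description",
    "benefits_and_risks",
    "study_length",
    "contact_information",
    "right_to_withdraw",
)


def missing_sections(form):
    """Return the required sections that are absent or blank in a form dict."""
    return [
        name
        for name in REQUIRED_SECTIONS
        if not str(form.get(name, "")).strip()
    ]


draft_form = {
    "study_description": "A 10-minute survey on study habits.",
    "benefits_and_risks": "No direct benefit; minimal risk.",
    "study_length": "10 minutes",
    "contact_information": "",
}

# Lists the sections that still need to be written before the form is used.
print(missing_sections(draft_form))
```

A check like this only confirms that each section exists. Whether the wording is clear enough for participants still needs a human review.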

Anonymity

Anonymity means that participants can't be identified from any of the data you collect. Identifying details include:

- Email address

- Photographs

- Video footage

You need a way to anonymize research data so that it can't be traced back to individual participants. This may involve assigning each participant a numerical code that serves as a new digital ID and can’t be linked back to their original identity.
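One way to picture the numerical-code approach is the sketch below. It is an illustration under stated assumptions rather than a complete anonymization procedure: the function names, the `email` field, and the six-digit code length are all hypothetical.

```python
import secrets


def assign_participant_codes(identifiers):
    """Map each distinct identifier to a random six-digit code.

    Codes are drawn at random, so a code reveals nothing about
    the identifier it replaces.
    """
    codes = {}
    used = set()
    for identifier in identifiers:
        if identifier in codes:
            continue  # same participant keeps the same code
        code = secrets.randbelow(900_000) + 100_000
        while code in used:  # redraw on the rare collision
            code = secrets.randbelow(900_000) + 100_000
        used.add(code)
        codes[identifier] = code
    return codes


def anonymize(records, codes, id_field="email"):
    """Return copies of the records with id_field replaced by a code."""
    cleaned = []
    for record in records:
        row = {k: v for k, v in record.items() if k != id_field}
        row["participant_id"] = codes[record[id_field]]
        cleaned.append(row)
    return cleaned


responses = [
    {"email": "a@example.org", "score": 3},
    {"email": "b@example.org", "score": 5},
    {"email": "a@example.org", "score": 4},
]
code_table = assign_participant_codes(r["email"] for r in responses)
anonymized = anonymize(responses, code_table)
```

Note that the data is only untraceable if the lookup table (`code_table` here) is destroyed or stored separately under strict access control. Anyone who holds both the table and the coded data can re-identify participants.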

Confidentiality

Information gathered during a study must be kept confidential. Confidentiality helps to protect the privacy of research participants. It also ensures that their information isn't disclosed to unauthorized individuals.

Some ways to ensure confidentiality include:

- Using a secure server to store data.

- Removing identifying information from databases that contain sensitive data.

- Using a third-party company to process and manage research participant data.

- Not keeping participant records for longer than necessary.

- Avoiding discussion of research findings in public forums.

Potential for harm

The potential for harm is a crucial factor in deciding whether a research study should proceed. It can manifest in various forms, such as:

- Psychological harm

- Social harm

- Physical harm

Conduct an ethical review to identify possible harms. Be prepared to explain how you’ll minimize these harms and what support is available in case they do happen.

Fair payment

One of the most crucial aspects of setting up a research study is deciding on fair compensation for your participants. Underpayment is a common ethical issue that shouldn't be overlooked. Properly rewarding participants' time is critical for boosting engagement and obtaining high-quality data. While Prolific requires a minimum payment of £6.00 / $8.00 per hour, there are other factors you need to consider when deciding on a fair payment.

First, check your institution's reimbursement guidelines to see if they already have a minimum or maximum hourly rate. You can also use the national minimum wage as a reference point.

Next, think about the amount of work you're asking participants to do. The level of effort required for a task, such as producing a video recording versus a short survey, should correspond with the reward offered.

You also need to consider the population you're targeting. To attract research subjects with specific characteristics or high-paying jobs, you may need to offer more as an incentive.

We recommend a minimum payment of £9.00 / $12.00 per hour, but we understand that payment rates can vary depending on a range of factors. Whatever payment you choose should reflect the amount of effort participants are required to put in and be fair to everyone involved.
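To turn an hourly rate into a per-study reward, multiply the rate by the estimated completion time. The sketch below uses the £6.00 required and £9.00 recommended hourly figures quoted above; the function names are hypothetical and the rounding rule (round up to the nearest penny) is an assumption, not a stated policy.

```python
from decimal import Decimal, ROUND_UP

# Hourly figures quoted in the text above (GBP).
REQUIRED_MIN_PER_HOUR = Decimal("6.00")
RECOMMENDED_MIN_PER_HOUR = Decimal("9.00")


def reward_for(minutes, hourly_rate):
    """Reward for a task of the given length, rounded up to the penny."""
    amount = Decimal(minutes) * hourly_rate / 60
    return amount.quantize(Decimal("0.01"), rounding=ROUND_UP)


def effective_hourly_rate(reward, minutes):
    """Hourly rate implied by a reward and the time the task takes."""
    return (Decimal(reward) * 60 / Decimal(minutes)).quantize(Decimal("0.01"))


def meets_required_minimum(reward, minutes):
    """True if the implied hourly rate is at least the required minimum."""
    return effective_hourly_rate(reward, minutes) >= REQUIRED_MIN_PER_HOUR


# A 20-minute survey at the recommended rate.
suggested = reward_for(20, RECOMMENDED_MIN_PER_HOUR)
```

If a pilot shows that participants take longer than you estimated, rerun the calculation with the observed time, since the effective hourly rate drops as completion time rises.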

Ethical research made easy with Prolific

At Prolific, we believe in making ethical research easy and accessible. The findings from the Fairwork Cloudwork report speak for themselves. Prolific was given the top score out of all competitors for minimum standards of fair work.

With over 25,000 researchers in our community, we're leading the way in revolutionizing the research industry. If you're interested in learning more about how we can support your research journey, sign up to get started now.


Research Ethics & Ethical Considerations

A Plain-Language Explainer With Examples

By: Derek Jansen (MBA) | Reviewers: Dr Eunice Rautenbach | May 2024

Research ethics are one of those “unsexy but essential” subjects that you need to fully understand (and apply) to conquer your dissertation, thesis or research paper. In this post, we’ll unpack research ethics using plain language and loads of examples.

Overview: Research Ethics 101

- What are research ethics?

- Why should you care?

- Research ethics principles

- Respect for persons

- Beneficence

- Objectivity

- Integrity

- Key takeaways

What (exactly) are research ethics?

At the simplest level, research ethics are a set of principles that ensure that your study is conducted responsibly, safely, and with integrity. More specifically, research ethics help protect the rights and welfare of your research participants, while also ensuring the credibility of your research findings.

Research ethics are critically important for a number of reasons:

Firstly, they’re a complete non-negotiable when it comes to getting your research proposal approved. Pretty much all universities will have a set of ethical criteria that student projects need to adhere to – and these are typically very strictly enforced. So, if your proposed study doesn’t tick the necessary ethical boxes, it won’t be approved.

Beyond the practical aspect of approval, research ethics are essential as they ensure that your study’s participants (whether human or animal) are properly protected. In turn, this fosters trust between you and your participants – as well as trust between researchers and the public more generally. As you can probably imagine, it wouldn’t be good if the general public had a negative perception of researchers!

Last but not least, research ethics help ensure that your study’s results are valid and reliable. In other words, they help ensure that you measured the thing you intended to measure – and that other researchers can repeat your study.

The Core Principles

In practical terms, each university or institution will have its own ethics policy – so, what exactly constitutes “ethical research” will vary somewhat between institutions and countries. Nevertheless, there are a handful of core principles that shape ethics policies. These principles include:

- Respect for persons

- Beneficence

- Objectivity

- Integrity

Let’s unpack each of these to make them a little more tangible.

Ethics Principle 1: Respect for persons

As the name suggests, this principle is all about ensuring that your participants are treated fairly and respectfully. In practical terms, this means informed consent – in other words, participants should be fully informed about the nature of the research, as well as any potential risks. Additionally, they should be able to withdraw from the study at any time. This is especially important when you’re dealing with vulnerable populations – for example, children, the elderly or people with cognitive disabilities.

Another dimension of the “respect for persons” principle is confidentiality and data protection. In other words, your participants’ personal information should be kept strictly confidential and secure at all times. Depending on the specifics of your project, this might also involve anonymising or masking people’s identities. As mentioned earlier, the exact requirements will vary between universities, so be sure to thoroughly review your institution’s ethics policy before you start designing your project.


Ethics Principle 2: Beneficence

This principle is a little more opaque, but in simple terms beneficence means that you, as the researcher, should aim to maximise the benefits of your work, while minimising any potential harm to your participants.

In practical terms, benefits could include advancing knowledge, improving health outcomes, or providing educational value. Conversely, potential harms could include:

- Physical harm from accidents or injuries

- Psychological harm, such as stress or embarrassment

- Social harm, such as stigmatisation or loss of reputation

- Economic harm – in other words, financial costs or lost income

Simply put, the beneficence principle means that researchers must always try to identify potential risks and take suitable measures to reduce or eliminate them.

Ethics Principle 3: Objectivity

As you can probably guess, this principle is all about attempting to minimise research bias to the greatest degree possible. In other words, you’ll need to reduce subjectivity and increase objectivity wherever possible.

In practical terms, this principle has the largest impact on the methodology of your study – specifically the data collection and data analysis aspects. For example, you’ll need to ensure that the selection of your participants (in other words, your sampling strategy) is aligned with your research aims – and that your sample isn’t skewed in a way that supports your presuppositions.


Ethics Principle 4: Integrity

Again, no surprises here; this principle is all about producing “honest work”. It goes without saying that researchers should always conduct their work honestly and transparently, report their findings accurately, and disclose any potential conflicts of interest upfront.

This is all pretty obvious, but another aspect of the integrity principle that’s sometimes overlooked is respect for intellectual property. In practical terms, this means you need to honour any patents, copyrights, or other forms of intellectual property that you utilise while undertaking your research. In the same vein, you shouldn’t use any unpublished data, methods, or results without explicit, written permission from the respective owner.

Linked to all of this is the broader issue of plagiarism. Needless to say, if you’re drawing on someone else’s published work, be sure to cite your sources in the correct format. To make life easier, use a reference manager such as Mendeley or Zotero to ensure that your citations and reference list are perfectly polished.

FAQs: Research Ethics

What is informed consent?

Informed consent simply means providing your potential participants with all necessary information about the study. This should include information regarding the study’s purpose, procedures, risks, and benefits. This information allows your potential participants to make a voluntary and informed decision about whether to participate.

How should I obtain consent from non-English speaking participants?

What about animals?

When conducting research with animals, ensure you adhere to ethical guidelines for the humane treatment of animals. Again, the exact requirements here will vary between institutions, but typically include minimising pain and distress, using alternatives where possible, and obtaining approval from an animal care and use committee.

What is the role of the ERB or IRB?

An ethics review board (ERB) or institutional review board (IRB) evaluates research proposals to ensure they meet ethical standards. The board reviews study designs, consent forms, and data handling procedures, to protect participants’ welfare and rights.

How can I obtain ethical approval for my project?

This varies between universities, but you will typically need to submit a detailed research proposal to your institution’s ethics committee. This proposal should include your research objectives, methods, and how you plan to address ethical considerations like informed consent, confidentiality, and risk minimisation.

How do I ensure ethical collaboration when working with colleagues?

Collaborative research should be conducted with mutual respect and clear agreements on roles, contributions, and publication credits. Open communication is key to preventing conflicts and misunderstandings. Also, be sure to check whether your university has any specific requirements with regards to collaborative efforts and division of labour.

How should I address ethical concerns relating to my funding source?

Key takeaways: Research ethics 101

Here’s a quick recap of the key points we’ve covered:

- Research ethics are a set of principles that ensure that your study is conducted responsibly.

- It’s essential that you design your study around these principles, or it simply won’t get approved.

- The four ethics principles we looked at are: respect for persons, beneficence, objectivity and integrity.

As mentioned, the exact requirements will vary slightly depending on the institution and country, so be sure to thoroughly review your university’s research ethics policy before you start developing your study.


What Are the Ethical Considerations in Research Design?

When I began work on my thesis, I was focused entirely on my research. However, as I made my way through it, I realized that research ethics is a core aspect of research work and the foundation of research design.

Research ethics play a crucial role in ensuring the responsible conduct of research.

Let us look into some of the major ethical considerations in research design.

Ethical Issues in Research

There are many organizations, like the Committee on Publication Ethics, dedicated to promoting ethics in scientific research. These organizations agree that ethics is not an afterthought or side note to the research study. It is an integral aspect of research that needs to remain at the forefront of our work.

The research design must address specific research questions. Hence, the conclusions of the study must correlate to the questions posed and the results. Also, research ethics demands that the methods used must relate specifically to the research questions.

Voluntary Participation and Consent

An individual should at no point feel any coercion to participate in a study. This includes any type of persuasion or deception in attempting to gain an individual’s trust.

Informed consent means that an individual must give their explicit consent to participate in the study. You can think of a consent form as an agreement of trust between the researcher and the participants.

Sampling is the first step in research design. You will need to explain why you want a particular group of participants and why you left out certain people or groups. In addition, if your sample includes children or individuals with special needs, you will have additional requirements to address, such as parental permission.

Confidentiality

The third ethics principle of the Economic and Social Research Council (ESRC) states that: “The confidentiality of the information supplied by research subjects and the anonymity of respondents must be respected.” However, sometimes confidentiality is limited. For example, if a participant is at risk of harm, we must protect them. This might require releasing confidential information.

Risk of Harm

We should do everything in our power to protect study participants. For this, we should focus on the risk to benefit ratio. If possible risks outweigh the benefits, then we should abandon or redesign the study. Risk of harm also requires us to measure the risk to benefit ratio as the study progresses.

Research Methods

We know there are numerous research methods. However, when it comes to ethical considerations, some key questions can help us find the right approach for our studies.

i. Which methods most effectively fit the aims of your research?

ii. What are the strengths and restrictions of a particular method?

iii. Are there potential risks when using a particular research method?

For more guidance, you can refer to the ESRC Framework for Research Ethics.

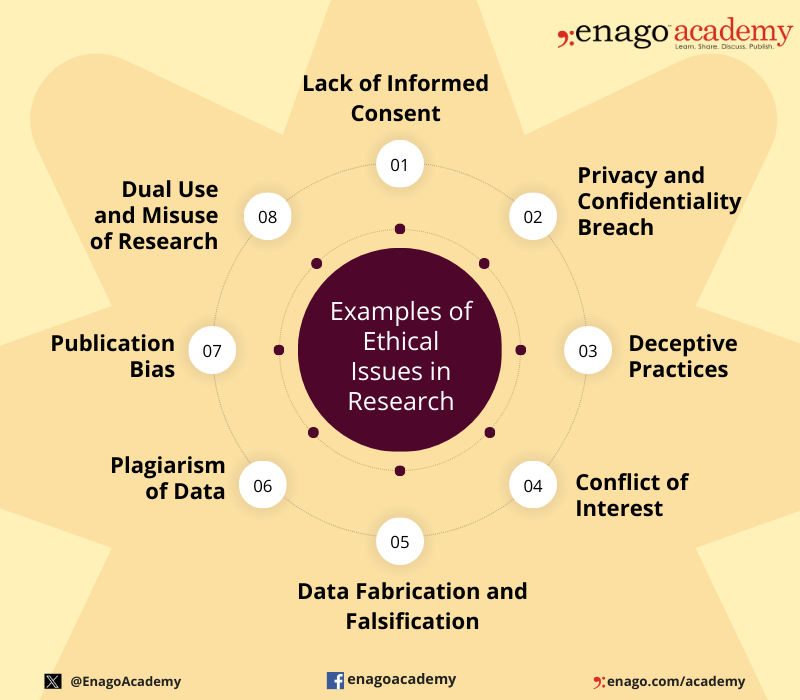

Ethical issues in research can arise at various stages of the research process and involve different aspects of the study.

Institutional Review Boards

The importance of ethics in research cannot be overstated. Following ethical guidelines will ensure your study’s validity and promote its contribution to scientific study. On a personal level, you will strengthen your research and increase your opportunities to gain funding.

To address the need for ethical considerations, most institutions have their own Institutional Review Board (IRB). An IRB secures the safety of human participants and prevents violation of human rights. It reviews the research aims and methodologies to ensure ethical practices are followed. If a research design does not follow the set ethical guidelines, then the researcher will have to amend their study.

Applying for Ethical Approval

Applications for ethical approval will differ across institutions. Regardless, they focus on the benefits of your research and the risk-to-benefit ratio concerning participants. Therefore, you need to effectively address both in order to get ethical clearance.

Participants

It is vital that you make it clear that individuals are provided with sufficient information in order to make an informed decision on their participation. In addition, you need to demonstrate that the ethical issues of consent, risk of harm, and confidentiality are clearly defined.

Benefits of the Study

You need to prove to the panel that your work is essential and will yield results that contribute to the scientific community. For this, you should demonstrate the following:

i. The conduct of research guarantees the quality and integrity of results.

ii. The research will be properly distributed.

iii. The aims of the research are clear and the methodology is appropriate.

Integrity and transparency are vital in research. Ethics committees expect you to share any actual or potential conflicts of interest that could affect your work. In addition, you have to be honest and transparent throughout the approval process and the research process.

The Dangers of Unethical Practices

There is a reason to follow ethical guidelines. Without these guidelines, our research will suffer. More importantly, people could suffer.

The following are just two examples of infamous cases of unethical research practices that demonstrate the importance of adhering to ethical standards:

- The Stanford Prison Experiment (1971) aimed to investigate the psychological effects of power using the relationship between prisoners and prison officers. Those assigned the role of “prison officers” embraced measures that exposed “prisoners” to psychological and physical harm. In this case, there was voluntary participation. However, there was disregard for the welfare of the participants.

- In 2018, Chinese scientist He Jiankui announced his work on genetically edited babies. Over 100 Chinese scientists denounced this research, calling it “crazy” and “shocking and unacceptable.” This research shows a troubling attitude of “do first, debate later” and a disregard for the ethical concerns of manipulating the human body. Wang Yuedan, a professor of immunology at Peking University, calls this “an ethics disaster for the world” and demands strict punishments for this type of ethics violation.

What are your experiences with research ethics? How have you developed an ethical approach to research design? Please share your thoughts with us in the comments section below.


A guide to ethical considerations in research

Last updated

12 March 2023

Reviewed by

Miroslav Damyanov

Whether you are conducting a survey, running focus groups, doing field research, or holding interviews, the chances are participants will be a part of the process.

Taking ethical considerations into account and following all obligations are essential when people are involved in your research. Upholding academic integrity is another crucial ethical concern in all research types.

So, how can you protect your participants and ensure that your research is ethical? Let’s take a closer look at the ethical considerations in research and the best practices to follow.


- The importance of ethical research

Research ethics are integral to all forms of research. They help protect participants’ rights, ensure that the research is valid and accurate, and help minimize any risk of harm during the process.

When people are involved in your research, it’s particularly important to consider whether your planned research method follows ethical practices.

You might ask questions such as:

Will our participants be protected?

Is there a risk of any harm?

Are we doing all we can to protect the personal data and information we collect?

Does our study include any bias?

How can we ensure that the results will be accurate and valid?

Will our research impact public safety?

Is there a more ethical way to complete the research?

Conducting research unethically and not protecting participants’ rights can have serious consequences. It can discredit the entire study. Human rights, dignity, and research integrity should all be front of mind when you are conducting research.

- How to conduct ethical research

Before kicking off any project, the entire team must be familiar with ethical best practices. These include the considerations below.

Voluntary participation

In an ethical study, all participants have chosen to be part of the research. They must have voluntarily opted in without any pressure or coercion to do so. They must be aware that they are part of a research study. Their information must not be used against their will.

To ensure voluntary participation, make it clear at the outset that the person is opting into the process.

While participants may agree to be part of a study for a certain duration, they are allowed to change their minds. Participants must be free to leave or withdraw from the study at any time. They don’t need to give a reason.

Informed consent

Before kicking off any research, it’s also important to gain consent from all participants. This ensures participants are clear that they are part of a research study and understand all of the information related to it.

Gaining informed consent usually involves a written consent form—physical or digital—that participants can sign.

Best practice informed consent generally includes the following:

An explanation of what the study is

The duration of the study

The expectations of participants

Any potential risks

An explanation that participants are free to withdraw at any time

Contact information for the research supervisor

When obtaining informed consent, you should ensure that all parties truly understand what they are signing and their obligations as a participant. There should never be any coercion to sign.

Anonymity

Anonymity is key to ensuring that participants cannot be identified through their data. Personal information includes things like participants’ names, addresses, emails, phone numbers, characteristics, and photos.

However, making information truly anonymous can be challenging, especially if personal information is a necessary part of the research.

To maintain a degree of anonymity, avoid gathering any information you don’t need. This will minimize the risk of participants being identified.

Another useful tool is data pseudonymization, which makes it harder to directly link information to a real person. Data pseudonymization means giving participants fake names or mock information to protect their identity. You could, for example, replace participants’ names with codes.
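As a rough illustration of replacing names with codes, the sketch below derives a stable code from each name using a keyed hash. This is one possible technique, not the only one: the `pseudonym` function, the `P-` prefix, and the key value are hypothetical, and the secret key is assumed to be stored separately from the research data.

```python
import hashlib
import hmac


def pseudonym(name, secret_key):
    """Derive a stable code for a name using HMAC-SHA256.

    The same name and key always give the same code, so records for one
    participant stay linked. Without the key, the code cannot be
    recomputed from a guessed name.
    """
    normalized = name.strip().lower().encode("utf-8")
    digest = hmac.new(secret_key, normalized, hashlib.sha256).hexdigest()
    return "P-" + digest[:10]


# Assumption: in practice the key is generated randomly and kept
# away from the dataset, not hard-coded like this.
study_key = b"example-key-stored-separately"

interviews = [
    {"name": "Ada Lovelace", "quote": "The onboarding was confusing."},
    {"name": "Alan Turing", "quote": "Search worked well for me."},
]

coded = [
    {"participant": pseudonym(row["name"], study_key), "quote": row["quote"]}
    for row in interviews
]
```

Pseudonymized data is still personal data for anyone who holds the key, so the key needs the same protection as the original names. Free-text fields such as quotes can also contain identifying details and may need separate review.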

Confidentiality

Keeping data confidential is a critical aspect of all forms of research. You should communicate to all participants that their information will be protected and then take active steps to ensure that happens.

Data protection has become a prominent concern in recent years and should be taken seriously. The more information you gather, the more important it is to protect that data.

There are many ways to protect data, including the following:

Restricted access: Information should only be accessible to the researchers involved in the project to limit the risk of breaches.

Password protection: Information should not be accessible without a password that complies with secure password guidelines.

Encrypted data: In this day and age, password protection isn’t usually sufficient. Encrypting the data can help ensure its security.

Data retention: All organizations should uphold a data retention policy whereby data gathered should only be held for a certain period of time. This minimizes the risk of breaches further down the line.
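A retention policy is easier to uphold when expired records are identified automatically. The sketch below shows the idea under simple assumptions: each record carries a `collected_on` date (a hypothetical field name), and the 365-day window is an example value rather than a recommended period.

```python
from datetime import date, timedelta


def split_by_retention(records, today, retention_days):
    """Separate records inside the retention window from expired ones.

    A record collected exactly on the cutoff date is still kept.
    """
    cutoff = today - timedelta(days=retention_days)
    keep = [r for r in records if r["collected_on"] >= cutoff]
    expired = [r for r in records if r["collected_on"] < cutoff]
    return keep, expired


stored = [
    {"participant_id": 101, "collected_on": date(2022, 12, 31)},
    {"participant_id": 102, "collected_on": date(2023, 1, 1)},
    {"participant_id": 103, "collected_on": date(2023, 6, 1)},
]

# Example: a 365-day retention window evaluated on 1 January 2024.
keep, expired = split_by_retention(stored, date(2024, 1, 1), 365)
```

The function only sorts records into two groups. Securely deleting the expired ones, including any backups, is a separate step that depends on where the data is stored.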

In research where participants are grouped together (such as in focus groups), ask participants not to pass on what has been discussed. This helps maintain the group’s privacy.

Data falsification

Regardless of what your study is about or whether it involves humans, it’s always unethical to falsify data or information. That means editing or changing any data that has been gathered or gathering data in ways that skew the results.

Bias in research is highly problematic and can significantly impact research integrity. Data falsification or misrepresentation can have serious consequences.

Take the case of Korean researcher Hwang Woo-suk, for example. Hwang, once considered a scientific leader in stem-cell research, was found to have fabricated experiments in the field and committed ethical violations. Once this was discovered, he was fired from his role and later received a two-year suspended prison sentence.

All conflicts of interest should be declared at the outset to avoid any bias or risk of fabrication in the research process. Data must be collected and recorded accurately, and analysis must be completed impartially.

If conflicts do arise during the study, researchers may need to step back to maintain the study’s integrity. Outsourcing research to neutral third parties is necessary in some cases.

Potential for harm

Another consideration is the potential for harm. When completing research, it’s important to ensure that your participants will be safe throughout the study’s duration.

Harm during research could occur in many forms.

Physical harm may occur if your participants are asked to perform a physical activity, or if they are involved in a medical study.

Psychological harm can occur if questions or activities involve triggering or sensitive topics, or if participants are asked to complete potentially embarrassing tasks.

Harm can be caused through a data breach or privacy concern.

A study can cause harm if the participants don’t feel comfortable with the study expectations or their supervisors.

Maintaining the physical and mental well-being of all participants throughout studies is an essential aspect of ethical research.

- Gaining ethical approval

Gaining ethical approval may be necessary before conducting some types of research.

The US Department of Health and Human Services (HHS) and the US Food and Drug Administration (FDA) advise that approval is likely required for studies involving people.

To gain approval, it’s necessary to submit a proposal to an Institutional Review Board (IRB). The board will check the proposal and ensure that the research aligns with ethical practices. It will allow the project to proceed if it meets requirements.

Not gaining appropriate approval could invalidate your study, so it’s essential to pay attention to all local guidelines and laws.

- The dangers of unethical practices

Not maintaining ethical standards in research isn’t just questionable—it can be dangerous too. Many historical cases show just how widespread the ramifications can be.

The case of Korean researcher Hwang Woo-suk shows just how critical it is to obtain information ethically and accurately represent findings.

A case in 1998, which involved fraudulent data reporting, further proves this point.

The study, now debunked, was completed by Andrew Wakefield. It suggested there may be a link between the measles, mumps, and rubella (MMR) vaccine and autism in children. It was later found that the data was manipulated to show a causal link when there wasn’t one. Wakefield’s medical license was removed as a result, but the fraudulent study was still widely cited and continues to cause vaccine hesitancy among many parents.

Large organizational bodies have also been a part of unethical research. The alcohol industry, for example, was found to be highly influential in a major public health study in an attempt to prove that moderate alcohol consumption had health benefits. Five major alcohol companies pledged approximately $66 million to fund the study.

However, the World Health Organization (WHO) is clear that research shows there is no safe level of alcohol consumption. After pressure from many organizations, the study was eventually pulled because of bias introduced by the alcohol industry. Despite this, the idea that moderate alcohol consumption is better than abstaining may still appear in public discourse.

In more extreme cases, unethical research has led to medical studies being completed on people without their knowledge and against their will. The atrocities committed in Nazi Germany during World War II are an example.

Unethical practices in research are not just problematic or in conflict with academic integrity; they can seriously harm public health and safety.

- The ethical way to research

Considering ethical concerns and adopting best practices throughout studies is essential when conducting research.

When people are involved in studies, it’s important to consider their rights. They must not be coerced into participating, and they should be protected throughout the process.

Accurate reporting, unbiased results, and a genuine interest in answering questions rather than confirming assumptions are all essential aspects of ethical research.

Ethical research ultimately means producing true and valuable results for the benefit of everyone impacted by your study.

What are ethical considerations in research?

Ethical research involves a series of guidelines and considerations to ensure that the information gathered is valid and reliable. These guidelines ensure that:

- People are not harmed during research

- Participants have data protection and anonymity

- Academic integrity is upheld

Not maintaining ethics in research can have serious consequences for those involved in the studies, the broader public, and policymakers.

What are the most common ethical considerations?

To maintain integrity and validity in research, bias must be minimized, data must be reported accurately, and studies must be represented clearly.

The most common ethical guidelines for research involving people include avoiding harm, protecting data, preserving anonymity, obtaining informed consent, and maintaining confidentiality.

What are the ethical issues in secondary research?

Using secondary data is generally considered an ethical practice. That’s because the use of secondary data minimizes the impact on participants, reduces the need for additional funding, and maximizes the value of the data collection.

However, secondary research still has risks. For example, the risk of data breaches increases as more parties gain access to the information.

To minimize the risk, researchers should consider anonymizing or pseudonymizing the data before it is passed on. Furthermore, using the data should not cause any harm or distress to participants.
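As a rough illustration of pseudonymization before sharing, the sketch below replaces a participant identifier with a keyed hash and drops direct identifiers. The field names (`participant_id`, `name`, `email`) and both function names are hypothetical, and the approach (HMAC-SHA256 with a secret key held only by the original research team) is one common option rather than a prescribed method. Pseudonymized data can still be re-identified through the remaining fields, so this does not make a dataset anonymous on its own.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Return a stable pseudonym for an identifier using HMAC-SHA256.

    The same identifier and key always give the same pseudonym, so records
    can still be linked, but the identifier cannot be recomputed without the key.
    """
    digest = hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256)
    return "P-" + digest.hexdigest()[:16]


def pseudonymize_records(records, secret_key,
                         id_field="participant_id",
                         drop_fields=("name", "email")):
    """Copy records, dropping direct identifiers and replacing the ID field.

    The field names are assumptions for this example, not a standard schema.
    """
    cleaned = []
    for record in records:
        row = {k: v for k, v in record.items() if k not in drop_fields}
        row[id_field] = pseudonymize(str(record[id_field]), secret_key)
        cleaned.append(row)
    return cleaned


if __name__ == "__main__":
    # Hypothetical key and data; a real key should be generated randomly
    # and stored separately from the shared dataset.
    key = b"replace-with-a-randomly-generated-secret"
    data = [{"participant_id": "1001", "name": "A. Example",
             "email": "a@example.org", "score": 7}]
    print(pseudonymize_records(data, key))
```

Keeping the key with the original team means that secondary users can link records belonging to the same participant without being able to recover who that participant is from the identifier alone.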



Addressing ethical issues in your research proposal

This article explores the ethical issues that may arise in your proposed study during your doctoral research degree.

What ethical principles apply when planning and conducting research?

Research ethics are the moral principles that govern how researchers conduct their studies (Wellcome Trust, 2014). As there are elements of uncertainty and risk involved in any study, every researcher has to consider how they can uphold these ethical principles and conduct the research in a way that protects the interests and welfare of participants and other stakeholders (such as organisations).

You will need to consider the ethical issues that might arise in your proposed study. Consideration of the fundamental ethical principles that underpin all research will help you to identify the key issues and how these could be addressed. As you are probably a practitioner who wants to undertake research within your workplace, consider how your role as an ‘insider’ influences how you will conduct your study. Think about the ethical issues that might arise when you become an insider researcher (for example, relating to trust, confidentiality and anonymity).

What key ethical principles do you think will be important when planning or conducting your research, particularly as an insider? Principles that come to mind might include autonomy, respect, dignity, privacy, informed consent and confidentiality. You may also have identified principles such as competence, integrity, wellbeing, justice and non-discrimination.

Key ethical issues that you will address as an insider researcher include:

- Gaining trust

- Avoiding coercion when recruiting colleagues or other participants (such as students or service users)

- Practical challenges relating to ensuring the confidentiality and anonymity of organisations and staff or other participants.

(Heslop et al, 2018)

A fuller discussion of ethical principles is available from the British Psychological Society’s Code of Human Research Ethics (BPS, 2021).

You can also refer to guidance from the British Educational Research Association and the British Association for Applied Linguistics.

Ethical principles are essential for protecting the interests of research participants, including maximising the benefits and minimising any risks associated with taking part in a study. These principles describe ethical conduct which reflects the integrity of the researcher, promotes the wellbeing of participants and ensures high-quality research is conducted (Health Research Authority, 2022).

Research ethics is therefore not simply about gaining ethical approval for your study to be conducted. Research ethics relates to your moral conduct as a doctoral researcher and will apply throughout your study from design to dissemination (British Psychological Society, 2021). When you apply to undertake a doctorate, you will need to clearly indicate in your proposal that you understand these ethical principles and are committed to upholding them.

Where can I find ethical guidance and resources?

Professional bodies, learned societies, health and social care authorities, academic publications, Research Ethics Committees and research organisations provide a range of ethical guidance and resources. International codes such as the Universal Declaration of Human Rights underpin ethical frameworks (United Nations, 1948).

You may be aware of key legislation in your own country or the country where you plan to undertake the research, including laws relating to consent, data protection and decision-making capacity, for example, the Data Protection Act 2018 (UK). If you want to find out more about becoming an ethical researcher, check out the Open University short course Becoming an ethical researcher.

You should be able to justify the research decisions you make. Utilising these resources will guide your ethical judgements when writing your proposal and ultimately when designing and conducting your research study. The Ethical Guidelines for Educational Research (British Educational Research Association, 2018) identifies the key responsibilities you will have when you conduct your research, including your responsibilities to a range of stakeholders, as follows:

- to your participants (e.g. to appropriately inform them, facilitate their participation and support them)

- to clients, stakeholders and sponsors

- to the community of educational or health and social care researchers

- for publication and dissemination

- for your own wellbeing and development

The National Institute for Health and Care Research (no date) has emphasised the need to promote equality, diversity and inclusion when undertaking research, particularly to address long-standing social and health inequalities. Research should be informed by the diversity of people’s experiences and insights, so that it will lead to the development of practice that addresses genuine need. A commitment to equality, diversity and inclusion aims to eradicate prejudice and discrimination on the basis of an individual’s or group’s protected characteristics, such as sex (gender), disability, race or sexual orientation, in line with the Equality Act 2010.

The NIHR has produced guidance for enhancing the inclusion of ‘under-served groups’ when designing a research study (2020). Although the guidance refers to clinical research, it is relevant to research more broadly.

You should consider how you will promote equality and diversity in your planned study, including through aspects such as your research topic or question, the methodology you will use, the participants you plan to recruit and how you will analyse and interpret your data.

What ethical issues do I need to consider when writing my research proposal?

You might be planning to undertake research in a health, social care, educational or other setting, including observations and interviews. The following prompts should help you to identify key ethical issues that you need to bear in mind when undertaking research in such settings.

1. Imagine you are a potential participant. Think about the questions and concerns that you might have:

- How would you feel if a researcher sat in your space and took notes, completed a checklist, or made an audio or film recording?

- What harm might a researcher cause by observing or interviewing you and others?

- What would you want to know about the researcher and ask them about the study before giving consent?

- When imagining you are the participant, how could the researcher make you feel more comfortable to be observed or interviewed?

2. Having considered the perspective of your potential participant, how would you take account of concerns such as privacy, consent, wellbeing and power in your research proposal?

[Adapted from OpenLearn course: Becoming an ethical researcher, Week 2 Activity 3]

The ethical issues to be considered will vary depending on your organisational context/role, the types of participants you plan to recruit (for example, children, adults with mental health problems), the research methods you will use, and the types of data you will collect. You will need to decide how to recruit your participants so you do not inappropriately exclude anyone. Consider what methods may be necessary to facilitate their voice and how you can obtain their consent to taking part or ensure that consent is obtained from someone else as necessary, for example, a parent in the case of a child.