

- Open access

- Published: 09 October 2023

Sign language recognition using the fusion of image and hand landmarks through multi-headed convolutional neural network

- Refat Khan Pathan 1 ,

- Munmun Biswas 2 ,

- Suraiya Yasmin 3 ,

- Mayeen Uddin Khandaker ORCID: orcid.org/0000-0003-3772-294X 4 , 5 ,

- Mohammad Salman 6 &

- Ahmed A. F. Youssef 6

Scientific Reports volume 13, Article number: 16975 (2023)


- Computational science

- Image processing

Sign language recognition is a breakthrough for communication within the deaf-mute community and has been a critical research topic for years. Although some previous studies have successfully recognized sign language, they require many costly instruments, including sensors, devices, and high-end processing power. However, such drawbacks can be easily overcome by employing artificial intelligence-based techniques. Since, in this era of advanced mobile technology, capturing video or images with a camera is easy, this study demonstrates a cost-effective technique to detect American Sign Language (ASL) using an image dataset. Here, the “Finger Spelling, A” dataset has been used, with 24 letters (excluding J and Z, as they involve motion). The main reason for using this dataset is that its images have complex backgrounds with different environments and scene colors. Two layers of image processing have been used: in the first layer, the images are processed as a whole for training, and in the second layer, the hand landmarks are extracted. A multi-headed convolutional neural network (CNN) model has been proposed to train on these two layers and tested with 30% of the dataset. To avoid overfitting, data augmentation and dynamic learning rate reduction have been used. With the proposed model, a test accuracy of 98.981% has been achieved. It is expected that this study may help to develop an efficient human–machine communication system for the deaf-mute community.


Introduction

Spoken language is the medium of communication for the majority of the population, and it allows a large part of the population to communicate. Nonetheless, a section of the population cannot communicate with most others through spoken language. Mute people cannot convey meaning using spoken language. Hearing impairment is a disability that weakens hearing or makes a person unable to hear, while muteness is a disability that impedes speech and makes a person unable to talk. Both conditions affect only hearing or speech; people with them can still do many other things. Communication is the only thing that isolates them from ordinary people 1. As there are so many languages in the world, a unique language is needed for them to express their thoughts and opinions in a way that ordinary people can understand, and such a language is called sign language. Understanding sign language is an arduous task, an ability that must be acquired through training.

Many methods are available that use different inputs, such as images (2D, 3D), sensor data (hand glove 2, Kinect sensor 3, neuromorphic sensor 4), and videos. In all of these cases, the captured images are often excessively noisy, so a high level of pre-processing is required. The available online datasets are either already processed or captured in a lab environment, which makes it easy for recent advanced AI models to train and evaluate on them but leaves those models prone to errors in real-life applications with different kinds of noise. Accordingly, there is a basic need for a model that can deal with noisy images and still deliver good results. Different machine learning methods can be used to perform the classification and recognition of images. Apart from recognizing static images, work has been done on depth-camera detection and video processing 5, 6, 7. Various components of these systems have been implemented in different programming languages to maximize the effectiveness of the final system. The problem can be approached through three broad methodologies: first, static image recognition techniques with pre-processing procedures; second, deep learning models; and third, Hidden Markov Models.

Sign language guides this part of the community and enables smooth communication among people with speech and hearing difficulties (deaf and mute). They use hand signals along with facial expressions and body movements to interact. Yet, even though it is a global language, not many people learn to communicate through sign language gestures 8. Hand motions make up a significant part of sign language vocabulary, while facial expressions and body movements serve to emphasize the words and phrases communicated by hand motions. Hand motions can be static or dynamic 9, 10. There are methodologies for motion detection using the dynamic vision sensor (DVS). For example, Amir et al. 11 presented an event-based gesture recognition system that processes the event stream using TrueNorth, a natively event-based processor from International Business Machines (IBM). They use a temporal filter cascade to create spatio-temporal frames, which are classified by a CNN running on the event-based processor, and they reported an accuracy of 96.46%. However, in real-life scenarios the background is not static, so the reported power-saving process might not work properly. Jun Haeng Lee et al. 12 proposed a motion classification method using two DVSs to obtain a stereo-vision system. They used spiking neurons to process the incoming events, but their approach faces the same real-life issue. Static hand signals, also called hand postures, are formed by different shapes and orientations of the hands without conveying any movement information. Dynamic hand motions comprise a sequence of hand postures with related movement information 13. Using facial expressions, static hand images, and hand signals, sign language provides the means to convey meaning just as spoken languages do; there are also different kinds of gesture-based communication 14.

In this work, we have applied a fusion of traditional image processing and extracted hand landmarks, trained with a multi-headed CNN, so that the two inputs can complement each other's weights at the concatenation layer. The main objective is to achieve a better detection rate without relying on a traditional single-channel CNN. This approach has been shown to work well with less computational power and fewer epochs on medical image datasets 15. The rest of the paper is organized as follows: the "Literature review" section reviews related work; the "Materials and methods" section has three subsections, covering the dataset ("Dataset description"), image pre-processing ("Pre-processing of image dataset"), and the working procedure ("Working procedure"); results are analyzed in the "Result analysis" section; and the "Conclusion" section concludes the paper.

Literature review

State-of-the-art techniques have centered on deep learning models to improve accuracy and reduce execution time. CNNs have shown large improvements in visual object recognition 16, natural language processing 17, scene labeling 18, medical image processing 15, and so on. Despite these accomplishments, there is little work on applying CNNs to video classification. This is partly because of the difficulty of adapting CNNs to incorporate both spatial and temporal information. A model using special hardware components such as a depth camera has been used to obtain depth variation in the image as an extra feature for correlation, followed by a CNN to produce the results 19, but it still has low accuracy. An innovative technique that does not need a pre-trained model was created using a capsule network and adaptive pooling 11.

Furthermore, it was shown that reducing the layers of a CNN in a greedy manner and developing a deep belief network produced superior outcomes compared to other fundamental methodologies 20. Feature extraction using the scale-invariant feature transform (SIFT) combined with neural network classification was developed to obtain ideal results 21. In one method, the images were converted into an RGB scheme, the data were extended with motion and depth channels, and finally a 3D recurrent convolutional neural network (3DRCNN) was used to build a working system 5. In another 22, Canny edge detection and Oriented FAST and Rotated BRIEF (ORB) were used: ORB feature detection and the K-means clustering algorithm created a bag-of-features model over all descriptors. However, that approach relies on plain backgrounds with easily detected edges and depends entirely on them; if the edges give wrong information, the model's accuracy may drop, which remains the main problem to solve.
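The ORB plus K-means bag-of-features step described for ref. 22 can be sketched as follows. This is a minimal NumPy illustration under stated assumptions, not the cited authors' code: ORB descriptors (32 bytes each in OpenCV) are assumed to be unpacked to 256 bits and treated as real vectors so that plain Euclidean K-means applies, and the random stand-in descriptors, the vocabulary size `k=8`, and the function names `kmeans` and `bag_of_features` are hypothetical.

```python
import numpy as np

def kmeans(data, k, iters=20, seed=0):
    """Plain Lloyd's k-means; returns a (k, d) array of cluster centres."""
    rng = np.random.default_rng(seed)
    centres = data[rng.choice(len(data), size=k, replace=False)].astype(float)
    for _ in range(iters):
        # Assign every descriptor to its nearest centre (squared Euclidean distance).
        dists = ((data[:, None, :] - centres[None, :, :]) ** 2).sum(axis=2)
        labels = dists.argmin(axis=1)
        for j in range(k):
            members = data[labels == j]
            if len(members):  # keep the old centre if a cluster becomes empty
                centres[j] = members.mean(axis=0)
    return centres

def bag_of_features(descriptors, centres):
    """Normalised histogram of visual-word occurrences for one image."""
    dists = ((descriptors[:, None, :] - centres[None, :, :]) ** 2).sum(axis=2)
    words = dists.argmin(axis=1)
    hist = np.bincount(words, minlength=len(centres)).astype(float)
    return hist / hist.sum()

# Hypothetical stand-in for ORB output: random 32-byte descriptors unpacked to 256 bits.
rng = np.random.default_rng(1)
raw = rng.integers(0, 256, size=(500, 32)).astype(np.uint8)
all_desc = np.unpackbits(raw, axis=1).astype(float)
vocab = kmeans(all_desc, k=8)                 # visual vocabulary from all descriptors
hist = bag_of_features(all_desc[:60], vocab)  # feature vector for one "image"
```

The resulting fixed-length histogram is what a downstream classifier would consume, regardless of how many keypoints each image produced.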

In recent years, deep learning approaches have become standard for improving the recognition accuracy of sign language models. Using the Faster Region-based Convolutional Neural Network (Faster R-CNN) 23, a CNN model is applied for hand recognition in the image data. Rastgoo et al. 24 proposed a method in which they cropped the images, fused RGB and depth images with a restricted Boltzmann machine (RBM), added two types of noise (Gaussian noise and salt-and-pepper noise), and prepared the data for training. As biologically inspired deep learning models, CNNs accomplish all three phases within a single framework trained from raw pixel values to classifier outputs, but they need extreme computational power. The authors of ref. 25 proposed 3D CNNs in which the third dimension incorporates both spatial and temporal information. They take several neighboring frames as input and perform 3D convolution in the convolutional layers. Along similar lines, the study reported in ref. 26 proposed regularizing the outputs with high-level features, combining the predictions of a wide range of models. They applied the developed models to recognize human actions and achieved better performance than benchmark methods. However, it is not certain that this approach works for hand gestures, as they detected the face first and then body movement 27.

On the other hand, Microsoft and Leap Motion have developed distinct approaches to identifying and tracking a user's hand and body movement by introducing the Kinect and the Leap Motion Controller (LMC), respectively. Kinect recognizes the body skeleton and tracks the hands, whereas the LMC detects and tracks hands with its built-in cameras and infrared sensors 3, 28. Using the provided framework, Sykora et al. 7 used the Kinect system to capture the depth data of 10 hand motions and classified them with the speeded-up robust features (SURF) technique, reaching 82.8% accuracy; however, the method was not tested on a more extensive database, and its feature extraction methods (SIFT, SURF) may not be invariant to the orientation of gestures. Likewise, Huang et al. 29 proposed a 10-word ASL recognition system using Kinect, evaluated by tenfold cross-validation with an SVM, which achieved a precision rate of 97% using a set of frame-independent features; the most significant problem with this method is segmentation.

The literature shows that most of the models used in this application either depend on a single input or require high computational power. Also, the datasets chosen for training and validating these models have plain backgrounds, which are easier to detect. Our main aim is to show how to reduce both the computational power needed for training and the dependency of model training on a single layer.

Materials and methods

Dataset description

Using a generic single-color background to classify sign language is very common. We intended to avoid such a background and use complex backgrounds with hand images from multiple users to increase the detection complexity. That is why we have used the “ASL Finger Spelling” dataset 30, which contains images of different sizes and orientations with complex backgrounds: over 500 images per sign (24 signs in total) from 4 users (non-native to sign language). The dataset contains separate RGB and depth images; we have worked with the RGB images in this research. The photos were taken in 5 sessions with the same background and lighting. The dataset details are shown in Table 1, and some sample images are shown in Fig. 1.

Sample images from a dataset containing 24 signs from the same user.

Pre-processing of image dataset

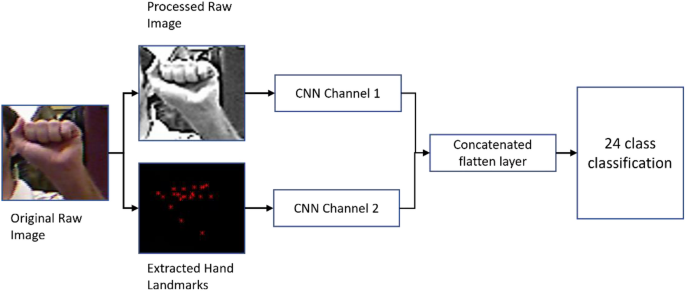

Images were pre-processed for two operations: preparing the original image training set and extracting the hand landmarks. A traditional CNN has one input data channel and one output channel. We use two input data channels and one output channel, so the data need to be prepared for each input individually.

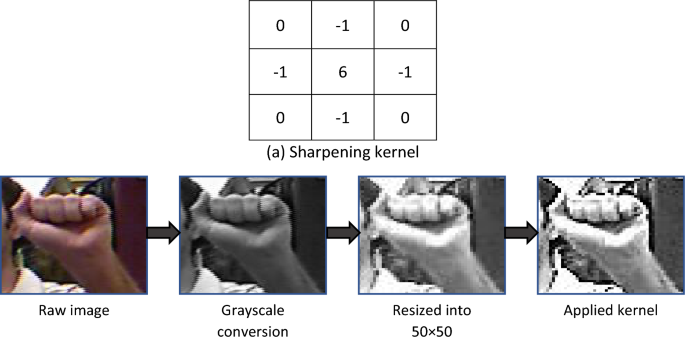

Raw image processing

In raw image processing, we converted the images from RGB to grayscale to reduce color complexity. Then we used a 2D kernel matrix to sharpen the images, as shown in Fig. 2. After that, we resized the images to 50 × 50 pixels for evaluation by the CNN. Finally, we normalized the grayscale values (0–255) by dividing the pixel values by 255, so the new pixel array contains values in the range 0–1. The primary advantage of this normalization is that the CNN trains faster on inputs in the 0–1 range than on larger value ranges.
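A NumPy-only sketch of this raw-image pipeline follows. It is not the authors' code: the paper's sharpening kernel appears only in Fig. 2, so the common 3 × 3 kernel below is an assumption, and nearest-neighbour resizing and edge padding stand in for whatever library routines (for example OpenCV) were actually used. The grayscale weights are the ITU-R BT.601 luma coefficients; the function names are hypothetical.

```python
import numpy as np

# Assumed sharpening kernel (weights sum to 1); the paper's own kernel is in Fig. 2.
SHARPEN = np.array([[0, -1, 0],
                    [-1, 5, -1],
                    [0, -1, 0]], dtype=np.float32)

def to_grayscale(rgb):
    """Weighted sum of R, G, B with ITU-R BT.601 luma weights."""
    return rgb[..., 0] * 0.299 + rgb[..., 1] * 0.587 + rgb[..., 2] * 0.114

def filter2d(img, kernel):
    """Correlate a 2D image with a small kernel, edge-padded so the size is unchanged."""
    kh, kw = kernel.shape
    padded = np.pad(img, ((kh // 2, kh // 2), (kw // 2, kw // 2)), mode="edge")
    out = np.zeros_like(img, dtype=np.float32)
    for i in range(kh):
        for j in range(kw):
            out += kernel[i, j] * padded[i:i + img.shape[0], j:j + img.shape[1]]
    return out

def resize_nearest(img, size=(50, 50)):
    """Nearest-neighbour resize of a 2D array to (height, width)."""
    h, w = img.shape
    rows = np.arange(size[0]) * h // size[0]
    cols = np.arange(size[1]) * w // size[1]
    return img[rows[:, None], cols[None, :]]

def preprocess(rgb_uint8):
    """RGB uint8 image -> 50 x 50 sharpened grayscale array scaled to [0, 1]."""
    gray = to_grayscale(rgb_uint8.astype(np.float32))
    sharp = np.clip(filter2d(gray, SHARPEN), 0, 255)  # keep values in 0-255
    small = resize_nearest(sharp, (50, 50))
    return small / 255.0
```

Because the kernel weights sum to 1, flat regions keep their intensity while edges are amplified, which is the intended sharpening effect.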

Raw image pre-processing with (a) sharpening kernel.

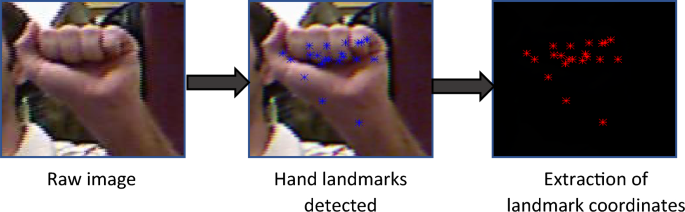

Hand landmark detection

Google’s hand landmark model takes an RGB input with an image size of 224 × 224 × 3. We took the RGB images, converted the pixel values to float32, and resized all the images to 256 × 256 × 3. Applying the model yields 21 three-dimensional landmark coordinates. The landmark detection process is shown in Fig. 3.
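The landmark output can be packed into an array for the second CNN head roughly as follows. This is a hedged sketch: with the legacy MediaPipe Hands Python solution, the landmarks of the first detected hand are typically found in `results.multi_hand_landmarks[0].landmark`, each point exposing `x`, `y` and `z`, but the paper does not state which API it called. The `(21, 3, 1)` layout, the zero-filled fallback when no hand is detected, and the names `landmarks_to_array` and `Point` are assumptions for illustration.

```python
from collections import namedtuple

import numpy as np

NUM_LANDMARKS = 21  # the hand landmark model returns 21 keypoints per hand

def landmarks_to_array(landmarks):
    """Pack 21 (x, y, z) landmarks into a float32 array of shape (21, 3, 1).

    `landmarks` is any sequence of objects with .x, .y and .z attributes.
    None (no hand detected) yields an all-zero array; this fallback is an
    assumption, not something the paper specifies.
    """
    if landmarks is None:
        return np.zeros((NUM_LANDMARKS, 3, 1), dtype=np.float32)
    coords = np.array([[p.x, p.y, p.z] for p in landmarks], dtype=np.float32)
    if coords.shape != (NUM_LANDMARKS, 3):
        raise ValueError(f"expected {NUM_LANDMARKS} landmarks, got {coords.shape[0]}")
    # Trailing channel axis so the array can feed a 2D convolutional head (assumed layout).
    return coords[..., None]

# Usage with hypothetical stand-in points (not real model output).
Point = namedtuple("Point", "x y z")
fake = [Point(i / 21, 1 - i / 21, 0.0) for i in range(NUM_LANDMARKS)]
arr = landmarks_to_array(fake)
```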

Hand landmarks detection and extraction of 21 coordinates.

Working procedure

The whole work is divided into two main parts: raw image processing and hand landmark extraction. After both processing steps were complete, a custom lightweight multi-headed CNN model was built to train on both data streams. Before the fully connected classification layers, the features of both channels were merged so that the model could draw on the better weights from either. This working procedure is illustrated in Fig. 4.

Flow diagram of working procedure.

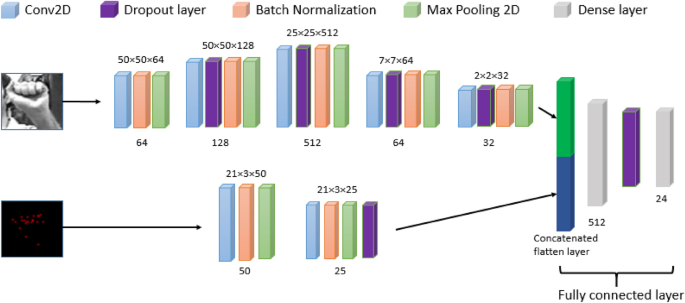

Model building

In this research, we have used a multi-headed CNN, meaning our model has two input data channels. Before this, for comparison, we trained the processed images and the hand landmarks with two separate models. Google’s model does not perform best in “in the wild” situations, so we needed the original images to compensate for the occasional faults in Google’s model. The first head of the model takes the processed images as input, and the second head takes the hand landmark data. The hand landmark head uses two-dimensional convolutional layers with 50 and 25 filters, kernel size (3, 3), ReLU activation, and stride 1; 2D max pooling with pool size (2, 2); batch normalization; and a dropout layer. The image head uses 2D convolutional layers with 32, 64, 128, and 512 filters, kernel size (3, 3), and ReLU activation; 2D max pooling with pool size (2, 2); batch normalization; and dropout layers. After each head's flatten layer, the two heads are concatenated and passed through a dense layer and a dropout layer. Finally, the output dense layer has 24 units with softmax activation. The model was compiled with the Adam optimizer and MSE loss and trained for 50 epochs. Figure 5 illustrates the proposed CNN architecture, and Table 2 shows the model details.
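The fusion stage at the end of the two heads (flatten, concatenate, dense, dropout, 24-way softmax) is sketched below as a NumPy forward pass only. The feature sizes, hidden width, ReLU on the hidden dense layer, and random weights are hypothetical; the convolutional heads, Adam training, and the MSE loss are omitted. In a deep learning framework, this corresponds to a concatenation layer placed between the two flattened head outputs and the dense layers.

```python
import numpy as np

rng = np.random.default_rng(0)

def relu(x):
    return np.maximum(x, 0.0)

def softmax(z):
    z = z - z.max(axis=-1, keepdims=True)  # subtract the row max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def fusion_head(image_features, landmark_features, w_hidden, b_hidden, w_out, b_out,
                dropout_rate=0.0, training=False):
    """Flatten and concatenate both heads, then dense -> dropout -> dense + softmax."""
    x = np.concatenate([image_features.reshape(len(image_features), -1),
                        landmark_features.reshape(len(landmark_features), -1)], axis=1)
    h = relu(x @ w_hidden + b_hidden)  # hidden dense layer (ReLU is an assumption)
    if training and dropout_rate > 0:
        # Inverted dropout: zero units at random and rescale the survivors.
        mask = rng.random(h.shape) >= dropout_rate
        h = h * mask / (1.0 - dropout_rate)
    return softmax(h @ w_out + b_out)  # one probability per letter class

# Hypothetical sizes: 128 image features, 40 landmark features, 32 hidden units, 24 classes.
batch, img_dim, lm_dim, hidden, classes = 4, 128, 40, 32, 24
img_feat = rng.standard_normal((batch, img_dim))
lm_feat = rng.standard_normal((batch, lm_dim))
w1 = rng.standard_normal((img_dim + lm_dim, hidden)) * 0.05
b1 = np.zeros(hidden)
w2 = rng.standard_normal((hidden, classes)) * 0.05
b2 = np.zeros(classes)
probs = fusion_head(img_feat, lm_feat, w1, b1, w2, b2, dropout_rate=0.5, training=True)
```

Because both feature vectors feed the same dense layer, each class score can draw on either head, which is the complementary-weights idea the text describes.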

Proposed multi-headed CNN architecture. Bottom values are the number of filters and top values are output shapes.

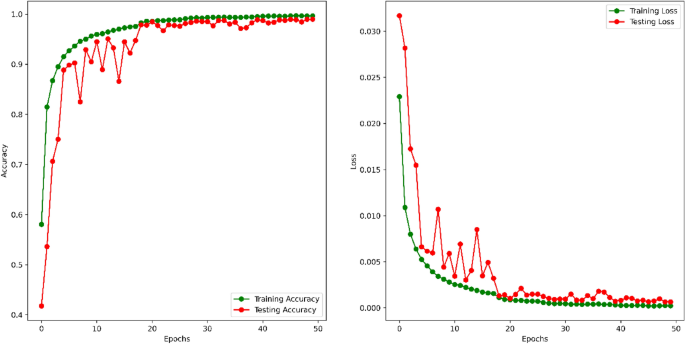

Training and testing

The input images were augmented to make training more difficult so that the model would not overfit. An image data generator performed augmentation with 10° rotation, a 0.1 zoom range, 0.1 width and height shift ranges, and horizontal flips. To further guard against overfitting, we used a dynamic learning rate, monitoring the validation accuracy with a patience of 5, a factor of 0.5, and a minimum learning rate of 0.00001. For training, we used 46,023 images, and for testing, 19,725 images. The training versus testing accuracy and loss over 50 epochs are shown in Fig. 6.
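If the framework was Keras (the paper does not show its code, so this is an assumption), these settings would correspond to `ImageDataGenerator(rotation_range=10, zoom_range=0.1, width_shift_range=0.1, height_shift_range=0.1, horizontal_flip=True)` and `ReduceLROnPlateau(monitor="val_accuracy", factor=0.5, patience=5, min_lr=0.00001)`. To make the learning-rate schedule explicit, here is a simplified pure-Python version that ignores Keras's `min_delta` and `cooldown` options; the class name `PlateauLRScheduler` and the starting rate of 0.001 (Adam's usual default) are hypothetical.

```python
class PlateauLRScheduler:
    """Halve the learning rate when validation accuracy stops improving (simplified)."""

    def __init__(self, lr, factor=0.5, patience=5, min_lr=1e-5):
        self.lr = lr
        self.factor = factor
        self.patience = patience
        self.min_lr = min_lr
        self.best = float("-inf")  # accuracy is monitored, so higher is better
        self.wait = 0              # epochs since the last improvement

    def step(self, val_accuracy):
        """Call once per epoch; returns the learning rate for the next epoch."""
        if val_accuracy > self.best:
            self.best = val_accuracy
            self.wait = 0
        else:
            self.wait += 1
            if self.wait >= self.patience:
                # Reduce by `factor`, but never below `min_lr`.
                self.lr = max(self.lr * self.factor, self.min_lr)
                self.wait = 0
        return self.lr

sched = PlateauLRScheduler(lr=1e-3)
lrs = [sched.step(acc) for acc in [0.5, 0.6, 0.6, 0.6, 0.6, 0.6, 0.6]]
```

In this trace the rate stays at 0.001 until five epochs pass without improvement, then drops to 0.0005; repeated plateaus keep halving it until it reaches the 0.00001 floor.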

Training versus testing accuracy and loss for 50 epochs.

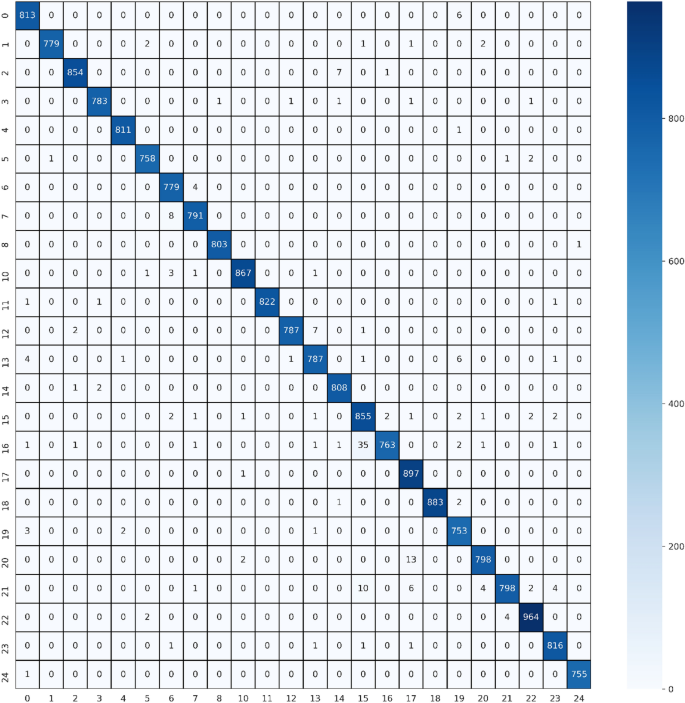

For further evaluation, we calculated the precision, recall, and F1 score of the proposed multi-headed CNN model, which show excellent performance. To compute these values, we first calculated the confusion matrix (shown in Fig. 7). When a sample is positive and is classified as positive, it is called a true positive (TP). When a sample is negative and is classified as negative, it is called a true negative (TN). If a sample is negative but classified as positive, it is called a false positive (FP). And when a sample is positive but classified as negative, it is called a false negative (FN). From these, precision, recall, and F1 score are computed as follows:

Confusion matrix of the testing dataset. Numerical values on the X and Y axes denote the letters in alphabetical order, from A = 0 to Y = 24; indices 9 (J) and 25 (Z) do not occur because the dataset does not contain these letters.

Precision: Precision is the ratio of TP to the total predicted positive observations.

Recall: Recall is the ratio of TP to the total positive observations in the actual class.

F1 score: The F1 score is the harmonic mean of precision and recall.
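Written out, the standard definitions matching these descriptions, computed for each class by treating that class as positive and all other classes as negative, are:

```latex
\mathrm{Precision} = \frac{TP}{TP + FP}, \qquad
\mathrm{Recall} = \frac{TP}{TP + FN}, \qquad
F_1 = \frac{2 \cdot \mathrm{Precision} \cdot \mathrm{Recall}}{\mathrm{Precision} + \mathrm{Recall}}
```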

The Precision, Recall, and F1 score for 24 classes are shown in Table 3 .
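To illustrate how per-class values like those in Table 3 can be derived from a confusion matrix, here is a small NumPy sketch. It assumes rows hold true classes and columns hold predicted classes (the orientation of Fig. 7 is not stated). The function name `per_class_metrics` and the toy 3 × 3 matrix in the usage note are hypothetical. TN is not needed for these three metrics, and classes with no predictions get a precision of 0 by convention here.

```python
import numpy as np

def per_class_metrics(cm):
    """Precision, recall and F1 for every class of a square confusion matrix.

    Assumed convention: cm[i, j] counts samples of true class i predicted as class j.
    """
    cm = np.asarray(cm, dtype=float)
    tp = np.diag(cm)
    fp = cm.sum(axis=0) - tp  # predicted as this class but belonging to another
    fn = cm.sum(axis=1) - tp  # belonging to this class but predicted as another
    with np.errstate(divide="ignore", invalid="ignore"):
        precision = np.where(tp + fp > 0, tp / (tp + fp), 0.0)
        recall = np.where(tp + fn > 0, tp / (tp + fn), 0.0)
        f1 = np.where(precision + recall > 0,
                      2 * precision * recall / (precision + recall), 0.0)
    return precision, recall, f1

# Class labels of the dataset: the alphabet without J and Z (24 letters).
LETTERS = [c for c in "ABCDEFGHIJKLMNOPQRSTUVWXYZ" if c not in "JZ"]
```

For example, `per_class_metrics([[5, 1, 0], [2, 3, 0], [0, 0, 4]])` gives a precision of 5/7 and a recall of 5/6 for the first class.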

Result analysis

In human action recognition tasks, sign language has an extra advantage, as it can be used to communicate efficiently. Many techniques have been developed using image processing, sensor data processing, and motion detection by applying different dynamic algorithms and methods such as machine learning and deep learning. Depending on their methodologies, researchers have proposed their own ways of classifying sign languages. As technologies develop, we can explore the limitations of previous works and improve accuracy. Ref. 13 proposes a technique for recognizing hand motions, which are an important part of sign language vocabulary, based on an efficient deep convolutional neural network (CNN) architecture. The proposed CNN design eliminates the need for detection and segmentation of hands in the captured images, reducing the computational burden faced during hand pose recognition with classical approaches. In our method, we used two input channels for the images and hand landmarks to obtain more robust data, making the process more efficient with dynamic learning rate adjustment. In ref. 14, the presented results were obtained by retraining and testing a convolutional neural network model based on Inception v3 on a sign language gestures dataset. The model comprises multiple convolutional filters that operate on the same input. A capsule-based deep neural network sign posture translator for American Sign Language (ASL) fingerspelling (postures) 20 has been introduced, in which capsules and pooling are used simultaneously in the network. This research confirms that using pooling and capsule routing in the same network can improve the network's accuracy and convergence speed. In our method, we have used Google's pre-trained model to extract the hand landmarks, which is similar to transfer learning. We have shown that using two input channels can also improve accuracy.

Moreover, ref. 5 proposed a 3DRCNN model integrating a 3D convolutional neural network (3DCNN) and an enhanced fully connected recurrent neural network (FC-RNN), where the 3DCNN learns multi-modal features from RGB, motion, and depth channels, and the FC-RNN captures the temporal information among short video clips divided from the original video. Consecutive clips with the same semantic meaning are identified by applying a sliding-window approach to segment the clips over the whole video sequence. An architecture combining a CNN with traditional feature extractors, capable of accurate, real-time hand posture recognition, was assessed on three benchmark datasets and compared with state-of-the-art convolutional neural networks 26. Extensive experimentation was conducted using binary, grayscale, and depth data and two different validation techniques. The proposed feature-fusion-based CNN 31 is shown to perform better across combinations of validation procedures and image representations. Similarly, a fusion-based CNN is shown to improve the recognition rate in our study.

After global motion analysis, the hand gesture image sequence was analyzed for keyframe selection. The video sequences of a given gesture were segmented in the RGB color space before feature extraction. This step benefited from the colored gloves worn by the signers. Samples of pixel vectors representative of the glove's color were used to estimate the mean and covariance matrix of the color to be segmented, so the segmentation process was automated with no user intervention. In the color object tracking method, the video frames were converted into the HSV (Hue-Saturation-Value) color space. Then the pixels with the tracked color were identified and marked, and the resulting images were converted to binary images. The system identifies image regions corresponding to human skin by binarizing the input image with a proper threshold value. Then, small regions were eliminated from the binarized image by applying a morphological operator, and the remaining regions were selected as hand candidates.
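A color model estimated from sample pixels (mean and covariance) is commonly applied by thresholding each pixel's Mahalanobis distance to the mean. The text above does not name the decision rule, so that rule, the threshold, the small covariance ridge, the synthetic image, and the function names `fit_color_model` and `segment` are all assumptions in this NumPy sketch.

```python
import numpy as np

def fit_color_model(sample_pixels):
    """Mean vector and inverse covariance of representative colour samples (N x 3)."""
    samples = np.asarray(sample_pixels, dtype=float)
    mean = samples.mean(axis=0)
    cov = np.cov(samples, rowvar=False)
    # A small ridge keeps the covariance invertible for near-uniform samples.
    inv_cov = np.linalg.inv(cov + 1e-6 * np.eye(3))
    return mean, inv_cov

def segment(image, mean, inv_cov, threshold=3.0):
    """Binary mask of pixels within `threshold` Mahalanobis units of the colour model."""
    diff = image.reshape(-1, 3).astype(float) - mean
    d2 = np.einsum("ij,jk,ik->i", diff, inv_cov, diff)  # squared distance per pixel
    return (d2 < threshold ** 2).reshape(image.shape[:2])

# Hypothetical scene: a reddish "glove" on the left half, a bluish background on the right.
rng = np.random.default_rng(0)
img = np.empty((20, 40, 3))
img[:, :20] = [200, 30, 30] + rng.normal(0, 5, (20, 20, 3))
img[:, 20:] = [30, 30, 200] + rng.normal(0, 5, (20, 20, 3))
mean, inv_cov = fit_color_model(img[:10, :10].reshape(-1, 3))  # sample glove pixels
mask = segment(img, mean, inv_cov, threshold=4.0)
```

Unlike a fixed per-channel range, the covariance lets the boundary follow correlated variation in the sampled color, which is why a mean-and-covariance model is useful for segmentation.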

In the proposed method, we have used a two-headed CNN to train on the processed inputs. Although a single image input stream is widely used, two input streams have an advantage: in the classification layer of the CNN, if one head gives a false result, it can be compensated by the other head's weights, and combining both can yield a correct outcome. We used this idea and improved the final validation and test results. Before combining the image and hand landmark inputs, we tested each individually and obtained a test accuracy of 96.29% for the images and 98.42% for the hand landmarks. We did not use binarization, as it would affect images whose background matches the skin color of the hand. This method is also suitable for in-the-wild situations, as it is not entirely dependent on hand position within the image frame. A comparison of the literature and our work is shown in Table 4, which shows that our method outperforms most of the current approaches in accuracy.

Table 5 shows that the combined model, while having more parameters and consuming more memory, achieves the highest accuracy of 98.98%. This suggests that the combined approach, which incorporates both image and hand landmark information, is effective when accuracy is the priority. The hand landmarks model, despite having fewer parameters and lower memory consumption, also performs impressively, with an accuracy of 98.42%. However, it inherits the error rate and memory consumption of Google's pre-trained landmark model. The image model, while consuming less memory, has a slightly lower accuracy of 96.29%. The choice between these models depends on the specific application requirements, the trade-off between accuracy and resource utilization, and the importance of execution time.

Conclusion

This work proposes a methodology for sign language recognition. Sign language is the core medium of communication between deaf-mute and everyday people. It is highly applicable in real-world scenarios such as communication, human–computer interaction, security, advanced AI, and much more. For a long time, researchers have been working in this field to build a reliable, low-cost, and publicly available SLR system using sensors, images, videos, and many other techniques. Many datasets have been used, including numeric sensor, motion, and image datasets. Most datasets are prepared in good lab conditions for experiments, which may not reflect the real world. That is why, with real-world situations in mind, the Fingerspelling dataset has been used, which contains real-world conditions such as complex backgrounds and uneven image shapes. First, the raw images are processed and resized to 50 × 50. Then, the hand landmark points are detected and extracted from these hand images. Because the images go through two processing techniques, there are two data channels, and a multi-headed CNN architecture has been proposed for them. The data have been augmented to avoid overfitting, and dynamic learning rate adjustment has been applied. The prepared data were split 70/30 into training and test sets. On the 30% split, an accuracy of 98.98% has been achieved. For a dataset of this size, this accuracy is more reliable.

There are some limitations of the proposed method compared with the literature. Some methods can work with small image datasets, but since we use a simple CNN model, this method requires a good number of training images. Also, the proposed method depends on the hand landmark extraction model; a different hand landmark model could yield different results. In raw image processing, it is possible to detect the hand region to reduce the image size, which may increase recognition accuracy and reduce model training time; we may try this in future work. Currently, raw image processing takes a considerable amount of training time, as we used the whole image for training.

Data availability

The dataset used in this paper (ASL Fingerspelling Images (RGB & Depth)) is publicly available on Kaggle at: https://www.kaggle.com/datasets/mrgeislinger/asl-rgb-depth-fingerspelling-spelling-it-out .

Anderson, R., Wiryana, F., Ariesta, M. C. & Kusuma, G. P. Sign language recognition application systems for deaf-mute people: A review based on input-process-output. Proced. Comput. Sci. 116 , 441–448. https://doi.org/10.1016/j.procs.2017.10.028 (2017).

Article Google Scholar

Mummadi, C. et al. Real-time and embedded detection of hand gestures with an IMU-based glove. Informatics 5 (2), 28. https://doi.org/10.3390/informatics5020028 (2018).

Hickeys Kinect for Windows - Windows apps. (2022). Accessed 01 January 2023. https://learn.microsoft.com/en-us/windows/apps/design/devices/kinect-for-windows

Rivera-Acosta, M., Ortega-Cisneros, S., Rivera, J. & Sandoval-Ibarra, F. American sign language alphabet recognition using a neuromorphic sensor and an artificial neural network. Sensors 17 (10), 2176. https://doi.org/10.3390/s17102176 (2017).

Article ADS PubMed PubMed Central Google Scholar

Ye, Y., Tian, Y., Huenerfauth, M., & Liu, J. Recognizing American Sign Language Gestures from Within Continuous Videos. In 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW) , 2145–214509 (IEEE, 2018). https://doi.org/10.1109/CVPRW.2018.00280 .

Ameen, S. & Vadera, S. A convolutional neural network to classify American Sign Language fingerspelling from depth and colour images. Expert Syst. 34 (3), e12197. https://doi.org/10.1111/exsy.12197 (2017).

Sykora, P., Kamencay, P. & Hudec, R. Comparison of SIFT and SURF methods for use on hand gesture recognition based on depth map. AASRI Proc. 9 , 19–24. https://doi.org/10.1016/j.aasri.2014.09.005 (2014).

Sahoo, A. K., Mishra, G. S. & Ravulakollu, K. K. Sign language recognition: State of the art. ARPN J. Eng. Appl. Sci. 9 (2), 116–134 (2014).

Google Scholar

Mitra, S. & Acharya, T. “Gesture recognition: A survey. IEEE Trans. Syst. Man Cybern. Part C 37 (3), 311–324. https://doi.org/10.1109/TSMCC.2007.893280 (2007).

Rautaray, S. S. & Agrawal, A. Vision based hand gesture recognition for human computer interaction: A survey. Artif. Intell. Rev. 43 (1), 1–54. https://doi.org/10.1007/s10462-012-9356-9 (2015).

Amir A. et al A low power, fully event-based gesture recognition system. In 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR) , 7388–7397 (IEEE, 2017). https://doi.org/10.1109/CVPR.2017.781 .

Lee, J. H. et al. Real-time gesture interface based on event-driven processing from stereo silicon retinas. IEEE Trans. Neural Netw. Learn Syst. 25 (12), 2250–2263. https://doi.org/10.1109/TNNLS.2014.2308551 (2014).

Article PubMed Google Scholar

Adithya, V. & Rajesh, R. A deep convolutional neural network approach for static hand gesture recognition. Proc. Comput. Sci. 171 , 2353–2361. https://doi.org/10.1016/j.procs.2020.04.255 (2020).

Das, A., Gawde, S., Suratwala, K., & Kalbande, D. Sign language recognition using deep learning on custom processed static gesture images. In 2018 International Conference on Smart City and Emerging Technology (ICSCET) , 1–6 (IEEE, 2018). https://doi.org/10.1109/ICSCET.2018.8537248 .

Pathan, R. K. et al. Breast cancer classification by using multi-headed convolutional neural network modeling. Healthcare 10 (12), 2367. https://doi.org/10.3390/healthcare10122367 (2022).

Article PubMed PubMed Central Google Scholar

Lecun, Y., Bottou, L., Bengio, Y. & Haffner, P. Gradient-based learning applied to document recognition. Proc. IEEE 86 (11), 2278–2324. https://doi.org/10.1109/5.726791 (1998).

Collobert, R., & Weston, J. A unified architecture for natural language processing. In Proceedings of the 25th international conference on Machine learning—ICML ’08 , 160–167 (ACM Press, 2008). https://doi.org/10.1145/1390156.1390177 .

Farabet, C., Couprie, C., Najman, L. & LeCun, Y. Learning hierarchical features for scene labeling. IEEE Trans. Pattern Anal. Mach. Intell. 35 (8), 1915–1929. https://doi.org/10.1109/TPAMI.2012.231 (2013).

Xie, B., He, X. & Li, Y. RGB-D static gesture recognition based on convolutional neural network. J. Eng. 2018 (16), 1515–1520. https://doi.org/10.1049/joe.2018.8327 (2018).

Jalal, M. A., Chen, R., Moore, R. K., & Mihaylova, L. American sign language posture understanding with deep neural networks. In 2018 21st International Conference on Information Fusion (FUSION) , 573–579 (IEEE, 2018).

Shanta, S. S., Anwar, S. T., & Kabir, M. R. Bangla Sign Language Detection Using SIFT and CNN. In 2018 9th International Conference on Computing, Communication and Networking Technologies (ICCCNT) , 1–6 (IEEE, 2018). https://doi.org/10.1109/ICCCNT.2018.8493915 .

Sharma, A., Mittal, A., Singh, S. & Awatramani, V. Hand gesture recognition using image processing and feature extraction techniques. Proc. Comput. Sci. 173 , 181–190. https://doi.org/10.1016/j.procs.2020.06.022 (2020).

Ren, S., He, K., Girshick, R., & Sun, J. Faster R-CNN: Towards real-time object detection with region proposal networks. Adv. Neural Inf. Process. Syst. 28 (2015).

Rastgoo, R., Kiani, K. & Escalera, S. Multi-modal deep hand sign language recognition in still images using restricted Boltzmann machine. Entropy 20 (11), 809. https://doi.org/10.3390/e20110809 (2018).

Jhuang, H., Serre, T., Wolf, L., & Poggio, T. A biologically inspired system for action recognition. In 2007 IEEE 11th International Conference on Computer Vision, 1–8 (IEEE, 2007). https://doi.org/10.1109/ICCV.2007.4408988.

Ji, S., Xu, W., Yang, M. & Yu, K. 3D convolutional neural networks for human action recognition. IEEE Trans. Pattern Anal. Mach. Intell. 35 (1), 221–231. https://doi.org/10.1109/TPAMI.2012.59 (2013).

Huang, J., Zhou, W., Li, H., & Li, W. Sign language recognition using 3D convolutional neural networks. In 2015 IEEE International Conference on Multimedia and Expo (ICME), 1–6 (IEEE, 2015). https://doi.org/10.1109/ICME.2015.7177428.

Ultraleap. Digital worlds that feel human. Accessed 01 January 2023. Available: https://www.leapmotion.com/

Huang, F., & Huang, S. Interpreting American sign language with Kinect. Journal of Deaf Studies and Deaf Education (Oxford University Press, 2011).

Pugeault, N., & Bowden, R. Spelling it out: Real-time ASL fingerspelling recognition. In 2011 IEEE International Conference on Computer Vision Workshops (ICCV Workshops) , 1114–1119 (IEEE, 2011). https://doi.org/10.1109/ICCVW.2011.6130290 .

Rahim, M. A., Islam, M. R. & Shin, J. Non-touch sign word recognition based on dynamic hand gesture using hybrid segmentation and CNN feature fusion. Appl. Sci. 9 (18), 3790. https://doi.org/10.3390/app9183790 (2019).

“ASL Alphabet.” Accessed 01 Jan, 2023. https://www.kaggle.com/grassknoted/asl-alphabet


Funding was provided by the American University of the Middle East, Egaila, Kuwait.

Author information

Authors and affiliations.

Department of Computing and Information Systems, School of Engineering and Technology, Sunway University, 47500, Bandar Sunway, Selangor, Malaysia

Refat Khan Pathan

Department of Computer Science and Engineering, BGC Trust University Bangladesh, Chittagong, 4381, Bangladesh

Munmun Biswas

Department of Computer and Information Science, Graduate School of Engineering, Tokyo University of Agriculture and Technology, Koganei, Tokyo, 184-0012, Japan

Suraiya Yasmin

Centre for Applied Physics and Radiation Technologies, School of Engineering and Technology, Sunway University, 47500, Bandar Sunway, Selangor, Malaysia

Mayeen Uddin Khandaker

Faculty of Graduate Studies, Daffodil International University, Daffodil Smart City, Birulia, Savar, Dhaka, 1216, Bangladesh

Mayeen Uddin Khandaker

College of Engineering and Technology, American University of the Middle East, Egaila, Kuwait

Mohammad Salman & Ahmed A. F. Youssef


Contributions

R.K.P. and M.B., conceptualization; R.K.P., methodology; R.K.P., software and coding; M.B. and R.K.P., validation; R.K.P. and M.B., formal analysis; R.K.P., S.Y., and M.B., investigation; S.Y. and R.K.P., resources; R.K.P. and M.B., data curation; S.Y., R.K.P., and M.B., writing (original draft preparation); S.Y., R.K.P., M.B., M.U.K., M.S., and A.A.F.Y., writing (review and editing); R.K.P. and M.U.K., visualization; M.U.K. and M.B., supervision; M.B., M.S., and A.A.F.Y., project administration; M.S. and A.A.F.Y., funding acquisition.

Corresponding author

Correspondence to Mayeen Uddin Khandaker .

Ethics declarations

Competing interests.

The authors declare no competing interests.

Additional information

Publisher's note.

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/ .


About this article

Cite this article.

Pathan, R.K., Biswas, M., Yasmin, S. et al. Sign language recognition using the fusion of image and hand landmarks through multi-headed convolutional neural network. Sci Rep 13 , 16975 (2023). https://doi.org/10.1038/s41598-023-43852-x


Received : 04 March 2023

Accepted : 29 September 2023

Published : 09 October 2023

DOI : https://doi.org/10.1038/s41598-023-43852-x


Recent progress in sign language recognition: a review

- Original Paper

- Published: 21 October 2023

- Volume 34, article number 127 (2023)


- Aamir Wali (ORCID: orcid.org/0000-0002-5314-6113),

- Roha Shariq,

- Sajdah Shoaib,

- Sukhan Amir &

- Asma Ahmad Farhan


Sign language is a predominant form of communication for a large group of people. Sign languages are visual, which makes them distinct from spoken languages. Unfortunately, very few hearing people understand sign language, which makes communication with hearing-impaired people difficult. Research in sign language recognition (SLR) can help reduce the barrier between deaf and hearing people. Despite tremendous advances, SLR is still at least a decade behind speech recognition. There has been a gradual transition from static to isolated to continuous SLR, but research remains scattered, limited to very small vocabularies, and suitable only for tailor-made conditions. This paper compiles recent progress in SLR and presents a comprehensive review of emerging SLR frameworks and algorithms. We categorize SLR by the unit of written text, i.e., letters (alphabets), words, and sentences. The review also includes a study-wise summary of the datasets used in research conducted over the last few years. We identify state-of-the-art techniques for each category, suggest novel research directions for future work, and highlight several primary factors that prevent SLR from achieving better practical outcomes.


Bromwich, M.: 360 million people worldwide suffer disabling hearing loss. https://www.shoebox.md/360-million-people-worldwide-suffer-disabling-hearing-loss/ (2022). Accessed 09 Apr 2022

United Nations: International Day of Sign Languages. https://www.un.org/en/observances/sign-languages-day . Accessed 09 Apr 2022

Sign language. https://en.wikipedia.org/wiki/Sign_language . Accessed 09 Apr 2022

Zhu, Q., Li, J., Yuan, F., Gan, Q.: Multi-scale temporal network for continuous sign language recognition (2022). arXiv preprint arXiv:2204.03864

Adaloglou, N.M., et al.: A comprehensive study on deep learning-based methods for sign language recognition. IEEE Trans. Multimed. (2021). https://doi.org/10.1109/TMM.2021.3070438


Rastgoo, R., Kiani, K., Escalera, S.: Sign language recognition: a deep survey. Expert Syst. Appl. 164 , 113794 (2021)

Recent advances in sign language recognition using deep learning techniques

El-Alfy, E.-S.M., Luqman, H.: A comprehensive survey and taxonomy of sign language research. Eng. Appl. Artif. Intell. 114 , 105198 (2022)

Gaikwad, R.S., Admuthe, L.S.: A review of various sign language recognition techniques. Model. Simul. Optim. 8 , 111–126 (2022)


Arab sign language recognition with convolutional neural networks. IEEE

Sign language recognition using convolutional neural networks. Springer

Shahzad, A., Wali, A.: Computerization of off-topic essay detection: a possibility? Educ. Inf. Technol. 27 (4), 5737–5747 (2022)

Jiang, Z., Zaheer, W., Wali, A., Gilani, S.: Visual sentiment analysis using data-augmented deep transfer learning techniques. Multimed. Tools Appl. 8 , 1–17 (2023)

Yan, C., Gong, B., Wei, Y., Gao, Y.: Deep multi-view enhancement hashing for image retrieval. IEEE Trans. Pattern Anal. Mach. Intell. 43 (4), 1445–1451 (2020)

A new benchmark on american sign language recognition using convolutional neural network. IEEE

Wali, A., Saeed, M.: m-calp-yet another way of generating handwritten data through evolution for pattern recognition. Biosystems 175 , 24–29 (2019)

Wali, A.: Ca-nn: a cellular automata neural network for handwritten pattern recognition. Nat. Comput. 20 , 1–8 (2022)

Wali, A., Ahmad, M., Naseer, A., Tamoor, M., Gilani, S.: Stynmedgan: medical images augmentation using a new GAN model for improved diagnosis of diseases. J. Intell. Fuzzy Syst. 26 , 1–18 (2023)

Xu, Y., Wali, A.: Handwritten pattern recognition using birds-flocking inspired data augmentation technique. IEEE Access (2023)

Mannan, A., et al.: Hypertuned deep convolutional neural network for sign language recognition. Comput. Intell. Neurosci. (2022)

Sign language recognition using deep learning on custom processed static gesture images. IEEE

Mannan, A. et al.: Hypertuned deep convolutional neural network for sign language recognition. Comput. Intell. Neurosci. (2022)

Kasapbaşi, A., Elbushra, A.E.A., Omar, A.-H., Yilmaz, A.: Deepaslr: a CNN based human computer interface for American sign language recognition for hearing-impaired individuals. Comput. Methods Programs Biomed. Update 2 , 100048 (2022)

Zakariah, M., Alotaibi, Y.A., Koundal, D., Guo, Y., Mamun Elahi, M.: Sign language recognition for Arabic alphabets using transfer learning technique. Comput. Intell. Neurosci. (2022)

Thakur, A., Budhathoki, P., Upreti, S., Shrestha, S., Shakya, S.: Real time sign language recognition and speech generation. J. Innov. Image Process. 2 (2), 65–76 (2020)

Yirtici, T., Yurtkan, K.: Regional-CNN-based enhanced Turkish sign language recognition. Signal Image Video Process. 8 , 1–7 (2022)

Sahoo, A.K., Mishra, G.S., Ravulakollu, K.K.: Sign language recognition: state of the art. ARPN J. Eng. Appl. Sci. 9 (2), 116–134 (2014)

Hussain, M.J., et al.: Intelligent sign language recognition system for e-learning context (2022)

American sign language identification using hand trackpoint analysis. Springer

Shah, F., et al.: Sign language recognition using multiple kernel learning: a case study of Pakistan sign language. IEEE Access 9 , 67548–67558 (2021)

Katoch, S., Singh, V., Tiwary, U.S.: Indian sign language recognition system using surf with SVM and CNN. Array 14 , 100141 (2022)

Word-level deep sign language recognition from video: a new large-scale dataset and methods comparison

Context matters: self-attention for sign language recognition. IEEE

Connectionist temporal classification: labelling unsegmented sequence data with recurrent neural networks

Töngi, R.: Application of transfer learning to sign language recognition using an inflated 3d deep convolutional neural network (2021). arXiv preprint arXiv:2103.05111

Sharma, S., Singh, S.: Vision-based hand gesture recognition using deep learning for the interpretation of sign language. Expert Syst. Appl. 182 , 115657 (2021)

Lim, K.M., Tan, A.W.C., Lee, C.P., Tan, S.C.: Isolated sign language recognition using convolutional neural network hand modelling and hand energy image. Multimed. Tools Appl. 78 (14), 19917–19944 (2019)

Sincan, O.M., Keles, H.Y.: Using motion history images with 3d convolutional networks in isolated sign language recognition. IEEE Access 10 , 18608–18618 (2022)

Venugopalan, A., Reghunadhan, R.: Applying hybrid deep neural network for the recognition of sign language words used by the deaf covid-19 patients. Arab. J. Sci. Eng. 8 , 1–14 (2022)

Boukdir, A., Benaddy, M., Ellahyani, A., Meslouhi, O.E., Kardouchi, M.: Isolated video-based Arabic sign language recognition using convolutional and recursive neural networks. Arab. J. Sci. Eng. 47 (2), 2187–2199 (2022)

Sign pose-based transformer for word-level sign language recognition

Yan, C., et al.: Task-adaptive attention for image captioning. IEEE Trans. Circuits Syst. Video Technol. 32 (1), 43–51 (2021)

Yan, C., et al.: Age-invariant face recognition by multi-feature fusion and decomposition with self-attention. ACM Trans. Multimed. Comput. Commun. Appl. 18 (1), 1–18 (2022)

Vaswani, A., et al.: Attention is all you need. Adv. Neural Inf. Process. Syst. 30 , 50 (2017)

Rastgoo, R., Kiani, K., Escalera, S.: Hand sign language recognition using multi-view hand skeleton. Expert Syst. Appl. 150 , 113336 (2020)

Hamza, H.M., Wali, A.: Pakistan sign language recognition: leveraging deep learning models with limited dataset. Mach. Vis. Appl. 34 (5), 71 (2023)

More, V., Sangamnerkar, S., Thakare, V., Mane, D., Dolas, R.: Sign language recognition using image processing. J. NX 8 , 85–87 (2021)

Kumar, A.R., Bhavana, T., Sri, P.M.: A deep neural framework for continuous sign language recognition by iterative training. J. Algebraic Stat. 13 (3), 4574–4584 (2022)

Wang, F., Song, Y., Zhang, J., Han, J., Huang, D.: Temporal unet: sample level human action recognition using wifi (2019). arXiv preprint arXiv:1904.11953

Rastgoo, R., Kiani, K., Escalera, S.: Real-time isolated hand sign language recognition using deep networks and SVD. J. Ambient. Intell. Humaniz. Comput. 13 (1), 591–611 (2022)

Tolentino, L.K.S., et al.: Static sign language recognition using deep learning. Int. J. Mach. Learn. Comput 9 (6), 821–827 (2019)

European Language Resources Association (ELRA). Sign language recognition with transformer networks

ML based sign language recognition system. IEEE

Transferring cross-domain knowledge for video sign language recognition

Wadhawan, A., Kumar, P.: Deep learning-based sign language recognition system for static signs. Neural Comput. Appl. 32 (12), 7957–7968 (2020)

Better sign language translation with STMC-transformer

Deep sign: hybrid CNN-HMM for continuous sign language recognition

Real-time sign language detection using human pose estimation. Springer

Liao, Y., Xiong, P., Min, W., Min, W., Lu, J.: Dynamic sign language recognition based on video sequence with BLSTM-3d residual networks. IEEE Access 7 , 38044–38054 (2019)

Gao, L., et al.: RNN-transducer based Chinese sign language recognition. Neurocomputing 434 , 45–54 (2021)

Spatial-temporal multi-cue network for continuous sign language recognition, vol. 34

Aditya, W., et al.: Novel spatio-temporal continuous sign language recognition using an attentive multi-feature network. Sensors 22 (17), 6452 (2022)

Venugopalan, A., Reghunadhan, R.: Applying deep neural networks for the automatic recognition of sign language words: a communication aid to deaf agriculturists. Expert Syst. Appl. 185 , 115601 (2021)

Fully convolutional networks for continuous sign language recognition. Springer

Kumar, E.K., Kishore, P., Kumar, M.T.K., Kumar, D.A.: 3d sign language recognition with joint distance and angular coded color topographical descriptor on a 2-stream CNN. Neurocomputing 372 , 40–54 (2020)

Khan, R. Sign Language Recognition from a webcam video stream. Master’s thesis, Technische Universität München (2022)

Deep high-resolution representation learning for human pose estimation

Wen, F., Zhang, Z., He, T., Lee, C.: Ai enabled sign language recognition and VR space bidirectional communication using triboelectric smart glove. Nat. Commun. 12 (1), 1–13 (2021)

MyoSign: enabling end-to-end sign language recognition with wearables

Cerna, L.R., Cardenas, E.E., Miranda, D.G., Menotti, D., Camara-Chavez, G.: A multimodal libras-ufop Brazilian sign language dataset of minimal pairs using a microsoft kinect sensor. Expert Syst. Appl. 167 , 114179 (2021)

Mirza, S.F., Al-Talabani, A.K.: Efficient kinect sensor-based Kurdish sign language recognition using echo system network. ARO Sci. J. Koya Univ. 9 (2), 1–9 (2021)

Mittal, A., Kumar, P., Roy, P.P., Balasubramanian, R., Chaudhuri, B.B.: A modified LSTM model for continuous sign language recognition using leap motion. IEEE Sens. J. 19 (16), 7056–7063 (2019)

Lee, C.K., et al.: American sign language recognition and training method with recurrent neural network. Expert Syst. Appl. 167 , 114403 (2021)

Chong, T.-W., Lee, B.-G.: American sign language recognition using leap motion controller with machine learning approach. Sensors 18 (10), 3554 (2018)

Pereira-Montiel, E., et al.: Automatic sign language recognition based on accelerometry and surface electromyography signals: a study for Colombian sign language. Biomed. Signal Process. Control 71 , 103201 (2022)

Visual alignment constraint for continuous sign language recognition

Adaloglou, N., et al.: A comprehensive study on deep learning-based methods for sign language recognition. IEEE Trans. Multimed. 24 , 1750–1762 (2021)

Sign language production: a review

Bazarevsky, V. et al. Blazepose: on-device real-time body pose tracking (2020). arXiv preprint arXiv:2006.10204

Hrúz, M., et al.: One model is not enough: ensembles for isolated sign language recognition. Sensors 22 (13), 5043 (2022)

Zhou, Z., Tam, V.W., Lam, E.Y.: A cross-attention Bert-based framework for continuous sign language recognition. IEEE Signal Process. Lett. 29 , 1818–1822 (2022)

Lugaresi, C. et al.: Mediapipe: a framework for building perception pipelines (2019). arXiv preprint arXiv:1906.08172

Subramanian, B., et al.: An integrated mediapipe-optimized GRU model for Indian sign language recognition. Sci. Rep. 12 (1), 1–16 (2022)

Bidirectional Skeleton-Based Isolated Sign Recognition using Graph Convolution Networks


This research did not receive any specific grant from funding agencies in the public, commercial, or not-for-profit sectors.

Author information

Authors and affiliations.

FAST School of Computing, National University of Computer and Emerging Science, 852-B, Faisal Town, Lahore, Pakistan

Aamir Wali, Roha Shariq, Sajdah Shoaib, Sukhan Amir & Asma Ahmad Farhan


Corresponding author

Correspondence to Aamir Wali .

Ethics declarations

Conflict of interest.

The authors declare that they have no relevant financial or non-financial interests to disclose. There is no personal relationship that could influence the work reported in this paper. No funding was received for conducting this study. The authors have no conflicts of interest to declare that are relevant to the content of this article.

Compliance with Ethical Standards

This statement is to certify that the author list is correct. The Authors also confirm that this research has not been published previously and that it is not under consideration for publication elsewhere. On behalf of all Co-Authors, the Corresponding Author shall bear full responsibility for the submission. There is no conflict of interest. This research did not involve any human participants and/or animals.

Additional information

Publisher's note.

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.


About this article

Wali, A., Shariq, R., Shoaib, S. et al. Recent progress in sign language recognition: a review. Machine Vision and Applications 34 , 127 (2023). https://doi.org/10.1007/s00138-023-01479-y


Received : 14 November 2022

Revised : 13 August 2023

Accepted : 11 September 2023

Published : 21 October 2023

DOI : https://doi.org/10.1007/s00138-023-01479-y


- Sign language recognition

- Transformer

- Gesture recognition

- Continuous SLR

- Isolated SLR


Deepsign: sign language detection and recognition using deep learning.

1. Introduction

2. Related Work

3. Methodology

Proposed LSTM-GRU-Based Model

- Feature vectors are extracted with InceptionResNetV2 and passed to the model: InceptionResNetV2 first processes each video frame, and the resulting key points are then stacked across the frames of the video;

- The first layer of the neural network combines an LSTM and a GRU; this combination can capture semantic dependencies more effectively;

- Dropout is used to reduce overfitting and improve the model’s generalization ability;

- The final output is obtained through the ‘softmax’ function.
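The frame-stacking step above can be sketched in plain Python. This is a minimal sketch under stated assumptions, not the authors' code: it assumes each frame has already been reduced to a 1536-dimensional vector (the size of InceptionResNetV2's global-average-pooled output), and the fixed sequence length, truncation of long clips, and zero-padding of short clips are illustrative choices. The function name `stack_frame_features` is hypothetical.

```python
from typing import List

# Assumed per-frame feature size (InceptionResNetV2 with global average pooling).
FEATURE_DIM = 1536


def stack_frame_features(frames: List[List[float]], seq_len: int) -> List[List[float]]:
    """Stack per-frame feature vectors into a fixed-length (seq_len x FEATURE_DIM) sequence.

    Hypothetical helper: longer clips keep only their first `seq_len` frames,
    shorter clips are zero-padded at the end so every sample has the same shape.
    """
    for f in frames:
        if len(f) != FEATURE_DIM:
            raise ValueError(f"expected {FEATURE_DIM}-dim features, got {len(f)}")
    seq = [list(f) for f in frames[:seq_len]]  # truncate long clips
    # Pad short clips with all-zero frames.
    seq.extend([0.0] * FEATURE_DIM for _ in range(seq_len - len(seq)))
    return seq


if __name__ == "__main__":
    clip = [[1.0] * FEATURE_DIM for _ in range(3)]  # a 3-frame toy clip
    sample = stack_frame_features(clip, seq_len=5)
    print(len(sample), len(sample[0]))
```

Padding to a fixed length lets clips of different durations be batched together for a recurrent layer; a real pipeline might instead sample frames uniformly or use masking.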

- The LSTM layer, with 1536 units, a dropout rate of 0.3, and an L2 kernel regularizer, receives data from the input layer;

- The data are then passed to the GRU layer, which uses the same parameters;

- The results are passed to a fully connected dense layer;

- The output is fed to a dropout layer with a rate of 0.3.
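Because both recurrent layers use 1536 units, it can help to estimate how many trainable weights they hold. The sketch below applies the standard parameter-count formulas for LSTM and GRU layers. It assumes a 1536-dimensional input to the LSTM, that the GRU receives the LSTM's 1536-dimensional per-step output, and that the GRU follows the Keras convention `reset_after=True` (separate input and recurrent biases); these are assumptions, since the configuration beyond the unit count is not given here. Dropout and the L2 regularizer add no trainable parameters.

```python
def lstm_params(input_dim: int, units: int) -> int:
    """Trainable weights in one LSTM layer (4 gates)."""
    # Each gate has an input kernel (input_dim x units), a recurrent
    # kernel (units x units) and a bias vector (units).
    return 4 * (units * (input_dim + units) + units)


def gru_params(input_dim: int, units: int, reset_after: bool = True) -> int:
    """Trainable weights in one GRU layer (3 gates)."""
    # With reset_after=True each gate has separate input and recurrent
    # biases, so the bias count per gate doubles.
    biases = 2 * units if reset_after else units
    return 3 * (units * (input_dim + units) + biases)


if __name__ == "__main__":
    FEATURE_DIM = 1536  # assumed per-frame feature size
    UNITS = 1536        # unit count stated for both recurrent layers
    lstm = lstm_params(FEATURE_DIM, UNITS)
    gru = gru_params(UNITS, UNITS)  # GRU input is the LSTM's output
    print(f"LSTM: {lstm:,}  GRU: {gru:,}  total: {lstm + gru:,}")
```

Under these assumptions the two recurrent layers alone hold about 33 million weights (18,880,512 for the LSTM and 14,164,992 for the GRU), which suggests why dropout and L2 regularization are used to limit overfitting.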

4. Experiments and Results

4.1. Dataset

4.2. Results

5. Discussion and Limitations

6. Conclusions

Author Contributions

Data Availability Statement

Acknowledgments

Conflicts of Interest

- Ministry of Statistics & Programme Implementation. Available online: https://pib.gov.in/PressReleasePage.aspx?PRID=1593253 (accessed on 5 January 2022).

- Manware, A.; Raj, R.; Kumar, A.; Pawar, T. Smart Gloves as a Communication Tool for the Speech Impaired and Hearing Impaired. Int. J. Emerg. Technol. Innov. Res. 2017, 4, 78–82.

- Wadhawan, A.; Kumar, P. Sign language recognition systems: A decade systematic literature review. Arch. Comput. Methods Eng. 2021, 28, 785–813.

- Papastratis, I.; Chatzikonstantinou, C.; Konstantinidis, D.; Dimitropoulos, K.; Daras, P. Artificial Intelligence Technologies for Sign Language. Sensors 2021, 21, 5843.

- Nandy, A.; Prasad, J.; Mondal, S.; Chakraborty, P.; Nandi, G. Recognition of Isolated Indian Sign Language Gesture in Real Time. Commun. Comput. Inf. Sci. 2010, 70, 102–107.

- Mekala, P.; Gao, Y.; Fan, J.; Davari, A. Real-time sign language recognition based on neural network architecture. In Proceedings of the IEEE 43rd Southeastern Symposium on System Theory, Auburn, AL, USA, 14–16 March 2011.

- Chen, J.K. Sign Language Recognition with Unsupervised Feature Learning; CS229 Project Final Report; Stanford University: Stanford, CA, USA, 2011.

- Sharma, M.; Pal, R.; Sahoo, A. Indian sign language recognition using neural networks and KNN classifiers. J. Eng. Appl. Sci. 2014, 9, 1255–1259.

- Agarwal, S.R.; Agrawal, S.B.; Latif, A.M. Article: Sentence Formation in NLP Engine on the Basis of Indian Sign Language using Hand Gestures. Int. J. Comput. Appl. 2015, 116, 18–22.

- Wazalwar, S.S.; Shrawankar, U. Interpretation of sign language into English using NLP techniques. J. Inf. Optim. Sci. 2017, 38, 895–910.

- Shivashankara, S.; Srinath, S. American Sign Language Recognition System: An Optimal Approach. Int. J. Image Graph. Signal Process. 2018, 10, 18–30.

- Camgoz, N.C.; Hadfield, S.; Koller, O.; Ney, H.; Bowden, R. Neural Sign Language Translation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR) 2018, Salt Lake City, UT, USA, 18–22 June 2018; IEEE: Piscataway, NJ, USA, 2018.

- Muthu Mariappan, H.; Gomathi, V. Real-Time Recognition of Indian Sign Language. In Proceedings of the International Conference on Computational Intelligence in Data Science, Haryana, India, 6–7 September 2019.

- Mittal, A.; Kumar, P.; Roy, P.P.; Balasubramanian, R.; Chaudhuri, B.B. A Modified LSTM Model for Continuous Sign Language Recognition Using Leap Motion. IEEE Sens. J. 2019, 19, 7056–7063.

- De Coster, M.; Herreweghe, M.V.; Dambre, J. Sign Language Recognition with Transformer Networks. In Proceedings of the Conference on Language Resources and Evaluation (LREC 2020), Marseille, France, 13–15 May 2020; pp. 6018–6024.

- Jiang, S.; Sun, B.; Wang, L.; Bai, Y.; Li, K.; Fu, Y. Skeleton aware multi-modal sign language recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Nashville, TN, USA, 21–24 June 2021; pp. 3413–3423.

- Liao, Y.; Xiong, P.; Min, W.; Min, W.; Lu, J. Dynamic Sign Language Recognition Based on Video Sequence with BLSTM-3D Residual Networks. IEEE Access 2019, 7, 38044–38054.

- Adaloglou, N.; Chatzis, T. A Comprehensive Study on Deep Learning-based Methods for Sign Language Recognition. IEEE Trans. Multimed. 2022, 24, 1750–1762.

- Aparna, C.; Geetha, M. CNN and Stacked LSTM Model for Indian Sign Language Recognition. Commun. Comput. Inf. Sci. 2020, 1203, 126–134.

- Szegedy, C.; Ioffe, S.; Vanhoucke, V.; Alemi, A.A. Inception-v4, Inception-ResNet and the Impact of Residual Connections on Learning. arXiv 2016, arXiv:1602.07261.

- Yang, D.; Martinez, C.; Visuña, L.; Khandhar, H.; Bhatt, C.; Carretero, J. Detection and Analysis of COVID-19 in medical images using deep learning techniques. Sci. Rep. 2021, 11, 19638.

- Likhar, P.; Bhagat, N.K.; Rathna, G.N. Deep Learning Methods for Indian Sign Language Recognition. In Proceedings of the 2020 IEEE 10th International Conference on Consumer Electronics (ICCE-Berlin), Berlin, Germany, 9–11 November 2020; pp. 1–6.

- Hochreiter, S.; Schmidhuber, J. Long Short-term Memory. Neural Comput. 1997, 9, 1735–1780.

- Le, X.-H.; Hung, V.; Ho, G.L.; Sungho, J. Application of Long Short-Term Memory (LSTM) Neural Network for Flood Forecasting. Water 2019, 11, 1387.

- Yan, S. Understanding LSTM and Its Diagrams. Available online: https://medium.com/mlreview/understanding-lstm-and-its-diagrams-37e2f46f1714 (accessed on 19 January 2022).

- Chen, J. CS231A Course Project Final Report Sign Language Recognition with Unsupervised Feature Learning. 2012. Available online: http://vision.stanford.edu/teaching/cs231a_autumn1213_internal/project/final/writeup/distributable/Chen_Paper.pdf (accessed on 15 March 2022).


| Author | Methodology | Dataset | Accuracy |
|---|---|---|---|
| Mittal et al. (2019) [ ] | 2D-CNN and modified LSTM, with Leap Motion sensor | ASL | 89.50% |
| Aparna and Geetha (2019) [ ] | CNN and two-layer LSTM | Custom dataset (6 signs) | 94% |
| Jiang et al. (2021) [ ] | 3D-CNN with SL-GCN using RGB-D modalities | AUTSL | 98% |
| Liao et al. (2019) [ ] | 3D ConvNet with BLSTM | DEVISIGN_D | 89.8% |
| Adaloglou et al. (2021) [ ] | Inflated 3D ConvNet with BLSTM | RGB + D | 89.74% |

| Model | IISL2020 (our dataset) Precision | IISL2020 Recall | IISL2020 F1-Score | AUTSL Precision | AUTSL Recall | AUTSL F1-Score | GSL Precision | GSL Recall | GSL F1-Score |
|---|---|---|---|---|---|---|---|---|---|
| GRU-GRU | 0.92 | 0.90 | 0.90 | 0.93 | 0.90 | 0.90 | 0.93 | 0.92 | 0.93 |
| LSTM-LSTM | 0.96 | 0.96 | 0.95 | 0.89 | 0.89 | 0.89 | 0.90 | 0.89 | 0.89 |
| GRU-LSTM | 0.91 | 0.89 | 0.89 | 0.90 | 0.89 | 0.89 | 0.91 | 0.90 | 0.90 |
| LSTM-GRU | 0.97 | 0.97 | 0.97 | 0.95 | 0.94 | 0.95 | 0.95 | 0.94 | 0.94 |
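The table above reports precision, recall, and F1-score for each model and dataset, but it does not say how the per-class scores were averaged. As a minimal sketch, assuming macro averaging (an unweighted mean over classes), the three metrics can be computed from true and predicted sign labels as follows. The function names and the gloss labels in the usage example are hypothetical.

```python
def per_class_counts(y_true, y_pred, labels):
    """Return {label: (tp, fp, fn)} for every class label."""
    counts = {}
    for c in labels:
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != c and p == c)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == c and p != c)
        counts[c] = (tp, fp, fn)
    return counts


def macro_prf(y_true, y_pred):
    """Macro-averaged precision, recall, and F1 (assumed averaging scheme)."""
    labels = sorted(set(y_true) | set(y_pred))
    precisions, recalls, f1s = [], [], []
    for tp, fp, fn in per_class_counts(y_true, y_pred, labels).values():
        p = tp / (tp + fp) if tp + fp else 0.0
        r = tp / (tp + fn) if tp + fn else 0.0
        f = 2 * p * r / (p + r) if p + r else 0.0
        precisions.append(p)
        recalls.append(r)
        f1s.append(f)
    n = len(labels)
    return sum(precisions) / n, sum(recalls) / n, sum(f1s) / n


if __name__ == "__main__":
    y_true = ["hello", "hello", "thanks", "thanks", "sorry", "sorry"]
    y_pred = ["hello", "thanks", "thanks", "thanks", "sorry", "hello"]
    p, r, f1 = macro_prf(y_true, y_pred)
    print(f"{p:.3f} {r:.3f} {f1:.3f}")  # prints 0.722 0.667 0.656
```

With weighted averaging (each class weighted by its support), the numbers would differ whenever classes are imbalanced, so the averaging scheme matters when comparing rows across datasets.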


Kothadiya, D.; Bhatt, C.; Sapariya, K.; Patel, K.; Gil-González, A.-B.; Corchado, J.M. Deepsign: Sign Language Detection and Recognition Using Deep Learning. Electronics 2022 , 11 , 1780. https://doi.org/10.3390/electronics11111780


Sign language identification and recognition: A comparative study

Sign Language (SL) is the main language of deaf and hard-of-hearing people. Each country has its own SL, distinct from those of other countries. Each sign is expressed through a combination of hand gestures, body movements, and facial expressions. Researchers in this field aim to remove the obstacles that hinder communication with deaf people by replacing device-based techniques with vision-based techniques built on Artificial Intelligence (AI) and Deep Learning. This article highlights two main SL processing tasks: Sign Language Recognition (SLR) and Sign Language Identification (SLID). SLID aims to identify which SL the signer is using, while SLR aims to translate the signer's conversation into tokens (signs). The article reviews the datasets most commonly used in the literature for both tasks (static and dynamic datasets collected from different corpora), whose contents include numbers, alphabets, words, and sentences from different SLs. It also discusses the devices required to build these datasets and the preprocessing steps applied before training and testing. The article compares the approaches and techniques applied to these datasets, covering both vision-based and data-glove-based approaches, with a focus on the main vision-based methods such as hybrid methods and deep learning algorithms. Finally, the article presents a graphical depiction and a tabular summary of various SLR approaches.

1 Introduction

According to World Health Organization (WHO) statistics, over 360 million people live with hearing loss (WHO 2015 [1, 2]). This number increased to 466 million by 2020, and it is estimated that over 900 million people will have hearing loss by 2050. According to the World Federation of the Deaf, about 300 sign languages (SLs) are used around the world. SL is the bridge for communication between deaf and hearing people. It is defined as a mode of interaction for deaf and hard-of-hearing people through a collection of hand gestures, postures, movements, and facial expressions that correspond to letters and words in everyday language. To communicate with deaf people, an interpreter is needed to translate spoken words and sentences, so that deaf people can understand hearing people and vice versa. Unfortunately, SLs generally lack a written form and suffer from a severe shortage of electronic resources. The most common SLs are American Sign Language (ASL) [3], Spanish Sign Language (SSL) [4], Australian Sign Language (AUSLAN) [5], and Arabic Sign Language (ArSL) [6]. Some of these communities, such as those in the USA, France, and Russia, use only one hand for fingerspelling, while others, such as those in the UK, Turkey, and the Czech Republic, use two hands.

The need for an organized, unified SL was first discussed at the World Sign Congress in 1951. The British Deaf Association (BDA) published a book named Gestuno [7]. Gestuno is an international SL for the deaf, with a vocabulary list of about 1,500 signs. The name "Gestuno" was chosen to evoke gesture and oneness. Its signs draw on Western and Middle Eastern SLs. Gestuno is considered a pidgin of SLs with a limited lexicon. It was developed with input from countries such as the US, Denmark, Italy, Russia, and Great Britain, to serve international meetings of deaf people. However, Gestuno cannot be considered a full language, for several reasons. First, no children or other people grow up using it. Second, it has no unified grammar (the book contains only a collection of signs without any grammar). Third, few people are fluent or professionally trained in it. Finally, it is not used daily in any country, and people are unlikely to replace their national SL with this international one [8].

ASL has many linguistic features that are difficult for technology-oriented researchers to understand, so SL experts are needed to help overcome these difficulties. An SL has many building blocks known as phonological features. These features are expressed as hand gestures, facial expressions, and body movements, and each of the three takes a form that varies from one sign to another. A word or expression may have similar phonological features in different SLs. For example, the word "drink" could be represented similarly in ASL, ArSL, and SSL [16]. Conversely, a word or expression may have different phonological features in different SLs. For example, the word "stand" in ASL and the word "يقف" (stand) in ArSL are represented differently. The process of understanding an SL by a machine is called Sign Language Processing (SLP) [9]. Several research problems have been proposed in this domain, such as Sign Language Recognition (SLR), Sign Language Identification (SLID), Sign Language Synthesis, and Sign Language Translation [10]. This article covers the first two tasks: SLR and SLID.
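The contrast between shared and differing phonological features can be made concrete with a toy lexicon in which each sign is a triple of the three features named in the paragraph. This is a minimal sketch under loud assumptions: the `Sign` class, the `LEXICON` entries, and every feature value are hypothetical placeholders, not real transcriptions of ASL, ArSL, or SSL signs, and real systems work with continuous features extracted from video. It shows why a sign whose features are identical across SLs (like "drink") cannot by itself reveal which SL is in use, whereas a language-specific realization (like "stand") can.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sign:
    """A sign described by its three phonological features.

    All feature values used below are hypothetical placeholders.
    """
    hand_gesture: str
    facial_expression: str
    body_movement: str


# Hypothetical toy lexicon mapping (sign language, English gloss) -> Sign.
LEXICON = {
    ("ASL", "drink"): Sign("handshape_1", "face_neutral", "movement_1"),
    ("ArSL", "drink"): Sign("handshape_1", "face_neutral", "movement_1"),
    ("SSL", "drink"): Sign("handshape_1", "face_neutral", "movement_1"),
    ("ASL", "stand"): Sign("handshape_2", "face_neutral", "movement_2"),
    ("ArSL", "stand"): Sign("handshape_3", "face_neutral", "movement_3"),
}


def candidate_languages(observed: Sign) -> list[str]:
    """Return every SL whose lexicon contains exactly this feature combination."""
    return sorted({lang for (lang, _gloss), sign in LEXICON.items() if sign == observed})


if __name__ == "__main__":
    print(candidate_languages(LEXICON[("ASL", "drink")]))   # ['ASL', 'ArSL', 'SSL']: ambiguous
    print(candidate_languages(LEXICON[("ArSL", "stand")]))  # ['ArSL']: identifies the SL
```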

SLR is concerned with translating the hand gestures and postures of an SL, covering every step from the sign gesture to the generation of text that lets hearing people understand deaf people. Feature extraction is a crucial phase of any sign recognition system and plays the most important role in recognition: the extracted features must be distinctive, normalized, and preprocessed. Many algorithms have been proposed for sign recognition, ranging from traditional machine learning (ML) algorithms to deep learning algorithms, as discussed in the upcoming sections. In contrast, few researchers have focused on SLID [11]. SLID is the task of determining which SL is being used, given a collection of hand gestures, postures, movements, and facial expressions or movements. The term "SLID" emerged in the last decade as a result of many attempts to globalize and identify a global SL. Identification is treated as a multiclass classification problem. SLR has many contributions and several prior surveys, the latest being a workshop [12, 13]. To the best of our knowledge, no prior work has surveyed SLID. This gap stems from the need for experts who can explain and illustrate many different SLs to researchers, and from the distinction between any SL and its spoken language [8, 14] (e.g., ASL is not a manual form of English and does not have a unified written form).
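Because SLID is framed as a multiclass classification problem over normalized features, the idea can be illustrated with a deliberately simple classifier. The sketch below is an assumption for illustration, not a method from the surveyed works: it z-score normalizes hypothetical 2-D feature vectors and assigns a new sample to the SL whose class centroid is nearest. All function names and data are hypothetical; a real SLID system would replace both the features and the classifier (for example, with a deep network ending in a softmax over languages).

```python
import math


def zscore_fit(rows):
    """Per-feature mean and (population) standard deviation."""
    n, dims = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(dims)]
    # A constant feature would give std 0; fall back to 1.0 to avoid division by zero.
    stds = [math.sqrt(sum((r[j] - means[j]) ** 2 for r in rows) / n) or 1.0
            for j in range(dims)]
    return means, stds


def zscore_apply(row, means, stds):
    return [(x - m) / s for x, m, s in zip(row, means, stds)]


def fit_centroids(rows, labels):
    """Normalize the training features, then average them per SL label."""
    means, stds = zscore_fit(rows)
    groups = {}
    for row, label in zip(rows, labels):
        groups.setdefault(label, []).append(zscore_apply(row, means, stds))
    centroids = {label: [sum(col) / len(vecs) for col in zip(*vecs)]
                 for label, vecs in groups.items()}
    return centroids, means, stds


def predict(row, centroids, means, stds):
    """Return the SL label whose centroid is nearest (Euclidean) to the sample."""
    x = zscore_apply(row, means, stds)
    return min(centroids, key=lambda label: math.dist(x, centroids[label]))


if __name__ == "__main__":
    # Hypothetical 2-D feature vectors, two samples per language.
    rows = [[0.0, 0.1], [0.2, 0.0], [5.0, 5.1], [5.2, 4.9], [0.0, 5.0], [0.1, 5.2]]
    labels = ["ASL", "ASL", "ArSL", "ArSL", "SSL", "SSL"]
    model = fit_centroids(rows, labels)
    print(predict([0.1, 0.05], *model))  # ASL
```

Normalizing first keeps a feature with a large numeric range from dominating the distance, which is one concrete reason the paragraph asks for normalized features.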

Although many SLR models have been developed, to the best of our knowledge, none of them can recognize multiple SLs. At the same time, a reliable system that can interact and communicate with people from different nations who use different SLs has become a pressing need in recent decades [15]. The COVID-19 pandemic forced a large share of employees to work and communicate remotely, so deaf people need to join online meetings on platforms such as Zoom, Microsoft Teams, and Google Meet. Hence, there is a need to identify and globalize a unified SL: excluding deaf people from such meetings would hurt overall work progress and their well-being, contrary to the principle of "nothing about us without us." SL also plays a large part in daily life through TV, local-conference, and international-conference sign interpreters. Conveying every point of an international conference to deaf attendees from different nations is a major challenge, since each attendee needs an interpreter for their own SL. At the Deaflympics 2010, many deaf athletes were invited to this international event, and they needed to communicate with each other and with anyone at their residence [16]. Building an interactive, unified recognition system is challenging [11]: many words or expressions share the same sign across languages, others have different signs in different languages, and still others are expressed with the hands together with movements of the eyebrows, mouth, head, and shoulders, and eye gaze. For example, in ASL, raised eyebrows mark a yes/no question, whereas furrowed eyebrows mark an open-ended (wh-) question. SLs can also be modified by mouth movements: signing CUP with different mouth positions may indicate the cup's size. Likewise, body movements that accompany a sign can change its meaning. SLID will help break down these barriers for SLs across the world.

Traditional machine learning and deep learning algorithms have been applied to different SLs to recognize and detect signs. Most proposed systems achieved promising results and significantly improved SL recognition accuracy. Building on these strong SLR results across different SLs, the new task of SLID has emerged to make communication between deaf and hearing people more stable and convenient. SLID comprises several subtasks: image preprocessing, segmentation, feature extraction, and image classification. Most recognition models were applied to a single dataset, whereas SLID, as proposed in [11], was applied to more than one SL dataset. SLID inherits all SLR challenges, such as background and illumination variance [17], as well as skin detection and hand segmentation for both static and dynamic gestures. These challenges are amplified in SLID because many characters and words in different SLs share the same hand gestures, body movements, and so on, but may differ in their facial expressions. For example, ASL uses eyebrow position to distinguish yes/no questions from open-ended questions, and signs can also be modified by mouth movements.
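Skin detection and hand segmentation, listed in the paragraph among the challenges SLID inherits from SLR, are often approached in vision-based systems with color thresholding. The sketch below illustrates one such classic technique, not the method of any work cited here: it converts an RGB image to YCbCr chrominance (full-range ITU-R BT.601 coefficients) and keeps pixels whose Cb and Cr fall inside fixed ranges. The ranges Cb in [77, 127] and Cr in [133, 173] are commonly used values and are an assumption here; the helper names are hypothetical.

```python
import numpy as np

# Assumed chrominance ranges for skin; commonly used values, not tuned for any dataset.
CB_RANGE = (77.0, 127.0)
CR_RANGE = (133.0, 173.0)


def skin_mask(rgb: np.ndarray) -> np.ndarray:
    """Return an H x W boolean mask of skin-colored pixels in an H x W x 3 RGB image."""
    rgb = rgb.astype(np.float64)
    r, g, b = rgb[..., 0], rgb[..., 1], rgb[..., 2]
    # Full-range BT.601 RGB -> YCbCr chrominance (luma Y is not needed here).
    cb = 128.0 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128.0 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return ((cb >= CB_RANGE[0]) & (cb <= CB_RANGE[1])
            & (cr >= CR_RANGE[0]) & (cr <= CR_RANGE[1]))


def bounding_box(mask: np.ndarray):
    """Return (row_min, row_max, col_min, col_max) of the True pixels, or None."""
    rows, cols = np.nonzero(mask)
    if rows.size == 0:
        return None
    return int(rows.min()), int(rows.max()), int(cols.min()), int(cols.max())


if __name__ == "__main__":
    # 2 x 2 toy image: a skin-like tone, pure blue, pure green, mid gray.
    img = np.array([[[224, 172, 138], [0, 0, 255]],
                    [[0, 255, 0], [128, 128, 128]]], dtype=np.uint8)
    mask = skin_mask(img)
    print(mask.tolist())       # [[True, False], [False, False]]
    print(bounding_box(mask))  # (0, 0, 0, 0)
```

Fixed thresholds like these break down under strong lighting changes and on skin-colored backgrounds, which is exactly why background and illumination variance remain open challenges for both SLR and SLID.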