Systematic Review | Definition, Example & Guide

Published on June 15, 2022 by Shaun Turney. Revised on November 20, 2023.

A systematic review is a type of review that uses repeatable methods to find, select, and synthesize all available evidence. It answers a clearly formulated research question and explicitly states the methods used to arrive at the answer.

In the example used throughout this guide, Boyle and colleagues conducted a systematic review to answer the question “What is the effectiveness of probiotics in reducing eczema symptoms and improving quality of life in patients with eczema?”

In this context, a probiotic is a health product that contains live microorganisms and is taken by mouth. Eczema is a common skin condition that causes red, itchy skin.

Table of contents

- What is a systematic review?

- Systematic review vs. meta-analysis

- Systematic review vs. literature review

- Systematic review vs. scoping review

- When to conduct a systematic review

- Pros and cons of systematic reviews

- Step-by-step example of a systematic review

- Frequently asked questions about systematic reviews

A review is an overview of the research that’s already been completed on a topic.

What makes a systematic review different from other types of reviews is that the research methods are designed to reduce bias. The methods are repeatable, and the approach is formal and systematic:

- Formulate a research question

- Develop a protocol

- Search for all relevant studies

- Apply the selection criteria

- Extract the data

- Synthesize the data

- Write and publish a report

Although multiple sets of guidelines exist, the Cochrane Handbook for Systematic Reviews is among the most widely used. It provides detailed guidelines on how to complete each step of the systematic review process.

Systematic reviews are most commonly used in medical and public health research, but they can also be found in other disciplines.

Systematic reviews typically answer their research question by synthesizing all available evidence and evaluating the quality of the evidence. Synthesizing means bringing together different information to tell a single, cohesive story. The synthesis can be narrative (qualitative), quantitative, or both.

Systematic reviews often quantitatively synthesize the evidence using a meta-analysis. A meta-analysis is a statistical analysis, not a type of review.

A meta-analysis is a technique to synthesize results from multiple studies. It’s a statistical analysis that combines the results of two or more studies, usually to estimate an effect size.

A literature review is a type of review that uses a less systematic and formal approach than a systematic review. Typically, an expert in a topic will qualitatively summarize and evaluate previous work, without using a formal, explicit method.

Although literature reviews are often less time-consuming and can be insightful or helpful, they have a higher risk of bias and are less transparent than systematic reviews.

Similar to a systematic review, a scoping review is a type of review that tries to minimize bias by using transparent and repeatable methods.

However, a scoping review isn’t a type of systematic review. The most important difference is the goal: rather than answering a specific question, a scoping review explores a topic. The researcher tries to identify the main concepts, theories, and evidence, as well as gaps in the current research.

Sometimes scoping reviews are an exploratory preparation step for a systematic review, and sometimes they are a standalone project.

A systematic review is a good choice of review if you want to answer a question about the effectiveness of an intervention, such as a medical treatment.

To conduct a systematic review, you’ll need the following:

- A precise question, usually about the effectiveness of an intervention. The question needs to be about a topic that’s previously been studied by multiple researchers. If there’s no previous research, there’s nothing to review.

- If you’re doing a systematic review on your own (e.g., for a research paper or thesis), you should take appropriate measures to ensure the validity and reliability of your research.

- Access to databases and journal archives. Often, your educational institution provides you with access.

- Time. A professional systematic review is a time-consuming process: it will take the lead author about six months of full-time work. If you’re a student, you should narrow the scope of your systematic review and stick to a tight schedule.

- Bibliographic, word-processing, spreadsheet, and statistical software. For example, you could use EndNote, Microsoft Word, Excel, and SPSS.

Systematic reviews have many pros.

- They minimize research bias by considering all available evidence and evaluating each study for bias.

- Their methods are transparent, so they can be scrutinized by others.

- They’re thorough: they summarize all available evidence.

- They can be replicated and updated by others.

Systematic reviews also have a few cons.

- They’re time-consuming.

- They’re narrow in scope: they only answer the precise research question.

The 7 steps for conducting a systematic review are explained with an example.

Step 1: Formulate a research question

Formulating the research question is probably the most important step of a systematic review. A clear research question will:

- Allow you to more effectively communicate your research to other researchers and practitioners

- Guide your decisions as you plan and conduct your systematic review

A good research question for a systematic review has four components, which you can remember with the acronym PICO:

- Population(s) or problem(s)

- Intervention(s)

- Comparison(s)

- Outcome(s)

You can rearrange these four components to write your research question:

- What is the effectiveness of I versus C for O in P?

Sometimes, you may want to include a fifth component, the type of study design. In this case, the acronym is PICOT.

- Type of study design(s)

In the running example, Boyle and colleagues defined their research question using the following components:

- The population of patients with eczema

- The intervention of probiotics

- In comparison to no treatment, placebo, or non-probiotic treatment

- The outcome of changes in participant-, parent-, and doctor-rated symptoms of eczema and quality of life

- Randomized controlled trials, a type of study design

Their research question was:

- What is the effectiveness of probiotics versus no treatment, a placebo, or a non-probiotic treatment for reducing eczema symptoms and improving quality of life in patients with eczema?
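The PICO template above can be sketched in a few lines of Python. This is only an illustration: the `PicoQuestion` class and its field names are hypothetical, and the values are taken from the eczema example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PicoQuestion:
    """Hypothetical container for the PICO(T) components of a review question."""
    population: str
    intervention: str
    comparison: str
    outcome: str
    study_design: Optional[str] = None  # the optional fifth component in PICOT

    def as_question(self) -> str:
        # Follows the template "What is the effectiveness of I versus C for O in P?"
        return (
            f"What is the effectiveness of {self.intervention} versus "
            f"{self.comparison} for {self.outcome} in {self.population}?"
        )


eczema = PicoQuestion(
    population="patients with eczema",
    intervention="probiotics",
    comparison="no treatment, a placebo, or a non-probiotic treatment",
    outcome="reducing eczema symptoms and improving quality of life",
    study_design="randomized controlled trials",
)
print(eczema.as_question())
```

Writing the components down separately before phrasing the question makes it easier to reuse them later, for example when you define selection criteria in your protocol.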

Step 2: Develop a protocol

A protocol is a document that contains your research plan for the systematic review. This is an important step because having a plan allows you to work more efficiently and reduces bias.

Your protocol should include the following components:

- Background information: Provide the context of the research question, including why it’s important.

- Research objective(s): Rephrase your research question as an objective.

- Selection criteria: State how you’ll decide which studies to include or exclude from your review.

- Search strategy: Discuss your plan for finding studies.

- Analysis: Explain what information you’ll collect from the studies and how you’ll synthesize the data.

If you’re a professional seeking to publish your review, it’s a good idea to bring together an advisory committee. This is a group of about six people who have experience in the topic you’re researching. They can help you make decisions about your protocol.

It’s highly recommended to register your protocol. Registering your protocol means submitting it to a database such as PROSPERO or ClinicalTrials.gov.

Step 3: Search for all relevant studies

Searching for relevant studies is the most time-consuming step of a systematic review.

To reduce bias, it’s important to search for relevant studies very thoroughly. Your strategy will depend on your field and your research question, but sources generally fall into these four categories:

- Databases: Search multiple databases of peer-reviewed literature, such as PubMed or Scopus. Think carefully about how to phrase your search terms and include multiple synonyms of each word. Use Boolean operators if relevant.

- Handsearching: In addition to searching the primary sources using databases, you’ll also need to search manually. One strategy is to scan relevant journals or conference proceedings. Another strategy is to scan the reference lists of relevant studies.

- Gray literature: Gray literature includes documents produced by governments, universities, and other institutions that aren’t published by traditional publishers. Graduate student theses are an important type of gray literature, which you can search using the Networked Digital Library of Theses and Dissertations (NDLTD). In medicine, clinical trial registries are another important type of gray literature.

- Experts: Contact experts in the field to ask if they have unpublished studies that should be included in your review.

At this stage of your review, you won’t read the articles yet. Simply save any potentially relevant citations using bibliographic software, such as Scribbr’s APA or MLA Generator.

For their review, Boyle and colleagues searched the following sources:

- Databases: EMBASE, PsycINFO, AMED, LILACS, and ISI Web of Science

- Handsearch: Conference proceedings and reference lists of articles

- Gray literature: The Cochrane Library, the metaRegister of Controlled Trials, and the Ongoing Skin Trials Register

- Experts: Authors of unpublished registered trials, pharmaceutical companies, and manufacturers of probiotics
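To make the advice about synonyms and Boolean operators concrete, here is a minimal sketch that joins synonyms with OR and concepts with AND. The helper name `build_boolean_query` and the synonym lists are illustrative assumptions, not the search strategy Boyle and colleagues used, and the exact query syntax differs between databases.

```python
def build_boolean_query(concepts):
    """Join synonyms with OR inside each concept, then join concepts with AND."""
    groups = []
    for synonyms in concepts:
        # Quote multi-word phrases so they are searched as a unit.
        terms = [f'"{term}"' if " " in term else term for term in synonyms]
        groups.append("(" + " OR ".join(terms) + ")")
    return " AND ".join(groups)


# Illustrative synonym lists only; a real search needs terms agreed in the protocol.
concepts = [
    ["eczema", "atopic dermatitis"],
    ["probiotic", "probiotics", "lactobacillus"],
]
print(build_boolean_query(concepts))
# (eczema OR "atopic dermatitis") AND (probiotic OR probiotics OR lactobacillus)
```

Keeping the concept lists in one place also gives you a record of the exact search terms to report in your methods section.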

Step 4: Apply the selection criteria

Applying the selection criteria is a three-person job. Two of you will independently read the studies and decide which to include in your review based on the selection criteria you established in your protocol. The third person’s job is to break any ties.

To increase inter-rater reliability, ensure that everyone thoroughly understands the selection criteria before you begin.
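One common way to quantify inter-rater reliability between two screeners is Cohen’s kappa, which corrects raw agreement for the agreement expected by chance. The sketch below is an assumption about how you might compute it; the guide itself does not prescribe a statistic, and the decisions shown are made up.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must label the same non-empty set of items")
    n = len(rater_a)
    # Proportion of items on which the two raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Agreement expected by chance, from each rater's label frequencies.
    expected = sum(
        (counts_a[label] / n) * (counts_b[label] / n)
        for label in set(counts_a) | set(counts_b)
    )
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)


# Made-up screening decisions for ten abstracts.
rater_a = ["include", "include", "exclude", "exclude", "include",
           "exclude", "exclude", "exclude", "include", "exclude"]
rater_b = ["include", "exclude", "exclude", "exclude", "include",
           "exclude", "include", "exclude", "include", "exclude"]

kappa = cohens_kappa(rater_a, rater_b)
# Items the third reviewer needs to resolve.
ties = [i for i, (a, b) in enumerate(zip(rater_a, rater_b)) if a != b]
print(round(kappa, 2), ties)
```

In this made-up data the raters agree on 8 of 10 abstracts, and the two disagreements (positions 1 and 6) would go to the third person.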

If you’re writing a systematic review as a student for an assignment, you might not have a team. In this case, you’ll have to apply the selection criteria on your own; you can mention this as a limitation in your paper’s discussion.

You should apply the selection criteria in two phases:

- Based on the titles and abstracts: Decide whether each article potentially meets the selection criteria based on the information provided in the abstracts.

- Based on the full texts: Download the articles that weren’t excluded during the first phase. If an article isn’t available online or through your library, you may need to contact the authors to ask for a copy. Read the articles and decide which articles meet the selection criteria.

It’s very important to keep a meticulous record of why you included or excluded each article. When the selection process is complete, you can summarize what you did using a PRISMA flow diagram.
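A simple way to keep such a record is a log with one row per decision, which you can then tally for the flow diagram. The sketch below is one possible layout; the record structure, phase names, and study identifiers are all hypothetical.

```python
from collections import Counter

# Each record: (citation id, phase, decision, reason). All values are made up.
screening_log = [
    ("study-001", "title_abstract", "exclude", "not about eczema"),
    ("study-002", "title_abstract", "include", ""),
    ("study-003", "title_abstract", "include", ""),
    ("study-004", "title_abstract", "exclude", "not a randomized trial"),
    ("study-002", "full_text", "include", ""),
    ("study-003", "full_text", "exclude", "no probiotic intervention"),
]


def summarize(log):
    """Count decisions per phase and exclusion reasons per phase."""
    decisions = Counter((phase, decision) for _, phase, decision, _ in log)
    reasons = Counter(
        (phase, reason)
        for _, phase, decision, reason in log
        if decision == "exclude"
    )
    return decisions, reasons


decisions, reasons = summarize(screening_log)
for (phase, decision), count in sorted(decisions.items()):
    print(f"{phase:15} {decision:8} {count}")
```

The per-phase counts and exclusion reasons are the numbers a flow diagram reports, so logging them as you go saves reconstructing them later.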

Next, Boyle and colleagues found the full texts for each of the remaining studies. Boyle and Tang read through the articles to decide if any more studies needed to be excluded based on the selection criteria.

When Boyle and Tang disagreed about whether a study should be excluded, they discussed it with Varigos until the three researchers came to an agreement.

Step 5: Extract the data

Extracting the data means collecting information from the selected studies in a systematic way. There are two types of information you need to collect from each study:

- Information about the study’s methods and results. The exact information will depend on your research question, but it might include the year, study design, sample size, context, research findings, and conclusions. If any data are missing, you’ll need to contact the study’s authors.

- Your judgment of the quality of the evidence, including risk of bias.

You should collect this information using forms. You can find sample forms in The Registry of Methods and Tools for Evidence-Informed Decision Making and the Grading of Recommendations, Assessment, Development and Evaluations Working Group.

Extracting the data is also a three-person job. Two people should do this step independently, and the third person will resolve any disagreements.
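If you keep your extraction forms in code or a spreadsheet export, comparing the two independent extractions can be partly automated. The following sketch assumes a hypothetical `ExtractionForm` with a handful of the fields mentioned above; real forms usually contain many more items, and the study shown is invented.

```python
from dataclasses import dataclass, fields


@dataclass
class ExtractionForm:
    """Hypothetical extraction form; the fields mirror items named in this step."""
    study_id: str
    year: int
    study_design: str
    sample_size: int
    findings: str
    risk_of_bias: str  # for example "low", "unclear", or "high"


def disagreements(form_a, form_b):
    """Return the names of fields on which two independent extractors differ."""
    return [
        f.name
        for f in fields(ExtractionForm)
        if getattr(form_a, f.name) != getattr(form_b, f.name)
    ]


# Two extractors fill in the form for the same made-up study.
extractor_1 = ExtractionForm("study-002", 2005, "RCT", 56, "no clear benefit", "low")
extractor_2 = ExtractionForm("study-002", 2005, "RCT", 65, "no clear benefit", "unclear")

print(disagreements(extractor_1, extractor_2))
```

Only the fields that differ need to go to the third person for resolution.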

Boyle and colleagues also collected data about possible sources of bias, such as how the study participants were randomized into the control and treatment groups.

Step 6: Synthesize the data

Synthesizing the data means bringing together the information you collected into a single, cohesive story. There are two main approaches to synthesizing the data:

- Narrative (qualitative): Summarize the information in words. You’ll need to discuss the studies and assess their overall quality.

- Quantitative: Use statistical methods to summarize and compare data from different studies. The most common quantitative approach is a meta-analysis, which allows you to combine results from multiple studies into a summary result.

Generally, you should use both approaches together whenever possible. If you don’t have enough data, or the data from different studies aren’t comparable, then you can take just a narrative approach. However, you should justify why a quantitative approach wasn’t possible.
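As a rough illustration of how a meta-analysis combines results into a summary result, the sketch below pools effect sizes with inverse-variance weights under a fixed-effect model. This is one standard approach, chosen here for simplicity; it assumes all studies estimate the same underlying effect, and the guide does not say which model Boyle and colleagues used. The numbers are made up.

```python
import math


def fixed_effect_pool(effects, standard_errors):
    """Inverse-variance weighted mean of study effect sizes with a 95% CI."""
    if len(effects) != len(standard_errors) or not effects:
        raise ValueError("Need one standard error per effect size")
    # More precise studies (smaller standard error) get larger weights.
    weights = [1.0 / se**2 for se in standard_errors]
    pooled = sum(w * e for w, e in zip(weights, effects)) / sum(weights)
    pooled_se = math.sqrt(1.0 / sum(weights))
    ci = (pooled - 1.96 * pooled_se, pooled + 1.96 * pooled_se)
    return pooled, pooled_se, ci


# Made-up effect sizes (for example, mean differences) and standard errors.
effects = [-0.5, 0.25, 0.0]
standard_errors = [0.5, 0.25, 0.5]

pooled, pooled_se, ci = fixed_effect_pool(effects, standard_errors)
print(f"pooled effect = {pooled:.3f}, 95% CI = ({ci[0]:.3f}, {ci[1]:.3f})")
```

When studies differ substantially in populations or methods, a random-effects model is often more appropriate; dedicated statistical software handles both.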

Boyle and colleagues also divided the studies into subgroups, such as studies about babies, children, and adults, and analyzed the effect sizes within each group.

Step 7: Write and publish a report

The purpose of writing a systematic review article is to share the answer to your research question and explain how you arrived at this answer.

Your article should include the following sections:

- Abstract: A summary of the review

- Introduction: Including the rationale and objectives

- Methods: Including the selection criteria, search method, data extraction method, and synthesis method

- Results: Including results of the search and selection process, study characteristics, risk of bias in the studies, and synthesis results

- Discussion: Including interpretation of the results and limitations of the review

- Conclusion: The answer to your research question and implications for practice, policy, or research

To verify that your report includes everything it needs, you can use the PRISMA checklist.

Once your report is written, you can publish it in a systematic review database, such as the Cochrane Database of Systematic Reviews, and/or in a peer-reviewed journal.

In their report, Boyle and colleagues concluded that probiotics cannot be recommended for reducing eczema symptoms or improving quality of life in patients with eczema.

Note: Generative AI tools like ChatGPT can be useful at various stages of the writing and research process and can help you to write your systematic review. However, we strongly advise against trying to pass AI-generated text off as your own work.

A literature review is a survey of scholarly sources (such as books, journal articles, and theses) related to a specific topic or research question.

It is often written as part of a thesis, dissertation, or research paper, in order to situate your work in relation to existing knowledge.

A literature review is a survey of credible sources on a topic, often used in dissertations, theses, and research papers. Literature reviews give an overview of knowledge on a subject, helping you identify relevant theories and methods, as well as gaps in existing research. Literature reviews are set up similarly to other academic texts, with an introduction, a main body, and a conclusion.

An annotated bibliography is a list of source references that has a short description (called an annotation) for each of the sources. It is often assigned as part of the research process for a paper.

A systematic review is secondary research because it uses existing research. You don’t collect new data yourself.

Cite this Scribbr article

Turney, S. (2023, November 20). Systematic Review | Definition, Example & Guide. Scribbr. Retrieved August 21, 2024, from https://www.scribbr.com/methodology/systematic-review/

How to Do a Systematic Review: A Best Practice Guide for Conducting and Reporting Narrative Reviews, Meta-Analyses, and Meta-Syntheses

Affiliations.

- 1 Behavioural Science Centre, Stirling Management School, University of Stirling, Stirling FK9 4LA, United Kingdom.

- 2 Department of Psychological and Behavioural Science, London School of Economics and Political Science, London WC2A 2AE, United Kingdom.

- 3 Department of Statistics, Northwestern University, Evanston, Illinois 60208, USA.

- PMID: 30089228

- DOI: 10.1146/annurev-psych-010418-102803

Systematic reviews are characterized by a methodical and replicable methodology and presentation. They involve a comprehensive search to locate all relevant published and unpublished work on a subject; a systematic integration of search results; and a critique of the extent, nature, and quality of evidence in relation to a particular research question. The best reviews synthesize studies to draw broad theoretical conclusions about what a literature means, linking theory to evidence and evidence to theory. This guide describes how to plan, conduct, organize, and present a systematic review of quantitative (meta-analysis) or qualitative (narrative review, meta-synthesis) information. We outline core standards and principles and describe commonly encountered problems. Although this guide targets psychological scientists, its high level of abstraction makes it potentially relevant to any subject area or discipline. We argue that systematic reviews are a key methodology for clarifying whether and how research findings replicate and for explaining possible inconsistencies, and we call for researchers to conduct systematic reviews to help elucidate whether there is a replication crisis.

Keywords: evidence; guide; meta-analysis; meta-synthesis; narrative; systematic review; theory.

Have a language expert improve your writing

Run a free plagiarism check in 10 minutes, automatically generate references for free.

- Knowledge Base

- Methodology

- Systematic Review | Definition, Examples & Guide

Systematic Review | Definition, Examples & Guide

Published on 15 June 2022 by Shaun Turney . Revised on 18 July 2024.

A systematic review is a type of review that uses repeatable methods to find, select, and synthesise all available evidence. It answers a clearly formulated research question and explicitly states the methods used to arrive at the answer.

They answered the question ‘What is the effectiveness of probiotics in reducing eczema symptoms and improving quality of life in patients with eczema?’

In this context, a probiotic is a health product that contains live microorganisms and is taken by mouth. Eczema is a common skin condition that causes red, itchy skin.

Table of contents

What is a systematic review, systematic review vs meta-analysis, systematic review vs literature review, systematic review vs scoping review, when to conduct a systematic review, pros and cons of systematic reviews, step-by-step example of a systematic review, frequently asked questions about systematic reviews.

A review is an overview of the research that’s already been completed on a topic.

What makes a systematic review different from other types of reviews is that the research methods are designed to reduce research bias . The methods are repeatable , and the approach is formal and systematic:

- Formulate a research question

- Develop a protocol

- Search for all relevant studies

- Apply the selection criteria

- Extract the data

- Synthesise the data

- Write and publish a report

Although multiple sets of guidelines exist, the Cochrane Handbook for Systematic Reviews is among the most widely used. It provides detailed guidelines on how to complete each step of the systematic review process.

Systematic reviews are most commonly used in medical and public health research, but they can also be found in other disciplines.

Systematic reviews typically answer their research question by synthesising all available evidence and evaluating the quality of the evidence. Synthesising means bringing together different information to tell a single, cohesive story. The synthesis can be narrative ( qualitative ), quantitative , or both.

Prevent plagiarism, run a free check.

Systematic reviews often quantitatively synthesise the evidence using a meta-analysis . A meta-analysis is a statistical analysis, not a type of review.

A meta-analysis is a technique to synthesise results from multiple studies. It’s a statistical analysis that combines the results of two or more studies, usually to estimate an effect size .

A literature review is a type of review that uses a less systematic and formal approach than a systematic review. Typically, an expert in a topic will qualitatively summarise and evaluate previous work, without using a formal, explicit method.

Although literature reviews are often less time-consuming and can be insightful or helpful, they have a higher risk of bias and are less transparent than systematic reviews.

Similar to a systematic review, a scoping review is a type of review that tries to minimise bias by using transparent and repeatable methods.

However, a scoping review isn’t a type of systematic review. The most important difference is the goal: rather than answering a specific question, a scoping review explores a topic. The researcher tries to identify the main concepts, theories, and evidence, as well as gaps in the current research.

Sometimes scoping reviews are an exploratory preparation step for a systematic review, and sometimes they are a standalone project.

A systematic review is a good choice of review if you want to answer a question about the effectiveness of an intervention , such as a medical treatment.

To conduct a systematic review, you’ll need the following:

- A precise question , usually about the effectiveness of an intervention. The question needs to be about a topic that’s previously been studied by multiple researchers. If there’s no previous research, there’s nothing to review.

- If you’re doing a systematic review on your own (e.g., for a research paper or thesis), you should take appropriate measures to ensure the validity and reliability of your research.

- Access to databases and journal archives. Often, your educational institution provides you with access.

- Time. A professional systematic review is a time-consuming process: it will take the lead author about six months of full-time work. If you’re a student, you should narrow the scope of your systematic review and stick to a tight schedule.

- Bibliographic, word-processing, spreadsheet, and statistical software . For example, you could use EndNote, Microsoft Word, Excel, and SPSS.

A systematic review has many pros .

- They minimise research b ias by considering all available evidence and evaluating each study for bias.

- Their methods are transparent , so they can be scrutinised by others.

- They’re thorough : they summarise all available evidence.

- They can be replicated and updated by others.

Systematic reviews also have a few cons .

- They’re time-consuming .

- They’re narrow in scope : they only answer the precise research question.

The 7 steps for conducting a systematic review are explained with an example.

Step 1: Formulate a research question

Formulating the research question is probably the most important step of a systematic review. A clear research question will:

- Allow you to more effectively communicate your research to other researchers and practitioners

- Guide your decisions as you plan and conduct your systematic review

A good research question for a systematic review has four components, which you can remember with the acronym PICO :

- Population(s) or problem(s)

- Intervention(s)

- Comparison(s)

You can rearrange these four components to write your research question:

- What is the effectiveness of I versus C for O in P ?

Sometimes, you may want to include a fourth component, the type of study design . In this case, the acronym is PICOT .

- Type of study design(s)

- The population of patients with eczema

- The intervention of probiotics

- In comparison to no treatment, placebo , or non-probiotic treatment

- The outcome of changes in participant-, parent-, and doctor-rated symptoms of eczema and quality of life

- Randomised control trials, a type of study design

Their research question was:

- What is the effectiveness of probiotics versus no treatment, a placebo, or a non-probiotic treatment for reducing eczema symptoms and improving quality of life in patients with eczema?

Step 2: Develop a protocol

A protocol is a document that contains your research plan for the systematic review. This is an important step because having a plan allows you to work more efficiently and reduces bias.

Your protocol should include the following components:

- Background information : Provide the context of the research question, including why it’s important.

- Research objective(s) : Rephrase your research question as an objective.

- Selection criteria: State how you’ll decide which studies to include or exclude from your review.

- Search strategy: Discuss your plan for finding studies.

- Analysis: Explain what information you’ll collect from the studies and how you’ll synthesise the data.

If you’re a professional seeking to publish your review, it’s a good idea to bring together an advisory committee . This is a group of about six people who have experience in the topic you’re researching. They can help you make decisions about your protocol.

It’s highly recommended to register your protocol. Registering your protocol means submitting it to a database such as PROSPERO or ClinicalTrials.gov .

Step 3: Search for all relevant studies

Searching for relevant studies is the most time-consuming step of a systematic review.

To reduce bias, it’s important to search for relevant studies very thoroughly. Your strategy will depend on your field and your research question, but sources generally fall into these four categories:

- Databases: Search multiple databases of peer-reviewed literature, such as PubMed or Scopus . Think carefully about how to phrase your search terms and include multiple synonyms of each word. Use Boolean operators if relevant.

- Handsearching: In addition to searching the primary sources using databases, you’ll also need to search manually. One strategy is to scan relevant journals or conference proceedings. Another strategy is to scan the reference lists of relevant studies.

- Grey literature: Grey literature includes documents produced by governments, universities, and other institutions that aren’t published by traditional publishers. Graduate student theses are an important type of grey literature, which you can search using the Networked Digital Library of Theses and Dissertations (NDLTD) . In medicine, clinical trial registries are another important type of grey literature.

- Experts: Contact experts in the field to ask if they have unpublished studies that should be included in your review.

At this stage of your review, you won’t read the articles yet. Simply save any potentially relevant citations using bibliographic software, such as Scribbr’s APA or MLA Generator .
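The advice above about synonyms and Boolean operators can be sketched in a few lines of Python. This is only an illustration: the synonym lists and the function name `build_boolean_query` are assumptions made for this example, and each database has its own search syntax, so check the database's help pages before running the resulting string.

```python
def build_boolean_query(concepts):
    """Join synonyms with OR inside each concept, then join concepts with AND."""
    groups = []
    for synonyms in concepts:
        # Quote multi-word phrases so they are searched as a unit.
        terms = [f'"{term}"' if " " in term else term for term in synonyms]
        groups.append("(" + " OR ".join(terms) + ")")
    return " AND ".join(groups)


# Hypothetical synonym lists for a probiotics-and-eczema question.
concepts = [
    ["probiotic", "probiotics", "lactobacillus"],
    ["eczema", "atopic dermatitis"],
]

query = build_boolean_query(concepts)
print(query)
# (probiotic OR probiotics OR lactobacillus) AND (eczema OR "atopic dermatitis")
```

Keeping the synonym lists in one place also makes it easier to report the exact search strategy later.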

- Databases: EMBASE, PsycINFO, AMED, LILACS, and ISI Web of Science

- Handsearch: Conference proceedings and reference lists of articles

- Grey literature: The Cochrane Library, the metaRegister of Controlled Trials, and the Ongoing Skin Trials Register

- Experts: Authors of unpublished registered trials, pharmaceutical companies, and manufacturers of probiotics

Step 4: Apply the selection criteria

Applying the selection criteria is a three-person job. Two of you will independently read the studies and decide which to include in your review based on the selection criteria you established in your protocol . The third person’s job is to break any ties.

To increase inter-rater reliability , ensure that everyone thoroughly understands the selection criteria before you begin.
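One common way to quantify inter-rater reliability between two screeners is Cohen's kappa, which corrects the raw agreement rate for agreement expected by chance. The sketch below uses made-up decisions and is not taken from the review discussed in this article; it also lists the records that would go to the third person.

```python
def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who labelled the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must label the same non-empty set of items.")
    n = len(rater_a)
    # Proportion of items on which the raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's label frequencies.
    labels = set(rater_a) | set(rater_b)
    expected = sum((rater_a.count(l) / n) * (rater_b.count(l) / n) for l in labels)
    if expected == 1.0:
        return 1.0  # Both raters used a single identical label throughout.
    return (observed - expected) / (1 - expected)


# Hypothetical screening decisions for eight records.
reviewer_1 = ["include", "exclude", "exclude", "include",
              "exclude", "exclude", "include", "exclude"]
reviewer_2 = ["include", "exclude", "include", "include",
              "exclude", "exclude", "exclude", "exclude"]

kappa = cohens_kappa(reviewer_1, reviewer_2)
# Records on which the two reviewers disagree go to the third person.
conflicts = [i for i, (a, b) in enumerate(zip(reviewer_1, reviewer_2)) if a != b]
print(round(kappa, 3), conflicts)
```

A low kappa on a pilot batch of records is a sign that the selection criteria need to be clarified before the full screening begins.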

If you’re writing a systematic review as a student for an assignment, you might not have a team. In this case, you’ll have to apply the selection criteria on your own; you can mention this as a limitation in your paper’s discussion.

You should apply the selection criteria in two phases:

- Based on the titles and abstracts : Decide whether each article potentially meets the selection criteria based on the information provided in the abstracts.

- Based on the full texts: Download the articles that weren’t excluded during the first phase. If an article isn’t available online or through your library, you may need to contact the authors to ask for a copy. Read the articles and decide which articles meet the selection criteria.

It’s very important to keep a meticulous record of why you included or excluded each article. When the selection process is complete, you can summarise what you did using a PRISMA flow diagram .
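If the inclusion and exclusion decisions are recorded in a structured log, the counts needed for a flow diagram can be tallied automatically. The following is a minimal sketch; the log format, field names, and reasons are assumptions for illustration, not a PRISMA requirement.

```python
from collections import Counter

# Hypothetical screening log: the phase at which each record was excluded
# (None if it was included) and the recorded reason.
screening_log = [
    {"id": "rec1", "excluded_at": "title_abstract", "reason": "not about eczema"},
    {"id": "rec2", "excluded_at": "full_text", "reason": "not a randomised trial"},
    {"id": "rec3", "excluded_at": None, "reason": None},
    {"id": "rec4", "excluded_at": "title_abstract", "reason": "not about probiotics"},
    {"id": "rec5", "excluded_at": "full_text", "reason": "not a randomised trial"},
    {"id": "rec6", "excluded_at": None, "reason": None},
]


def flow_counts(log):
    """Summarise how many records passed each screening phase."""
    screened = len(log)
    excluded_abstract = sum(r["excluded_at"] == "title_abstract" for r in log)
    full_text_reasons = Counter(
        r["reason"] for r in log if r["excluded_at"] == "full_text"
    )
    return {
        "records_screened": screened,
        "excluded_on_title_abstract": excluded_abstract,
        "full_texts_assessed": screened - excluded_abstract,
        "full_text_exclusions_by_reason": dict(full_text_reasons),
        "studies_included": sum(r["excluded_at"] is None for r in log),
    }


counts = flow_counts(screening_log)
print(counts)
```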

Next, Boyle and colleagues found the full texts for each of the remaining studies. Boyle and Tang read through the articles to decide if any more studies needed to be excluded based on the selection criteria.

When Boyle and Tang disagreed about whether a study should be excluded, they discussed it with Varigos until the three researchers came to an agreement.

Step 5: Extract the data

Extracting the data means collecting information from the selected studies in a systematic way. There are two types of information you need to collect from each study:

- Information about the study’s methods and results . The exact information will depend on your research question, but it might include the year, study design , sample size, context, research findings , and conclusions. If any data are missing, you’ll need to contact the study’s authors.

- Your judgement of the quality of the evidence, including risk of bias .

You should collect this information using forms. You can find sample forms in The Registry of Methods and Tools for Evidence-Informed Decision Making and the Grading of Recommendations, Assessment, Development and Evaluations Working Group .

Extracting the data is also a three-person job. Two people should do this step independently, and the third person will resolve any disagreements.

They also collected data about possible sources of bias, such as how the study participants were randomised into the control and treatment groups.

Step 6: Synthesise the data

Synthesising the data means bringing together the information you collected into a single, cohesive story. There are two main approaches to synthesising the data:

- Narrative ( qualitative ): Summarise the information in words. You’ll need to discuss the studies and assess their overall quality.

- Quantitative : Use statistical methods to summarise and compare data from different studies. The most common quantitative approach is a meta-analysis , which allows you to combine results from multiple studies into a summary result.

Generally, you should use both approaches together whenever possible. If you don’t have enough data, or the data from different studies aren’t comparable, then you can take just a narrative approach. However, you should justify why a quantitative approach wasn’t possible.
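To make the idea of a summary result concrete, here is a minimal sketch of one simple pooling method, the inverse-variance fixed-effect model, in which more precise studies receive more weight. The effect sizes and standard errors are invented, and this is not necessarily the model used in the example review; random-effects models are often preferred when studies differ from one another.

```python
import math


def pool_fixed_effect(effects, standard_errors):
    """Inverse-variance weighted mean of study effects with a 95% interval."""
    weights = [1 / se ** 2 for se in standard_errors]
    pooled = sum(w * e for w, e in zip(weights, effects)) / sum(weights)
    pooled_se = math.sqrt(1 / sum(weights))
    # 1.96 is the normal quantile for an approximate 95% confidence interval.
    interval = (pooled - 1.96 * pooled_se, pooled + 1.96 * pooled_se)
    return pooled, pooled_se, interval


# Hypothetical effect sizes and standard errors from three studies.
effects = [0.2, 0.5, 0.3]
standard_errors = [0.1, 0.2, 0.1]

pooled, pooled_se, interval = pool_fixed_effect(effects, standard_errors)
print(round(pooled, 3), round(pooled_se, 3))
```

In practice, a dedicated meta-analysis package is a safer choice than hand-written code, because it also reports heterogeneity statistics.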

Boyle and colleagues also divided the studies into subgroups, such as studies about babies, children, and adults, and analysed the effect sizes within each group.

Step 7: Write and publish a report

The purpose of writing a systematic review article is to share the answer to your research question and explain how you arrived at this answer.

Your article should include the following sections:

- Abstract : A summary of the review

- Introduction : Including the rationale and objectives

- Methods : Including the selection criteria, search method, data extraction method, and synthesis method

- Results : Including results of the search and selection process, study characteristics, risk of bias in the studies, and synthesis results

- Discussion : Including interpretation of the results and limitations of the review

- Conclusion : The answer to your research question and implications for practice, policy, or research

To verify that your report includes everything it needs, you can use the PRISMA checklist .

Once your report is written, you can publish it in a systematic review database, such as the Cochrane Database of Systematic Reviews , and/or in a peer-reviewed journal.

A systematic review is secondary research because it uses existing research. You don’t collect new data yourself.

A literature review is a survey of scholarly sources (such as books, journal articles, and theses) related to a specific topic or research question .

It is often written as part of a dissertation , thesis, research paper , or proposal .

There are several reasons to conduct a literature review at the beginning of a research project:

- To familiarise yourself with the current state of knowledge on your topic

- To ensure that you’re not just repeating what others have already done

- To identify gaps in knowledge and unresolved problems that your research can address

- To develop your theoretical framework and methodology

- To provide an overview of the key findings and debates on the topic

Writing the literature review shows your reader how your work relates to existing research and what new insights it will contribute.

Cite this Scribbr article


Turney, S. (2024, July 17). Systematic Review | Definition, Examples & Guide. Scribbr. Retrieved 21 August 2024, from https://www.scribbr.co.uk/research-methods/systematic-reviews/



Systematic Reviews: Home

Created by health science librarians.

- Systematic review resources

What is a Systematic Review?

A simplified process map, how can the library help, publications by hsl librarians, systematic reviews in non-health disciplines, resources for performing systematic reviews.

- Step 1: Complete Pre-Review Tasks

- Step 2: Develop a Protocol

- Step 3: Conduct Literature Searches

- Step 4: Manage Citations

- Step 5: Screen Citations

- Step 6: Assess Quality of Included Studies

- Step 7: Extract Data from Included Studies

- Step 8: Write the Review


A systematic review is a literature review that gathers all of the available evidence matching pre-specified eligibility criteria to answer a specific research question. It uses explicit, systematic methods, documented in a protocol, to minimize bias , provide reliable findings , and inform decision-making. ¹

There are many types of literature reviews.

Before beginning a systematic review, consider whether it is the best type of review for your question, goals, and resources. The table below compares a few different types of reviews to help you decide which is best for you.

| Systematic Review | Scoping Review | Systematized Review |
|---|---|---|
| Conducted for Publication | Conducted for Publication | Conducted for Assignment, Thesis, or (Possibly) Publication |
| Protocol Required | Protocol Required | No Protocol Required |
| Focused Research Question | Broad Research Question | Either |
| Focused Inclusion & Exclusion Criteria | Broad Inclusion & Exclusion Criteria | Either |
| Requires Large Team | Requires Small Team | Usually 1-2 People |

- Scoping Review Guide For more information about scoping reviews, refer to the UNC HSL Scoping Review Guide.

- UNC HSL's Simplified, Step-by-Step Process Map A PDF file of the HSL's Systematic Review Process Map.

- Text-Only: UNC HSL's Systematic Reviews - A Simplified, Step-by-Step Process A text-only PDF file of HSL's Systematic Review Process Map.

The average systematic review takes 1,168 hours to complete. ¹ A librarian can help you speed up the process.

Systematic reviews follow established guidelines and best practices to produce high-quality research. Librarian involvement in systematic reviews is based on two levels. In Tier 1, your research team can consult with the librarian as needed. The librarian will answer questions and give you recommendations for tools to use. In Tier 2, the librarian will be an active member of your research team and co-author on your review. Roles and expectations of librarians vary based on the level of involvement desired. Examples of these differences are outlined in the table below.

| Tasks | Tier 1: Consultative | Tier 2: Research Partner / Co-author |
|---|---|---|
| Guidance on process and steps | Yes | Yes |
| Background searching for past and upcoming reviews | Yes | Yes |
| Development and/or refinement of review topic | Yes | Yes |
| Assistance with refinement of PICO (population, intervention(s), comparator(s), outcome(s)) and key questions | Yes | Yes |
| Guidance on study types to include | Yes | Yes |
| Guidance on protocol registration | Yes | Yes |
| Identification of databases for searches | Yes | Yes |
| Instruction in search techniques and methods | Yes | Yes |
| Training in citation management software use for managing and sharing results | Yes | Yes |
| Development and execution of searches | No | Yes |
| Downloading search results to citation management software and removing duplicates | No | Yes |
| Documentation of search strategies | No | Yes |
| Management of search results | No | Yes |
| Guidance on methods | Yes | Yes |
| Guidance on data extraction and management techniques and software | Yes | Yes |
| Suggestions of journals to target for publication | Yes | Yes |
| Drafting of literature search description in "Methods" section | No | Yes |
| Creation of PRISMA diagram | No | Yes |
| Drafting of literature search appendix | No | Yes |
| Review of other manuscript sections and final draft | No | Yes |
| Librarian contributions warrant co-authorship | No | Yes |


Researchers conduct systematic reviews in a variety of disciplines. If your focus is on a topic outside of the health sciences, you may want to also consult the resources below to learn how systematic reviews may vary in your field. You can also contact a librarian for your discipline with questions.

- EPPI-Centre methods for conducting systematic reviews The EPPI-Centre develops methods and tools for conducting systematic reviews, including reviews for education, public and social policy.

Environmental Topics

- Collaboration for Environmental Evidence (CEE) CEE seeks to promote and deliver evidence syntheses on issues of greatest concern to environmental policy and practice as a public service

Social Sciences

- Siddaway AP, Wood AM, Hedges LV. How to Do a Systematic Review: A Best Practice Guide for Conducting and Reporting Narrative Reviews, Meta-Analyses, and Meta-Syntheses. Annu Rev Psychol. 2019 Jan 4;70:747-770. doi: 10.1146/annurev-psych-010418-102803. A resource for psychology systematic reviews, which also covers qualitative meta-syntheses or meta-ethnographies

- The Campbell Collaboration

Social Work

Software engineering

- Guidelines for Performing Systematic Literature Reviews in Software Engineering The objective of this report is to propose comprehensive guidelines for systematic literature reviews appropriate for software engineering researchers, including PhD students.

Sport, Exercise, & Nutrition

- Application of systematic review methodology to the field of nutrition by Tufts Evidence-based Practice Center Publication Date: 2009

- Systematic Reviews and Meta-Analysis — Open & Free (Open Learning Initiative) The course follows guidelines and standards developed by the Campbell Collaboration, based on empirical evidence about how to produce the most comprehensive and accurate reviews of research

- Systematic Reviews by David Gough, Sandy Oliver & James Thomas Publication Date: 2020

Updating reviews

- Updating systematic reviews by University of Ottawa Evidence-based Practice Center Publication Date: 2007


- Last Updated: Jul 15, 2024 4:55 PM

- URL: https://guides.lib.unc.edu/systematic-reviews

Library Services

UCL LIBRARY SERVICES


What are systematic reviews?


Systematic reviews are a type of literature review of research that require equivalent standards of rigour to primary research. They have a clear, logical rationale that is reported to the reader of the review. They are used in research and policymaking to inform evidence-based decisions and practice. They differ from traditional literature reviews in the following elements of conduct and reporting.

Systematic reviews:

- use explicit and transparent methods

- are a piece of research following a standard set of stages

- are accountable, replicable and updateable

- involve users to ensure a review is relevant and useful.

For example, systematic reviews (like all research) should have a clear research question, and the perspective of the authors in their approach to addressing the question should be described. There are clearly described methods for how each study in a review was identified, how that study was appraised for quality and relevance, and how it was combined with other studies in order to address the review question. A systematic review usually involves more than one person in order to increase the objectivity and trustworthiness of the review's methods and findings.

Research protocols for systematic reviews may be peer-reviewed and published or registered in a suitable repository. This helps to avoid duplication of reviews and allows the final review to be compared with the planned review.

- History of systematic reviews to inform policy (EPPI-Centre)

- Six reasons why it is important to be systematic (EPPI-Centre)

- Evidence Synthesis International (ESI): Position Statement Describes the issues, principles and goals in synthesising research evidence to inform policy, practice and decisions

On this page

Should all literature reviews be 'systematic reviews', different methods for systematic reviews, reporting standards for systematic reviews.

Literature reviews provide a more complete picture of research knowledge than is possible from individual pieces of research. This can be used to: clarify what is known from research, provide new perspectives, build theory, test theory, identify research gaps or inform research agendas.

A systematic review requires a considerable amount of time and resources, and is one type of literature review.

If the purpose of a review is to make justifiable evidence claims, then it should be systematic, as a systematic review uses rigorous explicit methods. The methods used can depend on the purpose of the review, and the time and resources available.

A 'non-systematic review' might use some of the same methods as systematic reviews, such as systematic approaches to identifying studies or appraising the quality of the literature. There may be times when this approach can be useful. In a student dissertation, for example, there may not be the time to be fully systematic in a review of the literature if this is only one small part of the thesis. In other types of research, there may also be a need to obtain a quick and not necessarily thorough overview of a literature to inform some other work (including a systematic review). Another example is where policymakers, or other people using research findings, want to make quick decisions and there is no systematic review available to help them. They have a choice of gaining a rapid overview of the research literature or not having any research evidence to help their decision-making.

Just like any other piece of research, the methods used to undertake any literature review should be carefully planned to justify the conclusions made.

Finding out about different types of systematic reviews and the methods used for systematic reviews, and reading both systematic and other types of review will help to understand some of the differences.

Typically, a systematic review addresses a focussed, structured research question in order to inform understanding and decisions on an area (see the Formulating a research question section for examples).

Sometimes systematic reviews ask a broad research question, and one strategy to achieve this is the use of several focussed sub-questions each addressed by sub-components of the review.

Another strategy is to develop a map to describe the type of research that has been undertaken in relation to a research question. Some maps even describe over 2,000 papers, while others are much smaller. One purpose of a map is to help choose a sub-set of studies to explore more fully in a synthesis. There are also other purposes of maps: see the box on systematic evidence maps for further information.

Reporting standards specify minimum elements that need to go into the reporting of a review. The reporting standards refer mainly to methodological issues but they are not as detailed or specific as critical appraisal for the methodological standards of conduct of a review.

A number of organisations have developed specific guidelines and standards for both the conducting and reporting on systematic reviews in different topic areas.

- PRISMA PRISMA is a reporting standard and is an acronym for Preferred Reporting Items for Systematic Reviews and Meta-Analyses. The Key Documents section of the PRISMA website links to a checklist, flow diagram and explanatory notes. PRISMA is less useful for certain types of reviews, including those that are iterative.

- eMERGe eMERGe is a reporting standard that has been developed for meta-ethnographies, a qualitative synthesis method.

- ROSES: RepOrting standards for Systematic Evidence Syntheses Reporting standards, including forms and flow diagram, designed specifically for systematic reviews and maps in the field of conservation and environmental management.

Useful books about systematic reviews

Systematic approaches to a successful literature review

An introduction to systematic reviews

Cochrane handbook for systematic reviews of interventions

Systematic reviews: CRD's guidance for undertaking reviews in health care

Finding what works in health care: Standards for systematic reviews

Systematic Reviews in the Social Sciences

Meta-analysis and research synthesis.

Research Synthesis and Meta-Analysis

Doing a Systematic Review

Literature reviews.

- What is a literature review?

- Why are literature reviews important?


- Last Updated: Aug 2, 2024 9:22 AM

- URL: https://library-guides.ucl.ac.uk/systematic-reviews

Systematic Review

- Library Help

- What is a Systematic Review (SR)?

Steps of a Systematic Review

- Framing a Research Question

- Developing a Search Strategy

- Searching the Literature

- Managing the Process

- Meta-analysis

- Publishing your Systematic Review

Forms and templates


- PICO Template

- Inclusion/Exclusion Criteria

- Database Search Log

- Review Matrix

- Cochrane Tool for Assessing Risk of Bias in Included Studies

• PRISMA Flow Diagram - Record the numbers of retrieved references and included/excluded studies. You can use the Create Flow Diagram tool to automate the process.

• PRISMA Checklist - Checklist of items to include when reporting a systematic review or meta-analysis

PRISMA 2020 and PRISMA-S: Common Questions on Tracking Records and the Flow Diagram

- PROSPERO Template

- Manuscript Template

- Steps of SR (text)

- Steps of SR (visual)

- Steps of SR (PIECES)

|

Image by | from the UMB HSHSL Guide. (26 min) on how to conduct and write a systematic review from RMIT University from the VU Amsterdam . , (1), 6–23. https://doi.org/10.3102/0034654319854352 . (1), 49-60. . (4), 471-475. (2020) (2020) - Methods guide for effectiveness and comparative effectiveness reviews (2017) - Finding what works in health care: Standards for systematic reviews (2011) - Systematic reviews: CRD’s guidance for undertaking reviews in health care (2008) |

|

| Identify your research question. Formulate a clear, well-defined research question of appropriate scope. Define your terminology. Find existing reviews on your topic to inform the development of your research question, identify gaps, and confirm that you are not duplicating the efforts of previous reviews. Consider using a framework like or to define your question scope. Use to record search terms under each concept. It is a good idea to register your protocol in a publicly accessible way. This will help avoid other people completing a review on your topic. Similarly, before you start doing a systematic review, it's worth checking the different registries to confirm that nobody else has already registered a protocol on the same topic. - Systematic reviews of health care and clinical interventions - Systematic reviews of the effects of social interventions - CAMARADES (Collaborative Approach to Meta-Analysis and Review of Animal Data from Experimental Studies) - The protocol is published immediately and subjected to open peer review. When two reviewers approve it, the paper is sent to Medline, Embase and other databases for indexing. - upload a protocol for your scoping review - Systematic reviews of healthcare practices to assist in the improvement of healthcare outcomes globally - Registry of a protocol on OSF creates a frozen, time-stamped record of the protocol, thus ensuring a level of transparency and accountability for the research. There are no limits to the types of protocols that can be hosted on OSF. - PROSPERO: International prospective register of systematic reviews. This is the primary database for registering systematic review protocols and searching for published protocols. PROSPERO accepts protocols from all disciplines (e.g., psychology, nutrition) with the stipulation that they must include health-related outcomes. - Similar to PROSPERO. Based in the UK, fee-based service, quick turnaround time. - Submit a pre-print, or a protocol for a scoping review. - Share your search strategy and research protocol. No limit on the format, size, access restrictions or license. outlining the details and documentation necessary for conducting a systematic review: , (1), 28. |

| Clearly state the criteria you will use to determine whether or not a study will be included in your search. Consider study populations, study design, intervention types, comparison groups, measured outcomes. Use some database-supplied limits such as language, dates, humans, female/male, age groups, and publication/study types (randomized controlled trials, etc.). | |

| Run your searches in the to your topic. Work with to help you design comprehensive search strategies across a variety of databases. Approach the grey literature methodically and purposefully. Collect ALL of the retrieved records from each search into , such as , or , and prior to screening. using the and . | |

| - export your Endnote results in this screening software | Start with a title/abstract screening to remove studies that are clearly not related to your topic. Use your to screen the full-text of studies. It is highly recommended that two independent reviewers screen all studies, resolving areas of disagreement by consensus. |

| Use , or systematic review software (e.g. , ), to extract all relevant data from each included study. It is recommended that you pilot your data extraction tool, to determine if other fields should be included or existing fields clarified. | |

| Risk of Bias (Quality) Assessment - (download the Excel spreadsheet to see all data) | Use a Risk of Bias tool (such as the ) to assess the potential biases of studies in regards to study design and other factors. Read the to learn about the topic of assessing risk of bias in included studies. You can adapt ( ) to best meet the needs of your review, depending on the types of studies included. |

| - - - | Clearly present your findings, including detailed methodology (such as search strategies used, selection criteria, etc.) such that your review can be easily updated in the future with new research findings. Perform a meta-analysis, if the studies allow. Provide recommendations for practice and policy-making if sufficient, high quality evidence exists, or future directions for research to fill existing gaps in knowledge or to strengthen the body of evidence. For more information, see: . (2), 217–226. https://doi.org/10.2450/2012.0247-12 - Get some inspiration and find some terms and phrases for writing your manuscript - Automated high-quality spelling, grammar and rephrasing corrections using artificial intelligence (AI) to improve the flow of your writing. Free and subscription plans available. |

| - - | 8. Find the best journal to publish your work. Identifying the best journal to submit your research to can be a difficult process. To help you make the choice of where to submit, simply insert your title and abstract in any of the listed under the tab. |

Adapted from A Guide to Conducting Systematic Reviews: Steps in a Systematic Review by Cornell University Library

|

This diagram illustrates in a visual way and in plain language what review authors actually do in the process of undertaking a systematic review. |

This diagram illustrates what is actually in a published systematic review and gives examples from the relevant parts of a systematic review housed online on The Cochrane Library. It will help you to read or navigate a systematic review. |

Source: Cochrane Consumers and Communications (infographics are free to use and licensed under Creative Commons )

Check the following visual resources titled " What Are Systematic Reviews?"

- Video with closed captions available

- Animated Storyboard

|

Image: | - the methods of the systematic review are generally decided before conducting it.

Source: Foster, M. (2018). Systematic reviews service: Introduction to systematic reviews. Retrieved September 18, 2018, from |


- Last Updated: Jul 11, 2024 6:38 AM

- URL: https://lib.guides.umd.edu/SR

How to Write a Systematic Review Dissertation: With Examples

Writing a systematic review dissertation isn’t easy because you must follow a thorough and accurate scientific process. You must be an expert in research methodology to synthesise studies. In this article, I will provide a step-by-step approach to writing a top-notch systematic review dissertation.


As an undergraduate or Master’s student, you are allowed to pick a systematic review for your dissertation. As a PhD student, you can use a systematic review methodology in the second chapter (literature review) of your dissertation. A systematic review is considered the highest level of empirical evidence, especially in clinical sciences like nursing and medicine. When developing new practice guidelines, new services, or new products, systematic reviews on that topic or idea are searched and synthesised first.

Factors to Consider When Writing a Systematic Review Dissertation

The nature of your research topic or research question.

Some research topics or questions strictly conform to qualitative or quantitative methods. For example, if you’re exploring the lived experiences, attitudes, perceptions, and meaning-making in a given population, you’ll need qualitative methods. However, you will require quantitative methods if you are looking into quantifiable variables like happiness, depression, academic performance, sleep, etc. That said, the nature of your research question should guide you. If your topic is qualitative, you’ll need qualitative studies only. If your topic is quantitative, you’ll need quantitative studies only. Systematic reviews of qualitative studies are less intricate than those of quantitative studies. Still, they require a thoughtful approach to synthesising findings from various qualitative studies.

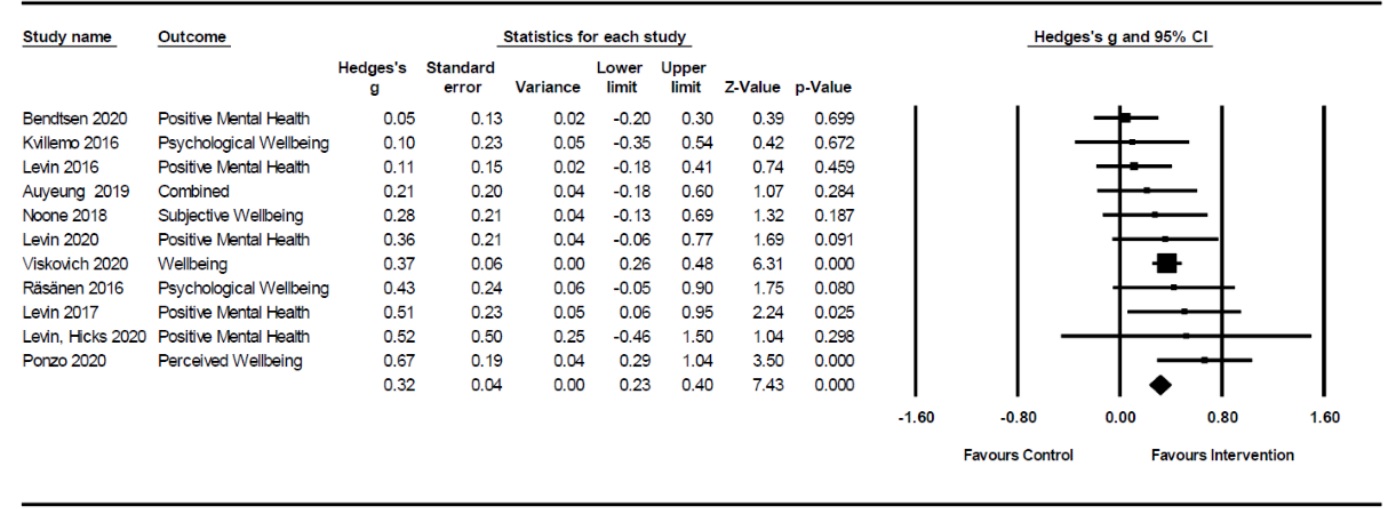

If you choose to review quantitative studies, you might need to conduct a meta-analysis in your systematic review. A meta-analysis refers to statistical techniques used in pooling findings from various independent studies to compute a summary statistic. For example, in your dissertation, you may aim to investigate the effect of a student well-being programme embedded in university classes on the happiness of university students. Various studies that have investigated the same or a related intervention and quantitatively measured happiness among university students must be synthesised together using a statistical technique. The ultimate outcome of that meta-analysis is to provide an overview of the overall trend of the effect of the intervention on university students’ happiness.

An example meta-analysis showing the statistical combination of findings from various studies to indicate the overall effect of a psychological intervention on the psychological well-being of university students.
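To make the idea of a summary statistic concrete, here is a minimal sketch of one common pooling technique, inverse-variance (fixed-effect) weighting. The effect sizes and standard errors are hypothetical values invented for illustration, and the function name `pool_fixed_effect` is an assumption; a real dissertation would normally rely on dedicated meta-analysis software and may need a random-effects model instead.

```python
import math


def pool_fixed_effect(effects, std_errors):
    """Inverse-variance (fixed-effect) pooling of per-study effect sizes."""
    if len(effects) != len(std_errors) or not effects:
        raise ValueError("need one standard error per effect size")
    # Each study is weighted by the inverse of its variance (1 / SE^2),
    # so more precise studies contribute more to the summary.
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    # Approximate 95% confidence interval using the normal quantile 1.96.
    ci = (pooled - 1.96 * pooled_se, pooled + 1.96 * pooled_se)
    return pooled, pooled_se, ci


# Hypothetical standardized mean differences in happiness from three studies.
effects = [0.30, 0.50, 0.10]
std_errors = [0.10, 0.20, 0.10]

pooled, pooled_se, ci = pool_fixed_effect(effects, std_errors)
print(f"pooled effect = {pooled:.3f}, 95% CI = ({ci[0]:.3f}, {ci[1]:.3f})")
```

The pooled value is simply a weighted average, which is why a large, precise study can dominate the overall trend.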

Availability of primary studies

Finding primary studies is often the hardest part of this approach. You can choose your topic and plan your journey well, only to get stuck at the point where you need primary studies to answer your research question. Retrieving primary studies is challenging because it requires advanced search strategies on various online databases. Building an advanced search strategy can be an uphill task for someone who has never done a systematic review. In addition, depending on the topic, primary studies are often not readily available on the Internet. Remember, secondary studies, like systematic reviews and literature reviews, are not eligible for inclusion in systematic reviews.

Supervisor’s recommendation

Always confirm with your supervisor whether you can do a systematic review dissertation. Some supervisors may feel it is better for you to do a primary study, so check with them before you invest much work.

Your confidence

Always ensure you’re confident that you can do a systematic review on your own. Writing a systematic review isn’t easy. You need to be aware that doing a systematic review may even be harder than doing interviews or surveys in primary research. Why? A systematic review involves combining many primary studies in a scientific manner. That means you must understand various research methodologies well enough to know the best way to integrate or synthesise the various studies.

Availability of time and resources

The main advantage of doing a systematic review dissertation is that it saves a lot of time. Conducting interviews or surveys can be time- and resource-consuming. However, with a systematic review, you do everything from your desk. It will save you a lot of time and resources. If you find that you meet many of the requirements of successfully conducting a systematic review, the next step is to engage in the actual process. The step-by-step approach used in writing systematic reviews is outlined below.

Step-by-Step Process in Writing a Systematic Review Dissertation

The following steps are iterative, meaning you can start over again and again until you meet your research objectives. The step-by-step guide on how to write a systematic review dissertation is summarized in the infographic shown below.

Step-by-step guide on how to write a systematic review dissertation

Step 1: Formulate the systematic review research question

The starting point of a systematic review is to formulate a research question. As stated above, the nature of your research question will help you make key decisions. For example, you will be able to know which design (quantitative versus qualitative) to consider in your inclusion and exclusion criteria.

Step 2: Do a preliminary search

The next step is to perform a preliminary search on the Internet to determine whether another systematic review has already been published on your topic. It is not acceptable to repeat what has already been done. Your research should be novel and address a knowledge gap. However, if you find that another systematic review has already been published on your topic, you should consider its publication date.

In many cases, existing systematic reviews on a given topic are outdated. They do not include recent studies published on that topic and therefore miss important updates. That can be a good reason for conducting your study. If, on the other hand, there is an up-to-date systematic review on your topic, you should consider reformulating your research question to address a specific knowledge gap.

Step 3: Develop your systematic review inclusion and exclusion criteria

One unique thing about systematic reviews is that they must focus on a very specific population, a specific intervention or exposure, and a specific outcome. Let’s say, for example, you write on Intervention A’s effectiveness in reducing depression symptoms in older frail people. In that case, you must retrieve studies that strictly assess the effectiveness of Intervention A, with depression symptoms as the outcome and older frail people as the population.

Therefore, it would be against the principles of a systematic review to include studies on Intervention B (a different intervention/exposure) for anxiety (a different outcome) in younger people (a different population). Also, depending on your research question, you will need to determine the research design (qualitative versus quantitative) of the studies you will review. Other criteria to consider are the country of publication, the publication date, language, etc.
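The criteria above can be written down explicitly so that every study is judged against the same rules. The following is only a sketch: the class names, field names, the year limit of 2010, and the two example studies are hypothetical, and real screening relies on human judgement rather than exact string matching.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criteria:
    population: str
    intervention: str
    outcome: str
    designs: frozenset
    min_year: int
    languages: frozenset


@dataclass(frozen=True)
class Study:
    title: str
    population: str
    intervention: str
    outcome: str
    design: str
    year: int
    language: str


def exclusion_reasons(study, criteria):
    """Return the criteria a study fails; an empty list means eligible."""
    reasons = []
    if study.population != criteria.population:
        reasons.append("different population")
    if study.intervention != criteria.intervention:
        reasons.append("different intervention/exposure")
    if study.outcome != criteria.outcome:
        reasons.append("different outcome")
    if study.design not in criteria.designs:
        reasons.append("ineligible design")
    if study.year < criteria.min_year:
        reasons.append("published too early")
    if study.language not in criteria.languages:
        reasons.append("ineligible language")
    return reasons


# Hypothetical criteria based on the Intervention A example in the text.
criteria = Criteria(
    population="older frail people",
    intervention="Intervention A",
    outcome="depression symptoms",
    designs=frozenset({"quantitative"}),
    min_year=2010,
    languages=frozenset({"English"}),
)

eligible = Study("Trial 1", "older frail people", "Intervention A",
                 "depression symptoms", "quantitative", 2019, "English")
ineligible = Study("Trial 2", "younger people", "Intervention B",
                   "anxiety", "quantitative", 2018, "English")

for study in (eligible, ineligible):
    print(study.title, exclusion_reasons(study, criteria) or "eligible")
```

Recording the reason for each exclusion, rather than a bare yes or no, makes the later report easier to write.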

Step 4: Develop your systematic review search strategy

As noted earlier, the main challenge in writing a systematic review is identifying papers. Your literature search should be thorough so that you don’t leave out relevant studies. Developing a literature search strategy isn’t easy because you must first identify relevant keywords and search terms for your topic. Start by learning the common terminology used in your subject of interest.

Afterward, combine the keywords using Boolean operators like “AND” and “OR.” For example, suppose my topic is the effectiveness of cognitive behavioural therapy in treating anxiety in adolescents. In that case, I can combine my keywords as follows: (Cognitive behavioural therapy OR CBT) AND (anxiety) AND (adolescents OR youth). If you use terminology that is not recognised in your discipline, you will likely not find relevant studies for review.
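The combination rule in this example (synonyms joined with OR, concepts joined with AND) can be sketched as a small helper. The function name `build_query` is hypothetical, and each database has its own syntax for phrases, truncation, and field tags, so treat the output as a starting point rather than a string that works everywhere.

```python
def build_query(concept_groups):
    """Join synonyms with OR inside each group, then join groups with AND."""
    clauses = []
    for synonyms in concept_groups:
        if not synonyms:
            raise ValueError("each concept needs at least one search term")
        clauses.append("(" + " OR ".join(synonyms) + ")")
    return " AND ".join(clauses)


# One inner list per concept; the items in a list are treated as synonyms.
concept_groups = [
    ["Cognitive behavioural therapy", "CBT"],
    ["anxiety"],
    ["adolescents", "youth"],
]

query = build_query(concept_groups)
print(query)
```

Keeping the terms in a list like this also gives you a record of the search strategy to report in the dissertation.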

Step 5: Plan and perform systematic review database selection

At this stage, you identify the databases you’ll use to execute your search strategy. When writing a systematic review dissertation, you also need to report the databases that you searched. Commonly searched ones in the social and health sciences include PubMed, Google Scholar, the Cochrane Library, PsycInfo, and many others. You need to know how each database works. Also, apart from Google Scholar and PubMed, most of these databases require paid or institutional access. Liaise with your supervisor or librarian to help identify good databases for your subject and discipline.

Step 6: Perform systematic review screening using titles and abstracts

When you execute your search strategy on each database, results or search hits will be displayed. This is another difficult step because of the tedious work involved. You start by screening the titles and eliminating results with clearly irrelevant titles. You need to be careful at this point because it is easy to eliminate relevant studies by mistake. A title doesn’t need to contain your exact keywords. Some titles appear totally irrelevant, yet the studies contain useful data.

After screening titles, the next step is to screen abstracts. You may be surprised at this point to find that studies whose titles looked only marginally relevant actually contain relevant information. For instance, a title may indicate that the study focused on depression as an outcome when you’re interested in anxiety. However, reading the abstract may reveal that depression was only the primary outcome and that the authors also measured secondary outcomes, among them anxiety. For such an article, you can decide to focus on the anxiety results only because they are relevant to your study.

Step 7: Do a manual search to supplement database search

After screening the articles identified through the databases, the next step is to supplement the search strategy with a manual search. This will ensure you don’t miss relevant studies in your systematic review dissertation. The manual search involves identifying more studies in the bibliographies of the articles found through the database search. It also involves contacting the authors of those articles, and other experts they point to, to request access to articles that may not be found online. Finally, you can identify key journals from the articles and perform a hand search. For example, suppose I identify the Journal of Cognitive Psychology. In that case, I will visit that journal’s website and perform a manual search there. A properly done manual search can help you identify articles that you could not have found using databases only.

Step 8: Perform systematic review screening using the full texts

Once you have gathered all your articles, the next step is to screen the full texts. In most cases, the titles and abstracts do not contain enough information for screening purposes. You must read the full texts of the articles to determine their eligibility. At this point, you screen the articles identified through the database search and the manual search together. For example, you may be interested in healthy adolescents. In the abstract, the authors may only report that the participants were adolescents without providing any specifics about them. Upon reading the full text, you may discover that the authors included adolescents with mental health conditions that are not within your study’s scope. Therefore, always do a full-text screening before you move to the next step.

Step 9: Perform systematic review quality assessment using CASP, JBI, etc.

Systematic review dissertations can be used to inform the formulation of practice guidelines and even policies, so you must strive to review only studies with rigorous methodological quality. The quality assessment tool will depend on the design of the studies you are reviewing. The commonly used ones for student dissertations include CASP Checklists and Joanna Briggs Institute (JBI) Checklists. You can consult with your supervisor before arriving at the final decision. Transparently report your quality assessment findings. For example, indicate the score of each study under each item of each tool and calculate the overall score as a percentage. Also, set a cut-off in advance (for example, 65%) and exclude studies whose methodological rigour falls below it.
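The scoring approach described above can be illustrated with a short sketch. It assumes each checklist item is scored 1 (met) or 0 (not met); the item scores below are invented, and the function names are hypothetical. Actual CASP or JBI items and their response options should be taken from the checklists themselves.

```python
def quality_percentage(item_scores):
    """Percentage of checklist items met (1 = met, 0 = not met)."""
    if not item_scores:
        raise ValueError("a study needs at least one scored item")
    return 100.0 * sum(item_scores) / len(item_scores)


def apply_cutoff(appraisals, cutoff=65.0):
    """Split studies into included and excluded by overall percentage."""
    included, excluded = {}, {}
    for study, scores in appraisals.items():
        pct = quality_percentage(scores)
        if pct >= cutoff:
            included[study] = pct
        else:
            excluded[study] = pct
    return included, excluded


# Hypothetical item-level scores for two studies on a ten-item checklist.
appraisals = {
    "Study A": [1, 1, 1, 1, 0, 1, 1, 1, 0, 1],
    "Study B": [1, 0, 1, 1, 0, 1, 0, 1, 0, 1],
}

included, excluded = apply_cutoff(appraisals, cutoff=65.0)
print("included:", included)
print("excluded:", excluded)
```

Reporting the item-level scores alongside the percentage lets readers see why a study fell below the cut-off.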

Step 10: Perform systematic review data extraction

The next step is to extract relevant data from your studies. Your data extraction approach depends on the research design of the studies you used. If you use qualitative studies, your data extraction can focus on individual studies’ findings, particularly themes. You can also extract data that can aid in-depth analysis, such as country of study, population characteristics, etc. If you use quantitative studies, you can collect quantitative data that will aid your analysis, such as means, standard deviations, and other information relevant to your analysis technique. Always chart your data in a tabular format so that it is easy to manage and handle.
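As a sketch of what charting data in a tabular format might look like in practice, the snippet below writes a small extraction table as CSV text using Python's standard `csv` module. The column names and the two rows are hypothetical; your own extraction form should list whatever fields your analysis needs.

```python
import csv
import io

# Hypothetical extraction fields for quantitative studies.
FIELDS = ["study", "country", "sample_size", "mean", "sd"]


def to_csv(rows, fields=FIELDS):
    """Render extracted study data as CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fields)
    writer.writeheader()
    for row in rows:
        writer.writerow(row)
    return buffer.getvalue()


# Invented values, for illustration only.
rows = [
    {"study": "Study A", "country": "UK", "sample_size": 120, "mean": 5.4, "sd": 1.2},
    {"study": "Study B", "country": "Kenya", "sample_size": 85, "mean": 4.9, "sd": 1.5},
]

table = to_csv(rows)
print(table)
```

A fixed list of fields means every study is charted the same way, and `DictWriter` raises an error if a row contains a column that is not in the list.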

Step 11: Carry on with systematic review data analysis

The data analysis approach used in your systematic review dissertation will depend on the research design. If you used qualitative studies, you will rely on qualitative approaches to analyse your data. For example, you can do a thematic analysis or a narrative synthesis. If you used quantitative studies, you might need to perform a meta-analysis or a narrative synthesis. A meta-analysis is done when you have homogeneous studies (in terms of population, outcome variables, measurement tools, etc.) that are experimental in nature. In particular, meta-analysis is performed when reviewing randomized controlled trials or other interventional studies. In other words, meta-analysis is appropriate when reviewing the effectiveness of interventions. However, if your quantitative studies are heterogeneous, for example because they use different research designs, you should perform a narrative synthesis.
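Besides comparing designs and populations, reviewers often check statistical heterogeneity before pooling. The sketch below computes two widely used statistics, Cochran's Q and I-squared, from hypothetical effect sizes and standard errors. This check is an addition to the judgement described above, not a replacement for it, and the function name `heterogeneity` is an assumption.

```python
def heterogeneity(effects, std_errors):
    """Return Cochran's Q and the I-squared statistic (as a percentage)."""
    if len(effects) != len(std_errors) or len(effects) < 2:
        raise ValueError("need at least two studies with matching standard errors")
    # Inverse-variance weights and the fixed-effect pooled estimate.
    weights = [1.0 / se ** 2 for se in std_errors]
    pooled = sum(w * e for w, e in zip(weights, effects)) / sum(weights)
    # Q sums the weighted squared deviations of each study from the pooled value.
    q = sum(w * (e - pooled) ** 2 for w, e in zip(weights, effects))
    df = len(effects) - 1
    # I-squared: share of variability beyond what chance alone would explain.
    i_squared = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0
    return q, i_squared


# Hypothetical effect sizes and standard errors from three trials.
q, i_squared = heterogeneity([0.30, 0.50, 0.10], [0.10, 0.20, 0.10])
print(f"Q = {q:.2f}, I-squared = {i_squared:.1f}%")
```

A high I-squared value suggests the studies disagree more than chance would explain, which is one signal that a narrative synthesis may be the safer choice.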

Step 12: Prepare the written report

The final step is to produce a written report of your systematic review dissertation. One of the ethical concerns in systematic reviews is transparency. You can improve the transparency of your reporting by following an established reporting guideline like PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses).
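Part of transparent reporting is stating how many records remained after each screening stage, which is the information a PRISMA-style flow diagram summarises. The sketch below tallies those numbers from a simple screening log. The log format, record identifiers, and stage names are hypothetical, and for simplicity it treats all records as entering at the title stage.

```python
from collections import Counter

# Screening stages in the order they are applied.
STAGES = ["title", "abstract", "full_text"]


def flow_counts(decisions):
    """Tally records remaining after each stage.

    `decisions` maps a record id to the stage at which the record was
    excluded, or to None if it was included in the review.
    """
    excluded = Counter(stage for stage in decisions.values() if stage is not None)
    remaining = len(decisions)
    rows = [("records identified", remaining)]
    for stage in STAGES:
        remaining -= excluded[stage]
        rows.append((f"remaining after {stage} screening", remaining))
    return rows


# Invented screening log for six records.
decisions = {
    "r1": "title",
    "r2": "title",
    "r3": "abstract",
    "r4": "full_text",
    "r5": None,
    "r6": None,
}

for label, count in flow_counts(decisions):
    print(f"{label}: {count}")
```

Keeping such a log from the first search onward saves you from reconstructing the numbers when you write up.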
