Statistics By Jim

Making statistics intuitive

Z Test: Uses, Formula & Examples

By Jim Frost

What is a Z Test?

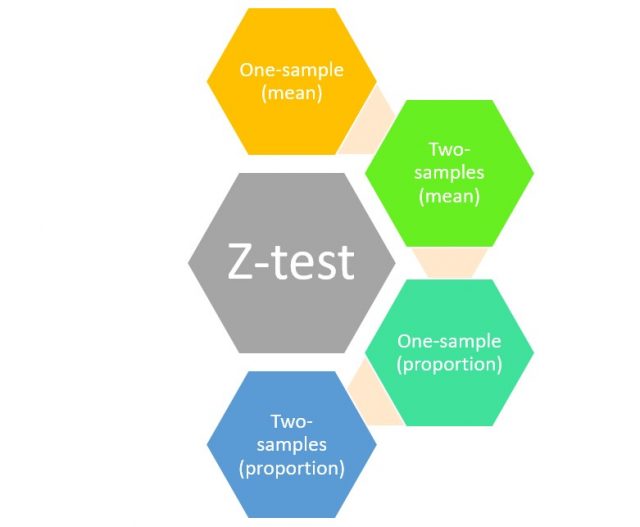

Use a Z test when you need to compare group means. Use the 1-sample analysis to determine whether a population mean is different from a hypothesized value. Or use the 2-sample version to determine whether two population means differ.

A Z test is a form of inferential statistics. It uses samples to draw conclusions about populations.

For example, use Z tests to assess the following:

- One sample: Do students in an honors program have an average IQ score different from a hypothesized value of 100?

- Two sample: Do two IQ-boosting programs have different mean scores?

In this post, learn about when to use a Z test vs T test. Then we’ll review the Z test’s hypotheses, assumptions, interpretation, and formula. Finally, we’ll use the formula in a worked example.

Related post: Difference between Descriptive and Inferential Statistics

Z test vs T test

Z tests and t tests are similar. They both assess the means of one or two groups, have similar assumptions, and allow you to draw the same conclusions about population means.

However, there is one critical difference.

Z tests require you to know the population standard deviation, while t tests use a sample estimate of the standard deviation. Learn more about Population Parameters vs. Sample Statistics.

In practice, analysts rarely use Z tests because it’s rare that they’ll know the population standard deviation. It’s even rarer that they’ll know it and yet need to assess an unknown population mean!

A Z test is often the first hypothesis test students learn because its results are easier to calculate by hand and it builds on the standard normal distribution that they probably already understand. Additionally, students don't need to know about the degrees of freedom.

Z and T test results converge as the sample size approaches infinity. Indeed, for sample sizes greater than 30, the differences between the two analyses become small.
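As a rough numerical illustration of that convergence, the sketch below compares the two-tailed critical value of a t test (n − 1 degrees of freedom) with the fixed Z critical value at α = 0.05. It assumes SciPy is installed. The sample sizes in the loop are arbitrary examples.

```python
from scipy import stats

alpha = 0.05
# Two-tailed Z critical value: the same for every sample size
z_crit = stats.norm.ppf(1 - alpha / 2)

for n in (5, 10, 30, 100, 1000):
    # t critical value uses n - 1 degrees of freedom and shrinks toward z_crit
    t_crit = stats.t.ppf(1 - alpha / 2, df=n - 1)
    print(f"n={n:>4}: t = {t_crit:.3f}, z = {z_crit:.3f}, gap = {t_crit - z_crit:.3f}")
```

With these settings, the gap is roughly 0.8 at n = 5 and falls below 0.1 by n = 30.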

William Sealy Gosset developed the t test specifically to account for the additional uncertainty associated with smaller samples. Conversely, if you run a Z test using a sample estimate of the standard deviation, it is too sensitive to mean differences in smaller samples and can produce statistically significant results incorrectly (i.e., false positives).
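The sketch below makes the false-positive problem concrete under one assumption: an analyst plugs the sample standard deviation into the Z formula and compares the result with ±1.960. When the null hypothesis is true, that statistic actually follows a t-distribution with n − 1 degrees of freedom. The real Type I error rate can therefore be computed exactly and then checked by simulation. The population values (mean 100, SD 15), the seed, and the number of repetitions are illustrative choices. NumPy and SciPy are assumed.

```python
import numpy as np
from scipy import stats

alpha = 0.05
z_crit = stats.norm.ppf(1 - alpha / 2)  # about 1.960

def actual_false_positive_rate(n):
    """Exact Type I error of a 'Z test' that uses the sample SD instead of sigma."""
    # Under H0, (x_bar - mu0) / (s / sqrt(n)) follows a t-distribution with n - 1 df
    return 2 * stats.t.sf(z_crit, df=n - 1)

def simulated_false_positive_rate(n, reps=20_000, seed=1):
    """Monte Carlo check: every sample comes from a population where H0 is true."""
    rng = np.random.default_rng(seed)
    samples = rng.normal(loc=100, scale=15, size=(reps, n))
    means = samples.mean(axis=1)
    sds = samples.std(axis=1, ddof=1)          # sample standard deviation
    z = (means - 100) / (sds / np.sqrt(n))     # Z formula with s in place of sigma
    return np.mean(np.abs(z) > z_crit)         # share of (false) rejections

for n in (5, 10, 30, 100):
    print(f"n={n:>3}: exact {actual_false_positive_rate(n):.3f}, "
          f"simulated {simulated_false_positive_rate(n):.3f} (nominal {alpha})")
```

For n = 10, both numbers come out near 0.08 rather than the nominal 0.05. The excess shrinks as n grows.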

When to use a T Test vs Z Test

Let’s put a button on it.

When you know the population standard deviation, use a Z test.

When you have a sample estimate of the standard deviation, which will be the vast majority of the time, the best statistical practice is to use a t test regardless of the sample size.

However, the difference between the two analyses becomes trivial when the sample size exceeds 30.

Learn more about a T-Test Overview: How to Use & Examples and How T-Tests Work.

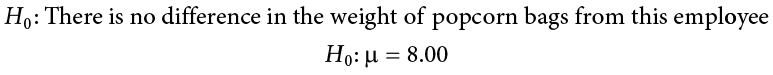

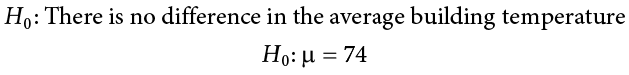

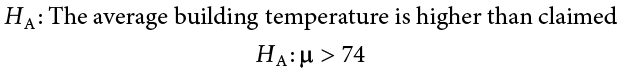

Z Test Hypotheses

This analysis uses sample data to evaluate hypotheses that refer to population means (µ). The hypotheses depend on whether you’re assessing one or two samples.

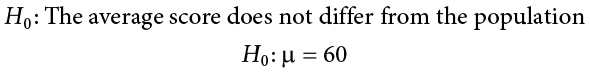

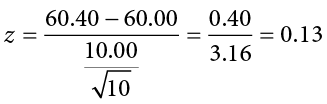

One-Sample Z Test Hypotheses

- Null hypothesis (H₀): The population mean equals a hypothesized value (µ = µ₀).

- Alternative hypothesis (Hₐ): The population mean DOES NOT equal a hypothesized value (µ ≠ µ₀).

When the p-value is less than or equal to your significance level (e.g., 0.05), reject the null hypothesis. The difference between your sample mean and the hypothesized value is statistically significant. Your sample data support the notion that the population mean does not equal the hypothesized value.

Related posts: Null Hypothesis: Definition, Rejecting & Examples and Understanding Significance Levels

Two-Sample Z Test Hypotheses

- Null hypothesis (H₀): Two population means are equal (µ₁ = µ₂).

- Alternative hypothesis (Hₐ): Two population means are not equal (µ₁ ≠ µ₂).

Again, when the p-value is less than or equal to your significance level, reject the null hypothesis. The difference between the two means is statistically significant. Your sample data support the idea that the two population means are different.

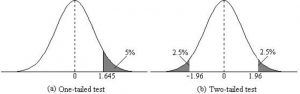

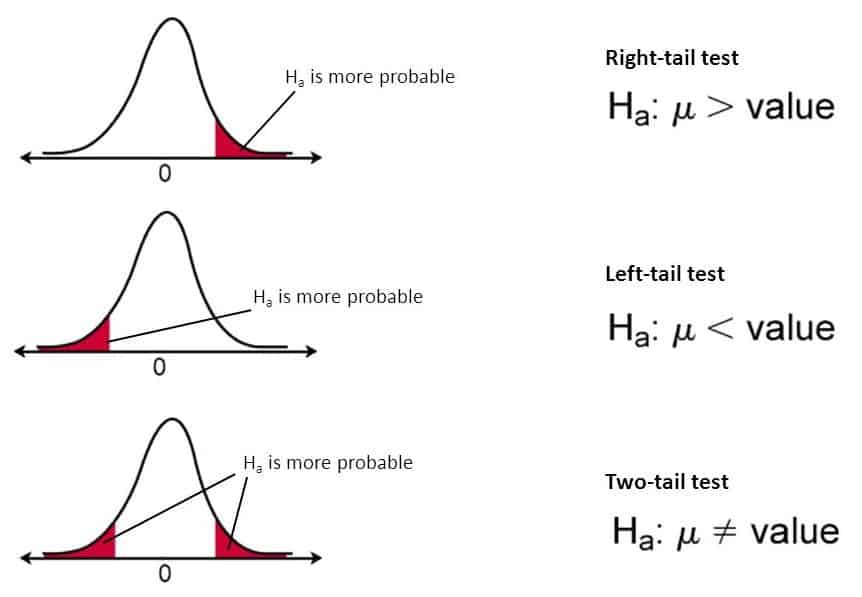

These hypotheses are for two-sided analyses. You can use one-sided, directional hypotheses instead. Learn more in my post, One-Tailed and Two-Tailed Hypothesis Tests Explained.

Related posts: How to Interpret P Values and Statistical Significance

Z Test Assumptions

For reliable results, your data should satisfy the following assumptions:

You have a random sample

Drawing a random sample from your target population helps ensure that the sample represents the population. Representative samples are crucial for accurately inferring population properties. The Z test results won’t be valid if your data do not reflect the population.

Related posts: Random Sampling and Representative Samples

Continuous data

Z tests require continuous data. Continuous variables can assume any numeric value, and the scale can be divided meaningfully into smaller increments, such as fractional and decimal values. For example, weight, height, and temperature are continuous.

Other analyses can assess additional data types. For more information, read Comparing Hypothesis Tests for Continuous, Binary, and Count Data.

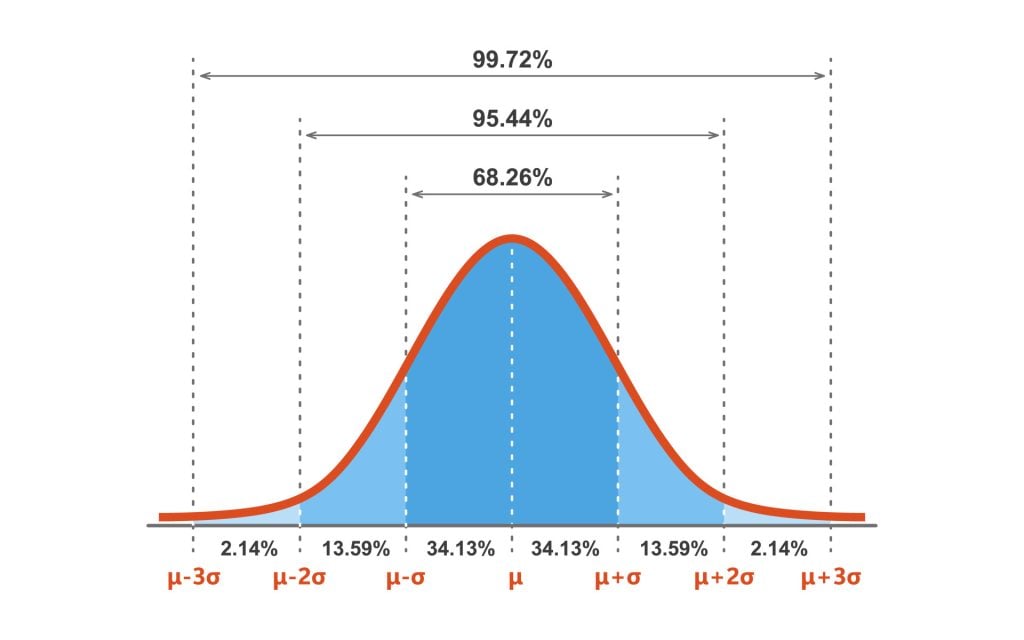

Your sample data follow a normal distribution, or you have a large sample size

All Z tests assume your data follow a normal distribution. However, due to the central limit theorem, you can ignore this assumption when your sample is large enough.

The following sample size guidelines indicate when normality becomes less of a concern:

- One-Sample: 20 or more observations.

- Two-Sample: At least 15 in each group.
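To make the central limit theorem concrete, the sketch below simulates sample means from a strongly right-skewed exponential population and reports how skewed those means are. The exponential population is an illustrative assumption, not a dataset from this post. For an exponential population, the skewness of the sample mean is 2/√n, so it shrinks as n grows, which is why normality matters less for larger samples. NumPy and SciPy are assumed, and the seed and repetition count are arbitrary.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(42)
reps = 20_000

def skewness_of_means(n):
    """Skewness of simulated sample means drawn from an exponential population."""
    samples = rng.exponential(scale=1.0, size=(reps, n))
    return stats.skew(samples.mean(axis=1))

for n in (1, 5, 20, 50):
    print(f"n={n:>2}: skewness of sample means = {skewness_of_means(n):.2f} "
          f"(theory {2 / np.sqrt(n):.2f})")
```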

Related posts: Central Limit Theorem and Skewed Distributions

Independent samples

For the two-sample analysis, the groups must contain different sets of items. This analysis compares two distinct samples.

Related post: Independent and Dependent Samples

Population standard deviation is known

As I mentioned in the Z test vs T test section, use a Z test when you know the population standard deviation. However, when n > 30, the difference between the analyses becomes trivial.

Related post: Standard Deviations

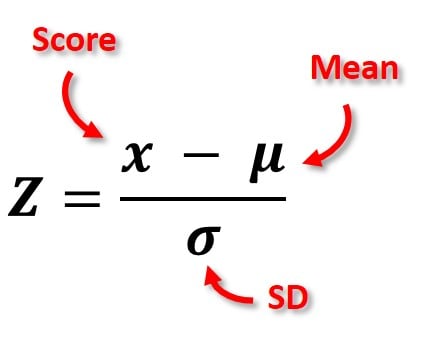

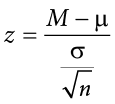

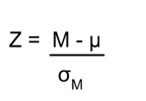

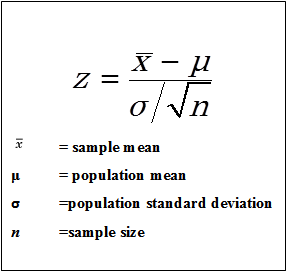

Z Test Formula

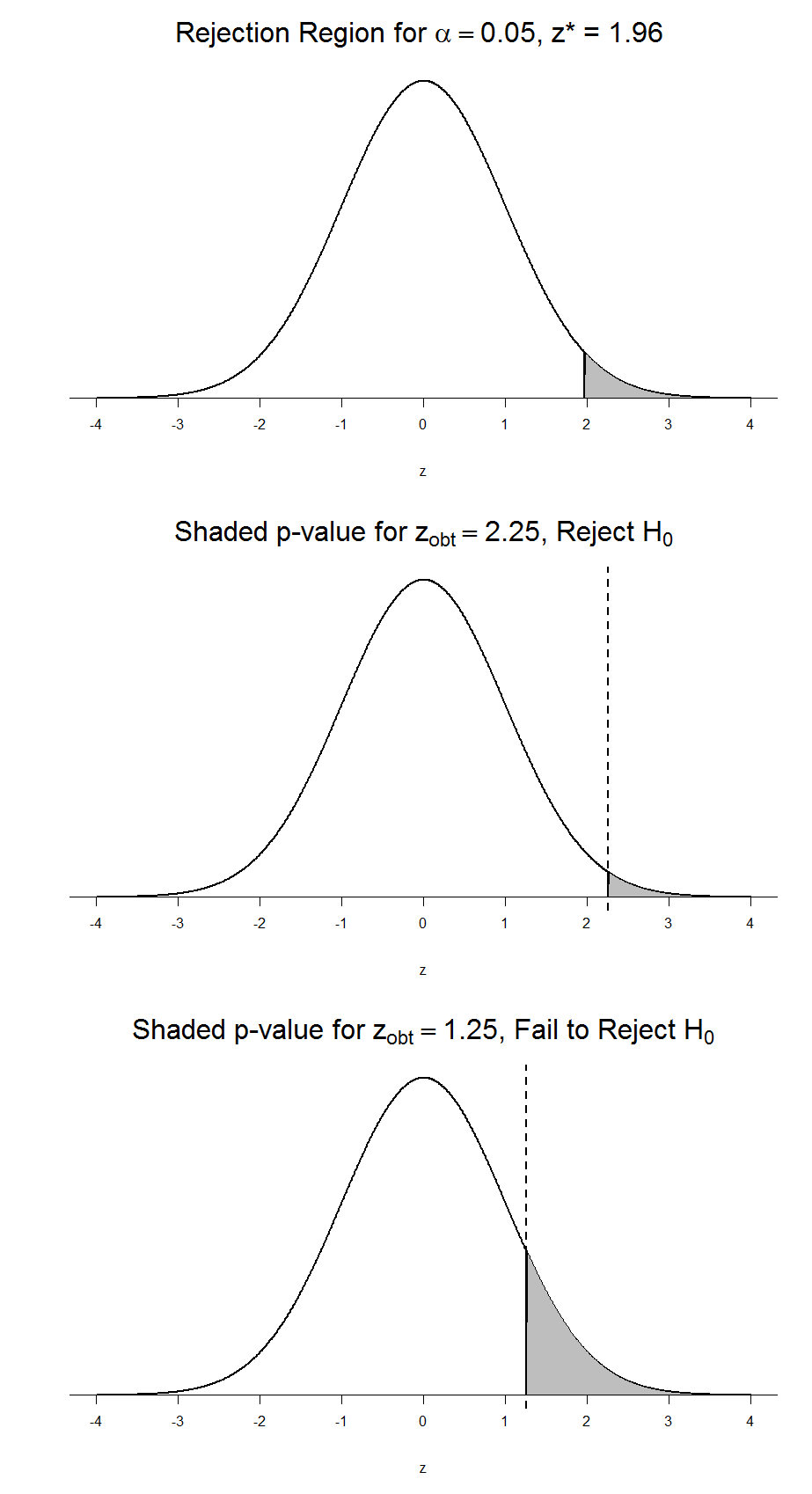

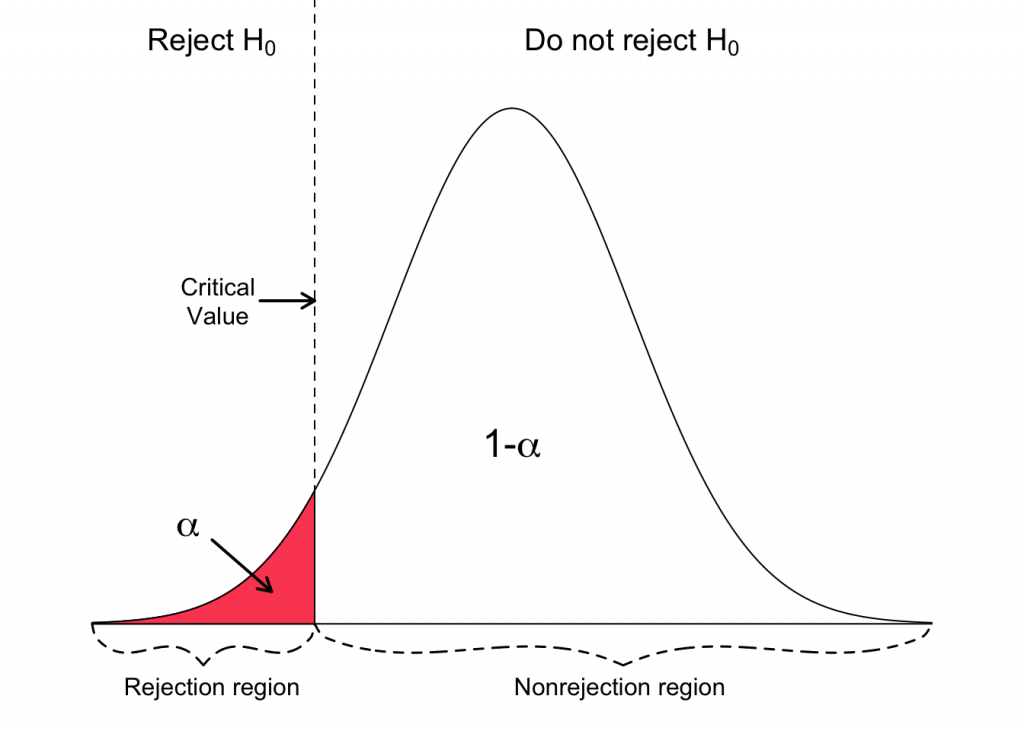

These Z test formulas allow you to calculate the test statistic. Use the Z statistic to determine statistical significance by comparing it to the appropriate critical values and use it to find p-values.

The correct formula depends on whether you're performing a one- or two-sample analysis. Both formulas require sample means (x̅) and sample sizes (n) from your sample. Additionally, you specify the population standard deviation (σ) or variance (σ²), which does not come from your sample.

I present a worked example using the Z test formula at the end of this post.

Learn more about Z-Scores and Test Statistics.

One Sample Z Test Formula

The one sample Z test formula is a ratio.

The numerator is the difference between your sample mean and a hypothesized value for the population mean (µ 0 ). This value is often a strawman argument that you hope to disprove.

The denominator is the standard error of the mean. It represents the uncertainty in how well the sample mean estimates the population mean.

Learn more about the Standard Error of the Mean.
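Written out, the ratio described above is Z = (x̄ − µ₀) / (σ / √n), where σ / √n is the standard error of the mean. The following Python sketch computes it along with a two-tailed p-value from the standard normal distribution. The function name one_sample_z_test is hypothetical, and SciPy is assumed to be available.

```python
import math
from scipy import stats

def one_sample_z_test(sample_mean, mu0, sigma, n):
    """Return (z, two-tailed p-value) for a one-sample Z test.

    sigma is the known population standard deviation, not a sample estimate.
    """
    standard_error = sigma / math.sqrt(n)     # denominator: SE of the mean
    z = (sample_mean - mu0) / standard_error  # numerator: sample mean minus hypothesized mean
    p_value = 2 * stats.norm.sf(abs(z))       # area in both tails beyond |z|
    return z, p_value
```

Passing the worked example's inputs, one_sample_z_test(107, 100, 15, 25), gives z ≈ 2.333 and p ≈ 0.0196.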

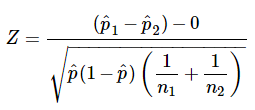

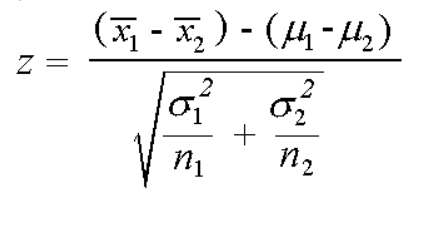

Two Sample Z Test Formula

The two sample Z test formula is also a ratio.

The numerator is the difference between your two sample means.

The denominator calculates the pooled standard error of the mean by combining both samples. In this Z test formula, enter the population variances (σ²) for each sample.
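In symbols, the two-sample statistic is Z = (x̄₁ − x̄₂) / √(σ₁²/n₁ + σ₂²/n₂). The sketch below mirrors that ratio and adds an optional hypothesized difference (diff0, zero by default). The helper name and parameters are hypothetical. The variances are assumed to be known population values, and SciPy is assumed for the p-value.

```python
import math
from scipy import stats

def two_sample_z_test(mean1, mean2, var1, var2, n1, n2, diff0=0.0):
    """Return (z, two-tailed p-value) for a two-sample Z test.

    var1 and var2 are known population variances (sigma squared) for each group.
    diff0 is the hypothesized difference mu1 - mu2 under the null hypothesis.
    """
    standard_error = math.sqrt(var1 / n1 + var2 / n2)  # combines both samples
    z = ((mean1 - mean2) - diff0) / standard_error
    p_value = 2 * stats.norm.sf(abs(z))
    return z, p_value
```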

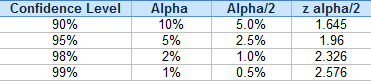

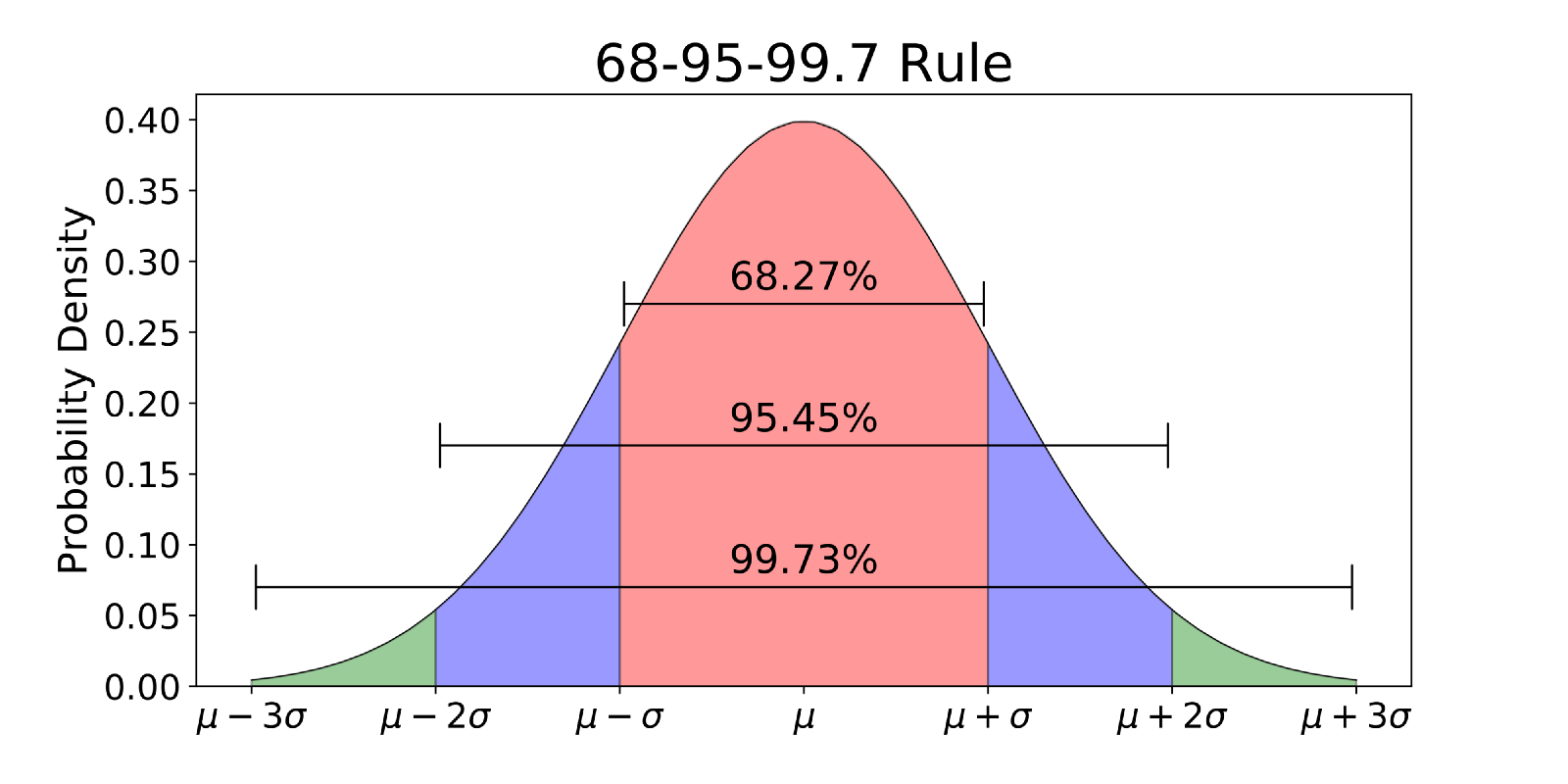

Z Test Critical Values

As I mentioned in the Z vs T test section, a Z test does not use degrees of freedom. It evaluates Z-scores in the context of the standard normal distribution. Unlike the t-distribution, the standard normal distribution doesn't change shape as the sample size changes. Consequently, the critical values don't change with the sample size.

To find the critical value for a Z test, you need to know the significance level and whether it is one- or two-tailed.

| Significance Level | Tails | Critical Value |
|---|---|---|
| 0.01 | Two-Tailed | ±2.576 |
| 0.01 | Left Tail | –2.326 |
| 0.01 | Right Tail | +2.326 |
| 0.05 | Two-Tailed | ±1.960 |
| 0.05 | Left Tail | –1.645 |
| 0.05 | Right Tail | +1.645 |
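Rather than memorizing the table, you can regenerate it from the inverse CDF (quantile function) of the standard normal distribution. This sketch assumes SciPy, where stats.norm.ppf(q) returns the Z-score with area q to its left. z_critical is a hypothetical helper name.

```python
from scipy import stats

def z_critical(alpha, tail):
    """Critical Z value(s) for a significance level and tail ('two', 'left', 'right')."""
    if tail == "two":
        c = stats.norm.ppf(1 - alpha / 2)
        return (-c, c)                    # reject if z < -c or z > c
    if tail == "left":
        return stats.norm.ppf(alpha)      # reject if z is below this negative value
    if tail == "right":
        return stats.norm.ppf(1 - alpha)  # reject if z is above this positive value
    raise ValueError("tail must be 'two', 'left', or 'right'")

for alpha in (0.01, 0.05):
    for tail in ("two", "left", "right"):
        crit = z_critical(alpha, tail)
        shown = f"±{crit[1]:.3f}" if tail == "two" else f"{crit:+.3f}"
        print(f"{alpha:<5} {tail:<6} {shown}")
```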

Learn more about Critical Values: Definition, Finding & Calculator.

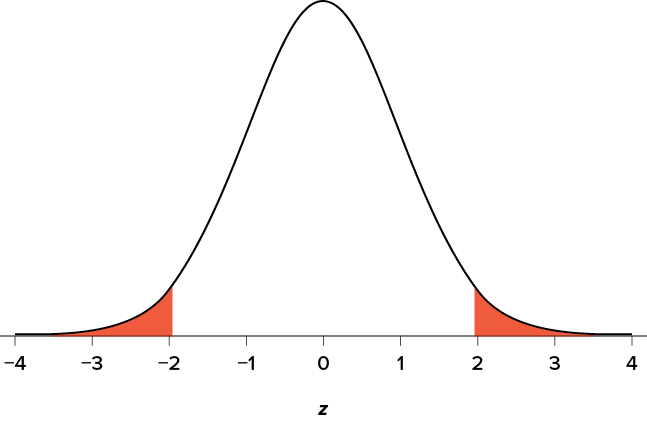

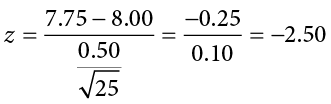

Z Test Worked Example

Let’s close this post by calculating the results for a Z test by hand!

Suppose we randomly sampled subjects from an honors program. We want to determine whether their mean IQ score differs from the general population. The general population’s IQ scores are defined as having a mean of 100 and a standard deviation of 15.

We’ll determine whether the difference between our sample mean and the hypothesized population mean of 100 is statistically significant.

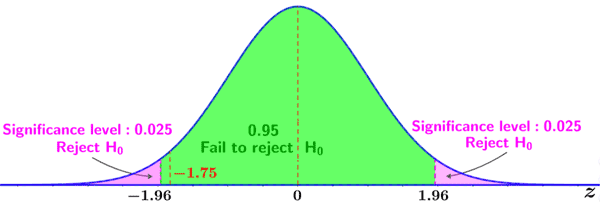

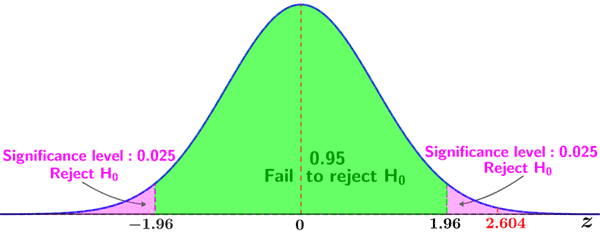

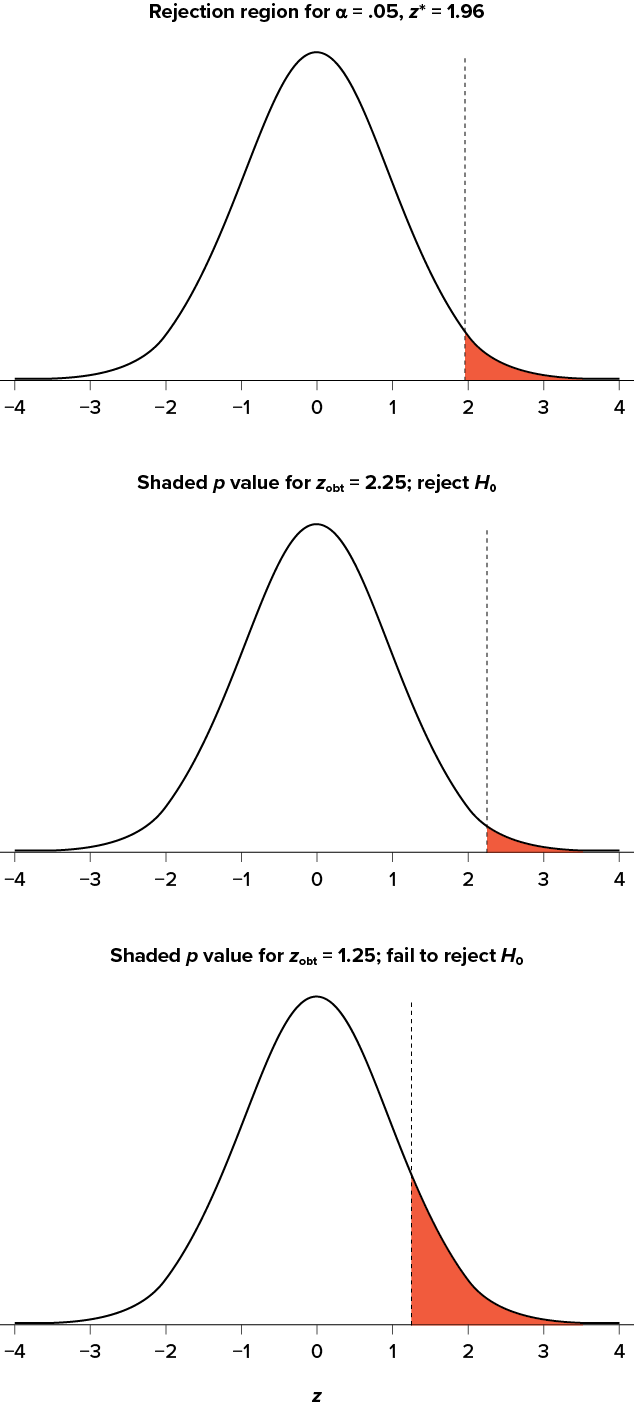

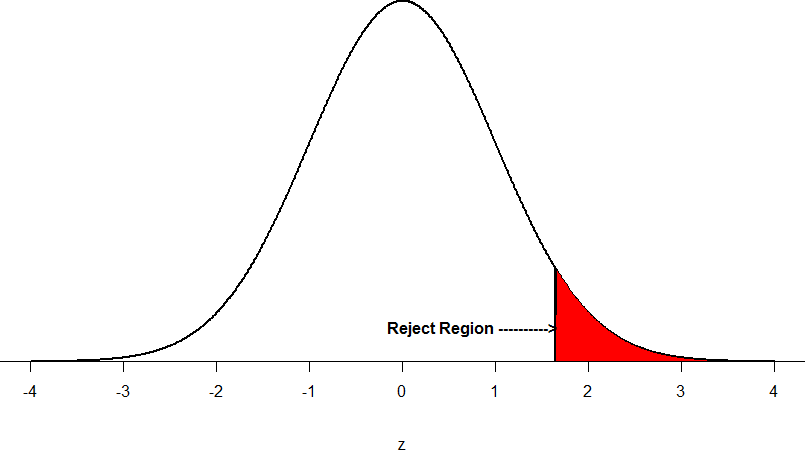

Specifically, we’ll use a two-tailed analysis with a significance level of 0.05. Looking at the table above, you’ll see that this Z test has critical values of ± 1.960. Our results are statistically significant if our Z statistic is below –1.960 or above +1.960.

The hypotheses are the following:

- Null (H₀): µ = 100

- Alternative (Hₐ): µ ≠ 100

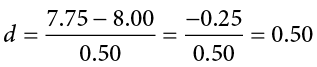

Entering Our Results into the Formula

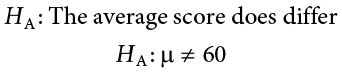

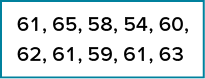

Here are the values from our study that we need to enter into the Z test formula:

- IQ score sample mean (x̅): 107

- Sample size (n): 25

- Hypothesized population mean (µ₀): 100

- Population standard deviation (σ): 15

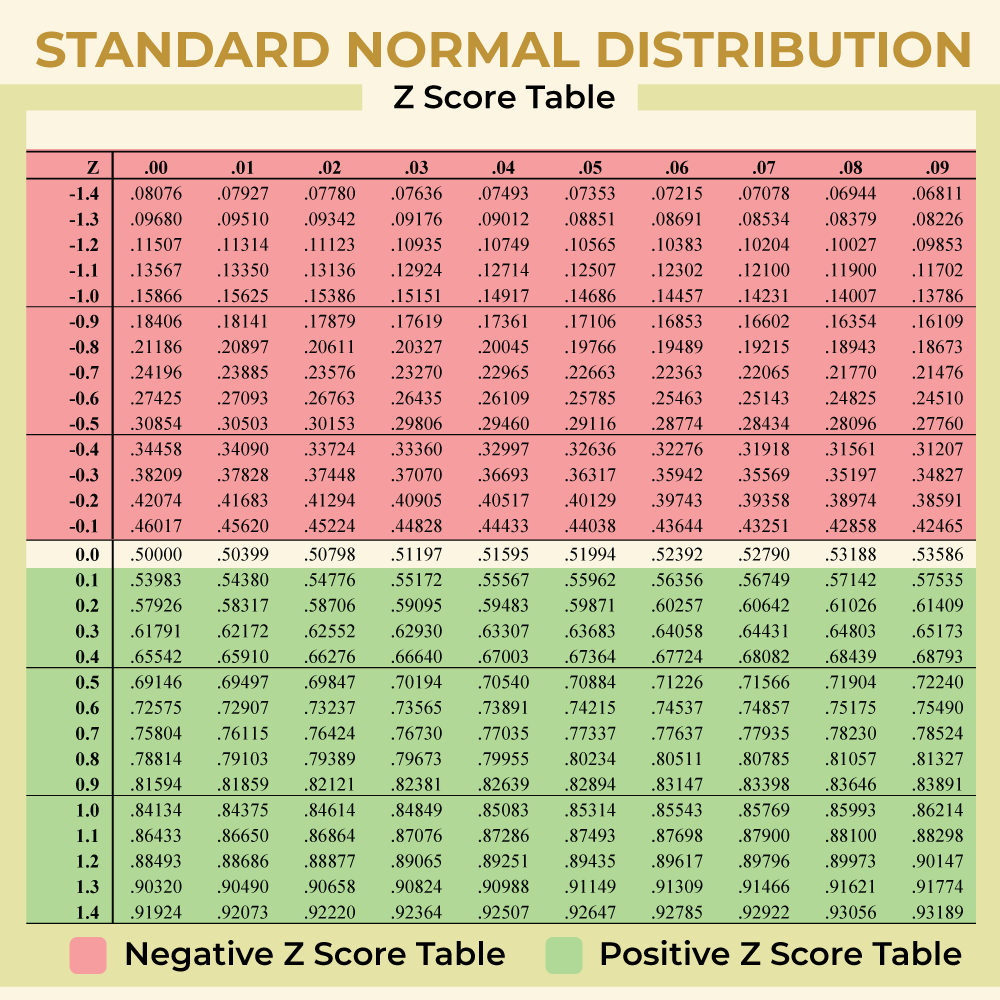

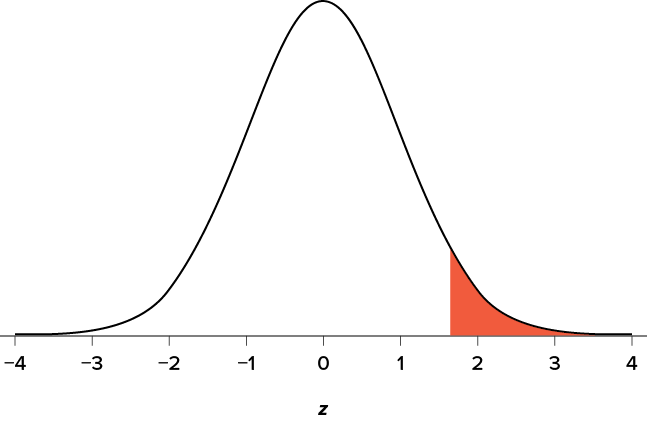

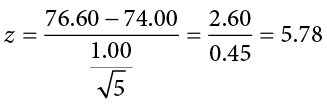

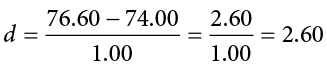

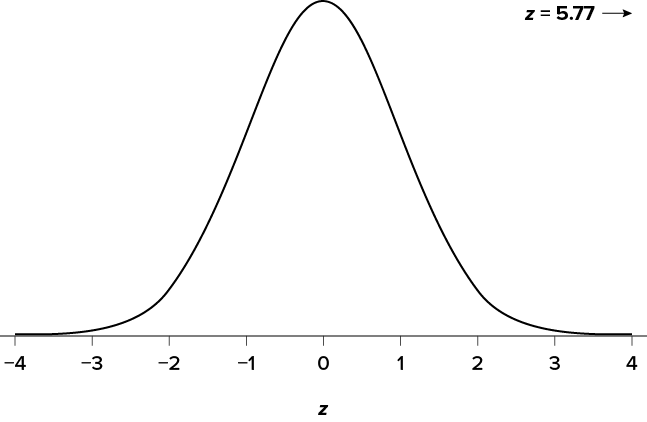

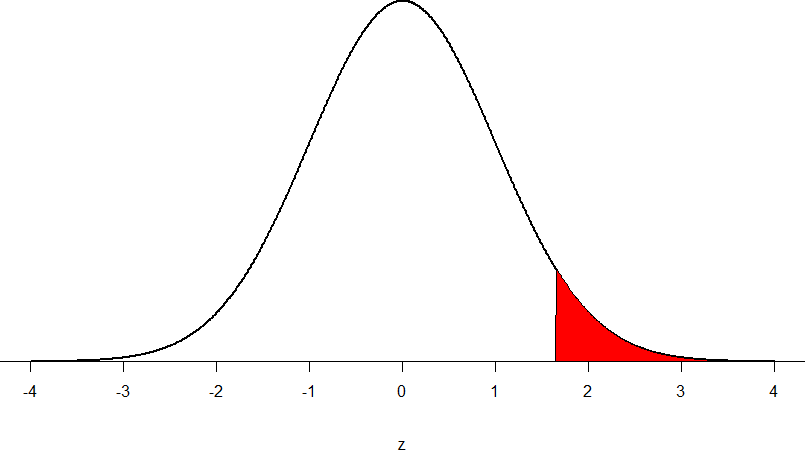

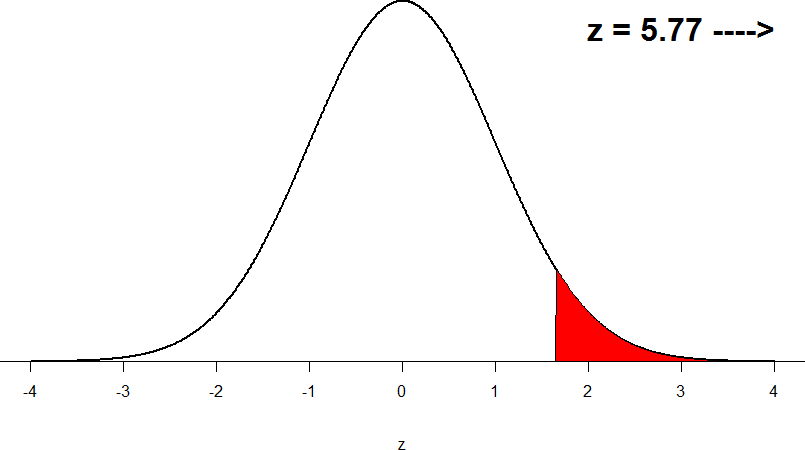

The Z-score is 2.333. This value is greater than the critical value of 1.960, making the results statistically significant. Below is a graphical representation of our Z test results showing how the Z statistic falls within the critical region.

We can reject the null and conclude that the mean IQ score for the population of honors students does not equal 100. Based on the sample mean of 107, the data indicate that their mean IQ score is higher.

Now let’s find the p-value. We could use technology to do that, such as an online calculator. However, let’s go old school and use a Z table.

To find the p-value that corresponds to a Z-score from a two-tailed analysis, we look up the area to the left of the negative of the Z-score's absolute value (even when the Z-score is positive) and double it.

In the truncated Z-table below, I highlight the cell corresponding to a Z-score of -2.33.

The cell value of 0.00990 represents the area or probability to the left of the Z-score -2.33. We need to double it to include the area > +2.33 to obtain the p-value for a two-tailed analysis.

P-value = 0.00990 * 2 = 0.0198

That p-value is an approximation because it uses a Z-score of 2.33 rather than 2.333. Using an online calculator, the p-value for our Z test is a more precise 0.0196. This p-value is less than our significance level of 0.05, which reconfirms the statistically significant results.
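To double-check the hand calculation, the sketch below recomputes the Z-score and both p-values. It assumes SciPy, where stats.norm.cdf plays the role of the Z-table lookup. The first p-value rounds Z to 2.33 as the table does, and the second uses the unrounded 2.333.

```python
import math
from scipy import stats

sample_mean, mu0, sigma, n = 107, 100, 15, 25

z = (sample_mean - mu0) / (sigma / math.sqrt(n))  # 7 / 3 = 2.333...

# Z-table style: area left of -2.33 (rounded), doubled for two tails
p_table = 2 * stats.norm.cdf(-round(abs(z), 2))
# Unrounded: area left of -|z|, doubled
p_exact = 2 * stats.norm.cdf(-abs(z))

print(f"z = {z:.3f}")
print(f"p (Z-table, z rounded to 2.33) = {p_table:.4f}")
print(f"p (unrounded z) = {p_exact:.4f}")
```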

See my full Z-table, which explains how to use it to solve other types of problems.

Z-test: Formula, Types, Examples

Z-test is especially useful when you have a large sample size and know the population's standard deviation. Statistical tests such as the Z-test compare distinct samples or groups and draw conclusions about populations. They examine how probable the observed data would be under particular premises or hypotheses, offering a framework for evaluating the evidence for or against a given hypothesis.

What is Z-Test?

Z-test is a statistical test that is used to determine whether the mean of a sample is significantly different from a known population mean when the population standard deviation is known. It is particularly useful when the sample size is large (>30).

More generally, a Z-test is any statistical test whose test statistic, under the null hypothesis, can be approximated by a normal distribution. It can also be used to determine whether two sample means are approximately the same or different when their variances are known and the sample size is large (n ≥ 30).

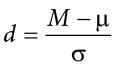

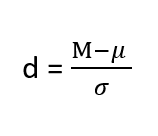

The Z-test compares the difference between the sample mean and the population mean by considering the standard deviation of the sampling distribution, known as the standard error of the mean ([Tex]\sigma/\sqrt{n}[/Tex]). The resulting Z-score represents the number of standard errors by which the sample mean deviates from the population mean. This Z-score is also known as the Z-statistic and can be formulated as:

[Tex]\text{Z-Score} = \frac{\bar{x}-\mu}{\sigma/\sqrt{n}} [/Tex]

- [Tex]\bar{x} [/Tex] : mean of the sample.

- [Tex]\mu [/Tex] : mean of the population.

- [Tex]\sigma [/Tex] : standard deviation of the population.

- [Tex]n [/Tex] : sample size.

The Z-test assumes that the test statistic (Z-score) follows a standard normal distribution when the null hypothesis is true.

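For example, suppose the average annual family income in India is 200k, with a standard deviation of 5k, and the average annual family income in Delhi is 300k.

Treating Delhi's average as a single value compared against the national distribution, the Z-score for Delhi is: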

[Tex]\begin{aligned} \text{Z-Score}&=\frac{\bar{x}-\mu}{\sigma} \\&=\frac{300-200}{5} \\&=20 \end{aligned} [/Tex]

This indicates that the average family’s annual income in Delhi is 20 standard deviations above the mean of the population (India).

- The sample size should be greater than 30. Otherwise, we should use the t-test.

- Samples should be drawn at random from the population.

- The standard deviation of the population should be known.

- Samples that are drawn from the population should be independent of each other.

- The data should be normally distributed. However, for a large sample size, the sampling distribution of the mean is approximately normal because of the central limit theorem.

A hypothesis is an educated guess or claim about a particular property of a population. Hypothesis testing is a way to evaluate such a claim using sample data.

- Null Hypothesis: The null hypothesis is a statement that the value of a population parameter (such as proportion, mean, or standard deviation) is equal to some claimed value. We either reject or fail to reject the null hypothesis. The null hypothesis is denoted by [Tex]H_0[/Tex].

- Alternate Hypothesis: The alternative hypothesis is the statement that the parameter has a value that is different from the claimed value. It is denoted by [Tex]H_A[/Tex].

- Level of significance: The probability of rejecting the null hypothesis when it is actually true that we are willing to accept. Because no experiment can accept or reject a hypothesis with 100% certainty, we select this level before running the test. It is denoted by alpha (α).

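- First, identify the null and alternate hypotheses.

- Determine the level of significance (α).

- Find the critical value of z for that significance level and type of test (left-tailed, right-tailed, or two-tailed), for example from a z-table.

- Calculate the z-test statistic: [Tex]Z = \frac{\bar{x}-\mu}{\sigma/\sqrt{n}} [/Tex], where n is the sample size.

- Now compare the test statistic with the critical value and decide whether to reject or fail to reject the null hypothesis.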
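The procedure in the list above can be sketched as a single Python function for the one-sample case. The function name and the tail argument values ('two-sided', 'left', 'right') are hypothetical choices for illustration. SciPy is assumed for the critical values.

```python
import math
from scipy import stats

def z_test_decision(sample_mean, mu0, sigma, n, alpha=0.05, tail="two-sided"):
    """Return (z, critical value, reject?) for a one-sample Z test."""
    # Critical value for the chosen significance level and tail
    if tail == "two-sided":
        z_crit = stats.norm.ppf(1 - alpha / 2)  # compared with |z|
    elif tail == "left":
        z_crit = stats.norm.ppf(alpha)          # negative value
    elif tail == "right":
        z_crit = stats.norm.ppf(1 - alpha)      # positive value
    else:
        raise ValueError("tail must be 'two-sided', 'left', or 'right'")

    # Test statistic using the known population standard deviation
    z = (sample_mean - mu0) / (sigma / math.sqrt(n))

    # Compare the statistic with the critical value and decide
    if tail == "two-sided":
        reject = abs(z) > z_crit
    elif tail == "left":
        reject = z < z_crit
    else:
        reject = z > z_crit
    return z, z_crit, reject
```

For example, z_test_decision(110, 100, 15, 50, tail="right") uses the inputs of the one-tailed IQ example below. It returns z ≈ 4.71, a critical value of about 1.645, and a decision to reject.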

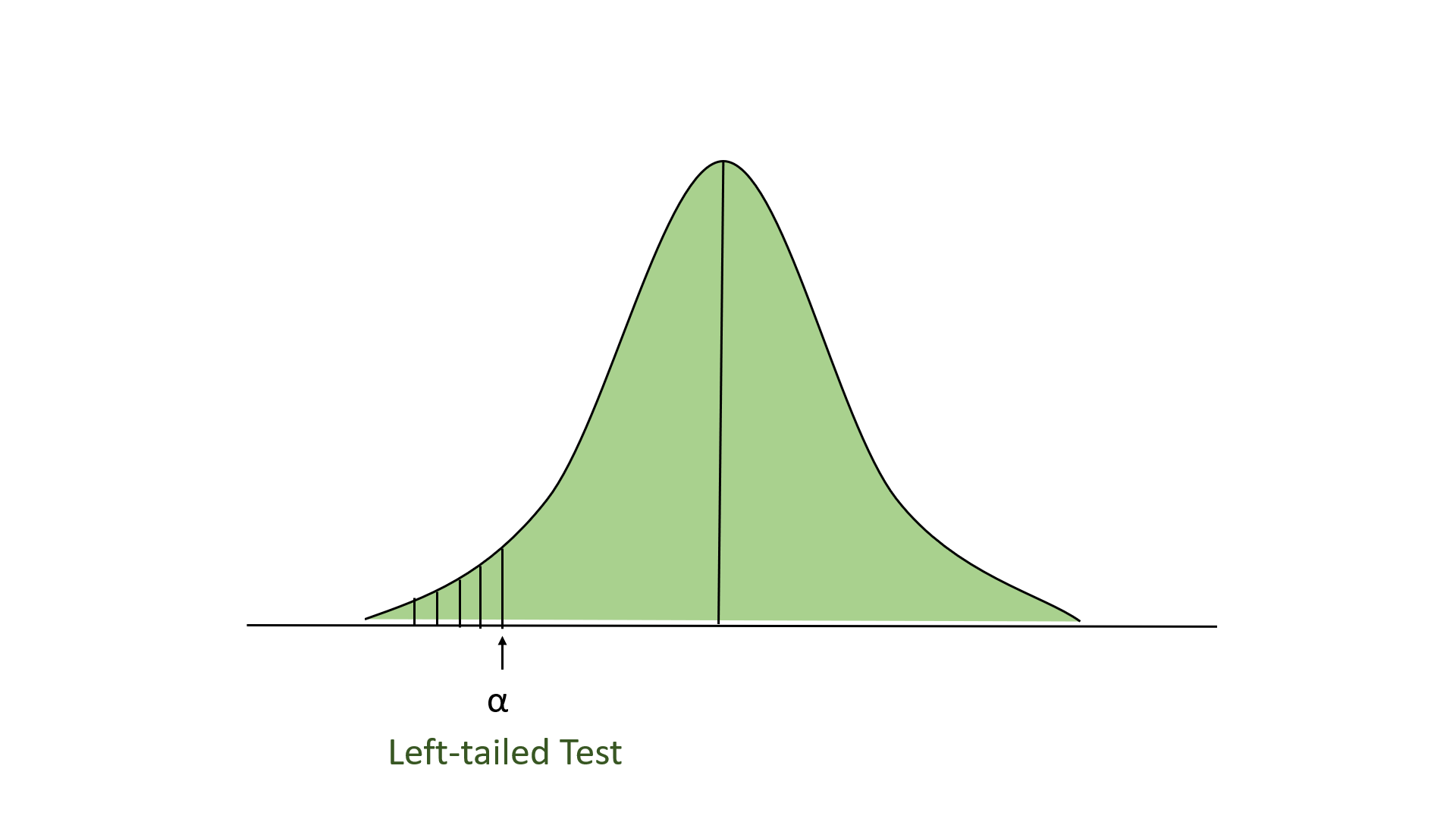

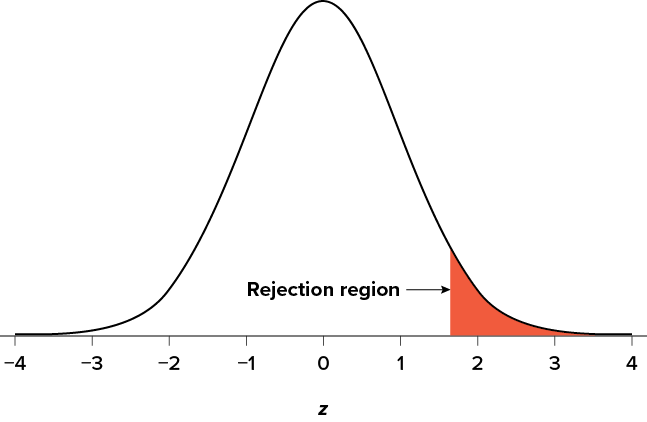

Left-tailed Test

In this test, our region of rejection is located at the extreme left of the distribution. Here the null hypothesis is that the population mean is greater than or equal to the claimed value ([Tex]H_0: \mu \ge \mu_0[/Tex]), and the alternative is that it is less ([Tex]H_A: \mu < \mu_0[/Tex]).

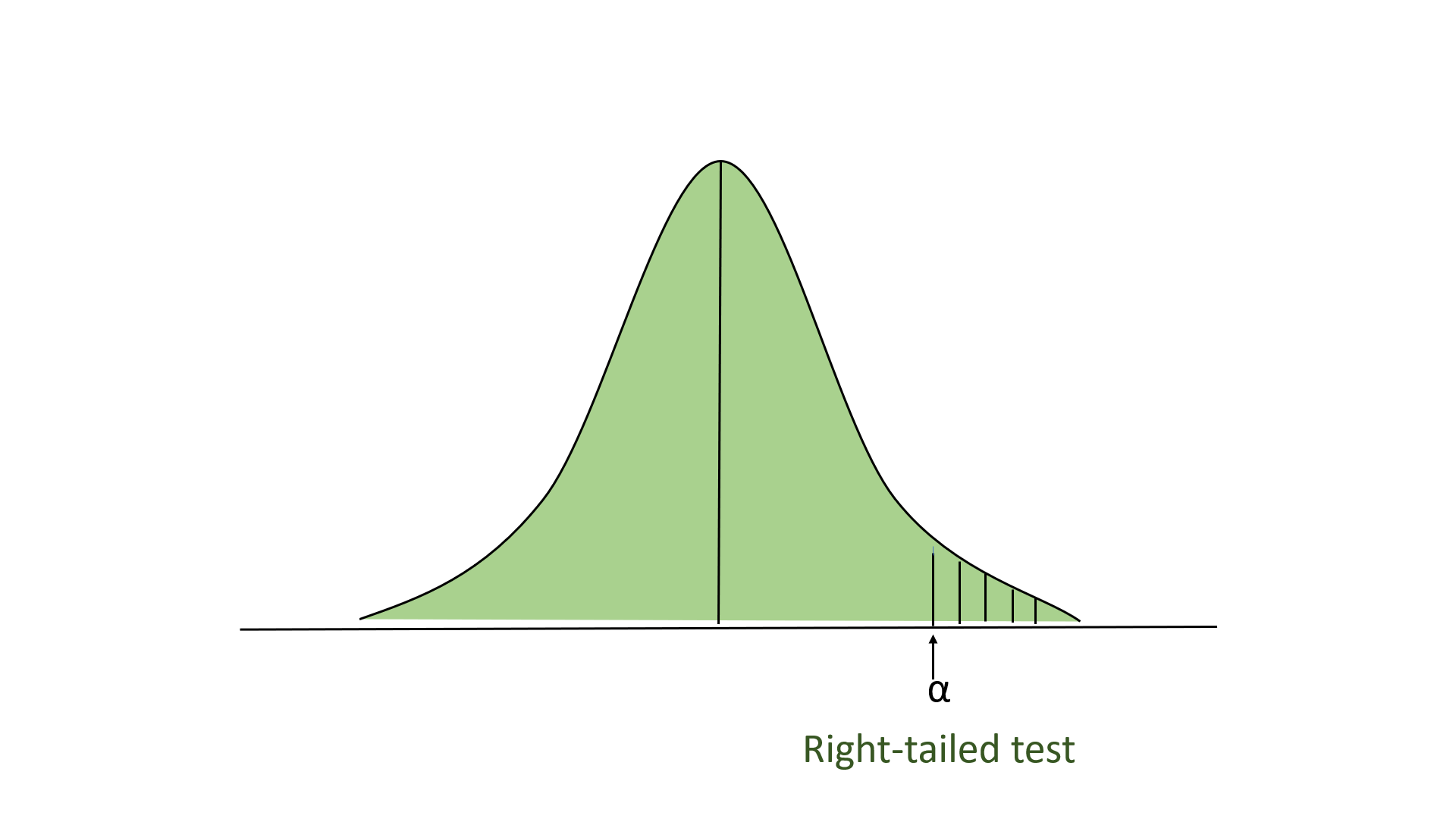

Right-tailed Test

In this test, our region of rejection is located at the extreme right of the distribution. Here the null hypothesis is that the population mean is less than or equal to the claimed value ([Tex]H_0: \mu \le \mu_0[/Tex]), and the alternative is that it is greater ([Tex]H_A: \mu > \mu_0[/Tex]).

One-Tailed Test

A school claims that its students are more intelligent than the average student. The IQ scores of 50 of its students average 110. The population mean IQ is 100, and the population standard deviation is 15. At a 5% significance level, state whether the principal's claim is supported.

- First, we define the null hypothesis and the alternate hypothesis. Our null hypothesis is [Tex]H_0 : \mu = 100 [/Tex], and our alternate hypothesis is [Tex]H_A : \mu > 100 [/Tex].

- State the level of significance. Here, it is given in the question ([Tex]\alpha = 0.05 [/Tex]). If it is not given, we generally take α = 0.05.

- Now, we compute the Z-score using the sample mean x̄ = 110, population mean µ = 100, population standard deviation σ = 15, and sample size n = 50: [Tex]\begin{aligned} \text{Z-Score}&=\frac{\bar{x}-\mu}{\sigma/\sqrt{n}} \\&=\frac{110-100}{15/\sqrt{50}} \\&=\frac{10}{2.12} \\&\approx 4.71 \end{aligned} [/Tex]

- Next, we look up the critical value in the z-table. For α = 0.05, the critical z-score for a right-tailed test is 1.645.

- Here 4.71 > 1.645, so we reject the null hypothesis.

- If the z-test statistic were less than the critical z-score, we would fail to reject the null hypothesis.

Code Implementation of the One-Tailed Z-Test

```python
# Import the necessary libraries
import numpy as np
import scipy.stats as stats

# Given information
sample_mean = 110
population_mean = 100
population_std = 15
sample_size = 50
alpha = 0.05

# Compute the z-score (the standard error uses the sample size)
z_score = (sample_mean - population_mean) / (population_std / np.sqrt(sample_size))
print('Z-Score :', z_score)

# Approach 1: Using the critical Z-score
# Critical Z-score for a right-tailed test
z_critical = stats.norm.ppf(1 - alpha)
print('Critical Z-Score :', z_critical)

# Hypothesis decision
if z_score > z_critical:
    print("Reject Null Hypothesis")
else:
    print("Fail to Reject Null Hypothesis")

# Approach 2: Using the p-value
# P-value: probability of a Z-score greater than the observed one (right tail)
p_value = 1 - stats.norm.cdf(z_score)
print('p-value :', p_value)

# Hypothesis decision
if p_value < alpha:
    print("Reject Null Hypothesis")
else:
    print("Fail to Reject Null Hypothesis")
```

```
Z-Score : 4.714045207910317
Critical Z-Score : 1.6448536269514722
Reject Null Hypothesis
p-value : 1.2142337364462463e-06
Reject Null Hypothesis
```

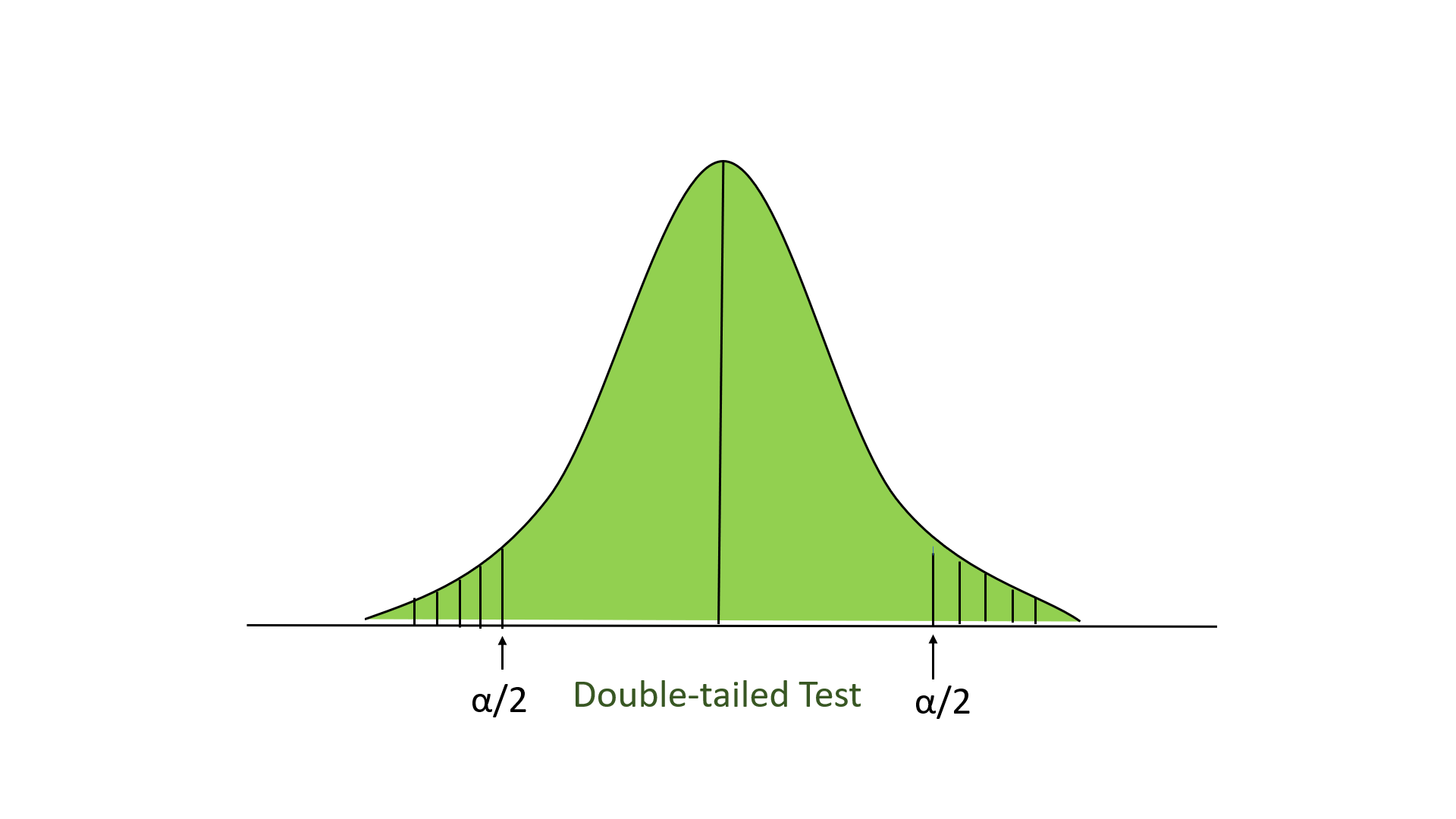

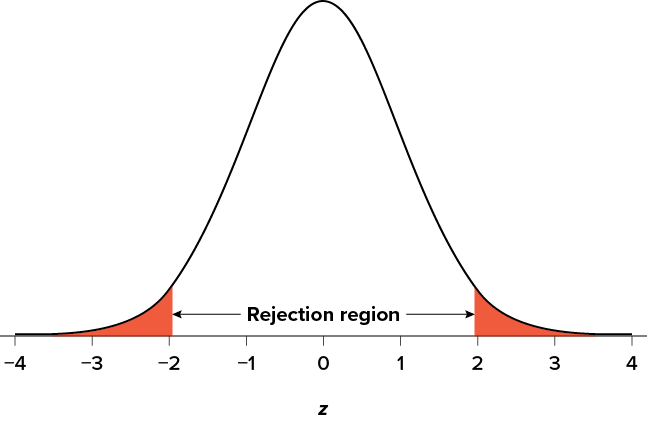

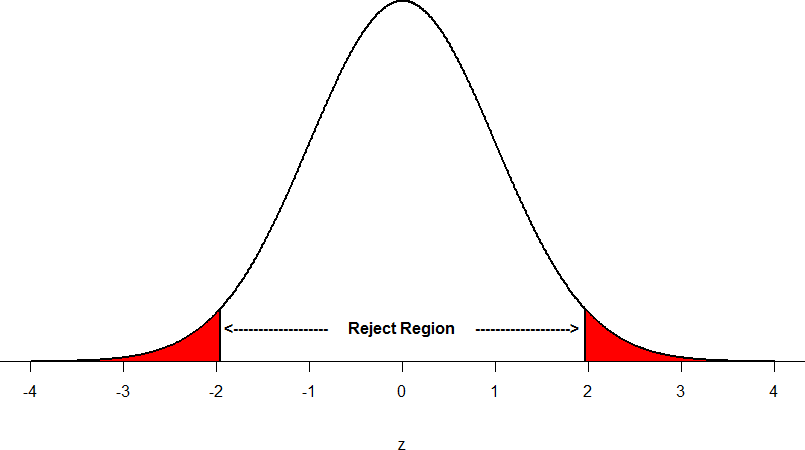

Two-tailed test

In this test, our region of rejection is located at both extremes of the distribution. Here the null hypothesis is that the population mean equals the claimed value, and the alternative is that it differs.

Below is an example of performing the z-test:

Two-sample z-test

In this test, we have two normally distributed, independent populations, and we draw a random sample from each. Here, [Tex]\mu_1[/Tex] and [Tex]\mu_2[/Tex] are the population means, and [Tex]\overline{X_1}[/Tex] and [Tex]\overline{X_2}[/Tex] are the observed sample means. The null hypothesis can be stated as:

[Tex]H_{0} : \mu_{1} -\mu_{2} = 0 [/Tex]

and alternative hypothesis

[Tex]H_{1} : \mu_{1} - \mu_{2} \ne 0 [/Tex]

and the formula for calculating the z-test score:

[Tex]Z = \frac{\left ( \overline{X_{1}} - \overline{X_{2}} \right ) - \left ( \mu_{1} - \mu_{2} \right )}{\sqrt{\frac{\sigma_{1}^2}{n_{1}} + \frac{\sigma_{2}^2}{n_{2}}}} [/Tex]

where [Tex]\sigma_1 [/Tex] and [Tex]\sigma_2 [/Tex] are the population standard deviations, and [Tex]n_1[/Tex] and [Tex]n_2[/Tex] are the sample sizes drawn from the populations with means [Tex]\mu_1[/Tex] and [Tex]\mu_2[/Tex].

There are two groups of students preparing for a competition: Group A and Group B. Group A studied in offline classes, while Group B studied in online classes. After the examination, each student's score is recorded. We want to determine whether there is a difference in performance between the online and offline classes.

Group A: Sample size = 50, Sample mean = 75, Sample standard deviation = 10

Group B: Sample size = 60, Sample mean = 80, Sample standard deviation = 12

Assuming a 5% significance level, perform a two-sample z-test to determine if there is a significant difference between the online and offline classes.

Step 1: Null & Alternate Hypothesis

- Null Hypothesis: There is no significant difference between the mean score between the online and offline classes [Tex] \mu_1 -\mu_2 = 0 [/Tex]

- Alternate Hypothesis: There is a significant difference in the mean scores between the online and offline classes. [Tex] \mu_1 -\mu_2 \neq 0 [/Tex]

Step 2: Significance Level

- Significance level: 5% ([Tex]\alpha = 0.05 [/Tex])

Step 3: Z-Score

[Tex]\begin{aligned} \text{Z-score} &= \frac{(\bar{x}_1-\bar{x}_2)-(\mu_1 -\mu_2)} {\sqrt{\frac{\sigma_1^2}{n_1}+\frac{\sigma_2^2}{n_2}}} \\ &= \frac{(75-80)-0} {\sqrt{\frac{10^2}{50}+\frac{12^2}{60}}} \\ &= \frac{-5} {\sqrt{2+2.4}} \\ &= \frac{-5} {2.0976} \\&=-2.384 \end{aligned} [/Tex]

Step 4: Look up the critical Z-score in the Z-table for α/2 = 0.025

- Critical Z-Score = 1.96

Step 5: Compare with the absolute Z-Score value

- |Z-Score| = 2.384 > Critical Z-Score = 1.96

- Reject the null hypothesis. There is a significant difference between the online and offline classes.

Code Implementation of the Two-Sample Z-test

```python
import numpy as np
import scipy.stats as stats

# Group A (offline classes)
n1 = 50
x1 = 75
s1 = 10

# Group B (online classes)
n2 = 60
x2 = 80
s2 = 12

# Null hypothesis: mu_1 - mu_2 = 0
# Hypothesized difference (under the null hypothesis)
D = 0

# Set the significance level
alpha = 0.05

# Calculate the test statistic (z-score)
z_score = ((x1 - x2) - D) / np.sqrt((s1**2 / n1) + (s2**2 / n2))
print('Z-Score:', np.abs(z_score))

# Approach 1: Using the critical value (two-tailed)
z_critical = stats.norm.ppf(1 - alpha / 2)
print('Critical Z-Score:', z_critical)

# Compare the test statistic with the critical value
if np.abs(z_score) > z_critical:
    print("Reject the null hypothesis. There is a significant difference between the online and offline classes.")
else:
    print("Fail to reject the null hypothesis. There is not enough evidence to suggest a significant difference between the online and offline classes.")

# Approach 2: Using the p-value
# P-value: probability of a |Z| at least as large as observed, in either tail
p_value = 2 * (1 - stats.norm.cdf(np.abs(z_score)))
print('P-Value :', p_value)

# Compare the p-value with the significance level
if p_value < alpha:
    print("Reject the null hypothesis. There is a significant difference between the online and offline classes.")
else:
    print("Fail to reject the null hypothesis. There is not enough evidence to suggest a significant difference between the online and offline classes.")
```

```
Z-Score: 2.3836564731139807
Critical Z-Score: 1.959963984540054
Reject the null hypothesis. There is a significant difference between the online and offline classes.
P-Value : 0.01714159544079563
Reject the null hypothesis. There is a significant difference between the online and offline classes.
```

Solved Examples:

Example 1: One-sample Z-test

Problem: A company claims that the average battery life of their new smartphone is 12 hours. A consumer group tests 100 phones and finds the average battery life to be 11.8 hours with a population standard deviation of 0.5 hours. At a 5% significance level, is there evidence to refute the company’s claim?

Solution:

- Step 1: State the hypotheses. H₀: μ = 12 (null hypothesis); H₁: μ ≠ 12 (alternative hypothesis).

- Step 2: Calculate the Z-score. Z = (x̄ – μ) / (σ / √n) = (11.8 – 12) / (0.5 / √100) = -0.2 / 0.05 = -4

- Step 3: Find the critical value (two-tailed test at 5% significance): Z₀.₀₂₅ = ±1.96

- Step 4: Compare the Z-score with the critical value. |-4| > 1.96, so we reject the null hypothesis.

Conclusion: There is sufficient evidence to refute the company's claim about battery life.

Example 2: Two-sample Z-test

Problem: A researcher wants to compare the effectiveness of two different medications for reducing blood pressure. Medication A is tested on 50 patients, resulting in a mean reduction of 15 mmHg with a standard deviation of 3 mmHg. Medication B is tested on 60 patients, resulting in a mean reduction of 13 mmHg with a standard deviation of 4 mmHg. At a 1% significance level, is there a significant difference between the two medications?

Solution:

- Step 1: State the hypotheses. H₀: μ₁ – μ₂ = 0 (null hypothesis); H₁: μ₁ – μ₂ ≠ 0 (alternative hypothesis).

- Step 2: Calculate the Z-score. Z = (x̄₁ – x̄₂) / √((σ₁²/n₁) + (σ₂²/n₂)) = (15 – 13) / √((3²/50) + (4²/60)) = 2 / √(0.18 + 0.2667) = 2 / 0.6683 = 2.99

- Step 3: Find the critical value (two-tailed test at 1% significance): Z₀.₀₀₅ = ±2.576

- Step 4: Compare the Z-score with the critical value. 2.99 > 2.576, so we reject the null hypothesis.

Conclusion: There is a significant difference between the effectiveness of the two medications at the 1% significance level.

Problem 3: A polling company claims that 60% of voters support a new policy. In a sample of 1000 voters, 570 support the policy. At a 5% significance level, is there evidence to reject the company's claim?

Solution:

- Step 1: State the hypotheses. H₀: p = 0.60 (null hypothesis); H₁: p ≠ 0.60 (alternative hypothesis).

- Step 2: Calculate the Z-score. p̂ = 570/1000 = 0.57 (sample proportion). Z = (p̂ – p) / √(p(1-p)/n) = (0.57 – 0.60) / √(0.60(1-0.60)/1000) = -0.03 / √(0.24/1000) = -0.03 / 0.0155 = -1.94

- Step 3: Find the critical value (two-tailed test at 5% significance): Z₀.₀₂₅ = ±1.96

- Step 4: Compare the Z-score with the critical value. |-1.94| < 1.96, so we fail to reject the null hypothesis.

Conclusion: There is not enough evidence to refute the polling company's claim at the 5% significance level.
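Problems like this one test a proportion rather than a mean. Below is a minimal sketch of the one-proportion Z-test used in the solution, with the standard error built from the hypothesized proportion p0. The function name is hypothetical, and SciPy is assumed. The normal approximation is generally considered reasonable when n·p0 and n·(1 − p0) are both reasonably large; a common rule of thumb is at least 10.

```python
import math
from scipy import stats

def one_proportion_z_test(successes, n, p0):
    """Return (z, two-tailed p-value) for H0: p = p0 using the normal approximation."""
    p_hat = successes / n
    standard_error = math.sqrt(p0 * (1 - p0) / n)  # uses the hypothesized p0, as in the solution
    z = (p_hat - p0) / standard_error
    return z, 2 * stats.norm.sf(abs(z))
```

With the polling numbers, one_proportion_z_test(570, 1000, 0.60) gives z ≈ -1.94.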

Problem 4: A manufacturer claims that their light bulbs last an average of 1000 hours. A sample of 100 bulbs has a mean life of 985 hours. The population standard deviation is known to be 50 hours. At a 5% significance level, is there evidence to reject the manufacturer's claim?

Solution:

- H₀: μ = 1000; H₁: μ ≠ 1000

- Z = (x̄ – μ) / (σ / √n) = (985 – 1000) / (50 / √100) = -15 / 5 = -3

- Critical value (α = 0.05, two-tailed): ±1.96

- |-3| > 1.96, so reject H₀.

Conclusion: There is sufficient evidence to reject the manufacturer's claim at the 5% significance level.

Problem 5: Two factories produce semiconductors. Factory A's chips have a mean resistance of 100 ohms with a standard deviation of 5 ohms. Factory B's chips have a mean resistance of 98 ohms with a standard deviation of 4 ohms. Samples of 50 chips from each factory are tested. At a 1% significance level, is there a difference in mean resistance between the two factories?

Solution:

- H₀: μA – μB = 0; H₁: μA – μB ≠ 0

- Z = (x̄A – x̄B) / √((σA²/nA) + (σB²/nB)) = (100 – 98) / √((5²/50) + (4²/50)) = 2 / √(0.5 + 0.32) = 2 / 0.906 = 2.21

- Critical value (α = 0.01, two-tailed): ±2.576

- |2.21| < 2.576, so fail to reject H₀.

Conclusion: There is not enough evidence to conclude a difference in mean resistance at the 1% significance level.

Problem 6: A political analyst claims that 40% of voters in a certain district support a new tax policy. In a random sample of 500 voters, 220 support the policy. At a 5% significance level, is there evidence to reject the analyst's claim?

Solution:

- H₀: p = 0.40; H₁: p ≠ 0.40

- p̂ = 220/500 = 0.44

- Z = (p̂ – p) / √(p(1-p)/n) = (0.44 – 0.40) / √(0.40(1-0.40)/500) = 0.04 / 0.0219 = 1.83

- Critical value (α = 0.05, two-tailed): ±1.96

- |1.83| < 1.96, so fail to reject H₀.

Conclusion: There is not enough evidence to reject the analyst's claim at the 5% significance level.

Problem 7: Two advertising methods are compared. Method A results in 150 sales out of 1000 contacts. Method B results in 180 sales out of 1200 contacts. At a 5% significance level, is there a difference in the effectiveness of the two methods?

Solution:

- H₀: pA – pB = 0; H₁: pA – pB ≠ 0

- p̂A = 150/1000 = 0.15; p̂B = 180/1200 = 0.15; pooled p̂ = (150 + 180) / (1000 + 1200) = 0.15

- Z = (p̂A – p̂B) / √(p̂(1-p̂)(1/nA + 1/nB)) = (0.15 – 0.15) / √(0.15(1-0.15)(1/1000 + 1/1200)) = 0 / 0.0153 = 0

- Critical value (α = 0.05, two-tailed): ±1.96

- |0| < 1.96, so fail to reject H₀.

Conclusion: There is no significant difference in the effectiveness of the two advertising methods at the 5% significance level.

Problem 8: A new treatment for a disease is tested in two cities. In City A, 120 out of 400 patients recover. In City B, 140 out of 500 patients recover. At a 5% significance level, is there a difference in the recovery rates between the two cities?

Solution:

- H₀: pA – pB = 0; H₁: pA – pB ≠ 0

- p̂A = 120/400 = 0.30; p̂B = 140/500 = 0.28; pooled p̂ = (120 + 140) / (400 + 500) = 0.2889

- Z = (p̂A – p̂B) / √(p̂(1-p̂)(1/nA + 1/nB)) = (0.30 – 0.28) / √(0.2889(1-0.2889)(1/400 + 1/500)) = 0.02 / 0.0304 = 0.658

- Critical value (α = 0.05, two-tailed): ±1.96

- |0.658| < 1.96, so fail to reject H₀.

Conclusion: There is not enough evidence to conclude a difference in recovery rates between the two cities at the 5% significance level.
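Problems 7 and 8 compare two proportions using a pooled estimate of the common proportion under the null hypothesis. A sketch of that pooled two-proportion Z-test follows. The function name is hypothetical, and SciPy is assumed for the p-value.

```python
import math
from scipy import stats

def two_proportion_z_test(x1, n1, x2, n2):
    """Return (z, two-tailed p-value) for H0: p1 = p2 using the pooled proportion."""
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)  # common proportion assumed under H0
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / standard_error
    return z, 2 * stats.norm.sf(abs(z))
```

With these inputs, two_proportion_z_test(120, 400, 140, 500) gives z ≈ 0.658, and two_proportion_z_test(150, 1000, 180, 1200) gives z = 0.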

Problem 9: A company claims that their product weighs 500 grams on average. A sample of 64 products has a mean weight of 498 grams. The population standard deviation is known to be 8 grams. At a 1% significance level, is there evidence to reject the company's claim?

Solution:

- H₀: μ = 500; H₁: μ ≠ 500

- Z = (x̄ – μ) / (σ / √n) = (498 – 500) / (8 / √64) = -2 / 1 = -2

- Critical value (α = 0.01, two-tailed): ±2.576

- |-2| < 2.576, so fail to reject H₀.

Conclusion: There is not enough evidence to reject the company's claim at the 1% significance level.
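As a quick consistency check on the mean-based examples, the sketch below recomputes each Z-score from the stated inputs and applies the two-tailed decision rule. Recomputing in code is a handy way to catch arithmetic or rounding slips in hand calculations. The helper names and dictionary labels are hypothetical, and SciPy is assumed.

```python
import math
from scipy import stats

def z_one(xbar, mu0, sigma, n):
    """One-sample Z statistic with a known population SD."""
    return (xbar - mu0) / (sigma / math.sqrt(n))

def z_two(x1, x2, s1, s2, n1, n2):
    """Two-sample Z statistic with known population SDs (null difference 0)."""
    return (x1 - x2) / math.sqrt(s1**2 / n1 + s2**2 / n2)

# label: (Z statistic, significance level)
checks = {
    "battery life":    (z_one(11.8, 12, 0.5, 100), 0.05),
    "blood pressure":  (z_two(15, 13, 3, 4, 50, 60), 0.01),
    "light bulbs":     (z_one(985, 1000, 50, 100), 0.05),
    "chip resistance": (z_two(100, 98, 5, 4, 50, 50), 0.01),
    "product weight":  (z_one(498, 500, 8, 64), 0.01),
}

for name, (z, alpha) in checks.items():
    crit = stats.norm.ppf(1 - alpha / 2)  # two-tailed critical value
    decision = "reject H0" if abs(z) > crit else "fail to reject H0"
    print(f"{name}: z = {z:.2f}, critical = ±{crit:.3f} -> {decision}")
```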

Practice Problems

1. A cereal company claims that their boxes contain an average of 350 grams of cereal. A consumer group tests 100 boxes and finds a mean weight of 345 grams with a known population standard deviation of 15 grams. At a 5% significance level, is there evidence to refute the company's claim?

2. A study compares the effect of two different diets on cholesterol levels. Diet A is tested on 50 people, resulting in a mean reduction of 25 mg/dL with a standard deviation of 8 mg/dL. Diet B is tested on 60 people, resulting in a mean reduction of 22 mg/dL with a standard deviation of 7 mg/dL. At a 1% significance level, is there a significant difference between the two diets?

3. A politician claims that 60% of voters in her district support her re-election. In a random sample of 1000 voters, 570 support her. At a 5% significance level, is there evidence to reject the politician's claim?

4. Two different teaching methods are compared. Method A results in 80 students passing out of 120 students. Method B results in 90 students passing out of 150 students. At a 5% significance level, is there a difference in the effectiveness of the two methods?

5. A company claims that their new energy-saving light bulbs last an average of 10,000 hours. A sample of 64 bulbs has a mean life of 9,800 hours. The population standard deviation is known to be 500 hours. At a 1% significance level, is there evidence to reject the company's claim?

6. The mean salary of employees in a large corporation is said to be $75,000 per year. A union representative suspects this is too high and surveys 100 randomly selected employees, finding a mean salary of $72,500. The population standard deviation is known to be $8,000. At a 5% significance level, is there evidence to support the union representative's suspicion?

7. Two factories produce computer chips. Factory A's chips have a mean processing speed of 3.2 GHz with a standard deviation of 0.2 GHz. Factory B's chips have a mean processing speed of 3.3 GHz with a standard deviation of 0.25 GHz. Samples of 100 chips from each factory are tested. At a 5% significance level, is there a difference in mean processing speed between the two factories?

8. A new vaccine is claimed to be 90% effective. In a clinical trial with 500 participants, 440 develop immunity. At a 1% significance level, is there evidence to reject the claim about the vaccine's effectiveness?

9. Two different advertising campaigns are tested. Campaign A results in 250 sales out of 2000 views. Campaign B results in 300 sales out of 2500 views. At a 5% significance level, is there a difference in the effectiveness of the two campaigns?

10. A quality control manager claims that the defect rate in a production line is 5%. In a sample of 1000 items, 65 are found to be defective. At a 5% significance level, is there evidence to suggest that the actual defect rate is different from the claimed 5%?

Type I error and Type II error

- Type I error: A Type I error occurs when we reject the null hypothesis even though it is true. The probability of making this error is denoted by alpha (α).

- Type II error: A Type II error occurs when we fail to reject the null hypothesis even though it is false. The probability of making this error is denoted by beta (β).

| | Null Hypothesis is TRUE | Null Hypothesis is FALSE |
|---|---|---|
| Reject the Null Hypothesis | Type I Error (False Positive) | Correct decision |
| Fail to Reject the Null Hypothesis | Correct decision | Type II Error (False Negative) |
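To make α concrete, here is a minimal Python simulation, assuming Python 3.8+ for `statistics.fmean` and `statistics.NormalDist`. It repeatedly samples from a population in which H₀ is true and counts how often a two-tailed z test at α = 0.05 rejects H₀. The population values (μ₀ = 100, σ = 15, n = 30), the trial count, the seed, and the function name `type_i_error_rate` are hypothetical choices for illustration.

```python
import math
import random
from statistics import NormalDist, fmean


def type_i_error_rate(mu0=100.0, sigma=15.0, n=30, alpha=0.05, trials=20_000, seed=1):
    """Estimate how often a two-tailed z test rejects a TRUE null hypothesis."""
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    rejections = 0
    for _ in range(trials):
        # Draw a sample from a population where H0 (mu = mu0) really holds.
        sample = [rng.gauss(mu0, sigma) for _ in range(n)]
        z = (fmean(sample) - mu0) / (sigma / math.sqrt(n))
        if abs(z) > z_crit:
            rejections += 1  # every rejection here is a Type I error (false positive)
    return rejections / trials


rate = type_i_error_rate()
print(f"Estimated Type I error rate: {rate:.4f} (alpha = 0.05)")
```

Because H₀ is true in every simulated sample, the long-run rejection rate estimates the Type I error rate and should sit close to the chosen α.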

Z-tests are statistical tools used to determine whether there is a significant difference between a sample statistic and a population parameter, or between two population parameters. They’re applicable when dealing with large sample sizes (typically n > 30) and known population standard deviations. Z-tests can be used for analyzing means or proportions in both one-sample and two-sample scenarios. The process involves stating the hypotheses, calculating a Z-score, comparing it to a critical value based on the chosen significance level (often 5% or 1%), and then deciding whether to reject or fail to reject the null hypothesis.

What is the main limitation of the z-test?

The main limitation of Z-tests is that we usually don’t know the population standard deviation. When we don’t know the population’s variability, we assume that the sample’s variability is a good basis for estimating it.

What is the minimum sample for z-test?

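As a common rule of thumb, a z-test is used when the population standard deviation is known and the sample size is 30 data points or larger; otherwise, a t-test should be employed. (If the data are normally distributed and the population standard deviation is known, the z-test is exact for any sample size.)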

What is the application of z-test?

A z-test is used to determine whether a sample mean differs significantly from a hypothesized population mean, and whether there is a significant difference between the means of two independent samples. The z-test can also be used to compare a sample proportion with an assumed population proportion, or to test the difference between the population proportions of two samples.

What is the theory of the z-test?

The z test is a commonly used hypothesis test in inferential statistics. It compares the means of two populations using samples drawn from them, or compares the mean of one population with a hypothesized value, when the variable of interest is continuous.


A z test is a statistical test conducted on data that approximately follow a normal distribution. The z test can be performed on one sample, two samples, or proportions for hypothesis testing. It checks whether the means of two large samples differ when the population variance is known.

A z test can further be classified as left-tailed, right-tailed, or two-tailed, depending on the alternative hypothesis. In this article, we will learn more about the z test, its formula, the z test statistic, and how to perform the test for different types of data using examples.


What is Z Test?

A z test is used to check whether the means of two populations differ, provided the data follow a normal distribution. For this purpose, the null and alternative hypotheses must be set up and the value of the z test statistic calculated. The decision criterion is based on the z critical value.

Z Test Definition

A z test is conducted on a sample of independent data points from a normally distributed population, with a sample size greater than or equal to 30. It is used to check whether the means of two populations are equal when the population variance is known. The null hypothesis of a z test is rejected if the z test statistic falls beyond the critical value, i.e., in the rejection region.

Z Test Formula

The z test formula gives the z statistic, which is compared with the z critical value to test whether there is a difference in the means of two populations. In hypothesis testing, the z critical value divides the distribution graph into the acceptance and rejection regions. If the test statistic falls in the rejection region, the null hypothesis can be rejected; otherwise, it cannot be rejected. The z test formulas for setting up one-sample and two-sample z tests are given below.

One-Sample Z Test

A one-sample z test is used to check if there is a difference between the sample mean and the population mean when the population standard deviation is known. The formula for the z test statistic is given as follows:

z = \(\frac{\overline{x}-\mu}{\frac{\sigma}{\sqrt{n}}}\). \(\overline{x}\) is the sample mean, \(\mu\) is the population mean, \(\sigma\) is the population standard deviation and n is the sample size.

The algorithm to set up a one-sample z test based on the z test statistic is as follows:

Left Tailed Test:

Null Hypothesis: \(H_{0}\) : \(\mu = \mu_{0}\)

Alternate Hypothesis: \(H_{1}\) : \(\mu < \mu_{0}\)

Decision Criteria: If the z statistic < z critical value then reject the null hypothesis.

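Right Tailed Test:

Null Hypothesis: \(H_{0}\) : \(\mu = \mu_{0}\)

Alternate Hypothesis: \(H_{1}\) : \(\mu > \mu_{0}\)

Decision Criteria: If the z statistic > z critical value then reject the null hypothesis.

Two Tailed Test:

Null Hypothesis: \(H_{0}\) : \(\mu = \mu_{0}\)

Alternate Hypothesis: \(H_{1}\) : \(\mu \neq \mu_{0}\)

Decision Criteria: If the absolute value of the z statistic > z critical value (found at \(\alpha/2\)) then reject the null hypothesis.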

Two Sample Z Test

A two sample z test is used to check if there is a difference between the means of two populations, based on a sample from each. The z test statistic formula is given as follows:

z = \(\frac{(\overline{x_{1}}-\overline{x_{2}})-(\mu_{1}-\mu_{2})}{\sqrt{\frac{\sigma_{1}^{2}}{n_{1}}+\frac{\sigma_{2}^{2}}{n_{2}}}}\). \(\overline{x_{1}}\), \(\mu_{1}\), \(\sigma_{1}^{2}\) are the sample mean, population mean and population variance respectively for the first sample. \(\overline{x_{2}}\), \(\mu_{2}\), \(\sigma_{2}^{2}\) are the sample mean, population mean and population variance respectively for the second sample.

The two-sample z test can be set up in the same way as the one-sample test. However, this test will be used to compare the means of the two samples. For example, the null hypothesis is given as \(H_{0}\) : \(\mu_{1} = \mu_{2}\).

Z Test for Proportions

A z test for proportions is used to check the difference in proportions. A z test can either be used for one proportion or two proportions. The formulas are given as follows.

One Proportion Z Test

A one proportion z test is used when the outcome has two categories (e.g., success and failure) and compares the value of an observed proportion to a theoretical one. The z test statistic for a one proportion z test is given as follows:

z = \(\frac{p-p_{0}}{\sqrt{\frac{p_{0}(1-p_{0})}{n}}}\). Here, p is the observed value of the proportion, \(p_{0}\) is the theoretical proportion value and n is the sample size.

The null hypothesis is that the population proportion equals the theoretical value \(p_{0}\), while the alternative hypothesis is that it does not.
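A minimal Python sketch of the one proportion z statistic, assuming Python 3.8+ for `statistics.NormalDist`; the function name `one_proportion_z` is hypothetical. As in the formula above, the standard error uses the hypothesized proportion \(p_{0}\). The inputs reuse the coin-toss example that appears later in this document (20 tails in 50 tosses, \(p_{0}\) = 0.5).

```python
import math
from statistics import NormalDist


def one_proportion_z(successes, n, p0):
    """z statistic for H0: p = p0, with the null proportion in the standard error."""
    p_hat = successes / n
    se = math.sqrt(p0 * (1 - p0) / n)
    return (p_hat - p0) / se


z = one_proportion_z(successes=20, n=50, p0=0.5)
# Two-tailed p-value: probability of a |Z| at least this large under H0.
p_two_tailed = 2 * NormalDist().cdf(-abs(z))
print(f"z = {z:.4f}, two-tailed p-value = {p_two_tailed:.4f}")
```

With a p-value of about 0.157, this sample gives no evidence against \(p_{0}\) = 0.5 at the usual significance levels.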

Two Proportion Z Test

A two proportion z test is conducted on two proportions to check if they are the same or not. The test statistic formula is given as follows:

z =\(\frac{p_{1}-p_{2}-0}{\sqrt{p(1-p)\left ( \frac{1}{n_{1}} +\frac{1}{n_{2}}\right )}}\)

where p = \(\frac{x_{1}+x_{2}}{n_{1}+n_{2}}\)

\(p_{1} = x_{1}/n_{1}\) is the proportion of sample 1, where \(x_{1}\) is the number of successes in a sample of size \(n_{1}\).

\(p_{2} = x_{2}/n_{2}\) is the proportion of sample 2, where \(x_{2}\) is the number of successes in a sample of size \(n_{2}\).

How to Calculate Z Test Statistic?

The most important step in calculating the z test statistic is to interpret the problem correctly. It is necessary to determine which tail the test uses and which type of z test applies. Suppose a teacher claims that his section's students will score higher than the students in his colleague's section. The mean score is 22.1 for 60 students belonging to his section with a standard deviation of 4.8. For his colleague's section, the mean score is 18.8 for 40 students and the standard deviation is 8.1. Test his claim at \(\alpha\) = 0.05. The steps to calculate the z test statistic are as follows:

- Identify the type of test. In this example, the means of two populations have to be compared in one direction; thus, the test is a right-tailed two-sample z test.

- Set up the hypotheses. \(H_{0}\): \(\mu_{1} = \mu_{2}\), \(H_{1}\): \(\mu_{1} > \mu_{2}\).

- Find the critical value at the given alpha level using the z table. The critical value is 1.645.

- Determine the z test statistic using the appropriate formula. This is given by z = \(\frac{(\overline{x_{1}}-\overline{x_{2}})-(\mu_{1}-\mu_{2})}{\sqrt{\frac{\sigma_{1}^{2}}{n_{1}}+\frac{\sigma_{2}^{2}}{n_{2}}}}\). Substitute values in this equation. \(\overline{x_{1}}\) = 22.1, \(\sigma_{1}\) = 4.8, \(n_{1}\) = 60, \(\overline{x_{2}}\) = 18.8, \(\sigma_{2}\) = 8.1, \(n_{2}\) = 40 and \(\mu_{1} - \mu_{2} = 0\). Thus, z = 2.32

- Compare the critical value and test statistic to arrive at a conclusion. As 2.32 > 1.645, the null hypothesis can be rejected. It can be concluded that there is enough evidence to support the teacher's claim that the students in his class score better.
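The teacher example can be checked with a short Python sketch of the two-sample z statistic, assuming Python 3.8+ for `statistics.NormalDist`. The function name `two_sample_z` and its `delta0` parameter (the hypothesized \(\mu_{1} - \mu_{2}\), zero here) are hypothetical names; the given standard deviations are treated as known population values, as in the worked steps.

```python
import math
from statistics import NormalDist


def two_sample_z(x1, sigma1, n1, x2, sigma2, n2, delta0=0.0):
    """Two-sample z statistic with known population standard deviations."""
    se = math.sqrt(sigma1**2 / n1 + sigma2**2 / n2)
    return ((x1 - x2) - delta0) / se


z = two_sample_z(x1=22.1, sigma1=4.8, n1=60, x2=18.8, sigma2=8.1, n2=40)
z_crit = NormalDist().inv_cdf(0.95)  # right-tailed critical value at alpha = 0.05
print(f"z = {z:.2f}, critical value = {z_crit:.3f}, reject H0: {z > z_crit}")
```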

Z Test vs T-Test

Both the z test and the t-test are univariate tests used on the means of one or two datasets. The differences between the two tests are outlined in the table below:

| Z Test | T-Test |
|---|---|
| A z test is a statistical test that is used to check if the means of two data sets are different when the population variance is known. | A t-test is used to check if the means of two data sets are different when the population variance is not known. |
| The sample size is greater than or equal to 30. | The sample size is less than 30. |
| The test statistic follows a normal distribution. | The test statistic follows a Student's t distribution. |
| The one-sample z test statistic is given by \(\frac{\overline{x}-\mu}{\frac{\sigma}{\sqrt{n}}}\) | The t test statistic is given as \(\frac{\overline{x}-\mu}{\frac{s}{\sqrt{n}}}\) where s is the sample standard deviation |


Important Notes on Z Test

- A z test is a statistical test conducted on normally distributed data to check if there is a difference in the means of two data sets.

- The sample size should be greater than 30, and the population variance must be known to perform a z test.

- The one-sample z test checks if there is a difference between the sample mean and the population mean.

- The two-sample z test checks if the means of two different groups are equal.

Examples on Z Test

Example 1: A teacher claims that the mean score of students in his class is greater than 82 with a standard deviation of 20. If a sample of 81 students was selected with a mean score of 90 then check if there is enough evidence to support this claim at a 0.05 significance level.

Solution: As the sample size is 81 and population standard deviation is known, this is an example of a right-tailed one-sample z test.

\(H_{0}\) : \(\mu = 82\)

\(H_{1}\) : \(\mu > 82\)

From the z table, the critical value at \(\alpha\) = 0.05 is 1.645.

z = \(\frac{\overline{x}-\mu}{\frac{\sigma}{\sqrt{n}}}\)

\(\overline{x}\) = 90, \(\mu\) = 82, n = 81, \(\sigma\) = 20

z = \(\frac{90-82}{\frac{20}{\sqrt{81}}}\) = 3.6

As 3.6 > 1.645, the null hypothesis is rejected, and it is concluded that there is enough evidence to support the teacher's claim.

Answer: Reject the null hypothesis

Example 2: An online medicine shop claims that the mean delivery time for medicines is less than 120 minutes with a standard deviation of 30 minutes. Is there enough evidence to support this claim at a 0.05 significance level if 49 orders were examined with a mean of 100 minutes?

Solution: As the sample size is 49 and population standard deviation is known, this is an example of a left-tailed one-sample z test.

\(H_{0}\) : \(\mu = 120\)

\(H_{1}\) : \(\mu < 120\)

From the z table, the critical value at \(\alpha\) = 0.05 is -1.645. A negative sign is used as this is a left-tailed test.

\(\overline{x}\) = 100, \(\mu\) = 120, n = 49, \(\sigma\) = 30

z = \(\frac{100-120}{\frac{30}{\sqrt{49}}}\) = -4.67

As -4.67 < -1.645, the null hypothesis is rejected, and it is concluded that there is enough evidence to support the medicine shop's claim.

Example 3: A company wants to improve the quality of products by reducing defects and monitoring the efficiency of assembly lines. In assembly line A, there were 18 defects reported out of 200 samples while in line B, 25 defects out of 600 samples were noted. Is there a difference in the procedures at a 0.05 alpha level?

Solution: This is an example of a two-tailed two proportion z test.

\(H_{0}\): The two proportions are the same.

\(H_{1}\): The two proportions are not the same.

As this is a two-tailed test, the alpha level needs to be divided by 2 to get 0.025.

Using this, the critical value from the z table is 1.96.

\(n_{1}\) = 200, \(n_{2}\) = 600

\(p_{1}\) = 18 / 200 = 0.09

\(p_{2}\) = 25 / 600 = 0.0417

p = (18 + 25) / (200 + 600) = 0.0538

z =\(\frac{p_{1}-p_{2}-0}{\sqrt{p(1-p)\left ( \frac{1}{n_{1}} +\frac{1}{n_{2}}\right )}}\) = 2.62

As 2.62 > 1.96, the null hypothesis is rejected, and it is concluded that there is a significant difference between the two lines.
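Example 3 can be verified with a minimal Python sketch of the pooled two proportion z statistic, assuming Python 3.8+ for `statistics.NormalDist`; the function name `two_proportion_z` is hypothetical. Working from the raw counts avoids the rounding of \(p_{2}\) and p in the hand calculation.

```python
import math
from statistics import NormalDist


def two_proportion_z(x1, n1, x2, n2):
    """Pooled two-proportion z statistic for H0: p1 = p2."""
    p1, p2 = x1 / n1, x2 / n2
    p_pool = (x1 + x2) / (n1 + n2)  # pooled proportion, valid under H0
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n1 + 1 / n2))
    return (p1 - p2) / se


z = two_proportion_z(x1=18, n1=200, x2=25, n2=600)
z_crit = NormalDist().inv_cdf(1 - 0.05 / 2)  # two-tailed, alpha = 0.05
print(f"z = {z:.2f}, critical value = ±{z_crit:.2f}, reject H0: {abs(z) > z_crit}")
```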


FAQs on Z Test

What is a z test in statistics?

A z test in statistics is conducted on data that is normally distributed to test if the means of two datasets are equal. It can be performed when the sample size is greater than 30 and the population variance is known.

What is a One-Sample Z Test?

A one-sample z test is used when the population standard deviation is known, to compare the sample mean and the population mean. The z test statistic is given by the formula \(\frac{\overline{x}-\mu}{\frac{\sigma}{\sqrt{n}}}\).

What is the Two-Sample Z Test Formula?

The two sample z test is used when the means of two populations have to be compared. The z test formula is given as \(\frac{(\overline{x_{1}}-\overline{x_{2}})-(\mu_{1}-\mu_{2})}{\sqrt{\frac{\sigma_{1}^{2}}{n_{1}}+\frac{\sigma_{2}^{2}}{n_{2}}}}\).

What is a One Proportion Z test?

A one proportion z test is used to check if the value of the observed proportion is different from the value of the theoretical proportion. The z statistic is given by \(\frac{p-p_{0}}{\sqrt{\frac{p_{0}(1-p_{0})}{n}}}\).

What is a Two Proportion Z Test?

When the proportions of two samples have to be compared then the two proportion z test is used. The formula is given by \(\frac{p_{1}-p_{2}-0}{\sqrt{p(1-p)\left ( \frac{1}{n_{1}} +\frac{1}{n_{2}}\right )}}\).

How Do You Find the Z Test?

The steps to perform the z test are as follows:

- Set up the null and alternative hypotheses.

- Find the critical value using the alpha level and z table.

- Calculate the z statistic.

- Compare the critical value and the test statistic to decide whether to reject or not to reject the null hypothesis.

What is the Difference Between the Z Test and the T-Test?

A z test is used on large samples (n ≥ 30) of normally distributed data, while a t-test is used on small samples (n < 30), where the test statistic follows a Student's t distribution. Both tests are used to check if the means of two datasets are the same.

Z-test Calculator


This Z-test calculator is a tool that helps you perform a one-sample Z-test on the population's mean. Two forms of this test exist, a two-tailed Z-test and a one-tailed Z-test, and you can use either depending on your needs. You can also choose whether the calculator should determine the p-value of the Z-test or use the critical value approach!

Read on to learn more about the Z-test in statistics, in particular when to use Z-tests, what the Z-test formula is, and whether to use a Z-test or a t-test. As a bonus, we give some step-by-step examples of how to perform Z-tests!


What is a Z-test?

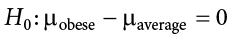

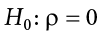

A one sample Z-test is one of the most popular location tests. The null hypothesis is that the population mean value is equal to a given number, \(\mu_0\):

\(\mathrm H_0: \mu = \mu_0\)

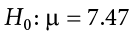

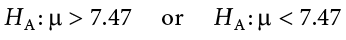

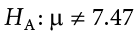

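We perform a two-tailed Z-test if we want to test whether the population mean differs from \(\mu_0\), i.e., \(\mathrm H_1: \mu \neq \mu_0\), and a one-tailed Z-test if we want to test whether the population mean is less than or greater than \(\mu_0\), i.e., \(\mathrm H_1: \mu < \mu_0\) or \(\mathrm H_1: \mu > \mu_0\).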

Let us now discuss the assumptions of a one-sample Z-test.

When do I use Z-tests?

You may use a Z-test if your sample consists of independent data points and:

- the data are normally distributed, and you know the population variance; or

- the sample is large, and the data follow a distribution that has a finite mean and variance. You don't need to know the population variance.

The reason these two possibilities exist is that we want the test statistic to follow the standard normal distribution \(\mathrm N(0, 1)\). In the former case, it is exactly standard normal, while in the latter it is approximately so, thanks to the central limit theorem.

The question remains, "When is my sample considered large?" Well, there's no universal criterion. In general, the more data points you have, the better the approximation works. Statistics textbooks recommend having no fewer than 50 data points, while 30 is considered the bare minimum.

Z-test formula

Let \(x_1, \ldots, x_n\) be an independent sample following the normal distribution \(\mathrm N(\mu, \sigma^2)\), i.e., with a mean equal to \(\mu\) and variance equal to \(\sigma^2\).

We pose the null hypothesis, \(\mathrm H_0: \mu = \mu_0\).

We define the test statistic, \(Z\), as:

\(Z = \frac{\overline{x} - \mu_0}{\sigma}\sqrt{n}\)

where:

- \(\overline{x}\) is the sample mean, i.e., \(\overline{x} = (x_1 + \ldots + x_n)/n\);

- \(\mu_0\) is the mean postulated in \(\mathrm H_0\);

- \(n\) is the sample size; and

- \(\sigma\) is the population standard deviation.

In what follows, the uppercase \(Z\) stands for the test statistic (treated as a random variable), while the lowercase \(z\) denotes an actual value of \(Z\), computed for a given sample drawn from \(\mathrm N(\mu, \sigma^2)\).

If \(\mathrm H_0\) holds, then the sum \(S_n = x_1 + \ldots + x_n\) follows the normal distribution with mean \(n\mu_0\) and variance \(n\sigma^2\). As \(Z\) is the standardization (z-score) of \(S_n/n\), we can conclude that the test statistic \(Z\) follows the standard normal distribution \(\mathrm N(0, 1)\), provided that \(\mathrm H_0\) is true.

If our data do not follow a normal distribution, or if the population standard deviation is unknown (and thus in the formula for \(Z\) we substitute the sample standard deviation for the population standard deviation \(\sigma\)), then the test statistic \(Z\) is not necessarily normal. However, if the sample is sufficiently large, the central limit theorem guarantees that \(Z\) is approximately \(\mathrm N(0, 1)\).

In the sections below, we explain how to use the value of the test statistic, \(z\), to decide whether or not to reject the null hypothesis. Two approaches can be used to arrive at that decision: the p-value approach and the critical value approach, and we cover both of them! Which one should you use? In the past, the critical value approach was more popular because the p-value of a Z-test was difficult to calculate. However, with the help of modern computers, we can compute it easily and with decent precision. In general, you are strongly advised to report the p-value of your tests!

p-value from Z-test

Formally, the p-value is the smallest level of significance at which the null hypothesis could be rejected. More intuitively, the p-value answers the question: provided that I live in a world where the null hypothesis holds, how probable is it that the value of the test statistic will be at least as extreme as the \(z\)-value I've got for my sample? Hence, a small p-value means that your result is very improbable under the null hypothesis, and so there is strong evidence against the null hypothesis; the smaller the p-value, the stronger the evidence.

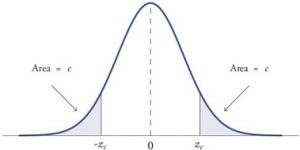

To find the p-value, you have to calculate the probability that the test statistic, \(Z\), is at least as extreme as the value we've actually observed, \(z\), provided that the null hypothesis is true. (The probability of an event calculated under the assumption that \(\mathrm H_0\) is true will be denoted as \(\Pr(\text{event} \mid \mathrm H_0)\).) It is the alternative hypothesis that determines what "more extreme" means:

- Two-tailed Z-test: extreme values are those whose absolute value exceeds \(|z|\), so those smaller than \(-|z|\) or greater than \(|z|\). Therefore, we have:

  \(\text{p-value} = \Pr(Z \le -|z| \mid \mathrm H_0) + \Pr(Z \ge |z| \mid \mathrm H_0)\)

  The symmetry of the normal distribution gives:

  \(\text{p-value} = 2\Pr(Z \ge |z| \mid \mathrm H_0)\)

- Left-tailed Z-test: extreme values are those smaller than \(z\), so

  \(\text{p-value} = \Pr(Z \le z \mid \mathrm H_0)\)

- Right-tailed Z-test: extreme values are those greater than \(z\), so

  \(\text{p-value} = \Pr(Z \ge z \mid \mathrm H_0)\)

To compute these probabilities, we can use the cumulative distribution function (cdf) of \(\mathrm N(0, 1)\), which for a real number \(x\) is defined as:

\(\Phi(x) = \Pr(Z \le x) = \frac{1}{\sqrt{2\pi}}\int_{-\infty}^{x} e^{-t^2/2}\,dt\)

Also, p-values can be nicely depicted as areas under the probability density function (pdf) of \(\mathrm N(0, 1)\), \(\varphi(t) = \frac{1}{\sqrt{2\pi}}e^{-t^2/2}\), because \(\Phi(x)\) is exactly the area under \(\varphi\) to the left of \(x\):

\(\Phi(x) = \int_{-\infty}^{x} \varphi(t)\,dt\)

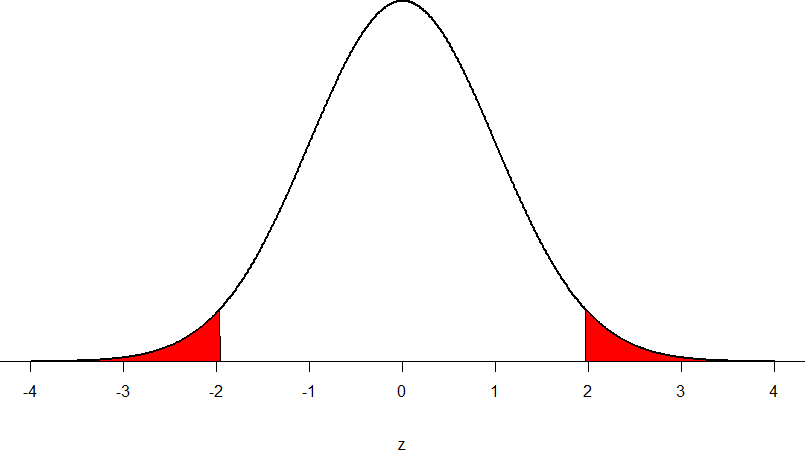

Two-tailed Z-test and one-tailed Z-test

With all the knowledge you've got from the previous section, you're ready to learn about Z-tests.

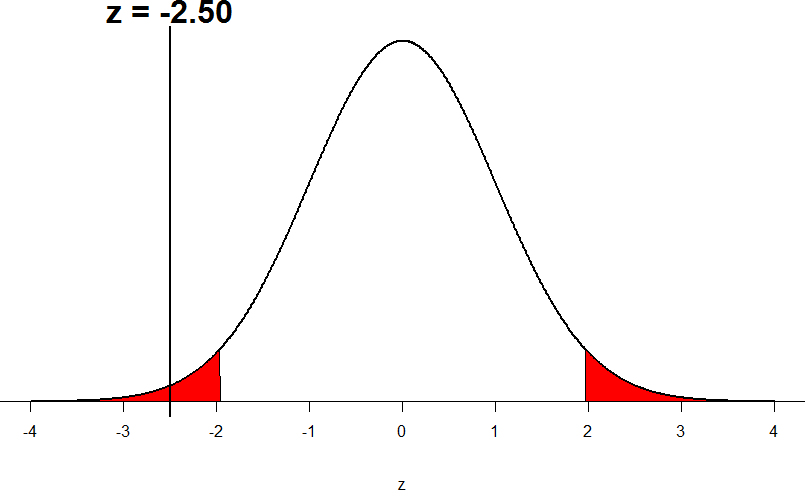

- Two-tailed Z-test:

From the fact that Φ ( − z ) = 1 − Φ ( z ) \Phi(-z) = 1 - \Phi(z) Φ ( − z ) = 1 − Φ ( z ) , we deduce that

The p-value is the area under the probability distribution function (pdf) both to the left of − ∣ z ∣ -|z| − ∣ z ∣ , and to the right of ∣ z ∣ |z| ∣ z ∣ :

- Left-tailed Z-test:

The p-value is the area under the pdf to the left of our z z z :

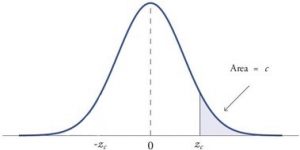

- Right-tailed Z-test:

The p-value is the area under the pdf to the right of z z z :

The decision as to whether or not you should reject the null hypothesis can now be made at any significance level, \(\alpha\), you desire:

- if the p-value is less than or equal to \(\alpha\), the null hypothesis is rejected at this significance level; and

- if the p-value is greater than \(\alpha\), then there is not enough evidence to reject the null hypothesis at this significance level.
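The three p-value formulas and the decision rule can be sketched in Python as follows, assuming Python 3.8+ for `statistics.NormalDist`. The function name `z_test_p_value` and the labels `"two-sided"`, `"less"` and `"greater"` are hypothetical choices for this sketch. It evaluates the tails as \(\Phi(-|z|)\) and \(\Phi(-z)\), which equal the formulas above by symmetry but avoid subtracting nearly equal numbers when \(|z|\) is large.

```python
from statistics import NormalDist

PHI = NormalDist().cdf  # cumulative distribution function of N(0, 1)


def z_test_p_value(z, alternative="two-sided"):
    """Return the p-value of an observed z statistic.

    alternative: "two-sided", "less" (left-tailed) or "greater" (right-tailed).
    """
    if alternative == "two-sided":
        return 2 * PHI(-abs(z))  # equals 2 - 2*PHI(|z|)
    if alternative == "less":
        return PHI(z)
    if alternative == "greater":
        return PHI(-z)  # equals 1 - PHI(z)
    raise ValueError("alternative must be 'two-sided', 'less' or 'greater'")


alpha = 0.05
for alt in ("two-sided", "less", "greater"):
    p = z_test_p_value(-2.0, alt)
    print(f"{alt:>9}: p-value = {p:.4f}, reject H0 at alpha = {alpha}: {p <= alpha}")
```

The same observed \(z = -2\) is significant at 0.05 for the two-tailed and left-tailed alternatives, but not for the right-tailed one.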

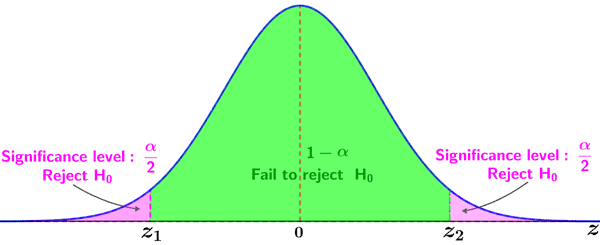

Z-test critical values & critical regions

The critical value approach involves comparing the value of the test statistic obtained for our sample, \(z\), to the so-called critical values. These values constitute the boundaries of regions where the test statistic is highly improbable to lie. Those regions are often referred to as the critical regions, or rejection regions. The decision of whether or not you should reject the null hypothesis is then based on whether or not our \(z\) belongs to the critical region.

The critical regions depend on the significance level, \(\alpha\), of the test, and on the alternative hypothesis. The choice of \(\alpha\) is arbitrary; in practice, the values 0.1, 0.05, or 0.01 are most commonly used.

Once we agree on the value of \(\alpha\), we can easily determine the critical regions of the Z-test:

- Two-tailed Z-test: \((-\infty, -\Phi^{-1}(1 - \alpha/2)] \cup [\Phi^{-1}(1 - \alpha/2), \infty)\)

- Left-tailed Z-test: \((-\infty, \Phi^{-1}(\alpha)]\)

- Right-tailed Z-test: \([\Phi^{-1}(1 - \alpha), \infty)\)

To decide the fate of \(\mathrm H_0\), check whether or not your \(z\) falls in the critical region:

- If yes, then reject \(\mathrm H_0\) and accept \(\mathrm H_1\); and

- If no, then there is not enough evidence to reject \(\mathrm H_0\).

As you can see, the formulae for the critical values of Z-tests involve the inverse, \(\Phi^{-1}\), of the cumulative distribution function (cdf) of \(\mathrm N(0, 1)\).
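In Python, \(\Phi^{-1}\) is available as `NormalDist().inv_cdf` (Python 3.8+), so the critical regions can be checked directly. The following is a minimal sketch; the function name `in_critical_region` and the alternative labels are hypothetical.

```python
from statistics import NormalDist

PHI_INV = NormalDist().inv_cdf  # inverse cdf (quantile function) of N(0, 1)


def in_critical_region(z, alpha, alternative="two-sided"):
    """Return True if the observed z lies in the Z-test's critical (rejection) region."""
    if alternative == "two-sided":
        return abs(z) >= PHI_INV(1 - alpha / 2)
    if alternative == "less":
        return z <= PHI_INV(alpha)  # PHI_INV(alpha) is negative for alpha < 0.5
    if alternative == "greater":
        return z >= PHI_INV(1 - alpha)
    raise ValueError("alternative must be 'two-sided', 'less' or 'greater'")


# Critical values for the commonly used significance levels.
for alpha in (0.1, 0.05, 0.01):
    print(f"alpha = {alpha}: two-tailed ±{PHI_INV(1 - alpha / 2):.3f}, "
          f"one-tailed {PHI_INV(1 - alpha):.3f}")
```

The printed values are the familiar table entries (for example, ±1.960 two-tailed and 1.645 one-tailed at α = 0.05).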

How to use the one-sample Z-test calculator?

Our calculator reduces all the complicated steps:

1. Choose the alternative hypothesis: two-tailed or left/right-tailed.

2. In our Z-test calculator, you can decide whether to use the p-value or critical regions approach. In the latter case, set the significance level, \(\alpha\).

3. Enter the value of the test statistic, \(z\). If you don't know it, then you can enter some data that will allow us to calculate your \(z\) for you:

   - sample mean \(\overline{x}\) (if you have raw data, compute their average first);

   - tested mean \(\mu_0\);

   - sample size \(n\); and

   - population standard deviation \(\sigma\) (or sample standard deviation if your sample is large).

Results appear immediately below the calculator.

If you want to find \(z\) based on the p-value, please remember that for two-tailed tests there are two possible values of \(z\): one positive and one negative, and they are opposite numbers. This Z-test calculator returns the positive value in such a case. To find the other possible value of \(z\) for a given p-value, just take the opposite of the value of \(z\) displayed by the calculator.
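Going from a p-value back to \(z\) inverts the p-value formulas with \(\Phi^{-1}\). Here is a minimal Python sketch, assuming Python 3.8+ for `statistics.NormalDist`; the function name `z_from_p_value` and the alternative labels are hypothetical. Like the calculator described above, it returns the positive solution for two-tailed tests.

```python
from statistics import NormalDist

PHI_INV = NormalDist().inv_cdf  # inverse cdf of N(0, 1)


def z_from_p_value(p, alternative="two-sided"):
    """Return z for a given p-value; for two-sided tests, the positive solution."""
    if alternative == "two-sided":
        return PHI_INV(1 - p / 2)  # the other solution is the negative of this value
    if alternative == "less":
        return PHI_INV(p)
    if alternative == "greater":
        return PHI_INV(1 - p)
    raise ValueError("alternative must be 'two-sided', 'less' or 'greater'")


z = z_from_p_value(0.05)
print(f"two-sided p = 0.05 -> z = ±{z:.3f}")
```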

Z-test examples

To make sure that you've fully understood the essence of Z-test, let's go through some examples:

- The volume poured by a bottle-filling machine follows a normal distribution. Its standard deviation, as declared by the manufacturer, is equal to 30 ml. A juice seller claims that the volume poured into each bottle is, on average, one liter, i.e., 1000 ml, but we suspect that in fact the average volume is smaller than that...

Formally, the hypotheses that we set are the following:

\(\mathrm H_0: \mu = 1000 \text{ ml}\)

\(\mathrm H_1: \mu < 1000 \text{ ml}\)

We went to a shop and bought a sample of 9 bottles. After carefully measuring the volume of juice in each bottle, we've obtained the following sample (in milliliters):

1020, 970, 1000, 980, 1010, 930, 950, 980, 980.

Sample size: \(n = 9\);

Sample mean: \(\overline{x} = 980 \text{ ml}\);

Population standard deviation: \(\sigma = 30 \text{ ml}\);

Test statistic: \(z = \frac{980 - 1000}{30}\sqrt{9} = -2\);

And, therefore, \(\text{p-value} = \Phi(-2) \approx 0.0228\).

As \(0.0228 < 0.05\), we conclude that our suspicions aren't groundless: at the most common significance level, 0.05, we would reject the producer's claim, \(\mathrm H_0\), and accept the alternative hypothesis, \(\mathrm H_1\).
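The bottle example can be reproduced from the raw measurements with a short Python sketch, assuming Python 3.8+ for `statistics.fmean` and `statistics.NormalDist`. It follows the recipe given later in this document: take the sample mean, subtract the hypothesized mean, multiply by \(\sqrt{n}\), and divide by \(\sigma\). The variable names are illustrative.

```python
import math
from statistics import NormalDist, fmean

volumes_ml = [1020, 970, 1000, 980, 1010, 930, 950, 980, 980]
mu0 = 1000   # mean volume claimed under H0 (ml)
sigma = 30   # population standard deviation declared by the manufacturer (ml)

n = len(volumes_ml)
x_bar = fmean(volumes_ml)                  # sample mean
z = (x_bar - mu0) * math.sqrt(n) / sigma   # subtract mu0, scale by sqrt(n), divide by sigma
p_value = NormalDist().cdf(z)              # left-tailed test: Pr(Z <= z | H0)

print(f"n = {n}, mean = {x_bar}, z = {z}, p-value = {p_value:.4f}")
```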

- We tossed a coin 50 times. We got 20 tails and 30 heads. Is there sufficient evidence to claim that the coin is biased?

Clearly, our data follow a Bernoulli distribution, with some success probability \(p\) and variance \(\sigma^2 = p(1-p)\). However, the sample is large, so we can safely perform a Z-test. We adopt the convention that getting tails is a success.

Let us state the null and alternative hypotheses:

\(\mathrm H_0: p = 0.5\) (the coin is fair: the probability of tails is 0.5)

\(\mathrm H_1: p \neq 0.5\) (the coin is biased: the probability of tails differs from 0.5)

In our sample we have 20 successes (denoted by ones) and 30 failures (denoted by zeros), so:

Sample size: \(n = 50\);

Sample mean: \(\overline{x} = 20/50 = 0.4\);

Population standard deviation is given by \(\sigma = \sqrt{0.5 \times 0.5}\) (because 0.5 is the proportion \(p\) hypothesized in \(\mathrm H_0\)). Hence, \(\sigma = 0.5\);

And, therefore, \(z = \frac{0.4 - 0.5}{0.5}\sqrt{50} \approx -1.4142\) and \(\text{p-value} = 2\Phi(-1.4142) \approx 0.1573\).

Since \(0.1573 > 0.1\), we don't have enough evidence to reject the claim that the coin is fair, even at such a large significance level as 0.1. In that case, you may safely toss it to your Witcher.

What is the difference between Z-test vs t-test?

We use a t-test for testing the population mean of a normally distributed dataset that has an unknown population standard deviation. We get it by replacing the population standard deviation in the Z-test statistic formula with the sample standard deviation, which means that this new test statistic follows (provided that H₀ holds) the t-Student distribution with n-1 degrees of freedom instead of N(0,1).

When should I use t-test over the Z-test?

For large samples, the t-Student distribution with n degrees of freedom approaches N(0,1). Hence, as long as there is a sufficient number of data points (at least 30), it does not really matter whether you use the Z-test or the t-test, since the results will be almost identical. However, for small samples with unknown variance, remember to use the t-test instead of the Z-test.

How do I calculate the Z test statistic?

To calculate the Z test statistic:

- Compute the arithmetic mean of your sample.

- From this mean, subtract the mean postulated in the null hypothesis.

- Multiply by the square root of the sample size.

- Divide by the population standard deviation.

- That's it, you've just computed the Z test statistic!


Z Test: Definition & Two Proportion Z-Test

What is a Z Test?

A Z test is a type of hypothesis test. For example, if someone said they had found a new drug that cures cancer, you would want to be sure it was probably true. A hypothesis test will tell you if it’s probably true, or probably not true. A Z test is used when your data are approximately normally distributed (i.e., the data have the shape of a bell curve when you graph them).

When you can run a Z test

Several different types of tests are used in statistics (e.g., F test, chi-square test, t test). You would use a Z test if:

- Your sample size is greater than 30. Otherwise, use a t test.

- Data points should be independent of each other. In other words, one data point isn’t related to and doesn’t affect another data point.

- Your data should be normally distributed. However, for large sample sizes (over 30) this doesn’t always matter.

- Your data should be randomly selected from a population, where each item has an equal chance of being selected.

- Sample sizes should be equal if at all possible.

How do I run a Z Test?

Running a Z test on your data requires five steps:

- State the null hypothesis and alternate hypothesis.

- Choose an alpha level.

- Find the critical value of z in a z table.

- Calculate the z test statistic (see below).

- Compare the test statistic to the critical z value and decide whether to reject or fail to reject the null hypothesis.
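The five steps above can be sketched in Python as follows. This assumes a two-tailed test and uses the standard library's `statistics.NormalDist` in place of a printed z table; the function name `run_z_test` and the input values are hypothetical.

```python
from statistics import NormalDist

def run_z_test(sample_mean, mu0, sigma, n, alpha=0.05):
    """Two-tailed one-sample Z test, following the five steps above."""
    # Step 1: H0: mu = mu0 versus H1: mu != mu0.
    # Step 2: alpha is the chosen significance level.
    # Step 3: critical value from the standard normal (in place of a z table).
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    # Step 4: test statistic.
    z = (sample_mean - mu0) / (sigma / n ** 0.5)
    # Step 5: reject H0 if |z| exceeds the critical value.
    reject = abs(z) > z_crit
    return z, z_crit, reject

# Hypothetical inputs: sample mean 106 from n = 36, mu0 = 100, sigma = 15.
z, z_crit, reject = run_z_test(sample_mean=106, mu0=100, sigma=15, n=36)
print(f"z = {z:.2f}, critical value = {z_crit:.2f}, reject H0: {reject}")
```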

You could perform all these steps by hand. For example, you could find a critical value by hand or calculate a z value by hand. For a step-by-step example, watch the following video:

You could also use technology, for example:

- Two sample z test in Excel.

- Find a critical z value on the TI-83.

- Find a critical value on the TI-89 (left-tail).

Two Proportion Z-Test

Watch the video to see a two proportion z-test:


A Two Proportion Z-Test (or Z-interval) allows you to compare the proportions of two independent groups, or to estimate the true difference between them with a confidence interval at a given confidence level.

There are a few familiar conditions that need to be met for the Two Proportion Z-Interval to be valid.

- The groups must be independent. Subjects can be in one group or the other, but not both, like teens and adults.

- The data must be selected randomly and independently from a homogeneous population. A survey is a common example.

- The population should be at least ten times bigger than the sample size. If the population is teenagers, for example, there should be at least ten times as many total teenagers as the number of teenagers being surveyed.

- The null hypothesis (H₀) for the test is that the proportions are the same.

- The alternate hypothesis (H₁) is that the proportions are not the same.

Example question: let’s say you’re testing two flu drugs A and B. Drug A works on 41 people out of a sample of 195. Drug B works on 351 people in a sample of 605. Are the two drugs comparable? Use a 5% alpha level.

Step 1: Find the two proportions:

- p₁ = 41/195 = 0.21 (that’s 21%)

- p₂ = 351/605 = 0.58 (that’s 58%).

Set these numbers aside for a moment.

Step 2: Find the overall sample proportion. The numerator will be the total number of “positive” results for the two samples and the denominator is the total number of people in the two samples.

- p = (41 + 351) / (195 + 605) = 0.49.

Set this number aside for a moment.

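Step 3: Insert the numbers from Steps 1 and 2 into the test statistic formula:

\[Z = \frac{p_1 - p_2}{\sqrt{p(1-p)\left(\frac{1}{n_1} + \frac{1}{n_2}\right)}}\]

Solving the formula, we get Z ≈ -8.99, so |Z| = 8.99. (The negative sign only reflects that p₁ is smaller than p₂.)

Step 4: Find the critical z-score for a two-tailed test at the 5% alpha level, which is 1.96. We need to find out if the z-score falls into the “rejection region.”

Step 5: Compare the calculated z-score from Step 3 with the table z-score from Step 4. If the absolute value of the calculated z-score is larger, you can reject the null hypothesis.

8.99 > 1.96, so we can reject the null hypothesis.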
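The flu drug calculation can be reproduced with a short Python sketch. It uses the pooled proportion for the standard error (as in Step 2) and `statistics.NormalDist` for the two-tailed critical value; the function name `two_proportion_z` is hypothetical. Because it works with unrounded proportions, the statistic comes out negative (drug A's proportion is smaller) with magnitude about 8.99.

```python
import math
from statistics import NormalDist

def two_proportion_z(x1, n1, x2, n2):
    """Two-proportion Z statistic using the pooled proportion under H0."""
    p1, p2 = x1 / n1, x2 / n2
    p_pool = (x1 + x2) / (n1 + n2)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n1 + 1 / n2))
    return (p1 - p2) / se

alpha = 0.05
z = two_proportion_z(41, 195, 351, 605)       # drug A vs drug B
z_crit = NormalDist().inv_cdf(1 - alpha / 2)  # two-tailed critical value
print(f"z = {z:.2f}, |z| = {abs(z):.2f}, critical value = {z_crit:.2f}")
print("Reject H0:", abs(z) > z_crit)
```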

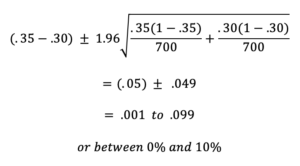

Example 2: Suppose that in a survey of 700 women and 700 men, 35% of women and 30% of men indicated that they support a particular presidential candidate. Let’s say we want to estimate the true difference in proportions between these two groups with a 95% confidence interval.

At first glance, the survey indicates that women support the candidate more than men by about 5 percentage points. However, to make a valid statistical inference about the populations, we need to construct a range of plausible values at a given confidence level.

To do this, we use the formula for the Two Proportion Z-Interval:

\[(p_1 - p_2) \pm z^* \sqrt{\frac{p_1(1-p_1)}{n_1} + \frac{p_2(1-p_2)}{n_2}}\]

Plugging in values (z* = 1.96 for 95% confidence), we find the true difference in proportions to be

\[(0.35 - 0.30) \pm 1.96\sqrt{\frac{0.35 \cdot 0.65}{700} + \frac{0.30 \cdot 0.70}{700}} = 0.05 \pm 1.96 \cdot 0.025 = 0.05 \pm 0.049\]

Based on the results of the survey, we are 95% confident that the difference in proportions of women and men that support the presidential candidate is between about 0% and 10%.
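A minimal Python sketch of the two-proportion Z-interval for the survey example. It assumes the unpooled standard error (each group's proportion contributes its own variance), which is the usual choice for an interval; the function name `two_proportion_interval` is hypothetical.

```python
import math
from statistics import NormalDist

def two_proportion_interval(p1, n1, p2, n2, confidence=0.95):
    """Confidence interval for p1 - p2 using the unpooled standard error."""
    z_star = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    se = math.sqrt(p1 * (1 - p1) / n1 + p2 * (1 - p2) / n2)
    diff = p1 - p2
    return diff - z_star * se, diff + z_star * se

low, high = two_proportion_interval(0.35, 700, 0.30, 700)
print(f"95% CI for the difference: ({low:.3f}, {high:.3f})")
```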


Z-Test for Statistical Hypothesis Testing Explained


The Z-test is a statistical hypothesis test used to determine whether the test statistic we are measuring, like the mean, falls far enough out in the tails of the normal distribution to be statistically significant.

There are multiple types of Z-tests; however, we’ll focus on the simplest and best-known one, the one-sample mean test. It is used to determine whether the difference between the mean of a sample and the mean of a population is statistically significant.

What Is a Z-Test?

A Z-test is a type of statistical hypothesis test where the test statistic follows a normal distribution under the null hypothesis.

The name Z-test comes from the Z-score of the normal distribution. This is a measure of how many standard deviations a raw score or sample statistic is away from the population’s mean.
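A small Python sketch of the Z-score. The numbers are hypothetical; note that for a sample mean, the relevant spread is its standard error σ/√n rather than σ itself.

```python
import math

def z_score(value, mean, sd):
    """Number of standard deviations that `value` lies from `mean`."""
    return (value - mean) / sd

# A raw score: hypothetical IQ of 130 when the mean is 100 and the SD is 15.
print(z_score(130, 100, 15))  # 2.0

# A sample statistic: for a sample mean, divide by the standard error
# sigma / sqrt(n) (hypothetical n = 9, sample mean 105).
print(z_score(105, 100, 15 / math.sqrt(9)))  # 1.0
```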

Z-tests are widely taught and used in fields such as healthcare and data science, so it’s an essential concept to understand.

Requirements for a Z-Test

In order to conduct a Z-test, your data need to meet a few requirements, including:

- A sample size that’s greater than 30. This is because we want to ensure our sample mean comes from a distribution that is normal. As stated by the central limit theorem, the sampling distribution of the mean is approximately normal for large enough samples, provided the population has finite variance; more than 30 data points is a common rule of thumb.

- The standard deviation and mean of the population are known.

- The sample data is collected randomly.


Z-Test Steps

There are four steps to complete a Z-test. Let’s examine each one.

4 Steps to a Z-Test

- State the null hypothesis.

- State the alternate hypothesis.

- Choose your critical value.

- Calculate your Z-test statistic.

1. State the Null Hypothesis

The first step in a Z-test is to state the null hypothesis, H_0. This is what you believe to be true about the population, which could be the mean of the population, μ_0:

\[H_0: \mu = \mu_0\]

2. State the Alternate Hypothesis

Next, state the alternate hypothesis, H_1. This is the claim you are looking for evidence of in your sample. If you suspect the sample comes from a population whose mean differs from μ_0, then the alternate hypothesis is that the mean is not equal to μ_0:

\[H_1: \mu \neq \mu_0\]

3. Choose Your Critical Value

Then, choose your significance level, α, which sets the critical value that determines whether you reject or fail to reject the null hypothesis. Typically for a Z-test we would use a significance level of 5 percent, which for a two-tailed test corresponds to critical values of z = ±1.96 standard deviations from the mean of the standard normal distribution.

This critical value is the same one used to build a 95 percent confidence interval.
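Rather than reading critical values from a table, they can be computed from the inverse CDF of the standard normal. A minimal Python sketch using the standard library's `statistics.NormalDist`, assuming α = 0.05:

```python
from statistics import NormalDist

std_normal = NormalDist()  # mean 0, standard deviation 1
alpha = 0.05

two_tailed = std_normal.inv_cdf(1 - alpha / 2)  # reject if |z| > this
right_tailed = std_normal.inv_cdf(1 - alpha)    # reject if z > this
left_tailed = std_normal.inv_cdf(alpha)         # reject if z < this

print(f"two-tailed: ±{two_tailed:.3f}")
print(f"right-tailed: {right_tailed:.3f}, left-tailed: {left_tailed:.3f}")
```

The one-tailed cutoffs are smaller in magnitude than the two-tailed one because all of α sits in a single tail.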

4. Calculate Your Z-Test Statistic

Compute the Z-test statistic using the sample mean, μ_1, the population mean, μ_0, the number of data points in the sample, n, and the population’s standard deviation, σ:

\[z = \frac{\mu_1 - \mu_0}{\sigma / \sqrt{n}}\]

If the test statistic is greater (or lower, depending on the test we are conducting) than the critical value, then we reject the null hypothesis in favor of the alternate hypothesis, because the sample mean differs from the population mean by a statistically significant amount.

Another way to think about this: if the sample mean is far enough from the population mean, then either the alternate hypothesis is true or the sample is a very unusual one.


Z-Test Example

Let’s go through an example to fully understand the one-sample mean Z-test.

A school says that its pupils are, on average, smarter than pupils at other schools. It takes a sample of 50 students whose average IQ is 110. The population, or the rest of the schools, has an average IQ of 100 and a standard deviation of 20. Is the school’s claim correct?

The null and alternate hypotheses are:

\[H_0: \mu = 100 \qquad H_1: \mu > 100\]

Here, the alternate hypothesis says that the school has a higher mean IQ than the population mean.

Now, this is what’s called a right-sided, one-tailed test, because the school’s claim is that its mean is greater than the population’s mean. With a significance level of 5 percent, the one-tailed critical value is a Z-score of about 1.645 (1.96 is the two-tailed value), so we can only reject the null hypothesis if our Z-test statistic is greater than 1.645.

If the school instead claimed its students’ average IQ was lower than the population’s (say, 90), then we would use a left-tailed test, as shown in the figure above. We would then only reject the null hypothesis if our Z-test statistic is less than -1.645.

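Computing our Z-test statistic, we see:

\[z = \frac{110 - 100}{20/\sqrt{50}} = \frac{10}{2.83} \approx 3.54\]

Since 3.54 is greater than the critical value, we have sufficient evidence to reject the null hypothesis, and the data support the school’s claim.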
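A minimal Python sketch of the school example, using the numbers given in the problem. It computes the right-tailed critical value and p-value from the standard normal with `statistics.NormalDist` instead of a table; at α = 0.05 the one-tailed critical value it produces is about 1.645.

```python
import math
from statistics import NormalDist

sample_mean, mu0, sigma, n = 110, 100, 20, 50  # values from the example
alpha = 0.05

z = (sample_mean - mu0) / (sigma / math.sqrt(n))
z_crit = NormalDist().inv_cdf(1 - alpha)  # right-tailed critical value
p_value = 1 - NormalDist().cdf(z)         # right-tailed p-value

print(f"z = {z:.2f}, critical value = {z_crit:.3f}, p-value = {p_value:.5f}")
print("Reject H0:", z > z_crit)
```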

Hope you enjoyed this article on Z-tests. In this post, we only addressed the simplest case, the one-sample mean test. There are other types of Z-tests, but they all follow the same process with some small nuances.


One-sample Z-test: Hypothesis Testing, Effect Size, and Power

Ke (Kay) Fang.

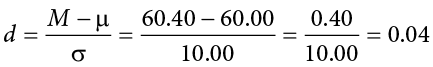

Hey, I’m Kay! This guide provides an introduction to the fundamental concepts of, and relationships between, hypothesis testing, effect size, and power analysis, using the one-sample z-test as a prime example. While the primary goal is to explain the idea behind hypothesis testing, this guide also carefully derives the mathematical details behind the test in the hope that they aid understanding. DISCLAIMER: It’s important to mention that the one-sample z-test is rarely used due to its restrictive assumptions. As such, there are limited resources on the subject, compelling me to derive most of the formulas, particularly those related to power, on my own. This self-reliance might increase the likelihood of errors. If you detect any inaccuracies or inconsistencies, please don’t hesitate to let me know, and I’ll make the necessary updates. Happy learning! ;)

Single sample Z-test

I. The Data Generating Process

In a single sample z-test, our data generating process (DGP) assumes that our observations of a random variable \(X\) are independent and identically distributed (i.i.d.) draws from a distribution with mean \(\mu\) and variance \(\sigma^2\).

Important Notation:

Here we use the capital \(\bar{X}\) to denote the sample mean, treating it as a random variable. Likewise, \(X_i\) refers to each element in a sample, also treated as a random variable.

Later, when we have an actual observed sample, we will use the lower case letter \(x_i\) to denote each observation/realization of the random variable \(X_i\), calculate the observed sample mean \(\bar{x}\), and treat it as a realization of our sample mean \(\bar{X}\).

The sample mean is defined below. As indicated in a previous guide, the sample mean is an unbiased estimator of the population expectation under the i.i.d. assumption.

\[\bar{X} = \frac{\sum^n_i X_i}{n}\]

The expectation of the sample mean should be:

\[ \begin{align*} E(\bar{X}) =& E(\frac{1}{n} \cdot \sum^n_i(X_i)) \\ =& \frac{1}{n} \cdot \sum^n_iE(X_i)\\ =&\frac{1}{n}\cdot n \cdot \mu\\ =& \mu \end{align*} \]

and the variance of the sample mean would be:

\[ \begin{align*} Var(\bar{X}) =& Var(\frac{1}{n} \cdot \sum^n_i(X_i))\\ =& \frac{1}{n^2} \cdot \sum^n_i Var(X_i)\\ =&\frac{1}{n^2} \cdot n \cdot \sigma^2\\ =& \frac{\sigma^2}{n}\\[2ex] *\text{Note: } & Var(X_1 +X_2) = Var(X_1) + Var(X_2) + 2\,Cov(X_1, X_2)\\ &\text{As the samples are drawn independently, } Cov(X_1, X_2) =0, \\ &\text{so } Var(X_1 +X_2) = Var(X_1) + Var(X_2)\\ \end{align*} \]
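The two results above, \(E(\bar{X}) = \mu\) and \(Var(\bar{X}) = \sigma^2/n\), can be checked by simulation. This Python sketch assumes a hypothetical DGP, an exponential distribution with rate 0.5 (so \(\mu = 2\) and \(\sigma^2 = 4\)), chosen to show that the results do not depend on normality; the sample size and number of repetitions are arbitrary.

```python
import random
import statistics

# Hypothetical DGP: exponential with rate 0.5, so mu = 2 and sigma^2 = 4.
random.seed(42)
rate, n, reps = 0.5, 25, 20000
mu, sigma2 = 1 / rate, 1 / rate ** 2

# Draw `reps` i.i.d. samples of size n and record each sample mean.
sample_means = [
    statistics.fmean([random.expovariate(rate) for _ in range(n)])
    for _ in range(reps)
]

print("mean of sample means:", statistics.fmean(sample_means), "vs mu =", mu)
print("variance of sample means:", statistics.variance(sample_means),
      "vs sigma^2 / n =", sigma2 / n)
```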

More importantly, according to the Central Limit Theorem (CLT), even though we did not specify the original distribution of \(X\), as long as it has finite variance, the distribution of \(\bar{X}\) becomes approximately normal as n becomes sufficiently large (rule of thumb: n > 30):

\[\bar{X} \sim N(\mu, \frac{\sigma^2}{n})\]

Given the nature of the normal distribution, we know the probability density function of \(\bar{X}\) would be

\[f_{pdf}(\bar{X}|\mu, \sigma, n) = \frac{1}{\left(\frac{\sigma}{\sqrt{n}}\right)\sqrt{2\pi}} \cdot \exp\left[-\frac{(\bar{X}-\mu)^2}{2 \cdot \left(\frac{\sigma^2}{n}\right)}\right]\]

Working with this density directly can be tedious, so we can standardize it to the standard normal distribution (\(N(0, 1)\)).

\[ Z = (\frac{\bar{X} - \mu}{\sigma/\sqrt{n}}) = (\frac{\sqrt{n} \cdot (\bar{X} - \mu)}{\sigma})\sim N(0, 1)\\ \]

Important Notation: Similar to \(\bar{X}\) and \(\bar{x}\) , we use \(Z\) to refer to the random variable and \(z\) to refer to the observation from a fixed sample.

We can also obtain the theoretical probability that Z falls within an interval from its distribution:

\[ Pr(z_{min} < Z < z_{max}) = \Phi(z_{max}) - \Phi(z_{min})\\[2ex] \text{where } \Phi(k) = \int^k_{-\infty} f_{pdf}(Z|\mu, \sigma,n)\ dZ\\[2ex] f_{pdf}(Z|\mu, \sigma,n) = \frac{1}{\sqrt{2\pi}} \cdot exp(-\frac{1}{2}Z^2)\\[2ex] Z|\mu, \sigma,n = \frac{\sqrt{n} \cdot (\bar{X} - \mu)}{\sigma} \]
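In practice \(\Phi\) is evaluated numerically rather than by integrating the density by hand. A minimal Python sketch using the standard library's `statistics.NormalDist` for \(\Phi\); the helper names and the example values (\(\mu = 50\), \(\sigma = 4\), \(n = 16\)) are hypothetical.

```python
import math
from statistics import NormalDist

std_normal = NormalDist()  # N(0, 1)

def standardize(x_bar, mu, sigma, n):
    """Z = sqrt(n) * (x_bar - mu) / sigma."""
    return math.sqrt(n) * (x_bar - mu) / sigma

def prob_between(z_min, z_max):
    """Pr(z_min < Z < z_max) = Phi(z_max) - Phi(z_min)."""
    return std_normal.cdf(z_max) - std_normal.cdf(z_min)

print(round(prob_between(-1.96, 1.96), 4))  # 0.95

# Hypothetical: probability that the sample mean lands between 49 and 51
# when mu = 50, sigma = 4, n = 16 (this standardizes to -1 < Z < 1).
z_lo = standardize(49, 50, 4, 16)
z_hi = standardize(51, 50, 4, 16)
print(z_lo, z_hi, prob_between(z_lo, z_hi))
```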

II. The Hypothesis Testing

1. Logic of Hypothesis Testing: The Null Hypothesis

For a one-sample Z-test, we assume we know the variance parameter \(\sigma^2\) of our data generating distribution (a very unrealistic assumption, but let’s stick with it for now).

Given a sample, we also know the sample size n and the observed sample mean \(\bar{x}\) (remember, we use lower case so it doesn’t get confused with the sample mean \(\bar{X}\), which we view as a random variable in our DGP).

The aim of our hypothesis testing is then to use our knowledge of \(\sigma\), n, and \(\bar{x}\) to test hypotheses about the population mean \(\mu\). Specifically, the null hypothesis (\(H_0\)) states one of the following:

\(\mu = \mu_{H_0}\) (a two-tailed test)

\(\mu \geq \mu_{H_0}\) (a left-tailed test, since the alternative is \(\mu < \mu_{H_0}\))

\(\mu \leq \mu_{H_0}\) (a right-tailed test, since the alternative is \(\mu > \mu_{H_0}\))
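Which tail the p-value comes from is set by the alternative hypothesis. A minimal Python sketch, assuming the observed \(z\) has already been computed and using `statistics.NormalDist` for \(\Phi\); the function name `z_test_p_value` and its `tail` labels are hypothetical.

```python
from statistics import NormalDist

def z_test_p_value(z, tail):
    """p-value for an observed z under N(0, 1).

    tail="two":   H1: mu != mu_H0
    tail="right": H1: mu >  mu_H0 (large z is evidence against H0)
    tail="left":  H1: mu <  mu_H0 (small z is evidence against H0)
    """
    phi = NormalDist().cdf
    if tail == "two":
        return 2 * (1 - phi(abs(z)))
    if tail == "right":
        return 1 - phi(z)
    if tail == "left":
        return phi(z)
    raise ValueError("tail must be 'two', 'right', or 'left'")

for tail in ("two", "right", "left"):
    print(tail, round(z_test_p_value(1.8, tail), 4))
```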