Using code reviews to automatically configure static analysis tools

- Published: 11 December 2021

- Volume 27 , article number 28 , ( 2022 )


- Fiorella Zampetti¹ (ORCID: orcid.org/0000-0001-7098-8964)

- Saghan Mudbhari²

- Venera Arnaoudova²

- Massimiliano Di Penta¹

- Sebastiano Panichella³

- Giuliano Antoniol⁴


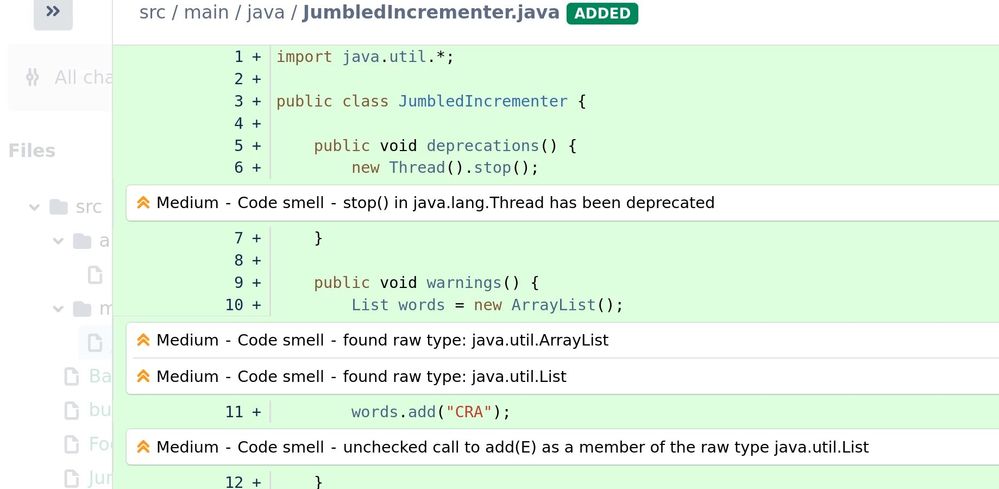

Developers often use Static Code Analysis Tools (SCATs) to automatically detect different kinds of quality flaws in their source code. Since many warnings raised by SCATs may be irrelevant for a project or organization, information from the project development history can be leveraged to automatically configure which warnings a SCAT should raise and which it should not. In this paper, we propose an automated approach (Auto-SCAT) that leverages statement-level code review comments to recommend the SCAT warnings, or warning categories, to be enabled. To this aim, we trace code review comments onto SCAT warnings by leveraging the warnings' descriptions and messages, as well as review comments made in other projects. We apply Auto-SCAT to study how CheckStyle, a well-known SCAT, can be configured in the context of six Java open source projects, all using Gerrit for handling code reviews. Our results show that Auto-SCAT is able to classify code review comments into CheckStyle checks with a precision of 61% and a recall of 52%. When code review comments not related to CheckStyle warnings are also considered, Auto-SCAT achieves a precision and a recall of ≈ 75%. Furthermore, Auto-SCAT configures CheckStyle with a precision of 72.7% at the check level and 96.3% at the category level. Finally, our findings highlight that Auto-SCAT outperforms state-of-the-art baselines based on default CheckStyle configurations or on the history of previously removed warnings.
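The abstract does not spell out how a review comment is traced onto a CheckStyle check. As a rough illustration only, the Python sketch below assumes a plain vector-space matcher: each comment is compared with short check descriptions using TF-IDF weights and cosine similarity, and every check matched by enough comments is enabled in a generated CheckStyle configuration. `MagicNumber`, `UnusedImports`, `JavadocMethod`, and `NeedBraces` are real CheckStyle checks, and the `Checker`/`TreeWalker` nesting follows CheckStyle's configuration layout, but the descriptions, the stop-word list, `threshold=0.1`, and the `min_votes` rule are hypothetical choices. This is not Auto-SCAT's actual classifier, and it ignores the cross-project review comments the approach also uses.

```python
import math
import re
from collections import Counter

# Real CheckStyle check names; the one-line descriptions are hypothetical paraphrases.
CHECK_DESCRIPTIONS = {
    "MagicNumber": "magic number numeric literal should be a named constant",
    "UnusedImports": "unused import statement should be removed",
    "JavadocMethod": "missing javadoc comment on method",
    "NeedBraces": "missing braces around if else for while block",
}
# Hypothetical stop-word list; a real pipeline would likely also stem terms.
STOP_WORDS = {"a", "an", "the", "is", "be", "should", "this", "that",
              "please", "and", "or", "on", "of", "to", "it", "here"}


def tokenize(text):
    """Lowercase alphabetic tokens with stop words removed."""
    return [t for t in re.findall(r"[a-z]+", text.lower()) if t not in STOP_WORDS]


def tfidf_vectors(docs):
    """One sparse TF-IDF vector (term -> weight) per document, with smoothed IDF."""
    tokenized = [tokenize(d) for d in docs]
    n = len(docs)
    df = Counter(term for toks in tokenized for term in set(toks))
    return [{t: c * (math.log((1 + n) / (1 + df[t])) + 1)
             for t, c in Counter(toks).items()}
            for toks in tokenized]


def cosine(u, v):
    """Cosine similarity between two sparse vectors (0.0 if either is empty)."""
    dot = sum(w * v.get(t, 0.0) for t, w in u.items())
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def classify(comment, threshold=0.1):
    """Map a review comment to the most similar check, or None if unrelated."""
    names = list(CHECK_DESCRIPTIONS)
    vecs = tfidf_vectors([comment] + [CHECK_DESCRIPTIONS[name] for name in names])
    scores = {name: cosine(vecs[0], vec) for name, vec in zip(names, vecs[1:])}
    best = max(scores, key=scores.get)
    return best if scores[best] >= threshold else None


def recommend_config(comments, min_votes=1):
    """Emit a CheckStyle config enabling checks matched by >= min_votes comments."""
    votes = Counter(c for c in map(classify, comments) if c is not None)
    enabled = sorted(name for name, k in votes.items() if k >= min_votes)
    modules = "".join(f'    <module name="{name}"/>\n' for name in enabled)
    return ('<?xml version="1.0"?>\n'
            '<!DOCTYPE module PUBLIC "-//Checkstyle//DTD Checkstyle Configuration 1.3//EN"\n'
            '    "https://checkstyle.org/dtds/configuration_1_3.dtd">\n'
            '<module name="Checker">\n'
            '  <module name="TreeWalker">\n'
            f'{modules}'
            '  </module>\n'
            '</module>\n')


REVIEWS = [
    "Please remove this unused import",
    "Replace the magic number 42 with a named constant",
    "Add braces to this if block",
    "Nice refactoring, thanks!",
]

if __name__ == "__main__":
    print(recommend_config(REVIEWS))
```

With the sample comments, the generated configuration enables `MagicNumber`, `NeedBraces`, and `UnusedImports`; the last comment shares no terms with any description and is discarded as unrelated. Raising `min_votes` enables a check only when several comments point at it, favouring precision over recall.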




Author information

Authors and affiliations.

University of Sannio, Benevento, Italy

Fiorella Zampetti & Massimiliano Di Penta

Washington State University, Pullman, WA, USA

Saghan Mudbhari & Venera Arnaoudova

Zurich University of Applied Sciences, Winterthur, Switzerland

Sebastiano Panichella

Ecole Polytechnique de Montreal, Montreal, Canada

Giuliano Antoniol


Corresponding author

Correspondence to Fiorella Zampetti.

Additional information

Communicated by: Lin Tan


About this article

Zampetti, F., Mudbhari, S., Arnaoudova, V. et al. Using code reviews to automatically configure static analysis tools. Empir Software Eng 27, 28 (2022). https://doi.org/10.1007/s10664-021-10076-4


Accepted: 28 October 2021

Published: 11 December 2021

DOI: https://doi.org/10.1007/s10664-021-10076-4


- Static analysis tools

- Code reviews

- Automated tool configuration


Static Code Analysis Tools: A Systematic Literature Review

DAAAM Proceedings

Related Papers

Artur Ataíde

Static code analysis tools can reduce the number of bugs in a program and, therefore, its cost. Many developers do not use these tools, losing a lot of time on manual code analysis (in some cases, no analysis is done at all) and a lot of money on the resources needed to perform it. In this paper, we test and study the results of three inexpensive static code analysis tools that can efficiently remove the most common vulnerabilities in software. It can be difficult to compare tools with different characteristics, but testing the tools together yields interesting results.

Proceedings of the 32nd International DAAAM Symposium 2021

Danilo Nikolic

Matthias Saft

There is a lack of information concerning the quality of static code analysis tools. To overcome this, we developed a method and a tool that support quality engineers in determining the quality of static code analysis tools. This paper shows how the method works and where the tool supports it. We have already applied the method and its tool to two static code analysis tools in different versions. On this basis, we further illustrate some results of using the method.

No error detection tool is capable of detecting, processing, and rectifying all errors. Our main aim is to increase the level of authenticity that ASA (Automatic Static Analysis) can provide. Static analysis tools are used to check for vulnerabilities in systems and programs, since the correctness or authenticity of a program is the greatest concern when developing it and verifying it prior to release. These tools use a wide variety of functions to prevent many errors and loopholes from occurring at different stages of program development. Still, ASA tools produce many false positives, which again require considerable human involvement to resolve. Here we review the different techniques and methods primarily used in Automatic Static Analysis, as well as the coding concerns that arise in the process.

The main disadvantage of static analysis tools is their high false positive rate. False positives are reported errors that either do not exist or do not lead to serious software failures. Thus, the benefits of automated static analysis tools are reduced by the need for manual intervention to distinguish true from false positive warnings. This paper presents a systematic mapping study to identify current state-of-the-art static analysis techniques and tools, as well as the main approaches that have been developed to mitigate false positives.

Faith Mueni

Web applications have become an integral part of the daily lives of billions of users. These systems are usually complex and are developed by different programmers. Programmers regularly make mistakes in the code, which can introduce critical software vulnerabilities. Despite today's knowledge about vulnerabilities, the number of reported vulnerabilities keeps growing, which is why software security has become an important field of research. Because of these vulnerabilities, tools are needed that help programmers detect them during the development stage. This paper analyses the pattern-matching and taint-analysis techniques currently used in the development of static tools for detecting vulnerabilities.
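To make the taint-analysis idea concrete, here is a minimal Python sketch of forward taint propagation over a toy, straight-line program. It is an assumption-laden illustration, not the technique analysed in the paper above: the tuple encoding of statements and the function names `read_request_param`, `escape_sql`, and `execute_sql` are hypothetical, pattern matching is not shown, and real analyzers must also handle branches, loops, and inter-procedural flows.

```python
# Each statement is (target variable or None, called function, argument variables).
# The source, sanitizer, and sink names below are hypothetical.
SOURCES = {"read_request_param"}
SANITIZERS = {"escape_sql"}
SINKS = {"execute_sql"}


def find_taint_flows(program):
    """Forward taint propagation over straight-line code (no branches or loops)."""
    tainted = set()
    findings = []
    for line_no, (target, func, args) in enumerate(program, start=1):
        # Report a sink call that receives at least one tainted argument.
        if func in SINKS and any(a in tainted for a in args):
            findings.append((line_no, func))
        if target is None:
            continue
        if func in SOURCES:
            tainted.add(target)          # untrusted input enters here
        elif func in SANITIZERS:
            tainted.discard(target)      # sanitized output is treated as clean
        elif any(a in tainted for a in args):
            tainted.add(target)          # taint flows through ordinary computation
        else:
            tainted.discard(target)      # overwriting with clean data clears taint
    return findings


PROGRAM = [
    ("name", "read_request_param", []),
    ("query", "concat", ["name"]),
    (None, "execute_sql", ["query"]),    # unsanitized flow: reported
    ("safe", "escape_sql", ["name"]),
    ("query2", "concat", ["safe"]),
    (None, "execute_sql", ["query2"]),   # sanitized flow: not reported
]

if __name__ == "__main__":
    print(find_taint_flows(PROGRAM))
```

Running it prints `[(3, 'execute_sql')]`: the query built from raw input reaches the sink, while the query built from the escaped value does not.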

15th International Symposium on Software Reliability Engineering

John Hudepohl

Khalid Alemerien

Shows that several open source and commercial coverage tools give different results on programs. These results differ more as the size of the programs grows.

Eugene H Spafford

Proceedings of the Institute for System Programming of the RAS

Damir Gimatdinov

Automated testing frameworks are widely used to assure the quality of modern software in the secure software development lifecycle. Sometimes the quality of specialized software must be assured, which calls for a specific approach. In this paper, we present an approach and the implementation details of an automated testing framework suitable for acceptance testing of static source code analysis tools. The presented framework is used for continuous testing of static source code analyzers for C, C++, and Python programs.


- DOI: 10.1109/INFOTEH51037.2021.9400688

- Corpus ID: 233262250

Analysis of the Tools for Static Code Analysis

- Danilo Nikolić, Darko Stefanović, +2 authors, S. Ristić

- Published in 20th International Symposium… 17 March 2021

- Computer Science

- 2021 20th International Symposium INFOTEH-JAHORINA (INFOTEH)


A systematic literature review of actionable alert identification techniques for automated static code analysis


Index Terms

Software and its engineering

Software creation and management

Software verification and validation

Software defect analysis

Software testing and debugging


Information

Published in

Butterworth-Heinemann

United States


Author tags

- Actionable alert identification

- Actionable alert prediction

- Automated static analysis

- Systematic literature review

- Unactionable alert mitigation

- Warning prioritization

- Research-article


Digitala Vetenskapliga Arkivet


- Static Code Analysis: A Systematic Literature Review and an Industr...


Ilyas, Bilal

Elkhalifa, Islam

Abstract [en]

Context: Static code analysis is a software verification technique that refers to the process of examining code without executing it in order to capture defects early, avoiding costly fixes later. The lack of realistic empirical evaluations in software engineering has been identified as a major issue limiting the ability of research to impact industry and, in turn, preventing feedback from industry that can improve, guide, and orient research. Studies have emphasized rigor and relevance as important criteria to assess the quality and realism of research. Rigor defines how adequately a study has been carried out and reported, while relevance defines the potential impact of the study on industry. Despite the importance of static code analysis techniques and their existence for more than three decades, empirical evaluations in this field are few and do not take rigor and relevance into consideration.

Objectives: The aim of this study is to contribute toward bridging the gap between static code analysis research and industry by improving the ability of research to impact industry and vice versa. This study has two main objectives. The first is to develop guidelines for researchers: explore the existing work in static code analysis research to identify its current status, shortcomings, rigor, and industrial relevance, as well as the reported benefits and limitations of different static code analysis techniques, and finally give recommendations that help researchers make future research more industry-oriented. The second is to develop guidelines for practitioners: investigate the adoption of different static code analysis techniques in industry and identify their benefits and limitations as perceived by industrial professionals. The findings of the SLR and the survey are then cross-analyzed to draw final conclusions, and recommendations are given to help professionals decide which techniques to adopt.

Methods: This research used a sequential exploratory strategy: the collection and analysis of qualitative data (a systematic literature review), followed by the collection and analysis of quantitative data (a survey). To achieve the first objective, a systematic literature review was conducted following Kitchenham's guidelines. To achieve the second objective, a questionnaire-based online survey was conducted among software industry professionals. It collected their responses on their usage of different static code analysis techniques and on those techniques' benefits and limitations. The quantitative data obtained were analyzed statistically to interpret them and draw results.

Results: In static code analysis research, inspections and static analysis tools received significantly more attention than the other techniques. The benefits and limitations of static code analysis techniques were extracted, and seven recurrent variables were used to report them. Existing research in the static code analysis field significantly lacks rigor and relevance, and the reasons behind this have been identified. Recommendations were developed outlining how to improve static code analysis research and make it more industry-oriented. From the industrial point of view, static analysis tools are the most widely used, followed by informal reviews, while inspections and walkthroughs are rarely used. The benefits and limitations of different static code analysis techniques, as perceived by industrial professionals, have been identified along with the factors that influence them.

Conclusions: The SLR concluded that techniques with a formal, well-defined process and process elements have received more attention in research. However, this does not necessarily mean that those techniques are better than the others. Experiments have been widely used as a research method in static code analysis research, but the outcome variables in most of the experiments are inconsistent. The use of experiments in an academic context contributed nothing to improving relevance. Meanwhile, inadequate reporting of validity threats and their mitigation strategies contributed significantly to the poor rigor of the research. The benefits and limitations of static code analysis techniques identified by the SLR could not complement the survey findings, because the rigor and relevance of most of the studies reporting them were weak. The survey concluded that the adoption of static code analysis techniques in industry is influenced more by the software life-cycle models practiced in organizations, while software product type and company size have little influence. The amount of attention a static code analysis technique has received in research does not necessarily influence its adoption in industry, which indicates a wide gap between research and industry. However, company size, product type, and software life-cycle model do influence professionals' perceptions of the benefits and limitations of different static code analysis techniques.


Static Code Analysis

Description.

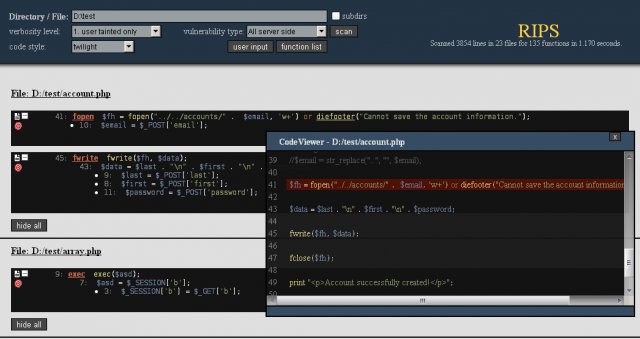

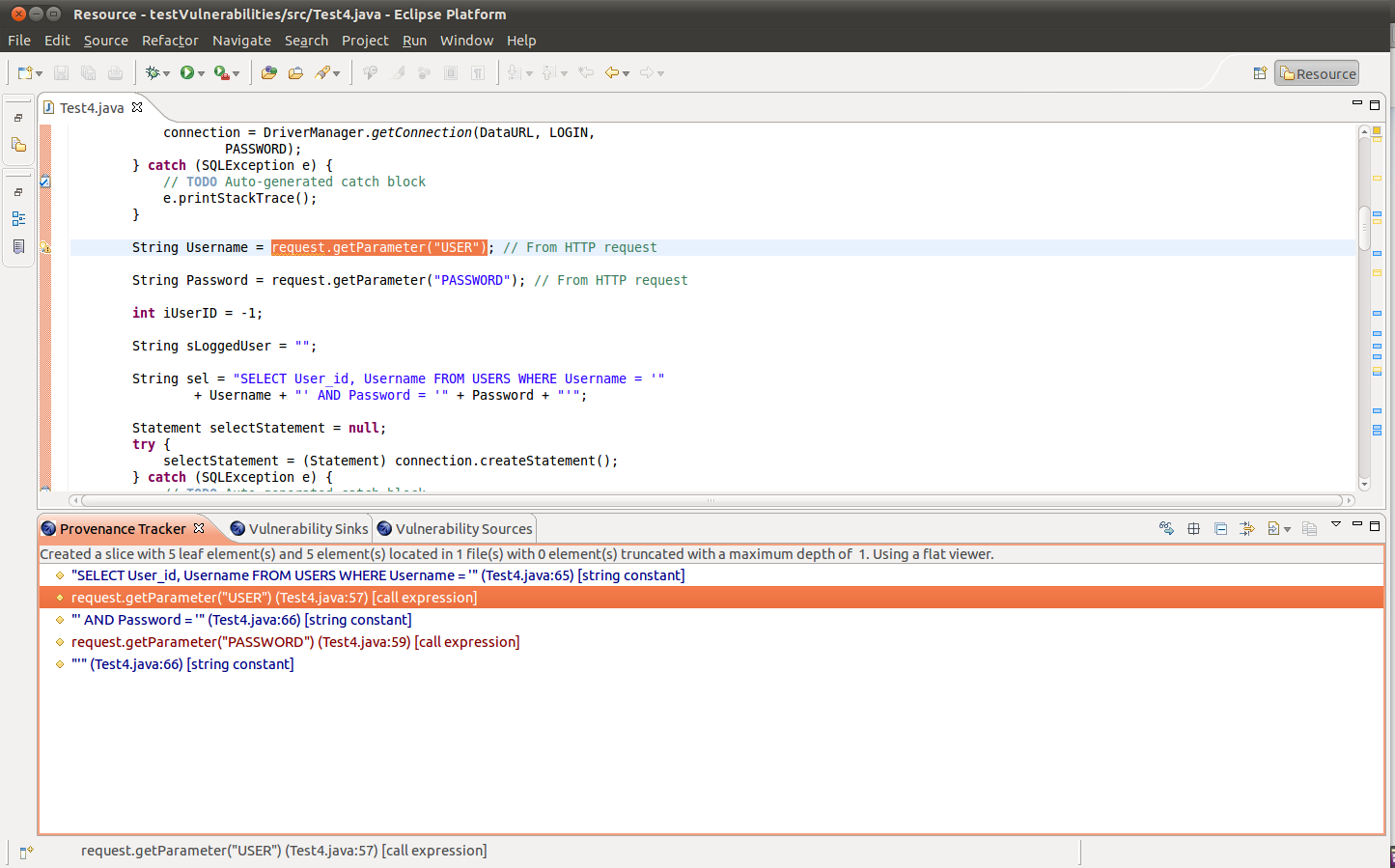

Static Code Analysis (also known as Source Code Analysis) is usually performed as part of a Code Review (also known as white-box testing) and is carried out at the Implementation phase of a Security Development Lifecycle (SDL). Static Code Analysis commonly refers to the running of Static Code Analysis tools that attempt to highlight possible vulnerabilities within ‘static’ (non-running) source code by using techniques such as Taint Analysis and Data Flow Analysis.

Ideally, such tools would automatically find security flaws with a high degree of confidence that what is found is indeed a flaw. However, this is beyond the state of the art for many types of application security flaws. Thus, such tools frequently serve as aids for an analyst to help them zero in on security relevant portions of code so they can find flaws more efficiently, rather than a tool that simply finds flaws automatically.

Some tools are starting to move into the Integrated Development Environment (IDE). For the types of problems that can be detected during the software development phase itself, the IDE is a powerful place to employ such tools. It gives developers immediate feedback on issues they might be introducing while they write the code. This immediate feedback is far more useful than finding vulnerabilities much later in the development cycle.

The UK Defense Standard 00-55 requires that Static Code Analysis be used on all ‘safety related software in defense equipment’. [0]

Techniques

There are various techniques for analyzing static source code for potential vulnerabilities, and they may be combined into one solution. These techniques are often derived from compiler technologies.

Data Flow Analysis

Data flow analysis is used to collect run-time (dynamic) information about data in software while it is in a static state ( Wögerer, 2005 ).

There are three common terms used in data flow analysis: basic block (the code), Control Flow Analysis (the flow of data), and Control Flow Path (the path the data takes):

Basic block: A sequence of consecutive instructions where control enters at the beginning of a block, control leaves at the end of a block and the block cannot halt or branch out except at its end ( Wögerer, 2005 ).
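The definition above translates into the textbook "leader" algorithm. The first instruction, every jump target, and every instruction that follows a jump each start a new basic block. The Python sketch below assumes a hypothetical `Instr` record with an optional `jump_to` label. Real analyzers work on their own intermediate representations, so treat this only as an illustration of the rule.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Instr:
    """A toy instruction (hypothetical format for this sketch only)."""
    label: str                     # unique name of this instruction
    text: str                      # human-readable form, for printing only
    jump_to: Optional[str] = None  # label of a branch target, if this instruction jumps


def basic_blocks(code: List[Instr]) -> List[List[Instr]]:
    """Partition instructions into basic blocks using the classic leader rule."""
    if not code:
        return []
    targets = {i.jump_to for i in code if i.jump_to is not None}
    leaders = set()
    for idx, instr in enumerate(code):
        if idx == 0 or instr.label in targets:
            leaders.add(idx)          # first instruction, or something jumps here
        if instr.jump_to is not None and idx + 1 < len(code):
            leaders.add(idx + 1)      # control may leave here, so a new block follows
    blocks: List[List[Instr]] = []
    current: List[Instr] = []
    for idx, instr in enumerate(code):
        if idx in leaders and current:
            blocks.append(current)    # a leader closes the block built so far
            current = []
        current.append(instr)
    blocks.append(current)
    return blocks


# An if/else lowered to jumps; "if a != b goto L5" is a conditional branch.
code = [
    Instr("L0", "a = 0"),
    Instr("L1", "b = 1"),
    Instr("L2", "if a != b goto L5", jump_to="L5"),
    Instr("L3", "echo 'same'"),
    Instr("L4", "goto L6", jump_to="L6"),
    Instr("L5", "echo 'different'"),
    Instr("L6", "exit"),
]
for block in basic_blocks(code):
    print([i.label for i in block])
```

Each block ends either just before a jump target or right after a jump. This is why the `goto L6` at `L4` closes the `then` branch into its own block.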

Example PHP basic block:

Control Flow Graph (CFG)

An abstract graph representation of software that uses nodes to represent basic blocks. A node in the graph represents a block, and directed edges represent jumps (paths) from one block to another. If a node only has an exit edge, it is known as an ‘entry’ block; if a node only has an entry edge, it is known as an ‘exit’ block ( Wögerer, 2005 ).

Example Control Flow Graph; ‘node 1’ represents the entry block and ‘node 6’ represents the exit block.
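Given a CFG stored as an adjacency map, the entry/exit definitions above can be checked mechanically. A depth-first search can then enumerate the control flow paths through the graph. The graph below is a hypothetical six-node example that uses nodes 1 to 6 to match the caption's labels; it is not a reconstruction of the original figure. `entry_and_exit_blocks` and `control_flow_paths` are illustrative names.

```python
from typing import Dict, List, Optional, Set, Tuple

# Hypothetical CFG: node 2 branches to 3 or 4, and both branches rejoin at node 5.
cfg: Dict[int, List[int]] = {1: [2], 2: [3, 4], 3: [5], 4: [5], 5: [6], 6: []}


def entry_and_exit_blocks(edges: Dict[int, List[int]]) -> Tuple[Set[int], Set[int]]:
    """Entry = outgoing but no incoming edges; exit = incoming but no outgoing edges."""
    has_in = {succ for succs in edges.values() for succ in succs}
    has_out = {node for node, succs in edges.items() if succs}
    nodes = set(edges) | has_in
    entries = {n for n in nodes if n in has_out and n not in has_in}
    exits = {n for n in nodes if n in has_in and n not in has_out}
    return entries, exits


def control_flow_paths(edges: Dict[int, List[int]], start: int, goal: int,
                       prefix: Optional[List[int]] = None) -> List[List[int]]:
    """Enumerate loop-free paths from start to goal with a depth-first search."""
    path = (prefix or []) + [start]
    if start == goal:
        return [path]
    found: List[List[int]] = []
    for succ in edges.get(start, []):
        if succ not in path:  # skip back edges so loops cannot recurse forever
            found.extend(control_flow_paths(edges, succ, goal, path))
    return found


entries, exits = entry_and_exit_blocks(cfg)
print(entries, exits)                 # {1} {6}
print(control_flow_paths(cfg, 1, 6))  # [[1, 2, 3, 5, 6], [1, 2, 4, 5, 6]]
```

Only loop-free paths are listed. A graph with loops has infinitely many paths, which is one reason data flow analyses iterate over the graph instead of enumerating paths.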

Taint Analysis

Taint Analysis attempts to identify variables that have been ‘tainted’ with user controllable input and traces them to possible vulnerable functions also known as a ‘sink’. If the tainted variable gets passed to a sink without first being sanitized it is flagged as a vulnerability.
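The source-to-sink idea can be sketched as a forward pass that keeps a set of tainted variables. This is a minimal sketch under simplifying assumptions:

- straight-line code only (no branches or calls);
- a toy statement format invented here;
- a hypothetical sink named `db_query`.

Real taint analyzers propagate taint over the whole control flow graph and model many more kinds of statements.

```python
from typing import List, Set, Tuple

# Toy statement format invented for this sketch (not any real analyzer's IR):
#   ("input", var)               var receives user-controllable data (a taint source)
#   ("assign", var, [operands])  var is computed from the listed operands
#   ("sanitize", var, operand)   var is a sanitized copy of operand
#   ("sink", func, operand)      operand is passed to a sensitive function (a sink)


def find_taint_flows(program: List[tuple]) -> List[Tuple[int, str, str]]:
    """Return (statement number, sink, variable) for each tainted value reaching a sink."""
    tainted: Set[str] = set()
    findings: List[Tuple[int, str, str]] = []
    for number, stmt in enumerate(program, start=1):
        kind = stmt[0]
        if kind == "input":
            tainted.add(stmt[1])
        elif kind == "assign":
            _, var, operands = stmt
            if any(op in tainted for op in operands):
                tainted.add(var)      # taint propagates through the assignment
            else:
                tainted.discard(var)  # overwritten with clean data
        elif kind == "sanitize":
            tainted.discard(stmt[1])  # sanitizer output is trusted
        elif kind == "sink":
            _, func, operand = stmt
            if operand in tainted:
                findings.append((number, func, operand))
    return findings


program = [
    ("input", "name"),                         # 1: name comes from the request
    ("assign", "query", ["prefix", "name"]),   # 2: query = prefix + name
    ("sink", "db_query", "query"),             # 3: tainted data reaches the sink
    ("sanitize", "safe", "name"),              # 4: safe = escape(name)
    ("assign", "query2", ["prefix", "safe"]),  # 5: built from sanitized data only
    ("sink", "db_query", "query2"),            # 6: not flagged
]
print(find_taint_flows(program))  # [(3, 'db_query', 'query')]
```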

Some programming languages such as Perl and Ruby have Taint Checking built into them and enabled in certain situations such as accepting data via CGI.

Lexical Analysis

Lexical Analysis converts source code syntax into ‘tokens’ of information in an attempt to abstract the source code and make it easier to manipulate (Sotirov, 2005).

Pre-tokenised PHP source code:

```php
<?php $name = "Ryan"; ?>
```

Post tokenised PHP source code:
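To make the idea concrete, here is a minimal lexer sketch in Python for the one-line PHP example above. It uses one hand-picked regular expression per token kind. The token names are borrowed from PHP's tokenizer for readability, but this is a toy covering a tiny subset. It is not PHP's tokenizer, and its output should not be read as what PHP itself produces.

```python
import re
from typing import List, Tuple

# Token names borrowed from PHP's tokenizer for readability only (toy subset).
TOKEN_SPEC = [
    ("T_OPEN_TAG", r"<\?php"),
    ("T_CLOSE_TAG", r"\?>"),
    ("T_VARIABLE", r"\$[A-Za-z_][A-Za-z0-9_]*"),
    ("T_CONSTANT_ENCAPSED_STRING", r'"(?:[^"\\]|\\.)*"'),
    ("T_WHITESPACE", r"\s+"),
    ("OP", r"[=;]"),
]
MASTER = re.compile("|".join(f"(?P<{name}>{pattern})" for name, pattern in TOKEN_SPEC))


def tokenize(source: str) -> List[Tuple[str, str]]:
    """Convert source text into (token kind, lexeme) pairs, left to right."""
    tokens: List[Tuple[str, str]] = []
    pos = 0
    while pos < len(source):
        match = MASTER.match(source, pos)
        if match is None:
            raise SyntaxError(f"unexpected character {source[pos]!r} at offset {pos}")
        tokens.append((match.lastgroup, match.group()))  # lastgroup = which kind matched
        pos = match.end()
    return tokens


for kind, text in tokenize('<?php $name = "Ryan"; ?>'):
    if kind != "T_WHITESPACE":
        print(kind, text)
```

Once the code is a token stream, later analyses can match on kinds such as `T_VARIABLE` instead of on raw characters. That is the abstraction the paragraph above describes.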

Strengths and Weaknesses

Strengths

- Scales well: can be run on lots of software, and can be run repeatedly (as in nightly builds).

- Great for things that such tools can find automatically with high confidence, such as buffer overflows and SQL injection flaws.

Weaknesses

- Many types of security vulnerabilities are very difficult to find automatically, such as authentication problems, access control issues, and insecure use of cryptography. The current state of the art only allows such tools to find a relatively small percentage of application security flaws automatically. Tools of this type are getting better, however.

- High numbers of false positives.

- Frequently can’t find configuration issues, since they are not represented in the code.

- Difficult to ‘prove’ that an identified security issue is an actual vulnerability.

- Many of these tools have difficulty analyzing code that can’t be compiled. Analysts frequently can’t compile code because they don’t have the right libraries, all the compilation instructions, all the code, etc.

Limitations

False Positives

A static code analysis tool will often produce false positive results where the tool reports a possible vulnerability that in fact is not. This often occurs because the tool cannot be sure of the integrity and security of data as it flows through the application from input to output.

False positive results might be reported when analysing an application that interacts with closed source components or external systems because without the source code it is impossible to trace the flow of data in the external system and hence ensure the integrity and security of the data.
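One common way such uncertainty turns into a false positive is a path-insensitive analysis that merges facts where branches rejoin. The sketch below hard-codes a hypothetical two-branch program, shown in the comments. It contrasts that merge with checking each branch combination separately. It illustrates the mechanism only and does not describe how any specific tool works.

```python
# Hypothetical toy program with a boolean input `flag`:
#
#   if flag:      x = user_input()   # x is tainted on this branch
#   else:         x = "constant"     # x is clean on this branch
#   if not flag:  sink(x)            # only runs on the clean branch
#
# Both functions below hard-code the facts of that program.


def path_insensitive_warns() -> bool:
    """Merge facts at the join point, as a path-insensitive analysis does."""
    tainted_then, tainted_else = True, False
    x_maybe_tainted = tainted_then or tainted_else  # the merge forgets which branch ran
    sink_maybe_reached = True                       # some path does reach sink(x)
    return x_maybe_tainted and sink_maybe_reached


def path_sensitive_warns() -> bool:
    """Check each value of `flag` separately, keeping the two branches correlated."""
    for flag in (True, False):
        x_tainted = flag         # x = user_input() exactly when flag is True
        reaches_sink = not flag  # sink(x) runs exactly when flag is False
        if x_tainted and reaches_sink:
            return True
    return False


print(path_insensitive_warns())  # True: a warning is reported
print(path_sensitive_warns())    # False: no feasible path exists, so the warning was a false positive
```

Path-sensitive checking removes this particular false positive. However, the number of paths grows quickly with each branch, which is why many tools accept some imprecision in exchange for scaling.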

False Negatives

Static code analysis tools can also produce false negatives, where vulnerabilities exist but the tool does not report them. This might occur if a new vulnerability is discovered in an external component, or if the analysis tool has no knowledge of the runtime environment and whether it is configured securely.

Important Selection Criteria

- Requirement: Must support your language, but not usually a key factor once it does.

- Types of vulnerabilities it can detect (the OWASP Top Ten? more?)

- Does it require a fully buildable set of source?

- Can it run against binaries instead of source?

- Can it be integrated into the developer’s IDE?

- License cost for the tool. (Some are sold per user, per org, per app, per line of code analyzed. Consulting licenses are frequently different than end user licenses.)

- Does it support Object-oriented programming (OOP)?

RIPS PHP Static Code Analysis Tool

OWASP LAPSE+ Static Code Analysis Tool

- OWASP - Source Code Analysis Tools

- NIST - Source Code Security Analyzers

- Wikipedia - List of tools for static code analysis

Further Reading


Why Static Code Analysis Should Also Be Used In Your Code Review Process


Ilona_Mibex Software

About this author

Product Marketing Manager - Mibex Software

Mibex Software


- © 2024 Atlassian

8 Best Static Code Analysis Tools For 2024

Tired of trying to find a proper tool to run static code analysis on your code, or unsatisfied with the tools you use? Then keep reading, because we are about to reveal the top 8 static code analysis tools of 2024 that will revolutionize your coding experience.

But first, let’s quickly go through the basics of static code analysis.

What is Static Code Analysis?

Static code analysis is an approach that examines your code without executing it to identify potential errors, violations of coding standards, and security vulnerabilities. Generally, static code analysis can find:

- Errors in the code (syntax, logic, etc.)

- Security vulnerabilities

- Issues with code quality

- Violations of coding standards and best practices

- Performance issues

To perform static code analysis, there are dedicated tools referred to as Static Code Analysis tools (or Static Source Code Analysis tools). These tools are more specialized than general-purpose code analysis tools.

Unlike dynamic code analysis tools, these tools help you create a cleaner, enhanced, secure codebase that meets your quality goals and metrics with minimum bugs and errors.

Without going further, let’s explore some of the best static code analysis tools for 2024.

1. CodiumAI

CodiumAI is one of the best tools you can find to run your static code analysis. It leverages AI to analyze your code before executing it, identify potential bugs and security risks, and suggest improvements.

Some of its impressive features include:

- Code Analysis: Analyzes your code thoroughly and writes a complete analysis report as text.

- Code Enhancement: Gives you enhanced and cleaner code.

- Code Improve: Identifies bugs and security risks and suggests improvements and best practices to resolve them.

- Code Explain: Gives you a detailed overview of the code.

- Generate Test Suite: Generates test cases for different scenarios, helping you improve code performance and behavior.

CodiumAI can be used as an IDE plugin (Codiumate), a Git plugin (PR-Agent), or a CLI tool (Cover-Agent), allowing seamless integration.

It also supports many programming languages, such as Python, JavaScript, TypeScript, Java, C++, Go, and PHP.

When it comes to pricing, CodiumAI is free for individual developers, while paid plans with additional features are available for teams and enterprises.

2. PVS Studio

PVS Studio is a static code analyzer that helps developers easily detect security vulnerabilities and bugs. It supports code written in C, C++, C#, and Java.

The main features it provides as a static code analysis tool include:

- Bug detection: Identifies bugs/errors and provides warnings.

- Code quality suggestions: Analyzes the code and suggests improvements.

- Vulnerability scanning: Scans for potential security risks and vulnerabilities.

- Detailed reporting: Generates comprehensive reports on the findings and suggestions.

PVS Studio provides many integration options, including IDEs, build systems, and CI platforms. You can install it on Windows, macOS, or Linux.

Pricing is available on request, with flexible options for individuals, teams, enterprises, resellers, etc. A free trial is available, and the tool is free for students, teachers, and open-source projects.

3. ESLint

ESLint is an open-source project you can integrate and use for static code analysis. It is built to analyze your JavaScript code, find and fix issues, and keep your code at its best.

It allows you to:

- Find issues: Analyze your code and identify potential bugs.

- Fix problems automatically: Automatically fix most of the identified issues in your code.

- Configuration options: Customize the tool as needed by creating your own rules and using custom parsers.

You can use ESLint through a supported IDE such as VS Code, Eclipse, or IntelliJ IDEA, or integrate it with your CI pipelines. You can also install it locally using a package manager such as npm or yarn, or run it with npx.

Since ESLint is an open-source tool, it is free for anyone, and there are no paid plans.

4. SonarQube

SonarQube is a widely used code analysis tool that helps you write clean, reliable, and secure code. Below are some of its key features that allow you to conduct a proper static code analysis.

- Defect issues: Finds bugs and issues that may cause unexpected behaviors or problems.

- Vast language coverage: SonarQube supports 30+ programming languages, frameworks, and IaC (Infrastructure as Code) platforms.

- SAST (static application security testing) engine: Uncovers deeply concealed security vulnerabilities using the SAST engine.

- Quality gates: Fails code pipelines when defined code quality metrics are not met.

- Super fast analysis: You can get actionable clean code metrics within minutes.

- Extensive reporting: Gives you detailed dashboards and reports on numerous code quality metrics.
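The quality-gate idea comes down to comparing measured metrics against thresholds and failing the build when any comparison fails. This is a minimal sketch that assumes hypothetical metric names (`coverage`, `new_bugs`, `duplicated_lines_pct`) and a hypothetical `Condition` type. It does not use SonarQube's API or its metric keys.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Condition:
    """One gate condition; metric names and thresholds here are hypothetical."""
    metric: str
    threshold: float
    higher_is_better: bool


def evaluate_gate(metrics: Dict[str, float], conditions: List[Condition]) -> List[str]:
    """Return failure messages; an empty list means the gate passes."""
    failures: List[str] = []
    for cond in conditions:
        value = metrics.get(cond.metric)
        if value is None:
            failures.append(f"{cond.metric}: missing")  # treat unmeasured metrics as failures
            continue
        if cond.higher_is_better:
            ok = value >= cond.threshold
        else:
            ok = value <= cond.threshold
        if not ok:
            failures.append(f"{cond.metric}: {value} vs threshold {cond.threshold}")
    return failures


gate = [
    Condition("coverage", 80.0, higher_is_better=True),
    Condition("new_bugs", 0, higher_is_better=False),
    Condition("duplicated_lines_pct", 3.0, higher_is_better=False),
]
metrics = {"coverage": 72.5, "new_bugs": 0, "duplicated_lines_pct": 1.2}
failures = evaluate_gate(metrics, gate)
exit_code = 1 if failures else 0  # a CI step would exit with this code to fail the pipeline
print(failures, exit_code)  # ['coverage: 72.5 vs threshold 80.0'] 1
```

Treating a missing metric as a failure is a deliberately strict design choice in this sketch. A real gate might instead skip or warn.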

SonarQube can be integrated with various DevOps platforms, such as Azure DevOps, GitLab, GitHub, and Bitbucket, and with CI/CD tools such as Jenkins.

Regarding the pricing options, SonarQube offers a free Community Edition and paid plans with advanced features for developers, enterprises, and data centers.

5. Fortify Static Code Analyzer

Fortify Static Code Analyzer is one of the best SAST (static application security testing) tools available. It can deeply scan your code, identify potential security vulnerabilities, and suggest mitigation strategies.

Its main features include:

- Comprehensive coverage: Static Code Analyzer can identify 1600+ vulnerability types across 35+ programming languages.

- Comprehensive vulnerability scanning: Deeply scans your code using SAST and DAST methods to identify security vulnerabilities and eliminate them in their early stages.

- Scalability: It scans complex, large codebases with thousands of lines of code. It also reduces build times by improving performance, and it reduces false positives by up to 95%.

Fortify Static Code Analyzer can be integrated with Jenkins, Jira, Azure DevOps, Eclipse, and Microsoft Visual Studio.

Pricing is available on request, and there are no free trials.

6. Coverity

Coverity by Synopsys is one of the code scanning tools widely used for static code analysis. It can help you easily identify and fix various issues, improving performance and reducing build times.

Below are the key features it provides.

- Identifying bugs and errors: Analyzes your code thoroughly and finds possible errors and bugs that may cause unexpected behavior.

- Root cause explanation: After finding issues, Coverity provides a detailed explanation of each issue's root cause, allowing you to fix them quickly.

- Vulnerability detection: Fully scans your code, identifies security risks, and provides mitigation guidelines.

- Language coverage: Coverity scans projects built with JavaScript, Java, C, C++, C#, Ruby, and Python.

Coverity can be integrated with GitLab, GitHub, Jenkins, and Travis CI, and it provides plugins for multiple IDEs, including VS Code.

You can use Coverity for free by registering for your open-source project.

7. Codacy

Codacy is a popular code analysis and quality tool that helps you deliver better software. It continuously reviews your code and monitors its quality from the beginning.

It includes features such as:

- Healthy code: Identifies bugs in the code and provides suggestions for improving code quality, performance, and behavior.

- Complete visibility: Dedicated dashboards let you check the health and quality of your repositories.

- Risk prioritization: Security and risk management dashboards help you prioritize and fix identified security risks immediately.

- Securing your code: Protect your code with SAST, hard-coded secrets detection, IaC configuration checks, dynamic application security testing, etc.

Codacy supports a broad range of tools, languages, and frameworks, including GitHub, GitLab, Bitbucket, Slack, Jira, Kubernetes, Ruby, JavaScript, TypeScript, C++, etc.

Codacy is an open-source tool that can be used for free, and paid plans with more benefits start from $15/month.

8. ReSharper

ReSharper is an extension for the Visual Studio IDE aimed at .NET developers. It has a rich set of features, including on-the-fly error detection, quick error correction, and intelligent coding assistance.

As a static code analyzer, it allows users to:

- Support multiple languages: Analyze the quality of code written in C#, VB.NET, XAML, ASP.NET, HTML, and XML.

- Fix issues quickly: Apply the suggested quick-fixes for identified code issues, eliminating code smells and errors.

- Verify compliance: Make your code compliant with coding standards and best practices by removing unused code and making the code cleaner.

Beyond these, it includes automatic code generation and code editing helpers.

When it comes to pricing, ReSharper is free for open-source projects, students, and teachers. Paid plans for organizations, individuals, and other categories start from $13.90/month.

As discussed, static code analysis helps you identify and fix bugs, security vulnerabilities, and coding standard violations early, without executing the codebase. This gives you cleaner, well-organized code with fewer errors.

This blog has explored the best static source code analysis tools for 2024 so you can find one that suits your needs. The insights provided should help you select the right tool to keep your projects secure, clean, and well-organized, and in line with coding standards and best practices.


Identification of strategies over tools for static code analysis

- August 2021

- IOP Conference Series Materials Science and Engineering 1163(1):012012


- University of Novi Sad

Abstract and Figures

![literature review static code analysis The code review cycle [5].](https://www.researchgate.net/profile/Danilo-Nikolic/publication/353960757/figure/fig1/AS:1058005390987264@1629259550435/The-code-review-cycle-5_Q320.jpg)


On Jan 1, 2020, Darko Stefanovic and others published Static Code Analysis Tools: A Systematic Literature Review.

Developers often use Static Code Analysis Tools (SCAT) to automatically detect different kinds of quality flaws in their source code. ... Section 2 discusses the related literature concerning code reviews and Static Code Analysis Tools. ... (2009) Using checklists to review static analysis warnings. In: Proceedings of the International Workshop ...

The most significant value of a static code analysis tool is the ability to identify common programming defects. The standard code review cycle includes four main phases [11]: 1. establish goals, 2. run the static analysis tool, 3. review code (using the output of the tool), 4. make fixes.
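A minimal sketch of the first three phases of that cycle is shown below. It restricts the tool's output to the checks chosen as review goals before a reviewer sees it. The analyzer is a stub: `run_static_analysis`, `Finding`, and `review_queue` are hypothetical names. The canned findings only borrow CheckStyle-style check names (`MagicNumber`, `UnusedImports`, `LineLength`) for flavor and are not real tool output.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass(frozen=True)
class Finding:
    file: str
    line: int
    check: str    # name of the check that produced the warning
    message: str


def run_static_analysis() -> List[Finding]:
    """Phase 2 stand-in: a hypothetical analyzer returning canned, invented findings."""
    return [
        Finding("Parser.java", 42, "MagicNumber", "'86400' is a magic number."),
        Finding("Parser.java", 10, "UnusedImports", "Unused import - java.util.List."),
        Finding("Cache.java", 7, "LineLength", "Line is longer than 100 characters."),
    ]


def review_queue(findings: List[Finding], enabled_checks: Set[str]) -> Dict[str, List[Finding]]:
    """Keep findings for the enabled checks, grouped by file and sorted by line."""
    grouped: Dict[str, List[Finding]] = defaultdict(list)
    for finding in findings:
        if finding.check in enabled_checks:
            grouped[finding.file].append(finding)
    return {path: sorted(items, key=lambda f: f.line) for path, items in grouped.items()}


# Phase 1: the review goals, expressed as the checks the team cares about (hypothetical).
goals = {"MagicNumber", "UnusedImports"}
queue = review_queue(run_static_analysis(), goals)
for path, items in queue.items():
    for finding in items:
        # Phase 3: the reviewer reads these; phase 4 (fixing) is left to the developer.
        print(f"{path}:{finding.line} [{finding.check}] {finding.message}")
```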

In this paper, three tools to support static code analysis were analyzed and evaluated using the DESMET methodology. The tools were selected by conducting a systematic literature review in the field of static code analysis. Date of Conference: 17-19 March 2021. Date Added to IEEE Xplore: 14 April 2021.

Abstract. Static code analysis tools are being increasingly used to improve code quality. The source code's quality is a key factor in any software product and requires constant inspection and supervision. Static code analysis is a valid way to infer the behavior of a program without executing it. Many tools allow static analysis in different ...

Three tools to support static code analysis were analyzed and evaluated using the DESMET methodology; the tools were selected by conducting a systematic literature review in the field of static code analysis. Static code analysis tools are being increasingly used to improve code quality. Such tools can statically analyze the code to find bugs, security vulnerabilities, security ...

The tools used for static code analysis are programs that explain the behavior of other programs [1]. The standard code review cycle includes four main phases [6]: defining the goal, launching a ...

Context: Automated static analysis (ASA) identifies potential source code anomalies early in the software development lifecycle that could lead to field failures. ... A systematic literature review of actionable alert identification techniques for automated static code analysis. ... C. Pham, Performing high efficiency source code static analysis ...


Static code analysis tools are being increasingly used to improve code quality. Such tools can statically analyze the code to find bugs, security vulnerabilitie ... The tools were selected by conducting a systematic literature review in the field of static code analysis. Published in: 2021 20th International Symposium INFOTEH-JAHORINA (INFOTEH)

Static code analysis is a valid way to infer the behavior of a program without executing it. Many tools allow static analysis in different frameworks, for different programming languages, and for detecting different defects in the source code. Still, a small number of tools provide support for domain-specific languages.

The tools used for static code analysis are programs that explain the behaviour of other programs [8]. Static code analysis is significantly faster than conventional testing and can detect any defect visible in the program's source code. If the tools for static code analysis are compared with the manual code review by software developers, it can

Additionally, static analysis tools and linters provide limited syntactic support to check comment quality. Therefore, ... In this work, we present the results of a systematic literature review on source code comment quality evaluation practices in the decade 2011— 2020. We analyze 2353 publications and study 47 of them to understand of ...

A Systematic Literature Review of Actionable Alert Identification Techniques for Automated Static Code Analysis. Sarah Heckman (corresponding author) and Laurie Williams, North Carolina State University, Raleigh, NC.

1. Introduction. Static analysis is "the process of evaluating a system or component based on its form, structure, content, or documentation" [26]. Automated static analysis (ASA), like Lint [29], can identify common coding problems early in the development process via a tool that automates the inspection of source code [60]. ASA reports potential source code anomalies, which we call ...

The objective of this systematic literature review (SLR) is to summarize state of the art and prominent areas for future research. ... Most of the research trends and potential research areas are identified in static source code analysis, program comprehension, refactoring, reverse engineering, detection, and traceability of cross-language ...

Keywords: static code analysis, systematic literature review, empirical evaluation, industrial survey. National category: Software Engineering. Identifiers: URN urn:nbn:se:bth-12871; OAI oai:DiVA.org:bth-12871; DiVA id diva2:947354. Subject / course: PA2511 Master's Thesis (120 credits) in Software Engineering.


Code Review Assistant helps to integrate the results of static code analyses and compilers into the code review process - allowing for much better usage of the code analysis results. If you look at the example below, you'll see some bugs detected by PMD as well as some Java compiler warnings.


A systematic literature review in the field of static code analysis and its tools was conducted. A paper describing the process of the systematic literature review was published in [4].
